Best Testing Tools for Microservices Architecture (2026)

The best testing tools for microservices are specialized platforms used to validate APIs, service contracts, integrations, and performance across distributed systems. They help engineering teams test services independently, detect breaking changes early, and ensure reliable communication between independently deployed microservices.

Microservices architectures solve real problems — team autonomy, independent scaling, technology flexibility, and resilience through isolation. But they introduce a new category of testing challenge that monolithic application testing strategies cannot address: how do you verify the behavior of a system where services communicate over networks, evolve independently, and fail in ways that are invisible to any single service's test suite? This guide evaluates the six best testing tools for microservices architectures in 2026, with practical guidance on how they work together as a cohesive testing strategy.

Table of Contents

- Introduction: Why Microservices Testing Requires a Different Approach

- What to Look for in Microservices Testing Tools

- The Unique Testing Challenges of Microservices Architecture

- Best Testing Tools for Microservices in 2026

- Comparison Table

- Real-World Implementation: A Microservices Testing Strategy with Shift-Left API

- How to Build a Complete Microservices Testing Stack

- Best Practices for Microservices Test Automation

- Microservices Testing Checklist

- FAQ

- Conclusion

Introduction: Why Microservices Testing Requires a Different Approach

Testing a monolith is relatively straightforward, even if not simple: you deploy one application, run your test suite against it, and you have a reasonable picture of whether it works. Testing a microservices system is a fundamentally different problem. You are not testing one application; you are testing dozens of independently deployed services, each with its own API contracts, dependencies, and failure modes. The interactions between those services create an emergent complexity that no single service's test suite can fully capture.

This is why microservices teams that try to test their systems using the same tools and strategies they used for monolithic applications find themselves with two unappealing options: either run full integration tests against all services deployed together (which is slow, brittle, and requires complex environment management), or test services in isolation and hope the contracts between them do not break (which they routinely do, in production, at the worst possible times).

The right approach for microservices testing in 2026 combines service-level API testing, contract testing, service virtualization (mocking), integration testing with real dependencies, performance testing, and distributed observability into a cohesive strategy. This approach embodies the shift-left testing philosophy applied to distributed architectures. No single tool addresses all of these needs, but the right combination of purpose-built tools covers the complete microservices testing landscape.

This guide identifies the six categories of tools your microservices testing strategy needs and recommends the best tool in each category, showing how they fit together into a complete testing approach.

What to Look for in Microservices Testing Tools

Microservices testing tools must satisfy requirements that monolithic testing tools often do not consider:

Service Isolation Capability. The ability to test a service in isolation — without requiring all of its upstream and downstream dependencies to be running — is essential for fast, reliable CI/CD testing in microservices environments. Look for tools with built-in mocking, service virtualization, or stub server capabilities.

Contract Awareness. Microservices communicate through API contracts. Testing tools that understand and validate these contracts — rather than just testing request/response pairs in isolation — provide a fundamentally more reliable quality signal for distributed systems.

OpenAPI/Schema Integration. Most microservices are documented with OpenAPI specifications. Testing tools that can import these specifications and generate tests, mocks, or contract definitions from them dramatically reduce the manual effort required to achieve meaningful coverage.

CI/CD Pipeline Integration at Service Level. In microservices environments, each service has its own CI/CD pipeline. Testing tools must integrate with per-service pipelines, not just with a single monolithic pipeline. They must also handle the complexity of testing against different service versions as they evolve independently.

Environment Complexity Handling. Microservices testing environments are inherently more complex than monolith environments — multiple services, multiple databases, multiple message queues. The best microservices testing tools minimize environment complexity through mocking, containerization, or cloud-hosted test execution.

Cross-Service Observability. When a test fails in a microservices environment, the failure may originate in a different service than the one being tested. Tools that provide distributed tracing and cross-service request visibility make debugging in microservices contexts significantly faster.

Team Autonomy Support. Microservices are built around team autonomy — each service team owns their service. Testing tools should support this autonomy: each team should be able to manage their own test suites, their own contracts, and their own quality gates without depending on a centralized QA team to operate testing infrastructure.

The Unique Testing Challenges of Microservices Architecture

Before diving into specific tools, it is worth articulating the concrete challenges that make microservices testing uniquely difficult — because the tool selection directly addresses each of these challenges:

The integration environment problem. Spinning up all services for integration testing requires orchestrating dozens of containers, databases, and message queues. This is slow, resource-intensive, and often produces an environment so different from production that tests are unreliable. Testing individual services in isolation is faster and more reliable, but requires realistic mocks for all dependencies.

The contract drift problem. Services evolve independently. Service A's team updates their API response format for their own reasons — perhaps adding a new required field or changing a field name. Service B's team, which consumes Service A's API, does not find out about this change until their service starts returning unexpected errors in production. Contract testing is the solution to this problem, but it requires dedicated tooling and cross-team coordination.

The test data problem. Microservices tests often need consistent test data across multiple services — creating a test user in Service A that can then be used in Service B's tests. Managing this state across independent service deployments is significantly more complex than test data management in a monolith.

The distributed failure problem. When a test fails against a microservices system, the failure might originate anywhere in the call chain. A failing API test against the order service might actually be caused by a bug in the inventory service, the payment service, or the notification service. Without distributed tracing, debugging these failures requires extensive manual investigation.

The deployment coordination problem. Testing a microservices system requires deciding which version of each service to test against. Should a PR for Service A be tested against the current production version of Service B, the latest main branch version, or a specific staging version? These coordination decisions have significant implications for test reliability and coverage.

Best Testing Tools for Microservices in 2026

Shift-Left API — API Testing and Mocking for Microservices

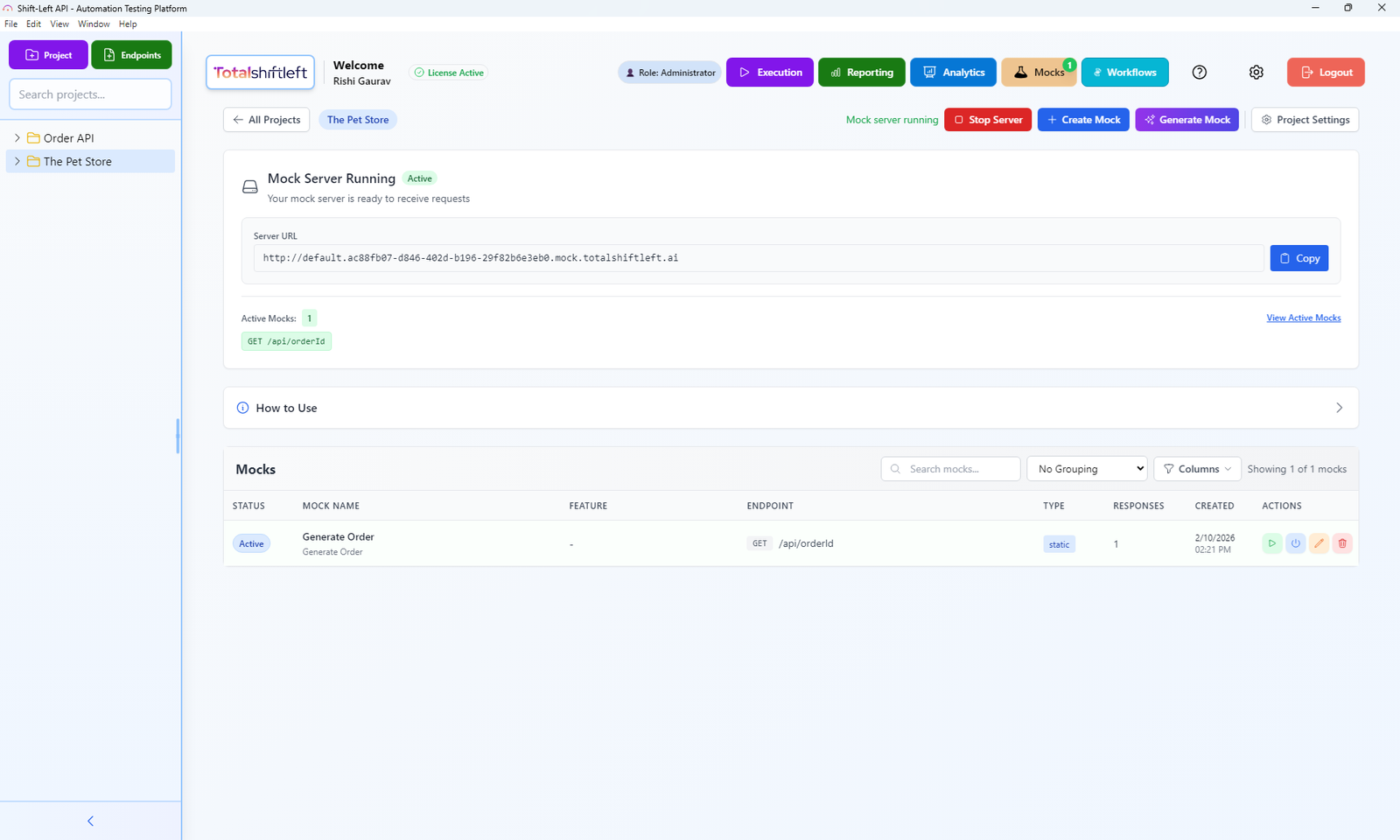

What it is: Shift-Left API is an AI-powered no-code API test automation platform that serves as the central API testing and service virtualization hub for microservices teams. It addresses two of the biggest microservices testing challenges simultaneously: comprehensive API test automation for each individual service, and realistic mock servers for testing services in isolation from their dependencies.

API testing for each microservice: Shift-Left API's AI-powered test generation works at the service level — each microservice with an OpenAPI specification gets its own auto-generated test suite. These tests run in each service's CI/CD pipeline independently, giving each service team autonomous ownership of their API quality gates without requiring coordination with other teams.

For a microservices architecture with 15 services, each with an OpenAPI spec, Shift-Left API can generate comprehensive test suites for all 15 services — covering all endpoints, authentication flows, error conditions, and edge cases — in an afternoon, compared to the weeks of manual test authoring that alternative approaches would require.
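To make the idea of spec-driven test generation concrete, here is a minimal sketch of how test cases can be enumerated from an OpenAPI document. It is not Shift-Left API's algorithm, which the article does not describe; the `ORDERS_SPEC` document and the `enumerate_test_cases` helper are hypothetical and only illustrate that every documented response in a spec is a candidate test case.

```python
from typing import Any

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}

# Hypothetical, trimmed-down OpenAPI document for an imaginary orders service.
ORDERS_SPEC: dict[str, Any] = {
    "openapi": "3.0.3",
    "paths": {
        "/orders": {
            "get": {"responses": {"200": {"description": "List orders"}}},
            "post": {
                "responses": {
                    "201": {"description": "Created"},
                    "400": {"description": "Validation error"},
                }
            },
        },
        "/orders/{orderId}": {
            "get": {
                "responses": {
                    "200": {"description": "One order"},
                    "404": {"description": "Not found"},
                }
            }
        },
    },
}


def enumerate_test_cases(spec: dict[str, Any]) -> list[tuple[str, str, int]]:
    """Return one (METHOD, path, expected_status) case per documented response."""
    cases = []
    for path, path_item in spec.get("paths", {}).items():
        for method, operation in path_item.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "parameters"
            for status in operation.get("responses", {}):
                if status.isdigit():  # ignore "default" and ranges like "2XX"
                    cases.append((method.upper(), path, int(status)))
    return sorted(cases)
```

For this spec the helper yields five cases, including the `400` and `404` error paths that hand-written suites often skip. A real generator would also need request bodies, authentication, and parameter values, which this sketch leaves out.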

Service mocking for isolation testing: Shift-Left API's built-in mock server is particularly powerful in microservices contexts. When Service A's tests need Service B to be available, teams can configure Shift-Left API to serve as a mock for Service B — responding to API calls with realistic responses generated from Service B's OpenAPI specification. This eliminates the need to deploy real dependencies for every CI/CD test run.

The mock server is configured directly from OpenAPI specifications — no custom mock programming required. Teams define response scenarios (success responses, error responses, specific edge cases) through Shift-Left API's visual interface, and the mock server handles all incoming requests with the configured responses. When Service B's OpenAPI spec updates, the mock updates automatically to reflect the new contract.
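The general technique behind spec-derived mocks can be sketched in a few lines: walk a response schema and emit a value that conforms to it, preferring any `example` the spec provides. This is an illustration under simplifying assumptions (a small subset of JSON Schema, a hypothetical `INVENTORY_ITEM_SCHEMA`), not a description of how any particular product builds its mock responses.

```python
from typing import Any

# Hypothetical response schema for Service B, as it might appear in its OpenAPI spec.
INVENTORY_ITEM_SCHEMA: dict[str, Any] = {
    "type": "object",
    "properties": {
        "sku": {"type": "string", "example": "SKU-1001"},
        "quantity": {"type": "integer", "example": 12},
        "inStock": {"type": "boolean"},
        "tags": {"type": "array", "items": {"type": "string"}},
    },
}

# Fallback values used when the schema gives no explicit example.
_DEFAULTS = {"string": "string", "integer": 0, "number": 0.0, "boolean": True}


def stub_from_schema(schema: dict[str, Any]) -> Any:
    """Build a placeholder value that conforms to a (simplified) JSON schema."""
    if "example" in schema:
        return schema["example"]
    kind = schema.get("type")
    if kind == "object":
        return {
            name: stub_from_schema(sub)
            for name, sub in schema.get("properties", {}).items()
        }
    if kind == "array":
        return [stub_from_schema(schema.get("items", {}))]
    return _DEFAULTS.get(kind)
```

Because the stub is derived from the schema rather than written by hand, regenerating it after a spec change keeps the mock aligned with the documented contract. That is the property the paragraph above relies on.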

CI/CD integration at the service level: Each service team configures Shift-Left API to integrate with their service's CI/CD pipeline independently. Tests for Service A run in Service A's GitHub Actions workflow; tests for Service B run in Service B's pipeline. This per-service autonomy aligns perfectly with the team autonomy model that microservices architectures are designed to support.

Pros:

- AI generates comprehensive API tests from each service's OpenAPI spec automatically

- Built-in mock server enables service isolation testing without deploying real dependencies

- Per-service CI/CD integration supports team autonomy

- No-code interface accessible to all team members regardless of coding background

- Supports REST, GraphQL, and SOAP — covering all API protocols common in microservices

- Analytics dashboard provides cross-service visibility into API health trends

- Forever-free Citizen Developer Edition (single user, no expiry, BYO AI key) plus a 15-day Enterprise trial — no credit card required

Cons:

- Focused on API-level testing — does not address contract testing (Pact), performance testing (k6), or distributed tracing (Jaeger)

- Maximum value requires OpenAPI documentation for each service

- Growth and Enterprise pricing for large microservices fleets requires direct engagement

Best for: The central API testing and service virtualization platform in a microservices testing stack. Every microservices team building REST, GraphQL, or SOAP services should have Shift-Left API as their primary API quality tool.

Website: totalshiftleft.ai

Pact — Consumer-Driven Contract Testing

What it is: Pact is the leading open-source framework for consumer-driven contract testing — a testing pattern where the consuming side of a service relationship defines the API contract it expects, and the provider side verifies it meets that contract, without either side needing to be deployed with the other.

How it works in microservices: Service B (consumer) writes a Pact test that defines what API responses it expects from Service A (provider). When Service B's tests run, Pact generates a contract file (a "pact") describing these expectations. Service A then runs Pact's provider verification step, checking that its actual API responses match the contract Service B defined. If Service A makes a change that would break Service B's expectations, the provider verification fails — catching the contract violation before either service is deployed.
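The consumer-then-provider flow described above can be sketched in plain Python. This deliberately does not use Pact's API; the contract format, the `write_contract` and `verify_provider` helpers, and the `/users/42` endpoint are all hypothetical. The sketch only shows the two halves of the pattern: the consumer records what it reads, and the provider checks its real response against that record.

```python
import json
from typing import Any

# Consumer side (Service B): the fields it actually reads, and their types.
CONSUMER_EXPECTATION = {
    "consumer": "service-b",
    "provider": "service-a",
    "request": {"method": "GET", "path": "/users/42"},
    "response": {"status": 200, "body": {"id": "int", "email": "str"}},
}

_TYPES = {"int": int, "str": str, "bool": bool, "float": float}


def write_contract(expectation: dict[str, Any]) -> str:
    """Consumer step: serialize expectations into a shareable contract."""
    return json.dumps(expectation, sort_keys=True)


def verify_provider(contract_json: str, status: int, body: dict[str, Any]) -> list[str]:
    """Provider step: return a list of violations (empty means compatible)."""
    expected = json.loads(contract_json)["response"]
    problems = []
    if status != expected["status"]:
        problems.append(f"status {status} != {expected['status']}")
    for field, type_name in expected["body"].items():
        if field not in body:
            problems.append(f"missing field '{field}'")
        elif not isinstance(body[field], _TYPES[type_name]):
            problems.append(f"field '{field}' is not {type_name}")
    return problems


contract = write_contract(CONSUMER_EXPECTATION)
# Extra provider fields are fine: the consumer never reads them.
ok = verify_provider(contract, 200, {"id": 42, "email": "a@example.com", "name": "Ann"})
# Renaming 'email' to 'emailAddress' breaks the consumer's expectation.
broken = verify_provider(contract, 200, {"id": 42, "emailAddress": "a@example.com"})
```

Here `ok` is an empty list and `broken` contains `"missing field 'email'"`. The provider is free to add fields, but removing or renaming one that a consumer depends on fails verification.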

Why it matters for microservices: Contract testing with Pact solves the contract drift problem that causes so many production incidents in microservices systems. By making contract verification part of each service's CI/CD pipeline, teams catch breaking changes before deployment — the consuming service's contract acts as a safety net that prevents the provider service from shipping changes that would break its consumers.

Pact Broker and Pactflow: Sharing contracts between service teams requires a central repository — the Pact Broker (self-hosted, open source) or Pactflow (commercial, hosted). The broker stores contract versions and provides the "can I deploy?" query that lets teams check whether they can safely deploy their service without breaking any consumers.
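The logic behind a "can I deploy?" query can be sketched as a lookup over recorded verification results. The data model below is an assumption made for illustration: the `VERIFICATIONS` and `DEPLOYED` tables and the service names are hypothetical, and a real broker tracks considerably more (environments, branches, pending contracts).

```python
# Hypothetical verification results a broker might hold:
# (consumer, consumer_version, provider, provider_version) -> verification passed?
VERIFICATIONS = {
    ("billing", "1.4.0", "patients", "2.0.0"): True,
    ("appointments", "3.1.0", "patients", "2.0.0"): True,
    ("billing", "1.4.0", "patients", "2.1.0"): False,
    ("appointments", "3.1.0", "patients", "2.1.0"): True,
}

# Consumer versions currently deployed to the target environment (also hypothetical).
DEPLOYED = {"billing": "1.4.0", "appointments": "3.1.0"}


def can_i_deploy(provider: str, version: str) -> tuple[bool, list[str]]:
    """Allow deployment only if every deployed consumer has a passing verification."""
    blockers = []
    for consumer, consumer_version in sorted(DEPLOYED.items()):
        key = (consumer, consumer_version, provider, version)
        # A missing result is treated as a failure: unverified is not safe.
        if not VERIFICATIONS.get(key, False):
            blockers.append(f"{consumer}@{consumer_version}")
    return (not blockers, blockers)
```

In this example `can_i_deploy("patients", "2.1.0")` is blocked by `billing@1.4.0`, while version `2.0.0` is clear to ship. Treating a missing verification as a blocker is a design choice in this sketch; it trades some deployment friction for safety.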

Pros:

- Prevents API contract regressions between independently deployed microservices

- Supports consumer-driven model where consumer teams define their expectations

- Available for Java, JavaScript, Python, Go, Ruby, .NET, and more

- Supports both REST APIs and message-based (event-driven) contracts

- Pactflow provides hosted broker with advanced analytics and deployment recording

Cons:

- Significant coordination overhead — all consumer and provider teams must adopt Pact for it to work

- Contracts must be authored in each consumer service's codebase (code-based, not no-code)

- Pact Broker setup is additional infrastructure to manage

- Does not replace functional API testing — contract tests verify shape, not business logic. For methodology details, see our contract testing for microservices guide

- Learning curve for teams new to consumer-driven contract testing concepts

Best for: Microservices organizations with multiple service teams where contract drift between services is a recurring source of production incidents. Works best alongside Shift-Left API (functional API testing) to provide both contract verification and behavioral testing.

Website: pact.io | Pactflow: pactflow.io

WireMock — HTTP Service Mocking

What it is: WireMock is an open-source HTTP service mocking and stubbing framework that provides realistic fake versions of HTTP services for testing purposes. It can run as a standalone mock server or as a library embedded in tests.

Role in microservices testing: WireMock solves the service isolation problem by allowing teams to create realistic stubs for any HTTP service their code depends on — third-party APIs, internal microservices, databases with HTTP interfaces, or any other HTTP-speaking dependency. Tests run against the WireMock stub rather than the real service, eliminating flakiness from external dependency availability.
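To show what stubbing and request verification amount to, here is a small in-memory sketch. It does not start a server and does not use WireMock itself; the `StubRegistry` class is hypothetical, and the mapping dictionaries are only loosely modeled on the request/response shape of JSON stub definitions.

```python
from typing import Any

# Stub mappings: each pairs a request matcher with a canned response.
MAPPINGS = [
    {
        "request": {"method": "GET", "urlPath": "/inventory/SKU-1001"},
        "response": {"status": 200, "jsonBody": {"sku": "SKU-1001", "quantity": 12}},
    },
    {
        "request": {"method": "GET", "urlPath": "/inventory/SKU-404"},
        "response": {"status": 404, "jsonBody": {"error": "unknown sku"}},
    },
]


class StubRegistry:
    """In-memory stand-in for a stub server: matches requests and records them."""

    def __init__(self, mappings: list[dict[str, Any]]) -> None:
        self._mappings = mappings
        self.journal: list[tuple[str, str]] = []

    def handle(self, method: str, path: str) -> tuple[int, Any]:
        """Record the request, then return the first matching canned response."""
        self.journal.append((method, path))
        for mapping in self._mappings:
            request = mapping["request"]
            if request["method"] == method and request["urlPath"] == path:
                response = mapping["response"]
                return response["status"], response["jsonBody"]
        return 404, {"error": "no stub matched"}

    def call_count(self, method: str, path: str) -> int:
        """Request verification: how many times was this call made?"""
        return self.journal.count((method, path))
```

A test would point the service under test at the stub, exercise it, and then assert on both the outcome and `call_count(...)`, for example to confirm that the inventory lookup happened exactly once.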

WireMock vs. Shift-Left API mock server: Both provide HTTP service mocking, but with different approaches. WireMock requires teams to write stub definitions in JSON or Java code — providing more programmability for complex mock behaviors but requiring more setup effort. Shift-Left API's mock server generates stubs automatically from OpenAPI specifications — requiring less setup but with less programmatic control. For teams with documented OpenAPI specs, Shift-Left API's mock server is faster to set up. For teams needing highly custom mock behavior (stateful mocks, scripted responses, complex request matching), WireMock provides more flexibility.

Pros:

- Flexible, programmable mock definitions support complex simulation scenarios

- Standalone server or embedded library modes

- Request verification lets tests assert that specific calls were made to mocked services

- Record-and-replay mode captures real service responses for offline testing

- Strong Spring Boot integration via WireMock Spring Boot Starter

- Free and open source

Cons:

- Mock definitions must be authored manually — no OpenAPI-based auto-generation

- More setup effort than Shift-Left API's integrated mock server for API-spec-driven mocking

- Stateful mock scenarios can become complex to maintain

- No built-in analytics or cross-team visibility

Best for: Java microservices teams using Spring Boot who need programmable HTTP mocking for integration tests, particularly for complex stateful scenarios that require more control than auto-generated mocks provide.

Website: wiremock.org

Testcontainers — Integration Testing with Real Dependencies

What it is: Testcontainers is an open-source library that provides lightweight, throwaway Docker container instances for use in automated tests — enabling integration tests to run against real database instances, message brokers, and other service dependencies without complex environment management.

Role in microservices testing: While mocking and contract testing focus on isolating services from their dependencies, some scenarios require testing with real dependencies. When your service logic includes complex database transactions, message queue behavior, or third-party API interactions that mocks cannot accurately simulate, Testcontainers provides a clean way to spin up real Docker containers for these dependencies within the test lifecycle — starting fresh containers per test run and tearing them down when done.

Integration with Shift-Left API: Testcontainers handles the data-layer and infrastructure-level integration testing that Shift-Left API's API tests do not cover. In a complete microservices testing stack, Testcontainers spins up real PostgreSQL, Redis, or Kafka containers for service integration tests, while Shift-Left API handles the API-level contract validation and functional testing. These two tools operate at different layers of the testing pyramid and complement rather than compete with each other.

Pros:

- Real dependency instances eliminate the gap between mocked and production behavior

- Completely disposable — each test run starts fresh, eliminating test data contamination

- Supports virtually any containerized dependency (PostgreSQL, MySQL, Redis, Kafka, RabbitMQ, Elasticsearch, and more)

- Available for Java, Python, Go, JavaScript, Rust, and more

- Ryuk (resource reaper) handles container cleanup automatically

Cons:

- Requires Docker on CI/CD agents — not available on all hosted runners without configuration

- Slower than pure mocking approaches — container startup adds time to test suites

- Not suitable for testing service-to-service API interactions (use Shift-Left API or Pact for that)

- Resource-intensive for large parallel test runs with many containers

Best for: Teams that need integration tests with real infrastructure dependencies (databases, message queues, caches) rather than mocked equivalents. Essential for validating service behavior that depends on specific infrastructure characteristics.

Website: testcontainers.com

k6 — Performance and Load Testing

What it is: k6 is a modern open-source load testing tool built for developers, providing a JavaScript API for defining performance test scenarios and a Go-based execution engine that can simulate thousands of virtual users with minimal compute overhead.

Role in microservices testing: Performance testing in microservices environments is more complex than in monolithic applications because a performance problem in one service can cascade through all dependent services — but identifying which service is the bottleneck requires testing at the service level as well as at the system level. k6 enables teams to performance test individual microservices in isolation as well as entire system call chains.

k6 in CI/CD: k6 integrates naturally into CI/CD pipelines through its CLI, enabling performance regression testing as part of automated pipelines. Teams define performance thresholds (maximum response time, maximum error rate) in k6 scripts, and the pipeline step fails if measured performance violates those thresholds. This catches performance regressions before deployment rather than during production incidents.
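The threshold gate itself is simple enough to sketch. Real k6 scripts are written in JavaScript and k6 evaluates thresholds internally; the Python below only illustrates the pass/fail logic. The limits (`MAX_P95_MS`, `MAX_ERROR_RATE`) and helper names are hypothetical.

```python
import math

# Hypothetical thresholds: 95th percentile latency and overall error rate.
MAX_P95_MS = 300.0
MAX_ERROR_RATE = 0.01


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def evaluate(latencies_ms: list[float], failed_requests: int) -> list[str]:
    """Return the violated thresholds; an empty list means the gate passes."""
    violations = []
    p95 = percentile(latencies_ms, 95)
    error_rate = failed_requests / len(latencies_ms)
    if p95 > MAX_P95_MS:
        violations.append(f"p95 {p95:.0f}ms exceeds {MAX_P95_MS:.0f}ms")
    if error_rate > MAX_ERROR_RATE:
        violations.append(f"error rate {error_rate:.1%} exceeds {MAX_ERROR_RATE:.1%}")
    return violations


def exit_code(violations: list[str]) -> int:
    """CI convention: a non-zero exit code fails the pipeline step."""
    return 1 if violations else 0
```

Gating on a percentile rather than the mean is a common choice because a handful of very slow requests can hide behind a healthy average. With 20 samples, a single 900 ms outlier still passes the p95 check here, while two outliers fail it.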

Pros:

- JavaScript-based test scripts — familiar syntax for most developers

- Extremely efficient — k6 can simulate thousands of VUs with moderate compute resources

- Built-in support for cloud-based distributed load testing via Grafana Cloud k6

- CI/CD integration through CLI with threshold-based pass/fail

- Outputs results to Grafana, InfluxDB, Datadog, and other monitoring platforms

- Free and open source core

Cons:

- Requires JavaScript scripting — not no-code

- Performance test scenarios must be authored manually (no auto-generation from specs)

- Interpreting performance results requires expertise to distinguish service-level from system-level issues

- Cloud execution via Grafana Cloud k6 is paid

Best for: DevOps teams that need to include performance regression testing in their CI/CD pipelines, particularly for high-traffic microservices where performance degradation can quickly become a production incident.

Website: k6.io

Jaeger — Distributed Tracing

What it is: Jaeger is an open-source distributed tracing platform originally developed by Uber. It provides end-to-end request tracing across microservices and accepts trace data from services instrumented with OpenTelemetry. It enables teams to visualize the complete path of a request through a microservices system and identify where failures or latency occur.

Role in microservices testing: Jaeger does not run tests — it provides the observability that makes understanding test failures and production incidents possible in microservices environments. When a test fails against a microservices system, Jaeger traces allow developers to see exactly which service in the call chain produced the error, how long each service took to respond, and where the request path deviated from the expected flow.
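The kind of question a trace answers can be sketched over a simplified span model. This is not Jaeger's data model or query API; the `Span` dataclass, the sample `TRACE`, and the service names are hypothetical and exist only to show how parent/child links localize a failure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Span:
    span_id: str
    parent_id: Optional[str]
    service: str
    duration_ms: int
    error: bool = False


# Hypothetical trace for one failing test request against the order service.
TRACE = [
    Span("a", None, "order-service", 420, error=True),
    Span("b", "a", "inventory-service", 35),
    Span("c", "a", "payment-service", 360, error=True),
    Span("d", "c", "fraud-check-service", 340, error=True),
    Span("e", "a", "notification-service", 10),
]


def root_cause(spans: list[Span]) -> Optional[Span]:
    """Return an errored span with no errored children: where the failure began."""
    errored_parents = {s.parent_id for s in spans if s.error}
    for span in spans:
        if span.error and span.span_id not in errored_parents:
            return span
    return None


def slowest_leaf(spans: list[Span]) -> Span:
    """Return the slowest span that makes no downstream calls."""
    parents = {s.parent_id for s in spans}
    leaves = [s for s in spans if s.span_id not in parents]
    return max(leaves, key=lambda s: s.duration_ms)
```

In this trace the test failed at `order-service`, but both helpers point at `fraud-check-service`: the error propagated upward through `payment-service`, and most of the 420 ms was spent in that one downstream call.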

Relationship with other testing tools: Jaeger is most valuable in combination with other testing tools in this list. When Shift-Left API's API tests fail against a microservices environment, Jaeger traces provide the cross-service context needed to understand whether the failure originates in the tested service or in a downstream dependency. When k6 performance tests show elevated response times, Jaeger flame graphs identify which service is the bottleneck.

Pros:

- OpenTelemetry compatible — works with virtually any modern language and framework

- Root cause analysis for distributed failures becomes dramatically faster

- Service dependency maps provide architectural visibility

- Adaptive sampling reduces overhead in high-throughput production environments

- Free and open source (Jaeger backend and UI)

Cons:

- Requires instrumentation of all services — each service must emit trace data

- The Jaeger backend is additional infrastructure to manage (the alternative is a hosted tracing backend, such as the managed Grafana Tempo offering in Grafana Cloud)

- Learning curve for interpreting traces and flame graphs

- Not a testing tool itself — complements but does not replace automated testing

Best for: All microservices teams as an operational observability component, particularly those debugging complex cross-service failures or performance issues that are difficult to attribute to a specific service.

Website: jaegertracing.io

Comparison Table

| Tool | Category | Pricing | No-Code | CI/CD Integration | API Testing | Service Mocking | Best For |
|---|---|---|---|---|---|---|---|
| Shift-Left API | API testing + mocking | Free Citizen Developer Edition + 15-day trial; team plans custom | Yes | Native, all platforms | Yes (REST, GraphQL, SOAP) | Yes (OpenAPI-based) | Central API testing and service virtualization |
| Pact | Contract testing | Free; Pactflow paid | No (code required) | Yes | Contract verification only | Yes (consumer side) | Consumer-driven contract testing |
| WireMock | HTTP mocking | Free (OSS) | No (JSON/code) | Yes (embedded) | No | Yes (programmable) | Java integration testing with complex mocks |
| Testcontainers | Integration testing | Free (OSS) | No (code required) | Yes | No | No (real containers) | Real dependency integration testing |
| k6 | Performance testing | Free; Cloud paid | No (JS scripting) | Yes (CLI) | Performance/load | No | Performance regression testing in CI/CD |
| Jaeger | Distributed tracing | Free (OSS) | No (infra setup) | N/A (observability) | No | No | Cross-service failure debugging and tracing |

Real-World Implementation: A Microservices Testing Strategy with Shift-Left API

A healthcare technology company with 12 microservices (patient records, appointments, billing, notifications, authentication, and more) was struggling with microservices testing. Their challenges were representative: integration tests that took 40 minutes to run, frequent contract drift incidents where service updates broke consumers, and production debugging sessions that lasted hours because there was no distributed tracing to identify which service in the call chain had failed.

They implemented a layered microservices testing strategy over 3 months:

Month 1 — API testing with Shift-Left API:

All 12 services had OpenAPI specifications. The team uploaded each spec to Shift-Left API, which generated comprehensive test suites for each service in a single day. Shift-Left API's mock server was configured to mock dependent services for each service's isolated tests.

The results from week one were immediate and striking. The Shift-Left API tests running in each service's CI/CD pipeline caught 11 API regressions that would previously have been discovered in the shared integration test environment; 3 of them were breaking changes that would have caused production incidents. The test runs completed in under 4 minutes per service, compared to 40 minutes for the full integration test suite.

Month 2 — Contract testing with Pact:

The team implemented Pact for the highest-risk service relationships — specifically the three pairs of services with the most frequent contract drift incidents. Each consumer service team wrote Pact consumer tests defining their API expectations. Provider verification ran in each provider service's CI/CD pipeline, breaking builds when provider changes would violate consumer contracts.

Within the first month of Pact deployment, the provider verification step caught two breaking changes before they were deployed — a changed field name in the patient records service and a removed optional field in the billing service. Both were caught in CI/CD and fixed in the same development cycle, rather than discovered in production.

Month 3 — Testcontainers and k6:

Critical services with complex database logic were augmented with Testcontainers-based integration tests, replacing a shared test database that had been a source of test flakiness for over a year. k6 performance tests were added to the CI/CD pipelines for the three highest-traffic services, establishing performance baselines and alerting when response times regressed.

Jaeger distributed tracing was instrumented across all 12 services, immediately transforming how the team debugged both test failures and production incidents. What previously took 2-3 hours of log investigation became 15-minute trace analysis sessions.

Outcomes at 6 months:

- Production API incidents: decreased by 71%

- Mean time to diagnose production incidents: decreased from 2.1 hours to 22 minutes (Jaeger)

- Integration test environment dependency: eliminated for PR-level testing (replaced by Shift-Left API mock server)

- Contract drift incidents in production: zero in the last 4 months (Pact)

- Developer confidence in deployments: dramatically increased, based on anecdotal feedback in team retrospectives

How to Build a Complete Microservices Testing Stack

Building a microservices testing stack is not about adopting all six tools simultaneously. It is about understanding which testing challenges are causing the most pain and addressing them in order of impact:

Start with per-service API testing using Shift-Left API. If each service does not have automated functional API tests running in its CI/CD pipeline, that is the highest-priority gap to fill. Shift-Left API's OpenAPI-based test generation makes this the fastest win available — comprehensive API coverage for an entire microservices fleet in days rather than months.

Add contract testing with Pact for high-risk service relationships. Once per-service API testing is in place, identify which service-to-service relationships have the most frequent contract drift incidents. Implement Pact for these specific relationships first, prove the value, then expand coverage as part of your broader DevOps testing strategy. Full Pact adoption across all service relationships is a multi-month organizational commitment.

Replace shared integration environments with mocking and Testcontainers. Shared integration environments that all service teams depend on are a source of slow, flaky tests that create bottlenecks. Systematically replace shared-environment dependencies with Shift-Left API mock servers (for service-to-service dependencies) and Testcontainers (for infrastructure dependencies like databases).

Add performance testing with k6 for critical services. Identify the services where performance degradation would have the highest business impact and add k6 performance regression tests to those services' CI/CD testing pipelines. Start with response time thresholds based on current baselines, then tighten them over time as you optimize.

Instrument distributed tracing with Jaeger or OpenTelemetry. Tracing instrumentation pays dividends from the first production incident it helps diagnose — and the average microservices team has multiple opportunities to use it every month. Even without a specific testing goal, distributed tracing should be considered foundational infrastructure for any microservices system.

Best Practices for Microservices Test Automation

Test each service's API contract at the service boundary. The most valuable tests for a microservices architecture are those that validate the API contract each service exposes — not the internal implementation details. Shift-Left API's API tests validate at the service boundary, which means they remain valid even as implementation changes, and they catch exactly the regressions that would affect service consumers.

Keep service tests independent of other services. Tests that require multiple services to be deployed together are slow, flaky, and difficult to maintain. Use Shift-Left API's mock server or WireMock stubs for all inter-service dependencies in unit and component test stages. Reserve multi-service integration tests for post-merge validation against staging environments.

Follow test automation best practices for DevOps across all service teams. Standardized testing practices ensure that every microservice team applies the same quality standards, making cross-service quality predictable and maintainable.

Adopt an "API specification first" development practice. The quality of AI-generated tests from Shift-Left API is directly proportional to the quality and completeness of the OpenAPI specifications they are generated from. Teams that write their OpenAPI spec before writing implementation code — spec-first development — get the best test generation results and simultaneously improve documentation quality.

Version your API contracts and test against specific versions. Microservices evolve independently. Tests should specify which version of a service's API they are testing against, and CI/CD pipelines should make explicit decisions about which service versions to test against (production, latest-main, or PR-specific). Pact's "can I deploy?" check formalizes this versioning discipline.

Invest in test data management for cross-service scenarios. Integration tests that span multiple services need consistent test data. Use test data factories or test data management tooling that can seed the same entities, with matching identifiers, across every service involved in a scenario. This remains one of the genuinely difficult areas of microservices testing, and the effort is repaid in every integration test built on top of it.
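One way to keep seed data consistent across services is a small factory that derives every identifier from the scenario name, so each service is seeded with records that reference each other correctly. The sketch below is an assumption-laden example: the customer and order services, their payload shapes, and the `build_scenario` helper are invented for illustration.

```python
import hashlib
from dataclasses import dataclass


def stable_id(prefix: str, scenario: str) -> str:
    """Derive the same ID from the same scenario name on every run."""
    digest = hashlib.sha256(f"{prefix}:{scenario}".encode()).hexdigest()[:12]
    return f"{prefix}-{digest}"


@dataclass(frozen=True)
class Scenario:
    customer_id: str
    order_id: str

    def customer_service_seed(self) -> dict:
        """Payload to load into the (hypothetical) customer service."""
        return {"id": self.customer_id, "email": f"{self.customer_id}@example.test"}

    def order_service_seed(self) -> dict:
        """Payload for the (hypothetical) order service, pointing at the same customer."""
        return {"id": self.order_id, "customer_id": self.customer_id, "status": "pending"}


def build_scenario(name: str) -> Scenario:
    """Create all identifiers for one named cross-service test scenario."""
    return Scenario(stable_id("cust", name), stable_id("ord", name))


scenario = build_scenario("checkout-happy-path")
print(scenario.customer_service_seed())
print(scenario.order_service_seed())
```

Deterministic identifiers also make reruns repeatable: a failed scenario can be seeded again with exactly the same data.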

Treat distributed tracing as testing infrastructure, not just operations. Distributed tracing is useful beyond production debugging: it also makes test failures in microservices environments much faster to diagnose. With Jaeger traces available for test environments, a team can follow a failing request across services instead of correlating logs by hand.
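A simple way to connect a test to its trace is to have the test generate the trace context itself and print the trace ID on failure. The sketch below assumes the services under test propagate the W3C Trace Context `traceparent` header (the format is `version-traceid-parentid-flags`). The helper names are hypothetical, and the step of sending the header with the request is left to whatever HTTP client the test suite uses.

```python
import re
import secrets

# version "00", 32 hex chars of trace ID, 16 hex chars of parent ID, 2 hex chars of flags
TRACEPARENT_RE = re.compile(r"^00-[0-9a-f]{32}-[0-9a-f]{16}-[0-9a-f]{2}$")


def new_traceparent(sampled: bool = True) -> str:
    """Build a W3C Trace Context `traceparent` header value for one test request."""
    trace_id = secrets.token_hex(16)   # 16 random bytes -> 32 hex characters
    parent_id = secrets.token_hex(8)   # 8 random bytes -> 16 hex characters
    flags = "01" if sampled else "00"  # "01" asks downstream services to record the trace
    return f"00-{trace_id}-{parent_id}-{flags}"


def trace_id_of(traceparent: str) -> str:
    """Extract the trace ID so a failing test can print it for lookup."""
    if not TRACEPARENT_RE.match(traceparent):
        raise ValueError(f"not a traceparent value: {traceparent!r}")
    return traceparent.split("-")[1]


header_value = new_traceparent()
print("send header traceparent:", header_value)
print("search the tracing UI for trace ID:", trace_id_of(header_value))
```

When an assertion fails, including the trace ID in the failure message lets whoever investigates jump straight to the request's path through the system.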

Microservices Testing Checklist

Use this checklist to evaluate your microservices testing strategy:

- Per-service API testing: Does each microservice have an automated API test suite running in its own CI/CD pipeline (ideally using Shift-Left API)?

- Service isolation: Can each service's tests run without deploying real upstream or downstream services (using Shift-Left API mock server or WireMock)?

- Contract testing: Are the highest-risk service-to-service contracts verified automatically (using Pact or equivalent)?

- Real dependency testing: Are services that depend on specific database or message queue behavior tested with real infrastructure (using Testcontainers)?

- Performance baselines: Do the highest-traffic services have performance regression tests in their CI/CD pipelines (using k6)?

- Distributed tracing: Is distributed tracing instrumented across all services to support test failure debugging (using Jaeger or OpenTelemetry)?

- Team autonomy: Can each service team manage their own test suites and quality gates independently, without depending on a centralized QA team?

- Deployment safety: Do teams have a reliable "can I deploy?" signal before deploying new service versions to production?
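For the performance-baselines item in the checklist above, the comparison behind a regression gate can be sketched in a few lines. This is not a k6 script (k6 can express limits of this kind itself through its thresholds feature); it only shows the logic, with a nearest-rank percentile and a 10% tolerance chosen as assumptions for the example.

```python
import math


def p95(samples_ms: list[float]) -> float:
    """Nearest-rank 95th percentile of latency samples, in milliseconds."""
    if not samples_ms:
        raise ValueError("no samples")
    ordered = sorted(samples_ms)
    rank = math.ceil(95 * len(ordered) / 100)  # 1-based nearest rank
    return ordered[rank - 1]


def regressed(samples_ms: list[float], baseline_p95_ms: float, tolerance: float = 0.10) -> bool:
    """True when the measured p95 exceeds the baseline by more than the tolerance."""
    return p95(samples_ms) > baseline_p95_ms * (1 + tolerance)


latencies = [float(i) for i in range(1, 101)]  # synthetic samples: 1 ms .. 100 ms
print(p95(latencies))
print(regressed(latencies, baseline_p95_ms=95.0))
```

A pipeline step would fail the build when `regressed` returns true, and the baseline would be updated deliberately rather than drifting with each run.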

Frequently Asked Questions

What are the best tools for testing microservices in 2026?

A complete microservices testing stack in 2026 includes: Shift-Left API for API test automation and service mocking, Pact for consumer-driven contract testing, WireMock or Shift-Left API's built-in mock server for service virtualization, Testcontainers for integration testing with real dependencies, k6 for performance testing, and Jaeger for distributed tracing when debugging cross-service failures.

How do you test microservices without deploying all services together?

Service mocking and contract testing are the two key approaches. Shift-Left API's built-in mock server creates API mocks directly from OpenAPI specifications, allowing teams to test API consumers without the real backend service running. Pact enables consumer-driven contract testing where each service team defines and verifies contracts independently.

Why is contract testing important for microservices?

Contract testing verifies that the API contracts between microservices are honored without requiring all services to be deployed together. Consumer-driven contract testing with Pact lets each consuming service team define what they expect from upstream services, and those upstream services verify they still meet those expectations on every build — preventing silent contract regressions.
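The consumer-driven idea can be reduced to a small sketch: each consumer declares only what it relies on, and the provider checks every declaration on each build. Real Pact contracts record full request and response interactions rather than bare field sets, so treat this as a simplified model. The consumer names, field names, and `broken_consumers` function are invented for the example.

```python
# Each consumer publishes only the response fields it actually reads.
# Service and field names are invented for the example.
CONSUMER_EXPECTATIONS = {
    "checkout": {"id", "price_cents"},
    "search": {"id", "title"},
}


def broken_consumers(
    provider_fields: set[str],
    expectations: dict[str, set[str]],
) -> dict[str, set[str]]:
    """Map each consumer to the fields it needs that the provider no longer returns."""
    return {
        consumer: needed - provider_fields
        for consumer, needed in expectations.items()
        if needed - provider_fields
    }


# Dropping an unused field ("description") breaks nobody.
print(broken_consumers({"id", "title", "price_cents"}, CONSUMER_EXPECTATIONS))
# Dropping "price_cents" silently breaks checkout unless the provider build checks for it.
print(broken_consumers({"id", "title"}, CONSUMER_EXPECTATIONS))
```

Because the expectations come from consumers, the provider learns which changes are safe without deploying any consumer alongside it.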

What tools does Shift-Left API provide for microservices testing?

Shift-Left API serves as the central API testing and mocking platform for microservices teams. It imports OpenAPI specifications for each service and auto-generates comprehensive API test suites, runs those tests in CI/CD pipelines per service, and provides a built-in mock server so services can be tested in isolation without requiring all dependent services to be running.

Conclusion

Testing microservices effectively requires a layered strategy that addresses each of the unique challenges the architecture introduces: service isolation, contract drift, environment complexity, performance at scale, and cross-service failure attribution. No single tool solves all of these challenges — but the right combination does.

At the center of that combination is Shift-Left API, which addresses the two highest-priority microservices testing needs simultaneously: comprehensive API functional testing for each individual service, and realistic mock servers for service isolation testing. By generating test suites from OpenAPI specifications and running them in per-service CI/CD pipelines, Shift-Left API gives microservices teams the autonomy and coverage depth they need without the overhead of building custom test automation frameworks for each service.

Around Shift-Left API, Pact supplies the contract testing layer that prevents cross-service contract regressions, Testcontainers covers real dependency testing for infrastructure-sensitive scenarios, and k6 guards against performance regressions. Jaeger adds the distributed observability that makes debugging test failures and production incidents fast rather than painful.

If you are building or maintaining a microservices architecture and have not yet implemented comprehensive per-service API testing, the fastest path to that coverage is Shift-Left API. Upload your first OpenAPI spec today and see your service's test suite generated in minutes.

Start your free 15-day trial — no credit card required. Solo developer? Grab the forever-free Citizen Developer Edition — single-user, no expiry.
