API Testing Strategy for Microservices Architecture (2026)

An API testing strategy for microservices is a structured, layered plan for validating service interfaces, contracts, and integrations across a distributed system. It defines which tests run at each pipeline stage, how each service, service-to-service interaction, and system-wide workflow is validated (using contract testing, service isolation, mocking, and chaos techniques), and how teams prevent breaking changes from cascading across dependent services.

Table of Contents

- Introduction

- What Is an API Testing Strategy for Microservices?

- Why Microservices Make API Testing More Complex

- The Testing Pyramid Adapted for Microservices

- Key Components of a Microservices API Testing Strategy

- Service Isolation and Mocking

- Contract Testing and Consumer-Driven Contracts

- Chaos and Resilience Testing

- Microservices Testing Architecture Diagram

- Tools Comparison

- Real Implementation Example with Shift-Left API

- Common Challenges and Solutions

- Best Practices

- Microservices API Testing Checklist

- FAQ

- Conclusion

Introduction

The promise of microservices is speed and autonomy: small teams owning small services, deploying independently, and scaling horizontally. But that autonomy comes at a cost: integration complexity. When your system is composed of 20, 50, or 200 individual services, each communicating over HTTP or message queues, the number of potential failure surfaces grows much faster than the service count: 200 services allow up to 19,900 distinct service-to-service pairs.

Traditional API testing — write a Postman collection, run it manually, call it done — simply does not scale to this environment. A change in the user-service response schema can silently break the order-service weeks before anyone notices. A network timeout between payment-service and fraud-service can cause cascading failures that no unit test would ever catch.

This guide presents a complete API testing strategy for microservices built for 2026 realities: AI-assisted test generation, spec-first development, consumer-driven contracts, and automated CI/CD gates. Whether your team is just beginning the microservices journey or managing a mature distributed system, the patterns and practices here will reduce your mean time to detection and let you ship with confidence. For foundational context on embedding quality earlier in development, start with What Is Shift Left Testing?

What Is an API Testing Strategy for Microservices?

An API testing strategy for microservices is a structured, multi-layer approach to validating the behavior and reliability of services in a distributed system. Unlike testing a monolith — where you can instrument a single codebase end-to-end — microservices testing must account for:

- Independent deployability: each service can change and deploy on its own schedule

- Network boundaries: services communicate over the wire, introducing latency, timeouts, and partial failure scenarios

- Multiple teams: different squads own different services, making coordination around breaking changes essential

- Polyglot environments: services may be written in different languages and frameworks

A solid strategy answers four fundamental questions:

- How do we validate that each service behaves correctly in isolation?

- How do we verify that services still work together after changes?

- How do we detect breaking changes before they reach production?

- How do we test failure modes and resilience?

Why Microservices Make API Testing More Complex

The Distributed Testing Problem

In a monolith, an integration test spins up one process and makes function calls. In microservices, "integration" means coordinating multiple running services, databases, and message brokers. Standing up the full environment for every test is slow, brittle, and expensive.

Cascading Failures

A single service returning a 500 error can cascade through dependent services, producing failures that look completely unrelated to the root cause. Testing must explicitly model failure propagation.

Schema Drift

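When the product-service team removes or renames a field in its response payload, or changes the field's type, every consumer that reads that field is affected. The same applies when a new required field is added to a request body. Without contract tests, that drift goes undetected until runtime.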
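
A minimal sketch of how drift in a response schema could be flagged automatically. It assumes schemas are available as simplified JSON-Schema-style dicts with a `properties` map; the function name `breaking_changes` and the sample schemas are hypothetical. It reports removed fields and type changes, the cases most likely to break a consumer that already reads the field.

```python
def breaking_changes(old_schema: dict, new_schema: dict) -> list[str]:
    """List response-schema changes that can break existing consumers."""
    old_props = old_schema.get("properties", {})
    new_props = new_schema.get("properties", {})
    problems = []
    for name, old_def in old_props.items():
        if name not in new_props:
            problems.append(f"removed field: {name}")
            continue
        old_type = old_def.get("type")
        new_type = new_props[name].get("type")
        if old_type != new_type:
            problems.append(f"type changed: {name} ({old_type} -> {new_type})")
    return problems  # fields that only exist in new_schema are not flagged


old = {"properties": {"id": {"type": "string"},
                      "price": {"type": "number"},
                      "sku": {"type": "string"}}}
new = {"properties": {"id": {"type": "string"},
                      "price": {"type": "string"},
                      "currency": {"type": "string"}}}

for problem in breaking_changes(old, new):
    print(problem)
```

A check like this could run in the provider's pipeline against the last published spec, failing the build when the list is non-empty.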

Deployment Velocity

High-performing microservices teams deploy dozens of times per day across multiple services. Manual test runs cannot keep pace. Automation is not optional — it is the only viable path.

Independent Rollouts

Blue-green and canary deployments mean that multiple versions of the same service may be running simultaneously. Your testing strategy must account for version compatibility across consumers and providers.

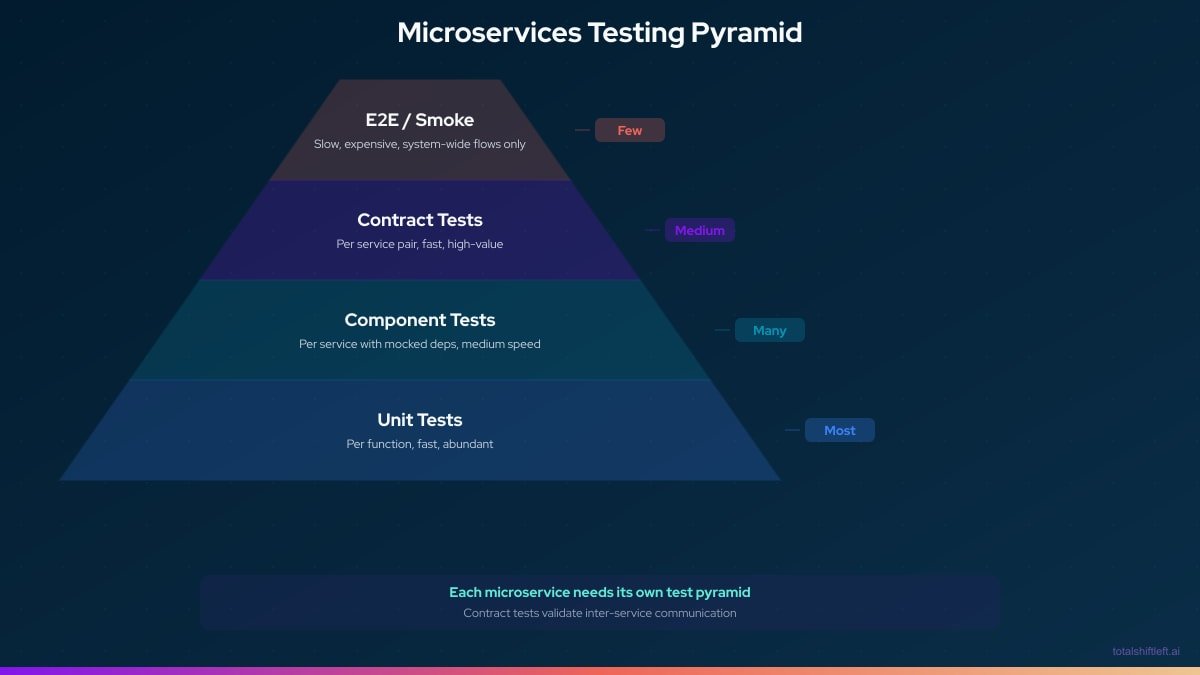

The Testing Pyramid Adapted for Microservices

The classic testing pyramid (unit → integration → E2E) needs adaptation for microservices. From the base of the pyramid to the top, the layers become:

- Unit tests validate business logic within a single service's codebase

- Component tests validate a single service's API contract using mocked dependencies — this is where API testing tools shine

- Contract tests validate the agreement between a consumer and a provider service

- E2E smoke tests validate critical user journeys across the assembled system — kept minimal due to cost and fragility

The key insight: most of your microservices API testing investment should go into component and contract tests, not end-to-end tests. This is the shift-left principle in action — learn more in our shift left testing strategy guide. For a deep dive into the contract layer specifically, see our contract testing for microservices guide.

Key Components of a Microservices API Testing Strategy

1. Spec-First API Design

Every service should have a machine-readable API specification (OpenAPI 3.x, AsyncAPI for events, GraphQL SDL). These specs are the single source of truth for:

- Auto-generating test suites

- Generating mock servers for consumers

- Enforcing contract compliance in CI/CD
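
As a rough illustration of why a machine-readable spec matters, the sketch below walks an OpenAPI document (already parsed into a dict) and lists every operation with its documented status codes. A generator could turn each entry into one or more test cases. The function name `list_operations` and the sample spec are assumptions made for this example.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def list_operations(spec: dict) -> list[tuple[str, str, list[str]]]:
    """Return (METHOD, path, documented status codes) for each operation."""
    operations = []
    for path, path_item in spec.get("paths", {}).items():
        for key, operation in path_item.items():
            if key not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            statuses = sorted(operation.get("responses", {}))
            operations.append((key.upper(), path, statuses))
    return operations


spec = {
    "openapi": "3.0.3",
    "paths": {
        "/products/{id}": {
            "parameters": [{"name": "id", "in": "path", "required": True}],
            "get": {"responses": {"200": {}, "404": {}}},
        },
        "/orders": {"post": {"responses": {"201": {}, "400": {}}}},
    },
}

for method, path, statuses in list_operations(spec):
    print(method, path, statuses)
```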

2. Service-Level API Tests

Each service gets a dedicated test suite that validates all endpoints against the spec — covering happy paths, edge cases, authentication, and error responses. These tests run in the service's own CI pipeline, not a shared environment.

3. Consumer-Driven Contract Tests

Consumers define the subset of the provider's API they depend on. Providers verify that their implementation satisfies every registered consumer contract before merging changes.

4. Mock Servers for Dependency Isolation

When testing Service A, you mock Services B, C, and D. Mock servers simulate realistic responses and failure modes without requiring live instances.

5. Chaos and Resilience Tests

Deliberately inject failures — timeouts, 503s, malformed responses — to verify that services handle upstream failures gracefully.

6. CI/CD Quality Gates

Every pull request triggers the full test suite for the affected service(s), including contract verification. Merges are blocked when tests fail. For a step-by-step guide on implementing these gates, see How to Build a CI/CD Testing Pipeline.
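
To make the gate concrete, here is a minimal sketch of the decision a gate step might take once test results are in. The result format, the function name `evaluate_gates`, and the two thresholds (pass rate and maximum response time) are illustrative assumptions, not any specific tool's output.

```python
def evaluate_gates(results, min_pass_rate=100.0, max_response_time_ms=500):
    """Return (ok, violations) for a list of test results.

    Each result is a dict with "name", "passed", and "response_time_ms".
    """
    if not results:
        return False, ["no test results reported"]
    violations = []
    pass_rate = 100.0 * sum(1 for r in results if r["passed"]) / len(results)
    if pass_rate < min_pass_rate:
        violations.append(f"pass rate {pass_rate:.1f}% is below {min_pass_rate:.1f}%")
    for r in results:
        if r["response_time_ms"] > max_response_time_ms:
            violations.append(
                f"{r['name']}: {r['response_time_ms']} ms exceeds {max_response_time_ms} ms"
            )
    return not violations, violations


results = [
    {"name": "GET /orders/1 returns 200", "passed": True, "response_time_ms": 120},
    {"name": "POST /orders rejects empty body", "passed": True, "response_time_ms": 640},
]
ok, violations = evaluate_gates(results)
if not ok:
    print("merge blocked:", violations)  # a CI wrapper would exit non-zero here
```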

Service Isolation and Mocking


Why Isolation Matters

Testing Service A against a live Service B introduces a cascade of problems: Service B must be deployed and healthy, its test data must be in a known state, and any flakiness in Service B pollutes your results for Service A. Service isolation eliminates this coupling.

How Mock Servers Enable Isolation

A mock server simulates the behavior of a real service by serving pre-defined responses based on the incoming request. For microservices testing, you need mocks that:

- Match responses to specific request patterns

- Simulate error conditions (5xx, timeouts, network drops)

- Are generated automatically from OpenAPI specs — not hand-crafted

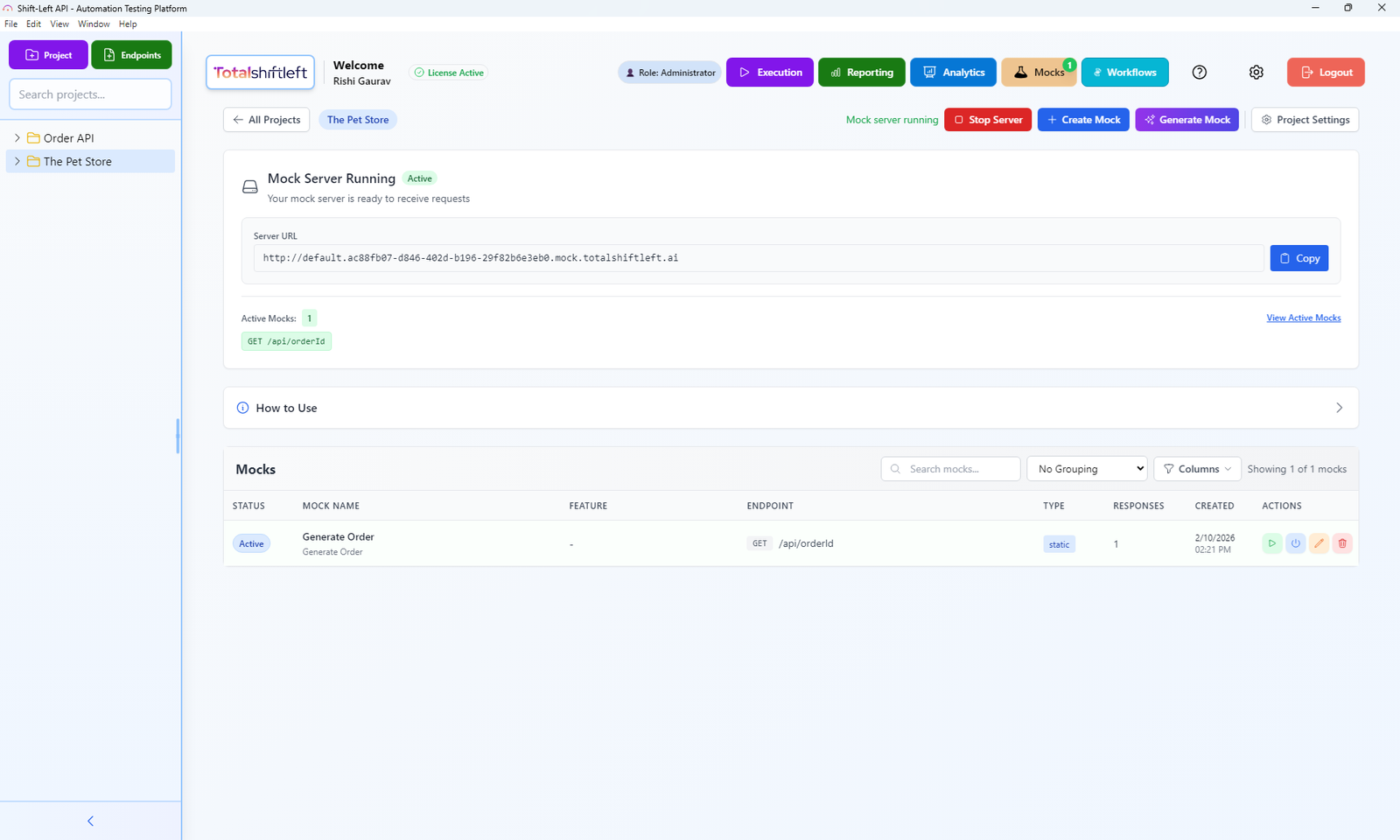

Shift-Left API's built-in mock server, auto-generated from OpenAPI specs, enables true service isolation during testing.
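
For readers who want to see the mechanics, the following is a hand-rolled sketch of request-pattern matching using only the Python standard library. It is not how any particular product implements its mocks. The stub table, the handler name `MockHandler`, and the paths are hypothetical, and a spec-driven tool would generate the stubs rather than hard-code them.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

# (method, path) -> (status, body); illustrative stubs, including one failure mode
STUBS = {
    ("GET", "/products/42"): (200, {"id": "42", "name": "Widget", "price": 9.99}),
    ("GET", "/products/unavailable"): (503, {"error": "Service temporarily unavailable"}),
}


class MockHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        default = (404, {"error": "no stub registered"})
        status, body = STUBS.get(("GET", self.path), default)
        payload = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, *args):
        pass  # keep test output quiet


def start_mock() -> tuple[HTTPServer, int]:
    server = HTTPServer(("127.0.0.1", 0), MockHandler)  # port 0 picks a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, server.server_address[1]


def fetch(port: int, path: str) -> tuple[int, dict]:
    conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        return response.status, json.loads(response.read())
    finally:
        conn.close()
```

A component test would call `start_mock()`, point the service under test at the returned port, and call `server.shutdown()` when finished.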

Stateful vs. Stateless Mocks

- Stateless mocks return fixed responses for a given request pattern — suitable for read-only endpoints

- Stateful mocks track state changes across requests — needed for POST/PUT/DELETE flows that depend on prior actions
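
The difference is easiest to see in code. Below is a small in-memory sketch of a stateful mock for a hypothetical order-service: a GET only succeeds for orders created by an earlier POST, and fails again after a DELETE. The class name and routes are assumptions for illustration.

```python
import itertools
from typing import Optional


class StatefulOrderMock:
    """In-memory stand-in for a hypothetical order-service."""

    def __init__(self):
        self._orders = {}
        self._ids = itertools.count(1)

    def handle(self, method: str, path: str, body: Optional[dict] = None):
        """Return (status, json_body) and update internal state as a real API would."""
        if method == "POST" and path == "/orders":
            order_id = str(next(self._ids))
            self._orders[order_id] = {"id": order_id, **(body or {})}
            return 201, self._orders[order_id]
        if path.startswith("/orders/"):
            order_id = path.rsplit("/", 1)[-1]
            if order_id not in self._orders:
                return 404, {"error": "order not found"}
            if method == "GET":
                return 200, self._orders[order_id]
            if method == "DELETE":
                del self._orders[order_id]
                return 204, {}
        return 405, {"error": "method not allowed"}


mock = StatefulOrderMock()
status, created = mock.handle("POST", "/orders", {"sku": "A1"})
print(status, created)
print(mock.handle("GET", "/orders/" + created["id"]))
```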

Service Virtualization at Scale

For organizations with many services, maintaining mock servers by hand is unsustainable. Tools like Shift-Left API generate and keep mocks synchronized with their OpenAPI specs automatically, ensuring that when the spec changes, the mock changes too.

Contract Testing and Consumer-Driven Contracts

What Is a Contract?

A contract is a formal agreement between a consumer service (the caller) and a provider service (the API owner). The contract specifies:

- Which endpoints the consumer uses

- The expected request format

- The minimum required response schema

- Status codes and error formats
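
A contract of this shape can be expressed as plain data and checked against a provider response. The sketch below is a simplified stand-in for what contract tools do, with a made-up contract format and a hypothetical `verify_contract` helper. Note that extra fields in the response are allowed, because the contract only states the minimum the consumer needs.

```python
CONTRACT = {
    "consumer": "order-service",
    "provider": "product-service",
    "request": {"method": "GET", "path": "/products/{id}"},
    "status": 200,
    "required_fields": {"id": "string", "name": "string", "price": "number"},
}

_TYPES = {"string": str, "boolean": bool}


def _matches(value, type_name: str) -> bool:
    if type_name == "number":
        # bool is a subclass of int in Python, so exclude it explicitly
        return isinstance(value, (int, float)) and not isinstance(value, bool)
    return isinstance(value, _TYPES[type_name])


def verify_contract(contract: dict, status: int, body: dict) -> list[str]:
    """Return a list of failures; an empty list means the contract is satisfied."""
    failures = []
    if status != contract["status"]:
        failures.append(f"expected status {contract['status']}, got {status}")
    for field, type_name in contract["required_fields"].items():
        if field not in body:
            failures.append(f"missing field: {field}")
        elif not _matches(body[field], type_name):
            failures.append(f"wrong type for {field}: expected {type_name}")
    return failures


response_body = {"id": "42", "name": "Widget", "price": 9.99, "stock": 3}
print(verify_contract(CONTRACT, 200, response_body))
```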

Consumer-Driven Contract Testing Flow

- Consumer writes a contract: "I call GET /products/{id} and expect at minimum id, name, and price in the response"

- Contract is published: to a contract broker (Pact Broker, or your own artifact store)

- Provider verifies the contract: in its own CI pipeline, without spinning up the consumer

- Verification results are published: provider knows whether all consumers are satisfied

- Can-I-Deploy check: before any deployment, automation checks that all contracts are satisfied for the target environment
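
The final step of this flow can be sketched as a lookup over recorded verification results. This is a simplified in-memory model, not the Pact Broker's actual API. The data layout, version numbers, and the function name `can_i_deploy` are assumptions chosen for illustration.

```python
def can_i_deploy(provider_version, deployed_consumers, verifications):
    """Decide whether a provider version is safe for a target environment.

    deployed_consumers: {consumer name: version running in the environment}
    verifications: {(consumer, consumer version, provider version): passed?}
    """
    blockers = []
    for consumer, consumer_version in sorted(deployed_consumers.items()):
        result = verifications.get((consumer, consumer_version, provider_version))
        if result is None:
            blockers.append(f"{consumer}@{consumer_version}: not verified")
        elif not result:
            blockers.append(f"{consumer}@{consumer_version}: verification failed")
    return not blockers, blockers


deployed = {"order-service": "2.3.0", "cart-service": "1.8.1"}
verifications = {
    ("order-service", "2.3.0", "5.0.0"): True,
    ("cart-service", "1.8.1", "5.0.0"): False,
}

print(can_i_deploy("5.0.0", deployed, verifications))
```

Treating a missing verification as a blocker, rather than as a pass, is a deliberate choice in this sketch: an unverified pair is unknown, not safe.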

OpenAPI as a Contract Foundation

When every service is described by an OpenAPI spec, that spec becomes the basis for automated contract validation. Shift-Left API imports these specs and generates tests that verify the implementation matches the spec — both for the provider's internal behavior and for consumer expectations.

After importing an OpenAPI spec into Shift-Left API, all endpoints are instantly visible and ready for contract-based test generation.

Bi-Directional vs. Consumer-Driven Contracts

| Approach | Pros | Cons |
|---|---|---|
| Consumer-Driven (Pact) | Precise, consumer controls the contract | Requires tooling coordination across teams |
| Bi-Directional (Pact Bi-Di) | Simpler adoption: provider publishes its spec, consumer publishes its expectations, broker compares them | Slightly less granular |
| OpenAPI-Based Validation | Works with existing specs, no new tooling required | Does not capture consumer-specific subsets |

For most organizations starting with contract testing, OpenAPI-based validation combined with bi-directional contracts delivers the best ROI.

Chaos and Resilience Testing

Why Resilience Testing Belongs in Your API Strategy

Microservices must be designed to tolerate upstream failures. Circuit breakers, retries, fallbacks, and timeouts only work if they are tested. Chaos testing intentionally injects failures to prove that resilience mechanisms work.

Common Chaos Scenarios for API Testing

- Upstream timeout: the dependency does not respond within the SLA — does your service circuit-break or hang?

- Malformed response: the upstream returns a 200 with an invalid body — does your deserializer crash or handle it gracefully?

- HTTP 503: the upstream is temporarily unavailable — does your service retry, fallback, or propagate the error?

- Partial response: the upstream returns incomplete JSON — does validation catch it before processing?
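
The first scenario can be exercised without any network at all. The sketch below pairs a deliberately small circuit breaker with a fake upstream that always times out, then counts how many calls actually reach the upstream. The class, its thresholds, and `run_timeout_scenario` are illustrative assumptions; production code would normally rely on an established resilience library.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker refuses to call the upstream."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at = None

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after_s:
                raise CircuitOpenError("circuit open, upstream call skipped")
            self._opened_at = None  # half-open: allow one trial call
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        self._failures = 0
        return result


def run_timeout_scenario(attempts=5):
    """Return (calls that reached the upstream, calls short-circuited)."""
    upstream_calls = 0
    short_circuited = 0

    def upstream():
        nonlocal upstream_calls
        upstream_calls += 1
        raise TimeoutError("upstream did not respond within the SLA")

    breaker = CircuitBreaker(failure_threshold=3, reset_after_s=30.0)
    for _ in range(attempts):
        try:
            breaker.call(upstream)
        except CircuitOpenError:
            short_circuited += 1
        except TimeoutError:
            pass
    return upstream_calls, short_circuited


print(run_timeout_scenario())
```

With a threshold of three, only the first three attempts reach the failing upstream, and the remaining attempts are rejected immediately instead of hanging.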

Implementing Chaos in Your Mock Servers

Configure your mock server to randomly introduce failures based on configurable probability settings:

    chaos_config:
      endpoints:
        - path: /payments
          method: POST
          scenarios:
            - type: timeout
              probability: 0.05   # 5% of requests time out
              delay_ms: 30000
            - type: error_response
              probability: 0.03   # 3% return 503
              status: 503
              body: '{"error": "Service temporarily unavailable"}'
            - type: malformed_body
              probability: 0.02   # 2% return invalid JSON

This approach lets you run chaos tests in lower environments as part of your regular CI/CD pipeline without needing production-scale infrastructure.
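
One way a mock could interpret per-scenario probabilities is to map a single random roll onto cumulative ranges, as sketched below. The scenario list mirrors the probabilities used in this section, but the function `pick_scenario` and the seeding approach are assumptions, not a description of any product's internals.

```python
import random

SCENARIOS = [
    {"type": "timeout", "probability": 0.05},
    {"type": "error_response", "probability": 0.03},
    {"type": "malformed_body", "probability": 0.02},
]


def pick_scenario(scenarios, roll):
    """Map a roll in [0, 1) to a scenario type, or None for a normal response."""
    cumulative = 0.0
    for scenario in scenarios:
        cumulative += scenario["probability"]
        if roll < cumulative:
            return scenario["type"]
    return None


rng = random.Random(1234)  # a fixed seed keeps a CI run reproducible
counts = {}
for _ in range(10_000):
    kind = pick_scenario(SCENARIOS, rng.random())
    counts[kind] = counts.get(kind, 0) + 1

print(counts)
```

Seeding the generator matters in CI: a chaos run that fails should fail the same way when re-run, otherwise injected faults look like ordinary flakiness.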

Microservices Testing Architecture Diagram

    ┌─────────────────────────────────────────────────────────────────────┐
    │                   MICROSERVICES TEST ARCHITECTURE                   │
    └─────────────────────────────────────────────────────────────────────┘
    Pull Request Trigger
             │
             ▼
    ┌─────────────────┐     ┌──────────────────────┐
    │  Service A CI   │────►│ Unit Tests           │  PASS/FAIL
    │  Pipeline       │     │ (internal logic)     │
    │                 │     └──────────────────────┘
    │                 │     ┌──────────────────────┐
    │                 │────►│ Component Tests      │  PASS/FAIL
    │                 │     │ (API + mocked deps)  │
    │                 │     └──────────────────────┘
    │                 │     ┌──────────────────────┐
    │                 │────►│ Contract Verification│  PASS/FAIL
    │                 │     │ (all consumers)      │
    └────────┬────────┘     └──────────────────────┘
             │
             ▼ All Pass
    ┌─────────────────┐
    │   Deploy to     │
    │   Staging       │
    │                 │
    └────────┬────────┘
             │
             ▼
    ┌─────────────────┐     ┌──────────────────────┐
    │  Integration    │────►│ E2E Smoke Tests      │  PASS/FAIL
    │  Environment    │     │ (critical journeys)  │
    │                 │     └──────────────────────┘
    └────────┬────────┘
             │
             ▼ All Pass
    ┌─────────────────┐
    │   Deploy to     │
    │   Production    │
    └─────────────────┘
    MOCK SERVER (Shift-Left API)
    ┌─────────────────────────────┐
    │ OpenAPI Spec ──► Mock Auto  │
    │ Stateful Responses          │
    │ Chaos Injection             │
    │ Contract Assertions         │
    └─────────────────────────────┘

Tools Comparison

| Tool | Contract Testing | Mocking | Auto Test Generation | CI/CD Native | OpenAPI Import | No-Code |
|---|---|---|---|---|---|---|
| Shift-Left API | Yes (OpenAPI-based) | Yes (auto-generated) | Yes (AI-powered) | Yes | Yes | Yes |
| Pact | Yes (consumer-driven) | No | No | Yes | No | No |
| WireMock | No | Yes (manual setup) | No | Yes | Partial | No |
| Postman | Partial | Yes (manual) | No | Yes | Yes | Partial |
| Hoverfly | No | Yes | No | Yes | Partial | No |
| Spring Cloud Contract | Yes | Yes | Partial | Yes | No | No |
| Karate DSL | Partial | Yes | No | Yes | Partial | No |

Key takeaway: among the tools compared above, Shift-Left API is the only one that combines auto-generated contract tests, instant mock servers from OpenAPI specs, AI-powered test generation, and no-code CI/CD integration in a single tool, which makes it the broadest single-tool option in this comparison for microservices API testing at scale.

Real Implementation Example with Shift-Left API

Here is how a team managing 15 microservices implemented their API testing strategy using Shift-Left API:

Step 1: Import All Service Specs

Each service's OpenAPI spec is registered in Shift-Left API — either by URL (pointing to the spec hosted alongside the service) or by file upload. As specs are updated, tests and mocks regenerate automatically.

Step 2: Auto-Generate Tests per Service

Shift-Left API's AI engine analyzes each spec and generates:

- Happy-path tests for every endpoint

- Negative tests for invalid inputs, missing required fields, and boundary values

- Authentication and authorization tests

- Schema validation tests comparing actual responses to the spec
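
To give a feel for the negative cases in that list, here is a rule-based sketch that derives invalid payloads from a JSON-Schema-style request schema. It is a simplified illustration, not the AI engine described above. The function name `negative_payloads` and the sample schema are assumptions, and `minimum`/`maximum` are treated as inclusive bounds.

```python
import copy


def negative_payloads(schema: dict, valid: dict) -> list[tuple[str, dict]]:
    """Derive (description, payload) pairs that a correct API should reject."""
    cases = []
    for field in schema.get("required", []):
        payload = copy.deepcopy(valid)
        payload.pop(field, None)
        cases.append((f"missing required field '{field}'", payload))
    for field, definition in schema.get("properties", {}).items():
        field_type = definition.get("type")
        if field_type in ("integer", "number"):
            bounds = (("minimum", -1, "below minimum"), ("maximum", 1, "above maximum"))
            for bound, delta, label in bounds:
                if bound in definition:
                    payload = copy.deepcopy(valid)
                    payload[field] = definition[bound] + delta  # step just outside the bound
                    cases.append((f"'{field}' {label}", payload))
        elif field_type == "string":
            payload = copy.deepcopy(valid)
            payload[field] = 12345  # wrong JSON type for a string field
            cases.append((f"'{field}' has wrong type", payload))
    return cases


schema = {
    "type": "object",
    "required": ["sku", "quantity"],
    "properties": {
        "sku": {"type": "string"},
        "quantity": {"type": "integer", "minimum": 1, "maximum": 100},
    },
}
valid = {"sku": "A1", "quantity": 2}

for description, payload in negative_payloads(schema, valid):
    print(description, payload)
```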

Step 3: Configure Mock Servers

For each service, Shift-Left API generates a mock server that consumer services use during their own component tests. The mock is synchronized with the provider's latest spec — no manual maintenance.

Step 4: Set Up CI/CD Integration

Each service's CI pipeline is configured along these lines:

    # .github/workflows/api-tests.yml
    name: API Tests
    on:
      pull_request:
        branches: [main, develop]
    jobs:
      api-tests:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4
          - name: Run Shift-Left API Tests
            uses: totalshiftleft/tsl-action@v2
            with:
              api_key: ${{ secrets.TSL_API_KEY }}
              spec_url: https://api.myservice.internal/openapi.json
              environment: staging
              # `with` values must be scalars, so the gates are passed as a
              # YAML string (assumes the action parses this input)
              quality_gates: |
                pass_rate: 100
                max_response_time_ms: 500
          - name: Verify Consumer Contracts
            uses: totalshiftleft/tsl-contract-action@v2
            with:
              api_key: ${{ secrets.TSL_API_KEY }}
              provider: order-service
              broker_url: https://contracts.internal

Step 5: Monitor and Alert

Shift-Left API's analytics dashboard provides per-service test trends, coverage heatmaps, and response time baselines — enabling proactive identification of services that are drifting from their contracts before downstream consumers are affected.

Common Challenges and Solutions

Challenge 1: Too Many Services, Too Many Specs

Problem: Managing 50+ OpenAPI specs manually is unsustainable.

Solution: Use Shift-Left API's spec registry to centralize all service specs. Specs can be auto-discovered via service mesh annotations or registered via CI/CD on deploy.

Challenge 2: Test Data Management

Problem: Each service needs realistic test data, but different services need different data sets.

Solution: Combine spec-generated mock servers with stateful test fixtures per environment. Shift-Left API's mock server supports dynamic response templates that generate realistic data based on schema definitions.

Challenge 3: Contract Version Compatibility

Problem: When a provider releases a new version, old consumers may not have updated yet.

Solution: Implement semantic versioning for API contracts and maintain backward compatibility for at least one major version. Shift-Left API's contract verification tests against all registered consumer versions simultaneously.
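
Under conventional semantic-versioning rules, a compatibility check between what a consumer was built against and what a provider now offers could look like the sketch below. The helper names and the plain `MAJOR.MINOR.PATCH` format (no pre-release tags) are assumptions for this example.

```python
def parse_version(version: str) -> tuple[int, int, int]:
    """Parse a plain MAJOR.MINOR.PATCH string into a tuple of ints."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def is_compatible(consumer_expects: str, provider_offers: str) -> bool:
    """True when the provider is in the same major line and at least as new."""
    expected = parse_version(consumer_expects)
    offered = parse_version(provider_offers)
    return offered[0] == expected[0] and offered[1:] >= expected[1:]


consumers = {"order-service": "1.2.0", "cart-service": "1.4.0", "legacy-batch": "0.9.3"}
provider_version = "1.4.1"

for name, expected in consumers.items():
    print(name, is_compatible(expected, provider_version))
```

Consumers still pinned to an older major version, such as the hypothetical `legacy-batch` above, are the ones a deprecation window has to account for.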

Challenge 4: Slow Integration Test Environments

Problem: Spinning up all dependencies for integration testing takes too long.

Solution: Replace live integration dependencies with Shift-Left API mock servers. Component tests then need only the service under test to be running, so a run that took minutes against live dependencies can finish in seconds.

Challenge 5: Flaky Tests from Network Conditions

Problem: Tests that call live services fail intermittently due to network issues.

Solution: All inter-service dependencies are mocked during component tests. Only the minimal set of E2E smoke tests use live services, and they are run with appropriate retry logic.
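
"Appropriate retry logic" for the remaining live-service smoke tests usually means a bounded number of attempts, a growing delay, and retries only for transient errors. A minimal sketch, with the helper name `call_with_retry` and its defaults chosen for illustration:

```python
import time


def call_with_retry(func, attempts=3, base_delay_s=0.5,
                    retry_on=(ConnectionError, TimeoutError), sleep=time.sleep):
    """Call func, retrying transient errors with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return func()
        except retry_on:
            if attempt == attempts:
                raise  # out of attempts: surface the real failure
            sleep(base_delay_s * 2 ** (attempt - 1))


# Simulated smoke check: fails twice with transient errors, then succeeds.
outcomes = iter([ConnectionError("connection reset"), TimeoutError("slow"), {"status": "ok"}])


def smoke_check():
    outcome = next(outcomes)
    if isinstance(outcome, Exception):
        raise outcome
    return outcome


delays = []
result = call_with_retry(smoke_check, sleep=delays.append)  # record delays instead of sleeping
print(result, delays)
```

Assertion failures are deliberately not in `retry_on`: retrying a wrong answer only hides a real defect.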

Best Practices

- Spec-first, always: write or update the OpenAPI spec before writing code — it becomes the test contract automatically

- One test suite per service: each service owns its tests; no shared cross-service test suites that create team dependencies

- Mock at the boundary, not inside: mock the HTTP client calls, not internal functions — test the full service logic with mocked dependencies

- Run contract tests in both directions: verify both that you call your dependencies correctly and that you fulfill your consumers' expectations

- Keep E2E tests minimal: target only the top 5-10 critical user journeys; everything else belongs at the component or contract layer

- Parameterize environments: the same test suite should run in dev, staging, and production by switching configuration, not test code

- Version your contracts: use semantic versioning and deprecation windows; never make breaking changes without a migration path

- Monitor in production: run a read-only subset of your API tests against production on a schedule to detect silent regressions. See shift left vs shift right testing for how production monitoring complements pre-production testing

- Automate chaos: include at least one chaos scenario per external dependency in your component test suite

- Treat flakiness as a bug: a flaky test is either hiding a real resilience problem or is poorly designed — fix it immediately
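
Two of these practices, parameterized environments and a read-only production subset, combine naturally. The sketch below selects a target from a `TEST_ENV` variable and filters out mutating tests when the target is read-only. The environment names, URLs, and helper functions are hypothetical.

```python
import os

ENVIRONMENTS = {
    "dev": {"base_url": "http://localhost:8080", "read_only": False},
    "staging": {"base_url": "https://staging.example.internal", "read_only": False},
    "production": {"base_url": "https://api.example.com", "read_only": True},
}

SAFE_METHODS = {"GET", "HEAD", "OPTIONS"}


def load_target(env=None) -> dict:
    """Pick the target environment from an argument or the TEST_ENV variable."""
    name = env or os.environ.get("TEST_ENV", "dev")
    if name not in ENVIRONMENTS:
        raise ValueError(f"unknown environment: {name}")
    return {"name": name, **ENVIRONMENTS[name]}


def runnable_tests(tests: list[dict], target: dict) -> list[dict]:
    """Keep every test, except mutating ones when the target is read-only."""
    if not target["read_only"]:
        return list(tests)
    return [t for t in tests if t["method"] in SAFE_METHODS]


tests = [
    {"name": "list products", "method": "GET", "path": "/products"},
    {"name": "create order", "method": "POST", "path": "/orders"},
]

target = load_target("production")
print(target["base_url"], [t["name"] for t in runnable_tests(tests, target)])
```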

Microservices API Testing Checklist

Use this checklist when evaluating your current strategy or onboarding a new service:

Service Setup

- ✔ OpenAPI/Swagger spec exists and is current

- ✔ Spec is registered in the central spec registry / Shift-Left API

- ✔ All endpoints have response schema definitions

- ✔ Authentication and authorization requirements are documented in the spec

Component Tests

- ✔ All endpoints have happy-path tests

- ✔ All endpoints have negative tests (invalid inputs, boundary values)

- ✔ All downstream dependencies are mocked

- ✔ Tests validate response schema, not just status codes

- ✔ Tests validate response time against SLA

Contract Tests

- ✔ Provider has registered all consumer contracts

- ✔ Contract verification runs in the provider's CI pipeline

- ✔ Can-I-Deploy check is enforced before production deployments

- ✔ Consumers receive notifications of provider breaking changes

Chaos and Resilience

- ✔ Timeout scenarios tested for all external dependencies

- ✔ 503/retry behavior validated

- ✔ Circuit breaker activation verified

- ✔ Malformed response handling validated

CI/CD Integration

- ✔ Tests run on every pull request

- ✔ Quality gates block merge on test failure

- ✔ Test results are published to analytics dashboard

- ✔ Scheduled production monitoring tests are active

Frequently Asked Questions

What is the best API testing strategy for microservices?

The best API testing strategy for microservices combines unit-level service tests, contract tests between consumers and providers, integration tests using mocked dependencies, and end-to-end smoke tests. Shift-left tools that auto-generate tests from OpenAPI specs dramatically reduce the manual effort required across dozens of services.

Why is contract testing important for microservices API testing?

Contract testing ensures that when a provider service changes its API, those changes do not silently break dependent consumer services. In a microservices system with tens or hundreds of services, integration failures are a common source of production incidents, and contract tests catch many of them before deployment.

How do you test microservices in isolation?

You test microservices in isolation by mocking or virtualizing their downstream dependencies. Tools like Shift-Left API generate realistic mock servers directly from OpenAPI specs, allowing each service to be tested independently without standing up its entire dependency graph.

What tools support microservices API testing at scale?

Shift-Left API imports OpenAPI/Swagger specs for each microservice, auto-generates functional and negative test cases using AI, spins up mock servers for dependency isolation, and integrates the full test suite into CI/CD pipelines — supporting teams managing dozens of services simultaneously.

Conclusion

Building a robust API testing strategy for microservices is not a one-time project — it is an ongoing practice that evolves with your architecture. The teams shipping with the most confidence in 2026 are those who have embedded testing into the development workflow from the first line of spec, not bolted it on as a final step before deployment.

The layered strategy described in this guide — spec-first design, component tests with mocked dependencies, consumer-driven contracts, and chaos testing in CI/CD — gives every service team the autonomy to ship fast without creating integration risk for the rest of the organization.

Shift-Left API makes this strategy achievable without a large QA team or deep testing expertise. By importing your OpenAPI specs and letting AI generate the test suite, you get comprehensive coverage in minutes rather than weeks — for every service, every endpoint, every time the spec changes.

The distributed systems that will dominate the next decade are the ones being tested in isolation and in concert today. Start building that foundation now. For the broader quality architecture this fits within, see our DevOps testing strategy guide and our comparison of the best testing tools for microservices.
