Testing in DevOps: Best Practices for Fast Releases (2026)

DevOps testing best practices are proven techniques for embedding quality throughout the software delivery lifecycle. They include shift-left automation, CI/CD quality gates, test pyramid adherence, parallel execution, and metrics-driven improvement to help teams release fast without sacrificing reliability.

DevOps testing best practices are the specific, actionable techniques that allow engineering teams to release software at high frequency without accumulating technical debt, generating frequent production incidents, or requiring heroic manual QA effort. Teams that master these practices can ship multiple times per day, often with higher reliability than teams that deploy weekly with traditional QA processes.

Table of Contents

- Introduction

- What DevOps Testing Best Practices Actually Mean

- Why These Practices Matter for Fast Releases

- Best Practice 1: The Test Pyramid

- Best Practice 2: Automation-First Mindset

- Best Practice 3: Shift Testing Left

- Best Practice 4: Parallel Test Execution

- Best Practice 5: Quality Gates at Every Stage

- Best Practice 6: Fast Feedback Loops

- Best Practice 7: Test Data Management

- Best Practice 8: API Testing as a First-Class Practice

- Pipeline Architecture for Best Practice Implementation

- Tools Supporting DevOps Testing Best Practices

- Real Implementation Example

- Common Challenges and Solutions

- Complete Best Practices Reference

- DevOps Testing Best Practices Checklist

- FAQ

- Conclusion

Introduction

The promise of DevOps is the ability to deploy software faster, more often, and with higher reliability. Most engineering organizations achieve the "faster and more often" part through CI/CD pipeline automation. Far fewer achieve the "higher reliability" part, because high reliability requires mature testing practices that are specifically designed for high-frequency deployment environments.

Traditional testing practices—periodic manual QA, manual regression testing, staging environment reviews—simply do not scale to modern DevOps environments. A team deploying 10 times per day cannot have human testers validate every deployment. A team with weekly releases can afford 3-day QA cycles; a team with daily releases cannot.

This guide covers the DevOps testing best practices that bridge this gap. These are not theoretical principles; they are specific techniques that high-performing engineering teams use to ship both quickly and reliably. Each practice is explained, placed in the context of a DevOps pipeline, and connected to tools that implement it effectively.

What DevOps Testing Best Practices Actually Mean

DevOps testing best practices are not just "write more tests" or "use automation." They are specific decisions about:

- Test architecture: How tests are structured, distributed, and maintained.

- Pipeline integration: Where in the pipeline each test type runs and what triggers it.

- Enforcement: What quality standards code must meet before advancing.

- Feedback design: How quickly and clearly test results reach the developer.

- Ownership: Who is responsible for each type of test.

These decisions compound. A team with the right test architecture but poor feedback design still suffers because developers don't act on results quickly. A team with great feedback but no quality gates allows failures to propagate. The practices deliver their full value only when they work together as a system.

Why These Practices Matter for Fast Releases

Release Frequency Is Inversely Related to Manual Testing Reliance

Teams that rely heavily on manual testing are limited by the availability and throughput of their QA team, so every increase in deployment frequency runs into QA capacity. DevOps testing best practices remove this bottleneck by making routine quality assurance automatic.

Technical Debt Accumulates Without Automated Regression

Every feature added without automated regression coverage increases the risk that future changes will silently break existing functionality. Over time, teams with poor test coverage begin to slow down, not because they write less code, but because more of their time goes to investigating production incidents caused by untested regressions.

Customer Experience Depends on Production Quality

Fast releases are valuable only if the released software works. Production incidents caused by inadequate testing cost more than slow releases: customer churn, support costs, reputational damage, and engineering time in incident response all accumulate. The teams that achieve sustainable fast releases are those that invest in the testing practices that make those releases safe.

Best Practice 1: The Test Pyramid

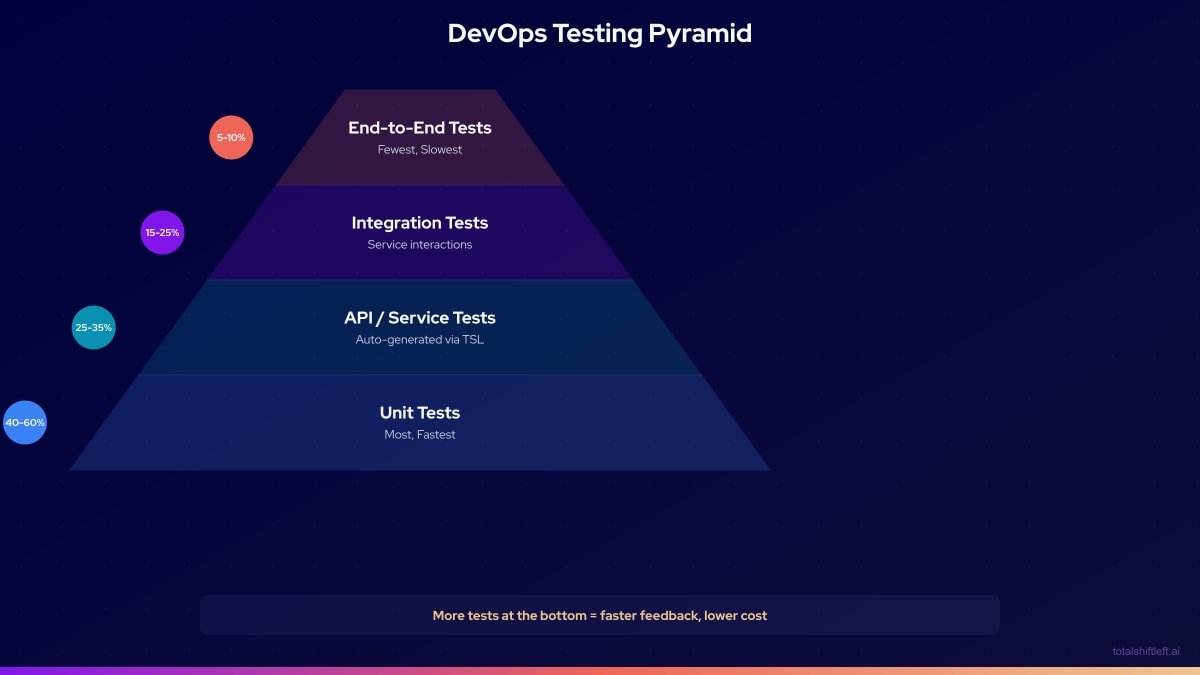

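The test pyramid is the most fundamental structural best practice in DevOps testing. It defines the target distribution of test types across a system: a broad base of fast unit tests, fewer API/service and integration tests in the middle, and a small number of end-to-end tests at the top.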

Why the pyramid shape: Unit tests are fast (milliseconds each), cheap to write, easy to maintain, and provide precise diagnostic information when they fail. End-to-end tests are slow (minutes each), expensive to maintain, brittle, and provide imprecise failure information. A healthy test suite has the most of the fastest and the least of the slowest.

Common anti-patterns:

- The ice cream cone: More end-to-end tests than unit tests. Produces a slow, brittle pipeline.

- The hourglass: Lots of unit tests and end-to-end tests with nothing in the middle. Misses the critical API layer.

- The flat distribution: Equal numbers of all test types, ignoring the performance and maintenance cost differences.

Maintaining the pyramid: Review your test distribution quarterly. If end-to-end tests are growing faster than unit tests, refactor existing end-to-end tests into API tests where the behavior can be validated at that layer.
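Part of the quarterly review can be automated. The sketch below assumes you can already count tests per layer (for example, from your test runners' reports) and checks the distribution against the target ranges listed in this guide's best-practices reference (40-60% unit, 25-35% API/service, 15-25% integration, 5-10% end-to-end). The function name and layer keys are hypothetical.

```python
"""Check a test suite's layer distribution against target pyramid ranges."""

# Target ranges as fractions of the total, taken from this guide's
# best-practices reference. Adjust them to your own policy.
TARGET_RANGES = {
    "unit": (0.40, 0.60),
    "api": (0.25, 0.35),
    "integration": (0.15, 0.25),
    "e2e": (0.05, 0.10),
}


def check_pyramid(counts: dict[str, int]) -> list[str]:
    """Return human-readable problems; an empty list means the shape is healthy."""
    total = sum(counts.values())
    if total == 0:
        return ["no tests found"]
    problems = []
    for layer, (low, high) in TARGET_RANGES.items():
        share = counts.get(layer, 0) / total
        if not low <= share <= high:
            problems.append(f"{layer}: {share:.0%} of tests (target {low:.0%}-{high:.0%})")
    # The "ice cream cone" anti-pattern: more end-to-end tests than unit tests.
    if counts.get("e2e", 0) > counts.get("unit", 0):
        problems.append("inverted pyramid: more end-to-end tests than unit tests")
    return problems


if __name__ == "__main__":
    print(check_pyramid({"unit": 480, "api": 300, "integration": 160, "e2e": 60}))
    print(check_pyramid({"unit": 100, "api": 50, "integration": 50, "e2e": 200}))
```

Running a check like this in a scheduled pipeline job turns "review your test distribution" into a trend you can watch rather than a one-off audit.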

Best Practice 2: Automation-First Mindset

The automation-first mindset means that every test should be automated by default. The burden of proof is on manual testing—any test that is executed manually should have a documented reason why automation is impractical or cost-ineffective.

What Automation-First Covers

- All regression testing must be automated. If a test validates that previously working functionality still works, it should run in the pipeline automatically.

- All API testing must be automated. API regressions are a frequent source of production incidents in microservices architectures, and API tests are among the most straightforward tests to automate.

- All integration testing must be automated. If integration between two services must be verified, that verification must run in the pipeline.

- Performance baselines must be automated. If response time matters, a performance test must run automatically on merges to main.

What Automation-First Does Not Cover

- Exploratory testing is inherently manual—it involves a human tester deliberately trying to find unexpected failures. This is valuable and appropriate as a manual activity.

- Usability testing requires human judgment about the user experience.

- Novel scenario investigation after a production incident may require manual probing.

The key insight is that automation-first dramatically shrinks the scope of necessary manual testing, freeing QA engineers to focus on the genuinely exploratory work that requires human intelligence.

Best Practice 3: Shift Testing Left

Shifting testing left means moving test execution to the earliest feasible stage of the development lifecycle. In a DevOps pipeline context, this means:

- Tests run on every pull request, not just on merge to main.

- API tests are generated from specs and run before the implementation is complete, using mock servers.

- Developer workstations run the same tests that the pipeline runs, so there are no surprises when code is pushed.

- Contract tests validate API designs before any code is written. See our shift left testing strategy guide for the full implementation framework.
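To make "run API tests before the implementation is complete" concrete, here is a minimal sketch using only Python's standard library: a stub server returns a canned response for a hypothetical `GET /orders/42` endpoint, and a client-side check runs against it. In practice teams usually rely on a dedicated mock server generated from the OpenAPI spec; the endpoint, payload, and helper names here are illustrative assumptions.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Canned responses keyed by (method, path). Hypothetical data that would
# normally come from the examples documented in the API spec.
STUBS = {
    ("GET", "/orders/42"): (200, {"id": 42, "customerId": "c-1", "status": "open"}),
}


class StubHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        status, body = STUBS.get(("GET", self.path), (404, {"error": "not found"}))
        payload = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, format, *args):
        pass  # keep test output quiet


def start_stub_server() -> HTTPServer:
    # Port 0 asks the OS for any free port, so parallel CI jobs don't collide.
    server = HTTPServer(("127.0.0.1", 0), StubHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def get_json(url: str):
    # Bypass any proxy settings so the request goes straight to the local stub.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(url) as resp:
        return resp.status, json.loads(resp.read())


if __name__ == "__main__":
    server = start_stub_server()
    base = f"http://127.0.0.1:{server.server_address[1]}"
    status, order = get_json(f"{base}/orders/42")
    assert status == 200 and order["customerId"] == "c-1"
    server.shutdown()
    server.server_close()
    print("contract check against stub passed")
```

When the real service is ready, the same check can point at it instead of the stub, which is what keeps the early tests useful rather than throwaway.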

The economics of shift left are compelling: a defect caught during code review typically needs only another commit to fix. The same defect caught in production requires an incident response, a hotfix deployment, and potentially a rollback. Shifting detection left therefore lowers the average cost of each defect, often by a large margin.

Ready to shift left with your API testing?

Try our no-code API test automation platform free. Generate tests from OpenAPI, run in CI/CD, and scale quality.

Shift-Left API enables shift left API testing by generating comprehensive test suites from OpenAPI specifications. This means API tests can exist and run at the PR stage from the first day of development, not after QA has had time to write them manually.

Best Practice 4: Parallel Test Execution

Pipeline duration is the enemy of fast releases. If your pipeline takes 90 minutes to complete, developers cannot get timely feedback, and deployment frequency is constrained. Parallel test execution dramatically reduces pipeline duration by running independent test suites simultaneously.

How to Parallelize

Within a test stage: Run tests in parallel threads or processes within a single job. Most modern test frameworks support this: JUnit 5 and Jest have built-in parallel execution, and pytest gains it through the pytest-xdist plugin.

Across test stages: Run independent test categories simultaneously. Unit tests, API tests, and security scans can often run in parallel because they have no dependencies on each other.

Across services: In a microservices architecture, tests for independent services can run in parallel rather than sequentially.
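When one suite is split across several parallel jobs, a common approach is to balance shards by historical test duration rather than by test count. The sketch below uses the greedy longest-processing-time heuristic: sort tests from slowest to fastest and always give the next test to the currently lightest shard. The test names and timings are invented; many CI systems and plugins offer built-in timing-based splitting that you would normally use instead.

```python
import heapq


def shard_by_duration(durations: dict[str, float], shards: int) -> list[list[str]]:
    """Split tests into `shards` groups with roughly equal total runtime.

    Greedy longest-processing-time (LPT) heuristic: place the slowest
    remaining test on the shard with the smallest total so far.
    """
    if shards < 1:
        raise ValueError("shards must be >= 1")
    heap = [(0.0, i) for i in range(shards)]  # min-heap of (total_seconds, shard_index)
    groups: list[list[str]] = [[] for _ in range(shards)]
    for name, secs in sorted(durations.items(), key=lambda kv: kv[1], reverse=True):
        total, idx = heapq.heappop(heap)
        groups[idx].append(name)
        heapq.heappush(heap, (total + secs, idx))
    return groups


if __name__ == "__main__":
    timings = {"test_checkout": 120, "test_search": 90, "test_login": 60,
               "test_cart": 60, "test_profile": 30, "test_health": 5}
    for i, group in enumerate(shard_by_duration(timings, 3)):
        print(i, group, sum(timings[t] for t in group))
```

With these timings the three shards take 125, 120, and 120 seconds, versus 365 seconds for a single serial run.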

Target Duration by Stage

| Pipeline Stage | Target Duration | Parallelization Strategy |
|---|---|---|
| Pre-commit (local) | Under 30 seconds | Run only changed test files |
| PR gate (commit → PR) | Under 5 minutes | Parallel unit + API + lint |
| Post-merge (main branch) | Under 15 minutes | Parallel integration suites per service |
| Pre-production (staging) | Under 20 minutes | Parallel regression suites |

The Real Cost of Serial Execution

A pipeline with four stages each taking 15 minutes takes 60 minutes total in serial execution. Parallelized, the same pipeline can run in 15–20 minutes. For a team deploying 10 times per day, this difference represents hours of developer waiting time per day.
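The arithmetic behind that claim, assuming the four stages are fully independent and that running them in parallel adds up to about 5 minutes of scheduling and setup overhead (an illustrative assumption), and that a developer is waiting on each run:

$$
T_{\text{serial}} = 4 \times 15\ \text{min} = 60\ \text{min}, \qquad
T_{\text{parallel}} \approx \max(15, 15, 15, 15)\ \text{min} + \text{overhead} \approx 15\text{--}20\ \text{min}
$$

$$
\Delta T \approx 60 - (15\text{--}20) = 40\text{--}45\ \text{min per run}, \qquad
10\ \tfrac{\text{runs}}{\text{day}} \times (40\text{--}45)\ \text{min} = 400\text{--}450\ \text{min} \approx 6.7\text{--}7.5\ \text{h per day}
$$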

Best Practice 5: Quality Gates at Every Stage

Quality gates are automated enforcement points that block code from advancing to the next pipeline stage if it fails defined quality thresholds. They are the mechanism that makes quality standards non-negotiable.

Where to Place Quality Gates

- PR stage: Block merge if unit tests fail, API tests fail, or code coverage drops below threshold.

- Main branch: Block staging deployment if integration tests fail or security scan finds critical vulnerabilities.

- Staging: Block production deployment if smoke tests fail or regression suite failure rate exceeds threshold.

- Production: Alert and potentially rollback if synthetic monitoring tests fail after deployment.

What Quality Gates Should Enforce

- Test pass rate (100% for unit and API tests is standard)

- Code coverage minimum (commonly 70–80% for new code)

- Security vulnerability threshold (zero critical; configurable for high/medium)

- Performance regression tolerance (commonly less than 10% regression from baseline)

- API response schema compliance (100% for contract tests)
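CI platforms and tools such as SonarQube let you configure gates declaratively, but the underlying logic is simple. Here is a minimal, tool-agnostic sketch that evaluates one build's metrics against the thresholds listed above and returns the reasons for blocking. The metric names, the `BuildMetrics` and `GateResult` types, and the 80% coverage floor are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class BuildMetrics:
    tests_total: int
    tests_passed: int
    new_code_coverage: float   # fraction, e.g. 0.82
    critical_vulns: int
    p95_ms: float              # this build's p95 latency
    baseline_p95_ms: float     # p95 latency of the last accepted build (assumed > 0)
    contract_violations: int


@dataclass
class GateResult:
    passed: bool
    reasons: list[str] = field(default_factory=list)


def evaluate_gate(m: BuildMetrics, min_coverage: float = 0.80,
                  max_perf_regression: float = 0.10) -> GateResult:
    reasons = []
    if m.tests_passed < m.tests_total:
        reasons.append(f"{m.tests_total - m.tests_passed} test(s) failed; 100% pass rate required")
    if m.new_code_coverage < min_coverage:
        reasons.append(f"new-code coverage {m.new_code_coverage:.0%} < {min_coverage:.0%}")
    if m.critical_vulns > 0:
        reasons.append(f"{m.critical_vulns} critical vulnerability(ies) found")
    regression = (m.p95_ms - m.baseline_p95_ms) / m.baseline_p95_ms
    if regression > max_perf_regression:
        reasons.append(f"p95 latency regressed {regression:.0%} (limit {max_perf_regression:.0%})")
    if m.contract_violations > 0:
        reasons.append(f"{m.contract_violations} contract/schema violation(s)")
    return GateResult(passed=not reasons, reasons=reasons)


if __name__ == "__main__":
    result = evaluate_gate(BuildMetrics(
        tests_total=640, tests_passed=640, new_code_coverage=0.83,
        critical_vulns=0, p95_ms=212.0, baseline_p95_ms=200.0,
        contract_violations=0))
    print("PASS" if result.passed else "BLOCK: " + "; ".join(result.reasons))
```

In a pipeline step, a script like this would exit with a non-zero status whenever `passed` is false, which is what actually stops the code from advancing.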

Making Quality Gates Stick

Quality gates only work if they are non-negotiable. The moment a team bypasses a gate for deadline pressure, the gate's authority is undermined. Engineering leadership must commit publicly to quality gate enforcement and back it up when timelines are tight.

Best Practice 6: Fast Feedback Loops

The most carefully constructed test suite is worthless if developers don't receive results quickly. The goal of feedback loop optimization is to minimize the time between a defect being introduced and the developer receiving actionable information about it.

Designing for Fast Feedback

- Test results should appear in the developer's primary workspace. GitHub PR status checks, Slack notifications, and IDE integrations all reduce the friction of checking test results.

- Failure messages must be actionable. A failure message that says "test failed" is useless. A failure message that says "POST /orders returns 500 when the request body is missing the required field customerId" is immediately actionable.

- The most common failure modes should be visible first. Structure test output so that the most diagnostically useful information appears at the top of the report.

- Test results must be available within the PR review workflow. If a reviewer must navigate away from the PR to see test results, many reviewers will skip checking.
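One way to make failures actionable by default is to route API assertions through a small helper that always reports the method, path, scenario, expected and actual status, and the start of the response body. The helper below is a hypothetical sketch, not part of any particular framework.

```python
def assert_status(method: str, path: str, scenario: str,
                  expected: int, actual: int, body: str = "") -> None:
    """Fail with a message a developer can act on without opening logs."""
    if actual != expected:
        snippet = body[:200] + ("..." if len(body) > 200 else "")
        raise AssertionError(
            f"{method} {path} returned {actual} (expected {expected}) "
            f"when {scenario}. Response body: {snippet or '<empty>'}"
        )


if __name__ == "__main__":
    try:
        assert_status("POST", "/orders",
                      "the request body is missing the required field customerId",
                      expected=400, actual=500,
                      body='{"error": "internal server error"}')
    except AssertionError as exc:
        print(exc)
```

Because the scenario is a required argument, every test author is nudged into stating what the test was exercising, which is exactly the context that "test failed" leaves out.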

Feedback Loop Targets

| Trigger | Feedback Target | Acceptable Maximum |
|---|---|---|
| Local commit | Immediate (pre-commit hook) | 30 seconds |
| Push to remote | Within 3 minutes | 5 minutes |
| Pull request opened | Within 5 minutes | 10 minutes |
| Merge to main | Within 15 minutes | 30 minutes |
| Deployment to staging | Within 10 minutes | 20 minutes |

Best Practice 7: Test Data Management

Poor test data management is one of the most underappreciated sources of flaky tests and pipeline failures. Tests that depend on specific data states are brittle—they pass when the data is in the expected state and fail when it is not, for reasons entirely unrelated to the code being tested.

Test Data Principles

- Tests must own their data. Each test should create the data it needs, verify against it, and clean up after itself.

- Tests must be independent. The order of test execution should not affect the outcome of any individual test.

- Never use production data in tests. Production data contains PII, changes unpredictably, and is subject to regulatory constraints. Synthetic or masked data is always preferable.

- Use database seeding for integration tests. Integration tests that require a database should seed it with known data before running and clean up after.

- Mock external data sources in CI. Tests that depend on external APIs or services for data should use mocks in CI to eliminate external variability.
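Here is a minimal sketch of "tests own their data" using Python's built-in `sqlite3` with an in-memory database: each test gets a fresh database, seeds only the rows it needs with unique synthetic values, and the database is discarded afterwards, so execution order cannot affect the outcome. The schema and helper names are hypothetical; against a real database you would typically get the same isolation with per-test transactions that roll back, or with Testcontainers.

```python
import sqlite3
import uuid
from contextlib import contextmanager


@contextmanager
def fresh_db():
    """Yield a brand-new in-memory database; it disappears when closed."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id TEXT PRIMARY KEY, email TEXT UNIQUE)")
    try:
        yield conn
    finally:
        conn.close()


def make_customer(conn) -> str:
    """Create a synthetic customer with unique values (no production data)."""
    cid = str(uuid.uuid4())
    conn.execute("INSERT INTO customers VALUES (?, ?)", (cid, f"{cid}@example.test"))
    return cid


def count_customers(conn) -> int:
    return conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]


def test_create_customer():
    with fresh_db() as conn:
        make_customer(conn)
        assert count_customers(conn) == 1  # sees only its own row


def test_empty_state():
    with fresh_db() as conn:
        assert count_customers(conn) == 0  # unaffected by any other test


if __name__ == "__main__":
    # Run in both orders to show the outcome does not depend on ordering.
    for order in ([test_create_customer, test_empty_state],
                  [test_empty_state, test_create_customer]):
        for t in order:
            t()
    print("both orders passed")
```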

Best Practice 8: API Testing as a First-Class Practice

In a microservices architecture, APIs are the primary integration points between services. A defect at the API layer propagates failure to every consuming service. Yet many teams treat API testing as an afterthought—a few Postman collections that QA runs manually before releases.

API testing must be automated, comprehensive, and running at the earliest pipeline stages. It is not optional in a DevOps environment where services communicate exclusively through APIs.

What Comprehensive API Testing Covers

- Happy path tests: Valid inputs produce expected outputs.

- Error path tests: Invalid inputs, missing required fields, and boundary values produce appropriate error responses.

- Authentication tests: Unauthenticated and improperly authenticated requests are rejected correctly.

- Schema validation: All responses conform to the documented API schema.

- Contract tests: APIs match the contracts that dependent services expect.

- Performance baselines: API response times are within acceptable bounds.
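Schema validation is usually done with a JSON Schema or OpenAPI validator library. To show what such a check does, here is a deliberately simplified, dependency-free sketch that verifies required fields and primitive types in a response body. The `ORDER_SCHEMA` format is a made-up stand-in for a real schema, not OpenAPI syntax.

```python
# Hypothetical simplified schema: field name -> (expected type, required?)
ORDER_SCHEMA = {
    "id": (int, True),
    "customerId": (str, True),
    "status": (str, True),
    "note": (str, False),
}


def schema_errors(body: dict, schema: dict) -> list[str]:
    """Return a list of schema violations; an empty list means the body conforms."""
    errors = []
    for name, (expected_type, required) in schema.items():
        if name not in body:
            if required:
                errors.append(f"missing required field '{name}'")
            continue
        value = body[name]
        # bool is a subclass of int in Python, so reject it explicitly for ints.
        if not isinstance(value, expected_type) or (
            expected_type is int and isinstance(value, bool)
        ):
            errors.append(
                f"field '{name}' should be {expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
    return errors


if __name__ == "__main__":
    print(schema_errors({"id": 42, "customerId": "c-1", "status": "open"}, ORDER_SCHEMA))
    print(schema_errors({"id": "42", "status": "open"}, ORDER_SCHEMA))
```

The same function serves both the happy-path check (expect no errors) and error-path assertions (expect a specific violation), which is why schema checks pair naturally with the other categories above.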

The Shift-Left API Approach to API Testing

Shift-Left API addresses the comprehensive API testing requirement without requiring engineers to write test code. Upload your OpenAPI or Swagger specification, and the platform automatically generates tests covering all of the above categories for every documented endpoint. These tests run via CLI in any CI/CD pipeline and produce standard JUnit output for integration with any test reporting system.
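Because JUnit XML is a de facto standard report format, downstream tooling can treat API test results like any other suite. As an illustration, this sketch uses Python's built-in `xml.etree.ElementTree` to summarize a JUnit-style report (a `<testsuite>` or `<testsuites>` root containing `<testcase>` elements with optional `<failure>`, `<error>`, or `<skipped>` children). The sample XML is invented; real reports carry more attributes, which this sketch ignores.

```python
import xml.etree.ElementTree as ET


def summarize_junit(xml_text: str) -> dict:
    root = ET.fromstring(xml_text)
    summary = {"total": 0, "failed": 0, "errored": 0, "skipped": 0, "failures": []}
    # iter() walks the whole tree, so <testsuite> and <testsuites> roots both work.
    for case in root.iter("testcase"):
        summary["total"] += 1
        name = f"{case.get('classname', '')}.{case.get('name', '')}"
        if case.find("failure") is not None:
            summary["failed"] += 1
            summary["failures"].append(name)
        elif case.find("error") is not None:
            summary["errored"] += 1
            summary["failures"].append(name)
        elif case.find("skipped") is not None:
            summary["skipped"] += 1
    return summary


SAMPLE = """<testsuite name="orders-api" tests="3">
  <testcase classname="orders" name="create_order_happy_path"/>
  <testcase classname="orders" name="create_order_missing_customerId">
    <failure message="expected 400, got 500"/>
  </testcase>
  <testcase classname="orders" name="get_order_unauthenticated"><skipped/></testcase>
</testsuite>"""

if __name__ == "__main__":
    print(summarize_junit(SAMPLE))
```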

For teams with large API surfaces (dozens of services, hundreds of endpoints), manual API test authoring is simply not feasible. Spec-driven generation is the only scalable approach.
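To illustrate the idea behind spec-driven generation (not how Shift-Left API or any other product implements it), the sketch below walks a tiny OpenAPI 3-style document, represented as a Python dict, and enumerates one test case per documented response plus a negative "missing required field" case for each required request-body property. A real generator would parse the full spec and also produce payloads, authentication cases, and schema checks.

```python
# A tiny OpenAPI 3-style fragment as a dict (normally loaded from YAML or JSON).
SPEC = {
    "paths": {
        "/orders": {
            "post": {
                "requestBody": {"content": {"application/json": {"schema": {
                    "type": "object",
                    "required": ["customerId", "items"],
                    "properties": {"customerId": {"type": "string"},
                                   "items": {"type": "array"}},
                }}}},
                "responses": {"201": {"description": "created"},
                              "400": {"description": "invalid request"}},
            },
        },
        "/orders/{id}": {
            "get": {"responses": {"200": {"description": "ok"},
                                  "404": {"description": "not found"}}},
        },
    }
}

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def enumerate_cases(spec: dict) -> list[tuple[str, str, str]]:
    """Return (METHOD, path, case description) tuples derived from the spec."""
    cases = []
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            for status in op.get("responses", {}):
                cases.append((method.upper(), path, f"expect documented status {status}"))
            schema = (op.get("requestBody", {}).get("content", {})
                        .get("application/json", {}).get("schema", {}))
            for field_name in schema.get("required", []):
                cases.append((method.upper(), path,
                              f"omit required field {field_name} -> expect 4xx"))
    return cases


if __name__ == "__main__":
    for case in enumerate_cases(SPEC):
        print(*case)
```

Even this toy version shows why generation scales: every endpoint added to the spec produces new cases without anyone writing test code by hand.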

Pipeline Architecture for Best Practice Implementation

Tools Supporting DevOps Testing Best Practices

| Best Practice | Recommended Tools | Role |
|---|---|---|
| Test Pyramid (Unit) | JUnit, Jest, pytest, Go test | Unit test frameworks |
| Test Pyramid (API) | Shift-Left API, REST Assured, Karate | API test automation |
| Test Pyramid (Integration) | Testcontainers, Docker Compose | Real dependency management |
| Test Pyramid (E2E) | Playwright, Cypress | Browser-level E2E tests |
| Automation-First | GitHub Actions, GitLab CI, Jenkins | Pipeline automation |
| Shift Left | Shift-Left API, Pact | API and contract testing from specs |
| Parallel Execution | pytest-xdist, JUnit parallel, k6 | Parallel test execution |
| Quality Gates | SonarQube, TSL quality gates, GitHub branch protection | Automated enforcement |
| Fast Feedback | Allure, TSL Analytics, Slack CI bots | Result delivery and trending |
| Test Data | Faker, Mockaroo, Testcontainers | Synthetic data generation |
| API Testing | Shift-Left API | Spec-driven test generation |
| Monitoring | Datadog, Checkly, New Relic Synthetics | Production continuous testing |

Real Implementation Example

The Problem

A retail technology company with 25 engineers was experiencing a pattern familiar to many growing teams: deployment frequency was high (3-4 times per week) but production incidents were also high (2-3 incidents per week). Post-incident analysis consistently found that the root cause was API regressions—endpoints that changed behavior without any automated test catching the change.

Their existing pipeline had unit tests on commits and end-to-end tests before production, but nothing in between. The API layer was completely untested in CI.

The Solution

The engineering team implemented the eight best practices described in this guide over two sprints:

Sprint 1:

- Added Shift-Left API to generate API tests from their 8 OpenAPI specifications (resulting in 620 automated API tests).

- Integrated TSL into their GitHub Actions pipeline as a PR gate.

- Configured quality gates requiring 100% API test pass rate before PR merge.

- Parallelized their existing unit tests, reducing unit test execution from 8 minutes to 2 minutes.

Sprint 2:

- Added integration tests using Testcontainers for their three most critical service integrations.

- Implemented Slack notifications for pipeline failures, routing alerts to the relevant team's channel.

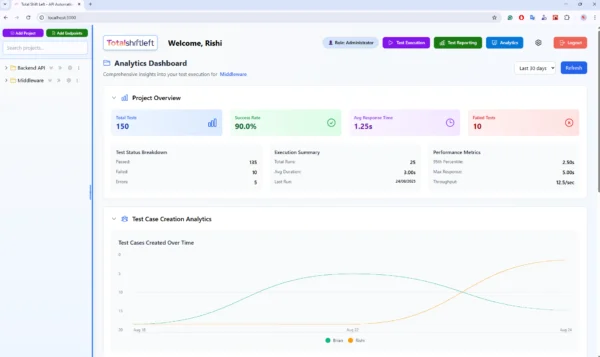

- Added TSL analytics dashboard to their weekly engineering review meeting.

- Created test data management guidelines and refactored 12 flaky tests that were using shared test data.

The Results

Over the 60 days following full implementation:

- Production incidents dropped from 2-3 per week to an average of 0.4 per week—an 83% reduction.

- API regressions caught in the pipeline: 89 over the period, all before reaching production.

- Developer mean time to repair (MTTR) for test failures: 12 minutes (from 2.3 hours for production incidents).

- Team reported higher confidence in deployments and reduced deployment anxiety.

Common Challenges and Solutions

Challenge 1: Tests Take Too Long to Run

Problem: The pipeline takes 45 minutes. Developers push code and then switch tasks, losing context by the time results arrive.

Solution: Audit pipeline duration stage by stage. Identify the slowest stages and parallelize them. Ensure unit tests run in parallel threads. Verify API behavior with API-level tests (for example, with TSL) rather than with slow end-to-end tests. Target under 10 minutes for the PR gate stage.

Challenge 2: Flaky Tests Undermine Gate Effectiveness

Problem: Quality gates that fail occasionally due to flakiness rather than actual code defects cause developers to treat all failures with skepticism, including real failures.

Solution: Track flakiness explicitly. Any test that fails more than once without a corresponding code defect is flaky and must be quarantined and fixed. Use mock servers to eliminate external variability. Treat flaky test resolution as a high-priority engineering task, not a maintenance backlog item.
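Tracking flakiness explicitly can start with a simple heuristic: if the same test both passed and failed on the same commit, the code did not change, so the test (or its environment) is flaky. The sketch below applies that rule to a list of CI results; the `(test_name, commit_sha, passed)` record format is an assumption, and in practice the data would come from your CI history or test-reporting tool.

```python
from collections import defaultdict


def find_flaky(results: list[tuple[str, str, bool]]) -> set[str]:
    """results: (test_name, commit_sha, passed) records.

    Returns the tests that both passed and failed on at least one identical commit.
    """
    outcomes = defaultdict(set)  # (test, commit) -> set of observed outcomes
    for test, commit, passed in results:
        outcomes[(test, commit)].add(passed)
    return {test for (test, _), seen in outcomes.items() if seen == {True, False}}


if __name__ == "__main__":
    history = [
        ("test_checkout", "a1b2c3", True),
        ("test_checkout", "a1b2c3", False),  # same code, different outcome
        ("test_login", "a1b2c3", False),
        ("test_login", "d4e5f6", True),      # fixed by a new commit: not flaky
    ]
    print(find_flaky(history))
```

Feeding the resulting set into a quarantine list keeps flaky tests out of the blocking gate while they are being fixed, so real failures keep their credibility.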

Challenge 3: API Testing Requires Too Much Time to Set Up

Problem: Writing comprehensive API tests manually for a large API surface takes weeks or months of QA engineering time, delaying the benefit of the practice.

Solution: Use spec-driven test generation. Shift-Left API generates comprehensive API tests from OpenAPI specifications in minutes. A team with 10 microservices and existing OpenAPI specs can have 500+ automated API tests running in their pipeline within a day, without any manual test authoring. See our guide on how to build a CI/CD testing pipeline for detailed setup instructions.

Challenge 4: Management Pressure Bypasses Quality Gates

Problem: Under deadline pressure, engineering leadership requests that quality gates be bypassed to ship faster, undermining the entire system.

Solution: This is a leadership problem requiring a leadership solution. Engineering leaders must commit publicly and consistently to quality gate enforcement. The data on the cost of bypassing gates (production incidents, emergency fixes, customer impact) should be made visible and attributed clearly. Document and report every gate bypass and its consequences.

Complete Best Practices Reference

- Follow the test pyramid. Maintain 40-60% unit tests, 25-35% API/service tests, 15-25% integration tests, 5-10% end-to-end tests.

- Automate every regression test. If it tests that something still works, it must run in the pipeline without human initiation.

- Shift testing to the earliest feasible stage. PR stage for unit and API tests; merge stage for integration tests.

- Run independent test suites in parallel. Minimize pipeline duration through parallelization.

- Enforce quality gates at every stage. No code advances without meeting defined quality thresholds.

- Design feedback for developer speed. Results in 5 minutes or less, with actionable failure messages.

- Own your test data. Every test creates and cleans up its own data; no shared state between tests.

- Automate API testing from OpenAPI specs. Use shift left testing tools like Shift-Left API to generate and maintain API tests automatically.

- Track test health metrics. Monitor pass rates, flakiness rates, and coverage trends weekly.

- Make security testing automatic. SAST, dependency scanning, and API security testing run in every pipeline.

- Use mocks for external dependencies. CI tests should not depend on live external services.

- Review the test pyramid quarterly. Actively maintain the distribution; prevent pyramid inversion. Align with your broader DevOps testing strategy goals.

DevOps Testing Best Practices Checklist

- ✔ Test pyramid distribution is within target ranges (unit > API > integration > E2E)

- ✔ All regression tests are automated and run in the CI/CD pipeline

- ✔ API tests run on every pull request trigger

- ✔ API tests generated from OpenAPI/Swagger specs (not hand-coded)

- ✔ Pipeline stages run in parallel where possible

- ✔ PR gate completes within 5-10 minutes

- ✔ Quality gates configured at every pipeline stage

- ✔ Quality gates are non-negotiable (no bypass mechanism)

- ✔ Test failures appear in developer's primary workflow within 5 minutes

- ✔ Failure messages are actionable (not just "test failed")

- ✔ Test data is isolated per test (no shared mutable state)

- ✔ External service dependencies are mocked in CI

- ✔ Security scanning runs in parallel with functional tests

- ✔ Flaky tests are tracked and prioritized

- ✔ Test metrics reviewed weekly by engineering leads

- ✔ Test pyramid distribution reviewed quarterly

- ✔ Post-deployment synthetic monitoring active for critical API paths

Frequently Asked Questions

What are the most important DevOps testing best practices?

The most important DevOps testing best practices are: following the test pyramid (many unit tests, fewer end-to-end tests), automating tests at every pipeline stage, enforcing quality gates that block substandard code, running tests in parallel to minimize duration, and shifting testing left so defects are caught during development rather than after deployment. Together, these practices enable high deployment frequency with high reliability.

How does the test pyramid support fast releases in DevOps?

The test pyramid prioritizes fast, isolated tests (unit tests) at the base and reserves slow, expensive tests (end-to-end) for the top. This distribution keeps the pipeline fast—the PR gate runs in minutes rather than hours—while maximizing coverage. A team with an inverted pyramid (more end-to-end than unit tests) will have a slow pipeline that constrains release frequency regardless of other optimizations.

What is an automation-first testing approach in DevOps?

Automation-first means every test should be automated by default. Manual testing is reserved exclusively for exploratory testing and scenarios that are genuinely impractical to automate. All regression testing, API testing, and integration testing should run automatically in the CI/CD pipeline without human initiation. This principle is what enables testing to scale with deployment frequency.

How do quality gates work in DevOps pipelines?

Quality gates are automated enforcement points in the pipeline that block code from advancing if it fails defined quality thresholds. They enable fast releases by making quality verification automatic: no human review of test results is required. Code either passes all gates and proceeds automatically, or fails and is blocked immediately with actionable feedback. Because no manual sign-off is involved, a passing build waits only for the checks themselves to finish.

Conclusion

DevOps testing best practices are not ideals to aspire to—they are operational requirements for teams that want to release software fast and reliably over sustained periods. Teams that skip or shortcut these practices inevitably face one of two outcomes: they slow down (because accumulated technical debt and production incidents force them to slow deployments) or they continue shipping fast while accumulating production quality debt that eventually results in a major incident.

The eight practices described in this guide form a coherent system. The test pyramid provides the structural foundation. The automation-first mindset ensures every test runs without human intervention. Shift left ensures tests run at the earliest possible stages. Parallel execution keeps the pipeline fast. Quality gates make standards non-negotiable. Fast feedback loops keep developers engaged and productive. Test data management ensures reliability. And API testing as a first-class practice protects the critical integration layer of microservices architectures.

For the API testing component of this system—the layer that most teams underinvest in—Shift-Left API provides immediate, scalable automation. Generate comprehensive API tests from your OpenAPI specifications, run them in your pipeline on every pull request, and trend the results in the analytics dashboard. No test engineering team required, no test code to write or maintain.

Start your free trial and implement API testing best practices in your DevOps pipeline today.

Related: What Is Shift Left Testing | DevOps Testing Strategy | Shift Left Testing Strategy | Best Shift Left Testing Tools | How to Build a CI/CD Testing Pipeline | Shift Left Testing in CI/CD Pipelines | No-code API testing platform | Start Free Trial
