How to Build a Test Automation Framework: Complete Guide (2026)

A test automation framework is a structured set of guidelines, tools, and conventions that define how automated tests are organized, written, executed, and reported. It helps teams standardize test design, integrate testing into CI/CD pipelines, and maintain scalable test suites as applications grow.

A test automation framework is only as good as the decisions made before writing the first test. Teams that skip the design phase end up with a collection of scripts that works in isolation but falls apart under the pressure of real development cycles. This guide covers every step of building a production-grade test automation framework—from defining scope to setting up CI gates—with specific guidance on how Shift-Left API integrates into the API layer for instant, no-code coverage.

Table of Contents

- What Is a Test Automation Framework?

- Why Framework Design Matters

- Key Components of a Test Automation Framework

- Framework Architecture and Structure

- Tool Selection by Layer

- Real Implementation Example

- Common Challenges and Solutions

- Best Practices for Building a Test Automation Framework

- Test Automation Framework Checklist

- FAQ

- Conclusion

Introduction

Ask any QA architect what their biggest regret is, and the answer is nearly always the same: "We started writing tests before we designed the framework." The result is always the same too—a test suite that runs locally but breaks in CI, produces inconsistent reports, has no clear ownership model, and requires heroic effort to maintain as the application grows.

A test automation framework is not a tool. It is an architecture. It defines the conventions, utilities, patterns, and integration points that make it possible for a team of 20 engineers to maintain 5,000 automated tests without the suite becoming a second codebase that nobody wants to touch. This guide gives you the blueprint.

We will walk through six steps: defining scope, choosing framework type, establishing folder structure, setting up reporting, integrating with CI/CD, and building a maintenance model. Along the way, we will show exactly where Shift-Left API fits into the API layer—and why it can cut the time to full API coverage from months to hours.

What Is a Test Automation Framework?

A test automation framework is a structured system that governs how automated tests are written, organized, executed, and maintained. It includes:

- Base libraries: The testing libraries your tests depend on (Jest, PyTest, JUnit, etc.)

- Utilities: Shared helper functions, data factories, and setup/teardown hooks

- Configuration management: Environment variables, configuration files, secrets management

- Reporting: Output formats for CI/CD pipelines and human-readable dashboards

- Integration points: Hooks into CI/CD, issue trackers, and notification systems

- Conventions: Naming standards, folder structure, and code review criteria for tests

Without a framework, each engineer writes tests in their own style, with their own utilities, producing output that cannot be aggregated or compared. With a framework, tests are interchangeable, maintainable, and trustworthy.

Framework Types

Data-Driven Framework: Tests are parameterized by external data sources (CSV, JSON, database). The same test logic runs against multiple data sets. Excellent for API testing where the same endpoint needs validation across many input combinations.

Keyword-Driven Framework: Test logic is abstracted into human-readable keywords. Non-technical stakeholders can understand and contribute to test cases. Common in legacy enterprise environments.

Behavior-Driven Development (BDD): Tests are written in Gherkin (Given/When/Then) syntax. Bridges the gap between product owners and engineering. Popular with Cucumber, Behave, and SpecFlow.

Modular Framework: Tests are composed from reusable modules representing application components. High reusability, lower maintenance. Works well for large applications with repeated UI patterns.

Hybrid Framework: Combines multiple approaches. Most production frameworks are hybrid—they use data-driven patterns for regression, BDD for acceptance tests, and modular patterns for shared utilities.

In 2026, the dominant approach for modern software teams is hybrid with API-first coverage: strong unit testing at the code level, comprehensive API testing at the service level (often using shift left testing tools like Shift-Left API), and focused BDD or modular tests for critical user journeys.
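As a small illustration of the data-driven style described above, the sketch below runs one piece of test logic against rows loaded from CSV text. The `validate_amount` function, the column names, and the sample rows are hypothetical stand-ins for a real API call and its inputs; treat it as a minimal sketch rather than a prescribed implementation.

```python
import csv
import io

# Hypothetical test data; in a real suite this would live in a CSV or JSON file.
CASES_CSV = """amount,currency,expected_valid
10.00,USD,true
0,USD,false
-5.00,EUR,false
25.50,XXX,false
"""

SUPPORTED_CURRENCIES = {"USD", "EUR"}


def validate_amount(amount: float, currency: str) -> bool:
    """Hypothetical system under test: accept positive amounts in supported currencies."""
    return amount > 0 and currency in SUPPORTED_CURRENCIES


def load_cases(text: str) -> list[dict]:
    """Parse CSV rows into typed test cases."""
    cases = []
    for row in csv.DictReader(io.StringIO(text)):
        cases.append({
            "amount": float(row["amount"]),
            "currency": row["currency"],
            "expected": row["expected_valid"] == "true",
        })
    return cases


def run_cases(cases: list[dict]) -> list[dict]:
    """Run the same check against every data row and return the rows that failed."""
    return [
        case for case in cases
        if validate_amount(case["amount"], case["currency"]) != case["expected"]
    ]


if __name__ == "__main__":
    failures = run_cases(load_cases(CASES_CSV))
    print(f"{len(failures)} failing case(s)")
```

Adding a new input combination then means adding a data row, not writing another test function.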

Why Framework Design Matters

Maintenance Is the Hidden Cost

A widely cited figure attributed to the IBM Systems Sciences Institute holds that fixing a bug in production can cost up to 100x more than fixing it early in development. A similar dynamic applies to test frameworks: a poorly designed framework can cost 5–10x more to maintain than one designed with deliberate conventions. The maintenance tax compounds over time, and by year two a poorly designed framework can consume more engineering hours than it saves.

Consistency Enables Scale

A team of 5 can manage chaos. A team of 50 cannot. As engineering organizations grow, consistent conventions become essential. When every test follows the same patterns, any engineer can read, debug, and extend any test without a learning curve.

Framework Quality Determines CI/CD Speed

The speed of your CI/CD pipeline is largely determined by how well your test framework is designed. Parallel execution requires tests to be independent. Fast feedback requires tests to be categorized by speed. Clean reporting requires tests to produce structured output. None of these happen automatically—they are designed into the framework from the start.


According to DORA research, high-performing software delivery organizations aim for automated test feedback in under 10 minutes on every pull request. That target is very hard to reach without a framework explicitly designed for speed and parallelism, and it is a cornerstone of a shift left testing approach.

Key Components of a Test Automation Framework

Component 1: Test Organization

Tests should be organized by layer, feature, and type—not by the person who wrote them or when they were written. A clear folder structure makes it obvious where any new test belongs and prevents duplication.

Component 2: Shared Utilities

Every test suite develops repeated patterns: creating test users, setting up authentication tokens, seeding databases, cleaning up after tests. These should live in shared utility modules, not duplicated across individual test files. Duplication in test code is as problematic as duplication in production code.

Component 3: Configuration Management

Tests run in multiple environments: local development, CI, staging, production. Configuration—base URLs, credentials, feature flags—must be environment-specific and managed through environment variables, never hardcoded. A configuration module should provide a consistent interface regardless of environment.
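One way to provide that consistent interface is a single loader that reads environment variables and fails fast on missing values. The sketch below is illustrative only: the variable names (`TEST_ENV`, `BASE_URL`, `API_TOKEN`, `TEST_TIMEOUT_SECONDS`) and the default URLs are assumptions, not conventions from any particular tool.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional

# Hypothetical defaults per environment; real values belong in your own setup.
DEFAULT_BASE_URLS = {
    "local": "http://localhost:8080",
    "ci": "http://api:8080",
    "staging": "https://staging.example.com",
}


@dataclass(frozen=True)
class FrameworkConfig:
    environment: str
    base_url: str
    api_token: str
    timeout_seconds: float


def load_config(env: Optional[Mapping[str, str]] = None) -> FrameworkConfig:
    """Build the configuration from environment variables (names are assumptions)."""
    env = os.environ if env is None else env
    environment = env.get("TEST_ENV", "local")
    if environment not in DEFAULT_BASE_URLS:
        raise ValueError(f"Unknown TEST_ENV: {environment!r}")
    token = env.get("API_TOKEN", "")
    if environment != "local" and not token:
        # Fail fast instead of letting every test fail with an auth error later.
        raise ValueError("API_TOKEN must be set outside local development")
    return FrameworkConfig(
        environment=environment,
        base_url=env.get("BASE_URL", DEFAULT_BASE_URLS[environment]),
        api_token=token,
        timeout_seconds=float(env.get("TEST_TIMEOUT_SECONDS", "10")),
    )
```

Tests import `load_config()` and never read `os.environ` directly, so switching environments is a matter of changing variables, not test code.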

Component 4: Reporting Infrastructure

CI/CD systems consume structured test output (JUnit XML, TAP format, JSON). Human reviewers need readable dashboards. A framework should produce both. Additionally, trend reporting—tracking test pass rates, execution times, and flakiness over time—is essential for managing framework health.
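To make the "produce both" idea concrete, here is a minimal sketch that renders the same results as JUnit-style XML and as a one-line summary. The `CaseResult` type is hypothetical, and the element and attribute names follow the commonly used JUnit XML layout; check which attributes your CI system actually expects before relying on it.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from typing import Optional


@dataclass
class CaseResult:
    suite: str
    name: str
    seconds: float
    failure_message: Optional[str] = None  # None means the test passed


def to_junit_xml(suite_name: str, results: list[CaseResult]) -> str:
    """Render results as a single JUnit-style <testsuite> element."""
    failures = [r for r in results if r.failure_message is not None]
    suite = ET.Element("testsuite", {
        "name": suite_name,
        "tests": str(len(results)),
        "failures": str(len(failures)),
        "time": f"{sum(r.seconds for r in results):.3f}",
    })
    for r in results:
        case = ET.SubElement(suite, "testcase", {
            "classname": r.suite,
            "name": r.name,
            "time": f"{r.seconds:.3f}",
        })
        if r.failure_message is not None:
            ET.SubElement(case, "failure", {"message": r.failure_message})
    return ET.tostring(suite, encoding="unicode")


def to_summary(results: list[CaseResult]) -> str:
    """Render a one-line human-readable summary of the same results."""
    failed = sum(1 for r in results if r.failure_message is not None)
    return f"{len(results) - failed} passed, {failed} failed"
```

In practice most runners emit this format themselves; a helper like this is mainly useful for custom checks that need to show up alongside regular test results.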

Component 5: Data Management

Test data is the most underestimated challenge in automation framework design. Tests that depend on shared, mutable data are brittle and non-deterministic. A robust framework provides:

- Data factories: Functions that create valid test data programmatically

- Setup fixtures: Pre-test state initialization

- Teardown routines: Post-test cleanup to restore known state

- Isolation guarantees: Each test runs against its own data, not shared state
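The four pieces in the list above can be sketched together in a few lines. `InMemoryUserStore` is a hypothetical stand-in for whatever database or API your tests create data in, and the field names are illustrative assumptions.

```python
import uuid
from contextlib import contextmanager
from typing import Iterator


class InMemoryUserStore:
    """Hypothetical stand-in for the database or API the tests would talk to."""

    def __init__(self) -> None:
        self.users: dict[str, dict] = {}

    def insert(self, user: dict) -> None:
        self.users[user["id"]] = user

    def delete(self, user_id: str) -> None:
        self.users.pop(user_id, None)


def make_user(**overrides) -> dict:
    """Data factory: build a valid user with a unique ID, allowing overrides."""
    user_id = str(uuid.uuid4())
    user = {"id": user_id, "email": f"user-{user_id}@example.test", "role": "customer"}
    user.update(overrides)
    return user


@contextmanager
def temporary_user(store: InMemoryUserStore, **overrides) -> Iterator[dict]:
    """Setup fixture with guaranteed teardown: the user exists only inside the block."""
    user = make_user(**overrides)
    store.insert(user)
    try:
        yield user
    finally:
        store.delete(user["id"])  # cleanup runs even if the test body raises
```

Because every call to `make_user()` generates a fresh UUID, two tests running in parallel never compete for the same record, which is what makes the isolation guarantee hold.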

Component 6: CI/CD Integration

A framework that does not run in CI is incomplete. Integration means: triggering tests on the right events (commit, build, merge), failing the pipeline on test failure, reporting results to the PR interface, and notifying the team of failures. This is covered in depth in the API test automation with CI/CD guide.
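A minimal sketch of the first two responsibilities, choosing layers per event and failing the pipeline through the exit code, might look like the following. The event-to-layer mapping is an assumption for illustration, and the runner invocation is left as a placeholder because it depends on your tools.

```python
import sys

# Which layers run on which pipeline event (an assumed mapping; adjust to your pipeline).
LAYERS_BY_EVENT = {
    "commit": ["unit"],
    "build": ["unit", "api"],
    "merge": ["unit", "api", "e2e"],
}


def select_layers(event: str) -> list[str]:
    """Return the test layers to run for a pipeline event."""
    try:
        return LAYERS_BY_EVENT[event]
    except KeyError:
        raise ValueError(f"Unknown pipeline event: {event!r}") from None


def exit_code(failures_by_layer: dict[str, int]) -> int:
    """Return 1 when any layer reported failures so the CI job is marked failed."""
    return 1 if any(count > 0 for count in failures_by_layer.values()) else 0


def main(argv: list[str]) -> int:
    event = argv[1] if len(argv) > 1 else "commit"
    layers = select_layers(event)
    # Placeholder: a real gate would invoke each layer's runner and collect failure counts.
    failures = {layer: 0 for layer in layers}
    print(f"event={event} layers={','.join(layers)}")
    return exit_code(failures)


if __name__ == "__main__":
    sys.exit(main(sys.argv))
```

CI systems generally treat a non-zero exit code as a failed step, so the gate needs nothing more than the return value to block a merge.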

Framework Architecture and Structure

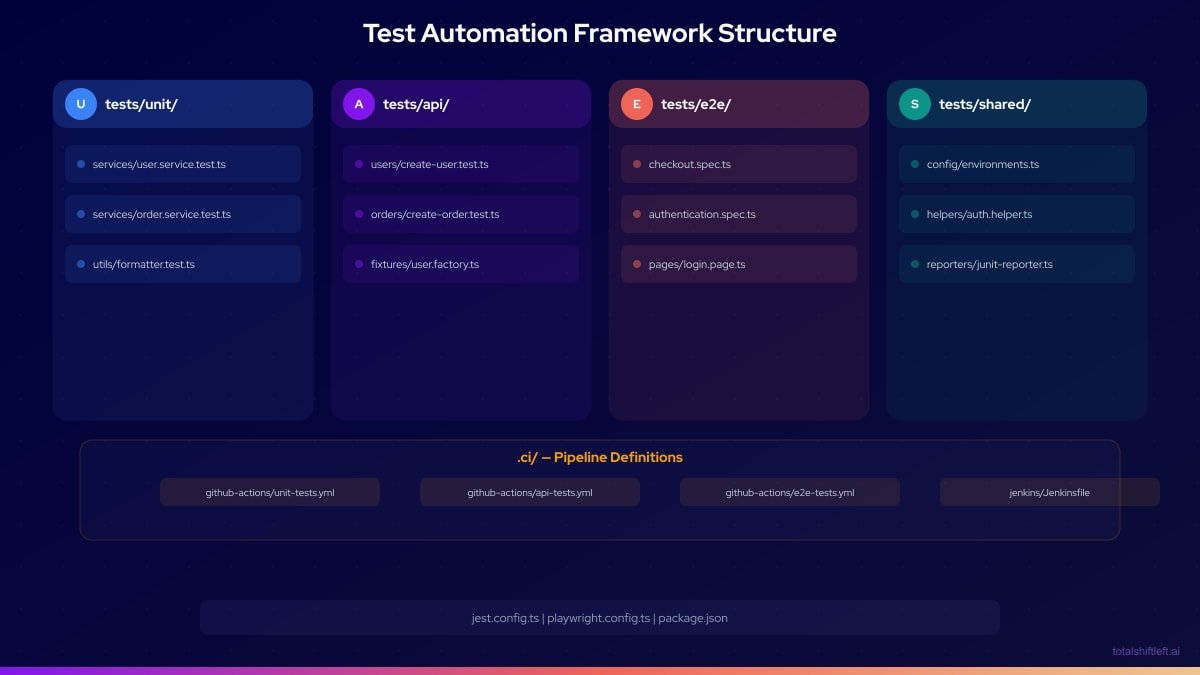

Below is a reference folder structure for a polyglot test automation framework covering unit, API, and E2E layers:
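The listing itself does not appear in this version of the article. A layout consistent with the components described earlier might look like the following; every directory name here is an assumption for illustration, not the authors' original structure.

```text
tests/
├── unit/                 # fast, isolated tests per service
├── api/                  # service-level tests (hand-written or generated from OpenAPI)
├── e2e/                  # critical user journeys only
├── shared/
│   ├── config/           # environment-specific configuration
│   ├── factories/        # test data factories
│   ├── fixtures/         # setup and teardown helpers
│   └── clients/          # authenticated HTTP client wrappers
├── reports/              # JUnit XML and HTML output (not committed)
└── README.md             # framework conventions
```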

For the API layer, Shift-Left API imports your OpenAPI specification and manages test organization automatically. The generated tests connect directly to your CI/CD pipeline, bypassing the need to build and maintain the API test directory structure manually.

Tool Selection by Layer

| Layer | Recommended Tools | When to Use | Notes |
|---|---|---|---|
| Unit (JS/TS) | Jest, Vitest | All JavaScript/TypeScript services | Jest is mature; Vitest is faster for Vite projects |
| Unit (Java) | JUnit 5 + Mockito | Spring Boot, Quarkus | Standard in Java ecosystem |
| Unit (Python) | PyTest + unittest.mock | Django, FastAPI, Flask | Most flexible Python option |
| Unit (.NET) | xUnit + Moq | ASP.NET Core | Preferred by .NET community |
| API (no-code) | Shift-Left API | OpenAPI-documented services | Generates tests from spec automatically |
| API (code-based) | REST Assured | Java API testing | Fluent, readable DSL |
| API (code-based) | SuperTest | Node.js in-process testing | Excellent for Express/Fastify |
| API (code-based) | Requests + PyTest | Python services | Simple and effective |
| Contract | Pact | Microservice consumer contracts | Provider verification built in |
| E2E | Playwright | Modern SPAs and SSR apps | Fast, cross-browser, great API |
| E2E | Cypress | Component + E2E, single browser | Outstanding developer experience |
| Reporting | Allure, HTML Extra | Human-readable dashboards | Integrates with all major runners |
| Performance | k6 | Load and stress testing | CI-friendly, scripted in JS |

Real Implementation Example

Problem

A financial technology startup with 28 engineers was building a REST API-first platform with 9 microservices. They had excellent unit test coverage (82%) but zero API integration tests. Every two-week release required three days of manual API verification by QA engineers. A missed regression in the payment service had reached production twice in six months, costing the company approximately $40,000 in incident response and customer remediation.

Solution

The team implemented a test automation framework in four phases over eight weeks.

Phase 1: Framework scaffolding (week 1)

- Established the folder structure described above

- Created shared configuration module supporting local, staging, and CI environments

- Set up Jest for unit tests and Playwright for the two highest-risk E2E flows

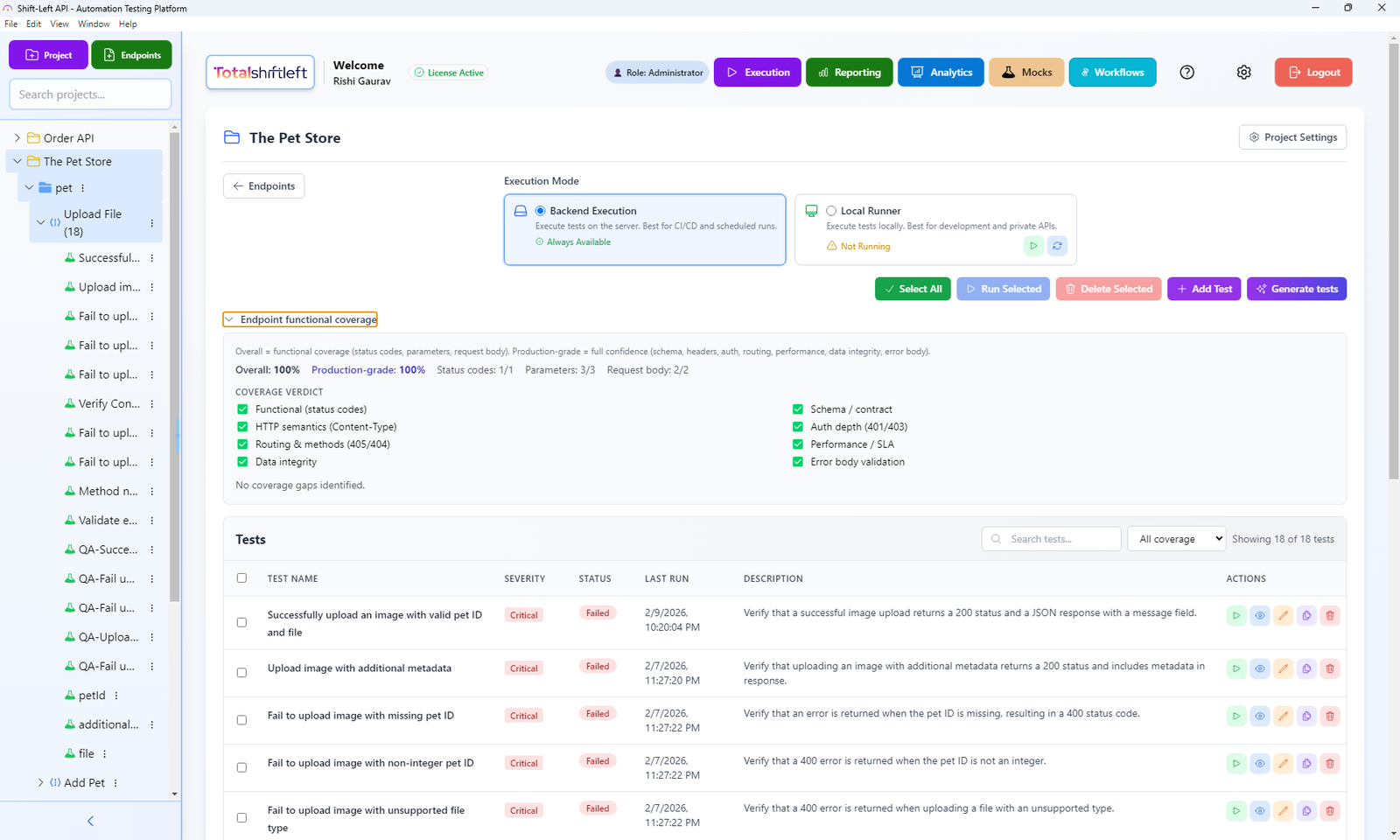

Phase 2: API layer with Shift-Left API (weeks 2–3)

- Imported OpenAPI specifications for all 9 microservices into Shift-Left API

- Auto-generated API tests covering 312 endpoints across all services

- Configured Shift-Left API to run against the staging environment in CI

Phase 3: CI/CD integration (week 4)

- Unit tests run on every commit (2-minute gate)

- API tests run on every build via Shift-Left API's GitHub Actions integration (4-minute gate)

- E2E tests run nightly and pre-release (18-minute suite)

Phase 4: Reporting and observability (weeks 5–8)

- Configured Allure for human-readable test reports

- Set up Slack notifications for pipeline failures

- Built a coverage dashboard using Shift-Left API's analytics to track API test health

Results After 90 Days

- Manual API regression testing eliminated entirely (0 QA hours vs. 3 days per release)

- 312 API endpoints continuously validated in CI (was 0)

- Production incidents from API regressions: 0 (was 2 in the previous six months)

- Release confidence score (internal NPS): increased from 6.2 to 8.7

- Framework maintenance: 4 hours per sprint (budgeted from the start)

- Total setup time for API layer: 11 hours using Shift-Left API (estimated 6 weeks if coded from scratch)

Common Challenges and Solutions

Challenge: Tests are tightly coupled to each other.

Solution: Enforce strict test isolation. Each test must set up its own state and clean up after itself. Use unique identifiers (UUIDs) for test data to prevent collisions between parallel test runs.

Challenge: Framework is only understood by one person.

Solution: Document framework conventions in the repository README. Require code review for all test additions. Run framework orientation sessions for new engineers joining the team.

Challenge: Tests pass in CI but fail in staging.

Solution: Environment-specific failures indicate that tests depend on environment state rather than managed fixtures. Audit each failing test to identify undeclared dependencies on external state.

Challenge: API tests break whenever the API changes.

Solution: If you are maintaining API tests manually, schema changes require manual test updates. Using Shift-Left API with automated spec re-import means tests regenerate automatically when the OpenAPI spec is updated.

Challenge: E2E tests take too long.

Solution: Apply strict criteria for E2E test addition. Only the top 10–15 highest-risk user journeys deserve E2E coverage. Invest in parallel execution using Playwright's built-in sharding. Move everything else to API tests.

Challenge: No visibility into framework health over time.

Solution: Track three metrics monthly: test count by layer, pass rate by layer, and average execution time. Together, these three numbers give a quick read on whether your framework is healthy or deteriorating.
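The three health metrics are straightforward to compute from raw run records. The sketch below assumes a hypothetical `RunRecord` shape and interprets "average execution time" as the per-test average within each layer; adapt both to whatever your runner actually exports.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class RunRecord:
    layer: str       # e.g. "unit", "api", "e2e"
    passed: bool
    seconds: float


def layer_health(records: list[RunRecord]) -> dict[str, dict[str, float]]:
    """Compute test count, pass rate, and average execution time for each layer."""
    grouped: dict[str, list[RunRecord]] = defaultdict(list)
    for record in records:
        grouped[record.layer].append(record)
    summary = {}
    for layer, items in grouped.items():
        summary[layer] = {
            "count": len(items),
            "pass_rate": sum(r.passed for r in items) / len(items),
            "avg_seconds": sum(r.seconds for r in items) / len(items),
        }
    return summary
```

Storing one such summary per month is enough to spot a falling pass rate or a creeping runtime before either becomes a crisis.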

Best Practices for Building a Test Automation Framework

- Design the folder structure before writing any tests. The structure expresses the team's mental model of the application.

- Write a framework README before anything else. Conventions that are not documented are conventions that will not be followed.

- Treat framework utilities as production code. Unit test your test helpers. Review test utilities in code review. Align framework design with your overall test automation strategy.

- Isolate every test completely. No test should depend on another test's execution or on shared mutable state.

- Parameterize everything that varies by environment. Base URLs, credentials, timeouts—all must be configurable.

- Define a maximum acceptable test suite runtime before you start. Unit: 3 minutes. API: 5 minutes. E2E: 20 minutes. Enforce these as SLOs.

- Build the CI integration on day one, not day thirty. Tests that do not run in CI do not exist. Follow our guide on how to build a CI/CD testing pipeline for a step-by-step approach.

- Use the API layer for the majority of integration coverage. It is faster, more stable, and easier to maintain than UI testing. Follow test automation best practices for DevOps for API test design patterns.

- Budget 20–30% of automation effort for framework maintenance. This is not optional.

- Review framework health monthly. If execution time is growing, investigate immediately.
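The runtime limits suggested in the list above can be enforced mechanically rather than by convention. This is a minimal sketch under the assumption that your pipeline can report each layer's wall-clock duration in seconds; the function and constant names are illustrative.

```python
# Budgets in seconds, taken from the limits suggested in the list above.
RUNTIME_BUDGETS = {"unit": 3 * 60, "api": 5 * 60, "e2e": 20 * 60}


def budget_violations(measured_seconds: dict[str, float]) -> list[str]:
    """Return a message for every layer whose suite ran longer than its budget."""
    violations = []
    for layer, seconds in measured_seconds.items():
        budget = RUNTIME_BUDGETS.get(layer)
        if budget is not None and seconds > budget:
            violations.append(f"{layer}: {seconds:.0f}s exceeds budget of {budget}s")
    return violations
```

Running a check like this at the end of each pipeline, and failing or warning when the list is non-empty, turns the budgets into something the team notices as soon as they are crossed.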

Test Automation Framework Checklist

- ✔ Folder structure defined and documented before test writing begins

- ✔ Shared utilities module created for auth, data factories, and HTTP client

- ✔ Configuration module supports local, CI, and staging environments

- ✔ All tests are isolated—no shared mutable state between tests

- ✔ CI/CD pipeline triggers the appropriate test layer at the appropriate event

- ✔ API layer coverage established (use Shift-Left API for no-code generation)

- ✔ Reporting produces JUnit XML for CI and human-readable output for review

- ✔ Framework conventions documented in repository README

Frequently Asked Questions

What is a test automation framework?

A test automation framework is a set of guidelines, libraries, tools, and conventions that define how automated tests are organized, written, executed, and reported. It provides consistency and maintainability across the entire test suite.

What are the main types of test automation frameworks?

The main types are: data-driven (tests driven by external data sets), keyword-driven (tests described in human-readable keywords), behavior-driven (BDD using Gherkin), modular (reusable components), and hybrid (combining multiple approaches).

How long does it take to build a test automation framework?

A basic framework with unit and API testing can be operational in 2–4 weeks. A full framework with E2E, reporting, and CI integration takes 6–12 weeks. Using no-code platforms like Shift-Left API for API testing reduces the API layer setup to hours.

How do you maintain a test automation framework over time?

Treat framework code as production code: review it, refactor it, and track its health metrics. Assign an owner for framework maintenance, budget 20–30% of automation effort for upkeep, and review tool versions quarterly.

Conclusion

Building a test automation framework is a design problem as much as an engineering problem. The decisions you make before writing the first test—folder structure, ownership model, data management, CI integration—determine whether the framework serves the team for years or becomes a burden within months. Start with the API layer, where the ROI is highest and the maintenance cost is lowest. Shift-Left API makes the API layer accessible to any team by generating a complete test suite from your OpenAPI specification in minutes. Start your free trial and build your API coverage foundation today.
