Test Automation Strategy for Modern Software Teams (2026)

A test automation strategy is a plan that defines which tests to automate, at which layer of the testing pyramid, using which tools, and how automation integrates into CI/CD pipelines. It helps teams deliver fast feedback, reduce manual effort, and maintain scalable quality as applications grow.

A well-defined test automation strategy separates teams that ship confidently from those that ship and pray. Without a clear plan, automation investments accumulate technical debt faster than they reduce manual effort—leaving teams with a suite of brittle, hard-to-maintain tests that slow releases rather than accelerating them. This guide lays out a practical, step-by-step approach to building and sustaining a test automation strategy in 2026, covering the automation pyramid, ROI calculation, tool selection, and how platforms like Shift-Left API eliminate the automation barrier at the API layer entirely.

Table of Contents

- What Is a Test Automation Strategy?

- Why Your Automation Strategy Matters

- Key Components of a Test Automation Strategy

- The Automation Pyramid Architecture

- Tool Selection Framework

- Real Implementation Example

- Common Challenges and Solutions

- Best Practices for Test Automation Strategy

- Test Automation Strategy Checklist

- FAQ

- Conclusion

Introduction

In 2026, many software teams ship multiple times per day. Manual regression testing cannot keep up with that cadence. A test automation strategy is no longer optional: it is a prerequisite for staying competitive, and it sits at the heart of any effective shift left testing initiative. Yet Gartner estimates that over 60% of automation initiatives fail to deliver expected ROI, primarily because teams automate without a coherent plan. They pick tools before defining scope, write tests that nobody maintains, and create automation suites that take longer to run than the deployment window they are meant to protect.

This guide gives you a practical framework for avoiding those failure modes. We will cover how to define automation scope, how to balance the testing pyramid, how to calculate ROI before you invest, and how to integrate automation into every phase of your CI/CD pipeline. We will also look at where modern AI-powered platforms change the calculation—particularly for the API layer, which most teams agree is the highest-value automation target but also the hardest to staff for.

What Is a Test Automation Strategy?

A test automation strategy is a documented plan that answers five fundamental questions:

- What tests should be automated, and what should remain manual?

- Where in the testing pyramid should automation investment be concentrated?

- When in the development lifecycle should automated tests run?

- Who owns the automation suite—QA engineers, developers, or a shared model?

- How will the automation suite be maintained as the application evolves?

Without answers to all five questions, automation becomes a collection of scripts rather than a system. A strategy aligns testing investment with business objectives: reducing time-to-market, improving release confidence, and lowering the cost of quality.

A mature test automation strategy also distinguishes between test automation types:

- Functional automation: Verifying that features work as specified

- Regression automation: Ensuring existing functionality is not broken by new changes

- Performance automation: Measuring response times and throughput under load

- Security automation: Scanning for vulnerabilities in APIs and infrastructure

- Contract automation: Verifying API contracts between services

Each type has different tooling requirements, different ownership models, and different points in the pipeline where it belongs.

Why Your Automation Strategy Matters

The Cost of No Strategy

Teams that automate without strategy typically encounter three failure modes:

Automation debt. Tests are written ad hoc, with no consistent patterns or shared utilities. The suite grows fragile. Small application changes break dozens of tests that have nothing to do with the change. Developers begin ignoring red builds, which defeats the entire purpose of automation.

Coverage gaps. Without deliberate planning, teams tend to automate what is easy to automate (UI flows) rather than what is valuable to automate (API contracts and integration points). UI tests are typically 10–20x slower and 3–5x more expensive to maintain than equivalent API tests.

Tool sprawl. Different teams adopt different tools independently. Integration between test results, reporting, and deployment gates becomes impossible. Leadership has no unified view of quality.

The ROI of a Coherent Strategy

According to the World Quality Report 2025, organizations with a documented test automation strategy report:

- 47% reduction in time spent on regression testing

- 38% faster release cycles

- 3.2x higher defect detection rate before production

- 62% reduction in post-release incidents

These numbers represent the difference between teams that treat automation as a discipline versus teams that treat it as a task.

The API Layer: Highest ROI Automation Target

Of all automation investments, API testing consistently delivers the fastest payback. APIs are stable (they change on explicit versioning), fast to execute (milliseconds vs. seconds for UI), and cover the business logic that actually matters. A single API test can validate in 50ms what takes a UI test 30 seconds to verify.

The barrier has historically been that API test automation required programming expertise. Platforms like Shift-Left API remove that barrier by auto-generating API tests from your OpenAPI/Swagger specification—no code required. See also: Why no-code API automation is the future of quality engineering.

Key Components of a Test Automation Strategy

Component 1: Scope Definition

Define automation scope using three criteria:

- Frequency: Tests that run frequently (every commit, every build) are the highest automation priority

- Stability: Automate tests for stable features first; features under active development create high maintenance costs

- Risk: Business-critical paths deserve automation regardless of stability

A practical starting point: take your top 20 most-run manual test cases. Every one of those is an automation candidate.
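
One way to make the three criteria concrete is a simple scoring pass over your manual test cases. The sketch below is illustrative only: the `Candidate` fields, the 1-to-5 scales, and the scoring formula are assumptions chosen for the example, not an established standard.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    runs_per_month: int  # frequency: how often the manual test is executed
    stability: int       # 1 (feature in heavy flux) to 5 (feature is stable)
    risk: int            # 1 (cosmetic) to 5 (business-critical)


def priority(c: Candidate) -> float:
    """Hypothetical score: frequency discounted by instability."""
    score = c.runs_per_month * c.stability / 5
    # Business-critical paths are not discounted for instability
    if c.risk >= 5:
        score = max(score, float(c.runs_per_month))
    return score


def rank(candidates: list[Candidate], top_n: int = 20) -> list[Candidate]:
    """Return the top_n automation candidates, highest priority first."""
    return sorted(candidates, key=priority, reverse=True)[:top_n]


# Made-up sample data
candidates = [
    Candidate("beta widget", runs_per_month=60, stability=1, risk=1),
    Candidate("checkout", runs_per_month=40, stability=2, risk=5),
    Candidate("profile edit", runs_per_month=30, stability=5, risk=2),
]
ranked_names = [c.name for c in rank(candidates)]
```

Here the unstable but business-critical checkout flow still ranks first, while the frequently run but volatile beta feature drops to the bottom.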

Component 2: Ownership Model

The most sustainable ownership model in 2026 is shared ownership with clear boundaries:

- Developers own unit tests — written during development, live in the same repository as code

- QA engineers own integration and API tests — written against stable interfaces, validated at build time

- QA and developers co-own E2E tests — reviewed together, with clear criteria for addition and deletion

Avoid the "QA automation team" anti-pattern, where a separate team owns all automation. This creates a bottleneck, slows feedback, and disconnects automation from the development workflow.

Component 3: Maintenance Budget

Plan to spend 20–30% of automation development time on maintenance. This is not waste—it is the cost of keeping your safety net intact as the application evolves. Teams that do not budget for maintenance accumulate technical debt in the test suite until it collapses under its own weight.

Component 4: Reporting and Observability

Every automated test run should produce structured, actionable output:

- Pass/fail status per test

- Execution time trends (to detect flakiness)

- Coverage metrics by feature area

- Defect detection rate (how many bugs did automation catch before production?)

Platforms like Shift-Left API provide this out of the box through their analytics dashboard, giving teams visibility into API test health without building custom reporting infrastructure.
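
For teams that collect raw results themselves, two of these metrics can be derived from very little data. The following is a minimal sketch under stated assumptions: the tuple layout of `runs` is hypothetical, a test counts as flaky when it both passes and fails on the same commit, and defect detection rate is taken as defects caught before release divided by all known defects.

```python
from collections import defaultdict


def flaky_tests(runs):
    """Return names of tests that both passed and failed on the same commit.

    `runs` is an iterable of (test_name, commit_sha, passed) tuples.
    """
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    return sorted(
        {name for (name, _sha), seen in outcomes.items() if seen == {True, False}}
    )


def defect_detection_rate(caught_before_release: int, escaped_to_production: int) -> float:
    """Share of all known defects that were caught before production."""
    total = caught_before_release + escaped_to_production
    return caught_before_release / total if total else 0.0


# Made-up sample data
runs = [
    ("test_login", "abc123", True),
    ("test_login", "abc123", False),  # same commit, different outcome: flaky
    ("test_cart", "abc123", True),
    ("test_cart", "def456", False),   # different commit: may be a real regression
]
flaky = flaky_tests(runs)
rate = defect_detection_rate(caught_before_release=45, escaped_to_production=5)
```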

Component 5: Integration with CI/CD

Automation that does not run in CI/CD is decoration. Every automated test should be triggered automatically at the appropriate pipeline stage and should gate deployment when failures occur. We cover CI/CD integration in depth in Shift Left Testing in CI/CD Pipelines. For teams building frameworks from scratch, see our guide on how to build a test automation framework.
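
As a rough illustration of stage-appropriate gating, the sketch below maps pipeline stages to the suites that must pass before the pipeline proceeds. The stage names, the `STAGE_SUITES` mapping, and the `gate` function are assumptions made for this example; real pipelines express this in their CI system's own configuration.

```python
# Assumed mapping of pipeline stage to required suites
STAGE_SUITES = {
    "commit": ["unit"],
    "build": ["unit", "api"],
    "release": ["unit", "api", "e2e"],
}


def gate(stage: str, failures_by_suite: dict[str, int]) -> int:
    """Return a process exit code: 0 lets the pipeline proceed, 1 blocks it.

    A required suite that did not report results blocks the stage as well,
    so a silently skipped suite cannot pass the gate.
    """
    required = STAGE_SUITES[stage]
    missing = [s for s in required if s not in failures_by_suite]
    failed = [s for s in required if failures_by_suite.get(s, 0) > 0]
    return 1 if missing or failed else 0
```

A CI step could pass the returned value to `sys.exit()` so that any failure in a required suite stops the deployment.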

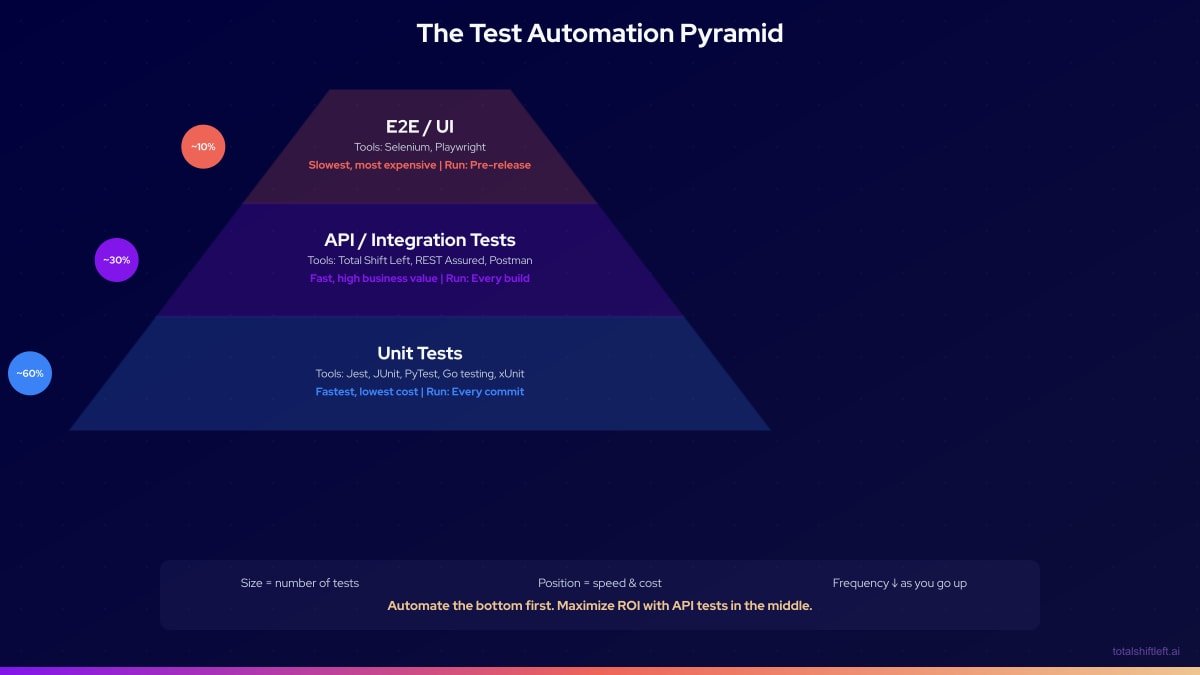

The Automation Pyramid Architecture

The automation pyramid, first described by Mike Cohn, defines the optimal distribution of test types by layer. In 2026, the pyramid has been refined to account for microservices, APIs, and contract testing. Teams building microservices architectures should also review our API testing strategy for microservices for layer-specific guidance.

Unit Layer (60%)

Unit tests validate individual functions, methods, and classes in complete isolation. They run in milliseconds, require no external dependencies, and provide the fastest feedback loop. Every developer should write unit tests as part of the definition of done.

Key characteristics:

- No network calls, no database, no external services

- Mocks and stubs replace dependencies

- Run on every git commit via pre-commit hooks or CI triggers

- Owned entirely by developers
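
To illustrate these characteristics, here is a small unit test that replaces a dependency with a mock. The `order_total` function and its `repository` argument are hypothetical, invented for this example; only the standard library's `unittest` and `unittest.mock` are used.

```python
import unittest
from unittest.mock import Mock


def order_total(order_id, repository, tax_rate=0.2):
    """Sum the line items of an order and add tax.

    `repository` is the external dependency (for example, a database wrapper).
    """
    items = repository.get_items(order_id)
    subtotal = sum(item["price"] * item["qty"] for item in items)
    return round(subtotal * (1 + tax_rate), 2)


class OrderTotalTest(unittest.TestCase):
    def test_total_includes_tax(self):
        # The mock stands in for the repository: no database, no network
        repository = Mock()
        repository.get_items.return_value = [
            {"price": 10.0, "qty": 2},
            {"price": 5.0, "qty": 1},
        ]
        self.assertEqual(order_total(42, repository), 30.0)
        repository.get_items.assert_called_once_with(42)
```

Because the test touches nothing outside the process, it runs in milliseconds and is safe to execute on every commit.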

API/Integration Layer (30%)

This is the highest-ROI automation investment for most teams. API tests validate service contracts, business logic, data transformations, and error handling. They run significantly faster than UI tests (a suite of 500 API tests typically executes in under 2 minutes), and because they exercise business logic directly, they catch many defects before those defects reach users.

Key characteristics:

- Test HTTP endpoints, gRPC services, message queues

- Validate request/response schemas, status codes, business rules

- Run on every CI build (for example, each pull request) rather than on every local commit

- Owned jointly by QA and developers

This is where Shift-Left API operates. By importing your OpenAPI/Swagger specification, the platform automatically generates a comprehensive API test suite covering every endpoint, parameter combination, and error scenario—without requiring anyone to write test code. See how it works in the codeless API testing guide.
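
For teams that write API tests by hand, the checks involved look roughly like the sketch below. It uses only the Python standard library so it runs anywhere; the `FakeUserApi` server, the `/users/<id>` route, and the response fields are hypothetical stand-ins for a real service. In practice the same assertions would usually be written with a test framework against a deployed test environment.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class FakeUserApi(BaseHTTPRequestHandler):
    """Hypothetical stand-in for the service under test."""

    def do_GET(self):
        if self.path == "/users/1":
            body, status = {"id": 1, "email": "a@example.com"}, 200
        else:
            body, status = {"error": "not found"}, 404
        payload = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, *args):  # keep test output quiet
        pass


# Ignore any proxy settings so requests to 127.0.0.1 stay local
_opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def get_json(url):
    """Return (status_code, parsed_body) for a GET request."""
    try:
        with _opener.open(url, timeout=5) as resp:
            return resp.status, json.load(resp)
    except urllib.error.HTTPError as err:
        return err.code, json.load(err)


def run_api_checks():
    server = HTTPServer(("127.0.0.1", 0), FakeUserApi)  # port 0 = any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base = f"http://127.0.0.1:{server.server_port}"
    try:
        status, body = get_json(f"{base}/users/1")
        assert status == 200                   # status code
        assert set(body) == {"id", "email"}    # response schema
        assert isinstance(body["id"], int)

        status, body = get_json(f"{base}/users/999")
        assert status == 404                   # error handling
        assert "error" in body
    finally:
        server.shutdown()
        server.server_close()
    return True
```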

E2E/UI Layer (10%)

End-to-end tests validate complete user journeys through the application stack. They are valuable for high-risk, high-visibility user flows—checkout, authentication, onboarding—but expensive to create, slow to run, and brittle to maintain. Keeping this layer small and focused is the most common challenge for teams with a legacy of heavy UI automation.

Key characteristics:

- Full browser automation (Playwright, Cypress, Selenium)

- Validate user-visible behavior across integrated systems

- Run pre-release or on a scheduled basis, not every build

- High maintenance cost—plan accordingly
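
A quick way to see whether a suite has drifted from the 60/30/10 targets is to compare test counts per layer against them. This is a sketch, not a prescribed method: the `tolerance` of five percentage points and the layer keys are assumptions, and the sample counts are made up.

```python
TARGETS = {"unit": 0.60, "api": 0.30, "e2e": 0.10}  # ratios from the layers above


def pyramid_drift(counts, targets=TARGETS, tolerance=0.05):
    """Return layers whose share of the suite is off target by more than `tolerance`.

    Values are (actual share - target share), rounded to two decimals.
    """
    total = sum(counts.values())
    drift = {}
    for layer, target in targets.items():
        share = counts.get(layer, 0) / total if total else 0.0
        if abs(share - target) > tolerance:
            drift[layer] = round(share - target, 2)
    return drift


balanced = pyramid_drift({"unit": 600, "api": 300, "e2e": 100})
ui_heavy = pyramid_drift({"unit": 200, "api": 100, "e2e": 700})
```

For the UI-heavy sample, the result shows the E2E layer 60 points over target and the unit and API layers well under, which is the "inverted pyramid" shape this section warns about.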

Tool Selection Framework

Choosing automation tools is one of the most consequential decisions in your strategy. The wrong choice creates years of migration pain. Use this framework to evaluate options, and see our best shift left testing tools guide for a focused comparison of platforms designed for early-stage quality.

| Category | Tool | Best For | Notes |
|---|---|---|---|
| Unit Testing (JS/TS) | Jest, Vitest | React, Node.js applications | Fast, great watch mode |
| Unit Testing (Java) | JUnit 5, TestNG | Spring Boot, Java services | Mature ecosystem |
| Unit Testing (Python) | PyTest | Django, FastAPI, ML services | Excellent fixture system |
| Unit Testing (.NET) | xUnit, NUnit | ASP.NET applications | Strong IDE integration |
| API Testing (no-code) | Shift-Left API | OpenAPI-documented APIs | Auto-generates from spec |
| API Testing (code) | REST Assured | Java API testing | Fluent DSL |
| API Testing (code) | SuperTest | Node.js APIs | Built on Superagent |
| API Testing (code) | Requests + PyTest | Python APIs | Simple, flexible |
| Contract Testing | Pact | Microservice contracts | Consumer-driven |
| E2E Testing | Playwright | Modern web apps | Fast, cross-browser |
| E2E Testing | Cypress | Component + E2E | Great DX, limited browser support |
| E2E Testing | Selenium | Legacy browser support | Mature, widely understood |
| Performance | k6, Gatling | Load and stress testing | CI/CD friendly |
| Security | OWASP ZAP | DAST scanning | Open source |

For teams evaluating API testing tools specifically, see Best API Test Automation Tools Compared for a detailed breakdown.

Real Implementation Example

Problem

A mid-size SaaS company with 35 engineers was spending 12 hours per sprint on manual regression testing. Their test suite consisted primarily of UI automation written by a dedicated QA team. The suite took 4 hours to run, was failing randomly 20% of the time due to flakiness, and covered only 45% of their business logic. Their release cadence was two weeks—not because of development velocity, but because of the testing bottleneck.

Solution

They implemented a three-phase strategy:

Phase 1: Establish the foundation (weeks 1–4)

- Audited existing tests and deleted all tests that had not caught a defect in 6 months

- Introduced unit testing standards—every new feature required unit tests before merge

- Selected Jest for frontend, PyTest for backend services

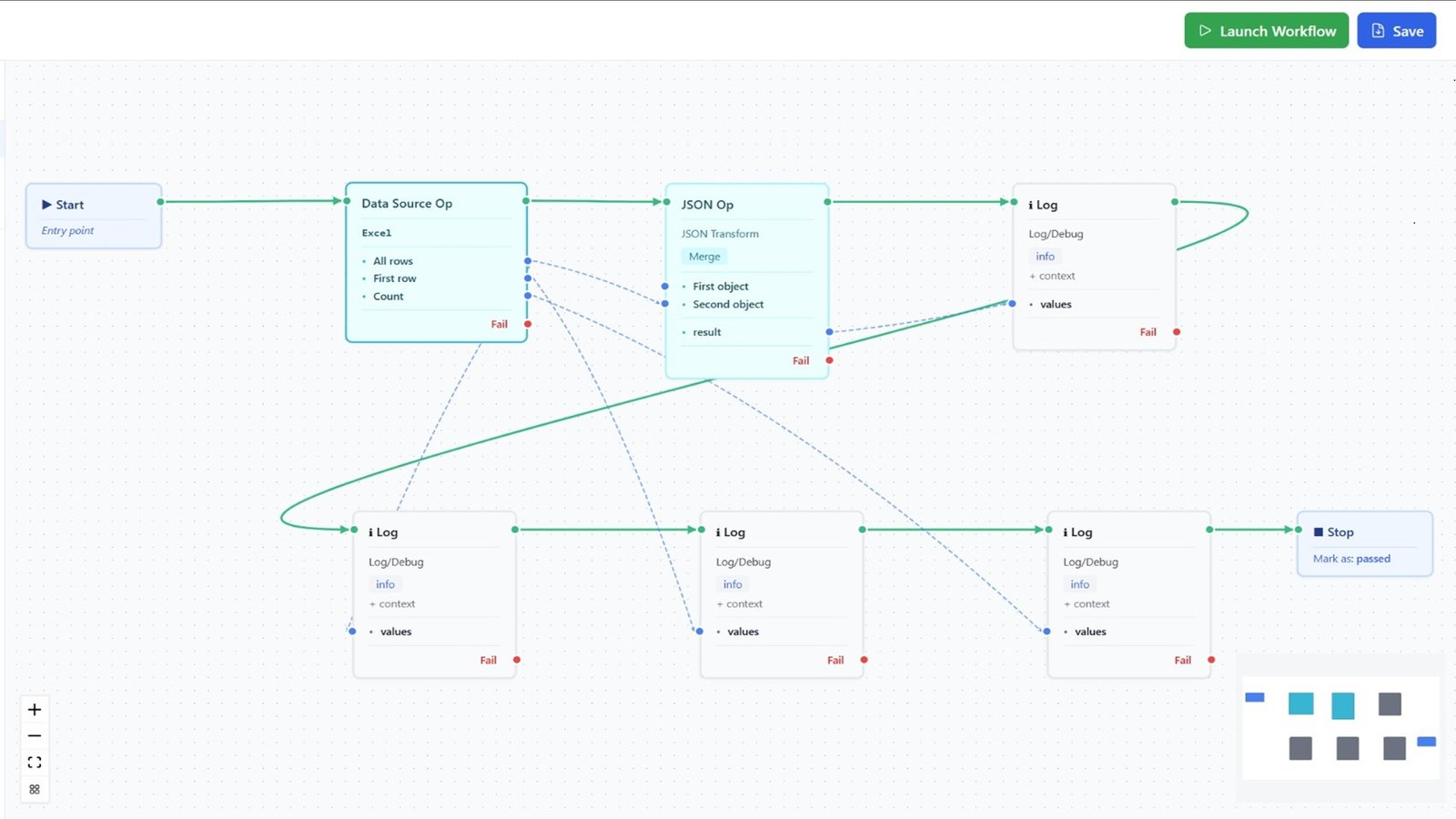

Phase 2: Build the API layer (weeks 5–8)

- Imported their OpenAPI specs into Shift-Left API

- Auto-generated API tests covering all 147 endpoints

- Integrated Shift-Left API into their GitHub Actions pipeline, running on every PR

Phase 3: Refocus E2E tests (weeks 9–12)

- Reduced E2E suite from 340 tests to 80 high-value user journey tests

- Migrated from Selenium to Playwright, reducing execution time from 4 hours to 45 minutes

- Scheduled E2E suite for nightly runs, not blocking PRs

Results After 90 Days

- Regression testing time reduced from 12 hours to 90 minutes per sprint

- API test suite covering 147 endpoints runs in under 3 minutes in CI

- Flakiness reduced from 20% to 2% by following test automation best practices for DevOps

- Production incident rate reduced by 61%

- Release cadence improved from every two weeks to weekly

- Two QA engineers freed up from regression work to focus on exploratory testing

Common Challenges and Solutions

Challenge: Tests pass locally but fail in CI
Solution: Containerize test environments using Docker. Ensure test data is managed programmatically and does not depend on local state. Use environment variables for configuration, never hardcoded values.

Challenge: Test suite grows too slow to provide fast feedback
Solution: Enforce the pyramid rigorously. Run unit tests on every commit (fast). Run API tests on every build (medium). Run E2E only on release branches (slower is acceptable). Use parallel execution to reduce wall-clock time.

Challenge: Flaky tests erode trust in automation
Solution: Track flakiness as a metric. Any test that fails without a code change is flaky and must be fixed or deleted. Implement automatic retry for known infrastructure flakiness (network timeouts) but never for logic failures.
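
The retry rule above can be sketched as a decorator that retries only a fixed set of infrastructure exceptions and lets assertion failures propagate on the first attempt. Which exceptions count as "infrastructure" is an assumption here (`TimeoutError` and `ConnectionError`), as are the names `retry_infra` and `INFRA_ERRORS`.

```python
import functools
import time

INFRA_ERRORS = (TimeoutError, ConnectionError)  # assumed infrastructure failures


def retry_infra(attempts=3, delay_s=0.0):
    """Retry only on infrastructure errors; logic failures are never retried."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            for attempt in range(1, attempts + 1):
                try:
                    return fn(*args, **kwargs)
                except INFRA_ERRORS:
                    if attempt == attempts:
                        raise  # out of retries: surface the failure
                    time.sleep(delay_s)
        return wrapper
    return decorator


network_calls = {"n": 0}
logic_calls = {"n": 0}


@retry_infra(attempts=3)
def flaky_network_check():
    network_calls["n"] += 1
    if network_calls["n"] < 3:
        raise TimeoutError("simulated network timeout")
    return "ok"


@retry_infra(attempts=3)
def logic_check():
    logic_calls["n"] += 1
    assert 1 + 1 == 3  # a genuine logic failure
```

Calling `flaky_network_check()` succeeds on the third attempt, while `logic_check()` raises `AssertionError` after a single call, so a real bug is never masked by retries.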

Challenge: No one owns test maintenance
Solution: Assign test ownership to feature teams, not a central QA team. Tests for a feature are owned by the team building that feature. This aligns incentives: teams maintain tests for code they own.

Challenge: API tests require specialized skills
Solution: Use a platform like Shift-Left API that generates API tests from your OpenAPI specification automatically. This removes the programming barrier and allows QA engineers and product owners to contribute to API test coverage.

Challenge: Test data management across environments
Solution: Use test data factories and reset scripts to create clean state before each test run. Never share mutable test data between test cases. For API testing, Shift-Left API's mock server capability allows tests to run against controlled, predictable data without production dependencies.
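
A test data factory can be as small as a function that builds a fresh record on every call. The sketch below is a minimal illustration; `make_user`, its fields, and `reset_state` are hypothetical names, and a real reset script would typically truncate tables or reload a seed snapshot.

```python
import itertools

_ids = itertools.count(1)


def make_user(**overrides):
    """Build a fresh user record; each call returns a new dict with a unique id."""
    n = next(_ids)
    user = {"id": n, "email": f"user{n}@example.com", "active": True}
    user.update(overrides)
    return user


def reset_state(store):
    """Hypothetical reset hook: empty the shared store before a test run."""
    store.clear()


store = {}
first = make_user()
second = make_user(active=False)
store[first["id"]] = first
first["email"] = "changed@example.com"  # mutating one record...
third = make_user()                     # ...does not leak into later ones
reset_state(store)
```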

Best Practices for Test Automation Strategy

- Define automation criteria before writing tests. Know exactly what qualifies a test for automation before you invest.

- Start with the API layer. It delivers the fastest ROI and covers the most business logic per test.

- Treat test code with the same standards as production code. Code review, refactoring, and documentation apply equally.

- Run automation on every pull request. Feedback that comes after merge is too late to be actionable.

- Measure defect escape rate. If defects are reaching production, your automation is not covering the right scenarios.

- Fix or delete flaky tests immediately. A test that sometimes fails is worse than no test because it trains the team to ignore failures.

- Version control all test artifacts. Tests, test data schemas, and configuration all belong in git.

- Budget 20–30% of automation effort for maintenance. This is not optional; it is the cost of keeping your safety net intact.

- Review coverage metrics monthly. Coverage should increase with every sprint, not stagnate.

- Use parallel execution to keep feedback loops short. A 10-minute test suite is the maximum acceptable wait time for a PR gate.

Test Automation Strategy Checklist

- ✔ Automation scope is documented and reviewed quarterly

- ✔ Testing pyramid distribution targets are defined (unit/API/E2E ratios)

- ✔ Every automated test is triggered in CI/CD at the appropriate stage

- ✔ Test ownership is assigned to feature teams, not a central QA silo

- ✔ API layer tests cover all production endpoints (use Shift-Left API for no-code generation)

- ✔ Flakiness is tracked as a metric with a zero-tolerance policy

- ✔ Maintenance budget (20–30%) is included in sprint planning

- ✔ Automation ROI is measured and reported to stakeholders quarterly

Frequently Asked Questions

What is a test automation strategy?

A test automation strategy is a plan that defines which tests to automate, at which layer of the testing pyramid, using which tools, and how automation integrates into the CI/CD pipeline to deliver fast, reliable feedback.

What percentage of tests should be automated?

A common rule of thumb is to automate 70–80% of your test suite, prioritizing the unit and API/integration layers. Manual testing should be reserved for exploratory, usability, and complex scenario testing.

How do you choose the right test automation tools?

Evaluate tools based on language support, CI/CD integration, maintenance overhead, community maturity, and coverage of your stack. For API testing, no-code platforms like Shift-Left API remove the skill barrier entirely.

How do you calculate test automation ROI?

Automation ROI (%) = (Manual testing cost saved - Automation investment) / Automation investment × 100. Teams typically see positive ROI after 3–6 months when the automation suite covers the most frequently run regression tests.
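
As a worked example, the formula translates directly into code. All figures below are made up for illustration, and the `payback_months` helper is an added assumption: it simply divides the upfront cost by the net monthly saving after maintenance.

```python
import math


def automation_roi(manual_cost_saved: float, automation_investment: float) -> float:
    """ROI as a percentage: (savings - investment) / investment * 100."""
    if automation_investment <= 0:
        raise ValueError("automation_investment must be positive")
    return (manual_cost_saved - automation_investment) / automation_investment * 100


def payback_months(monthly_saving: float, upfront: float, monthly_maintenance: float):
    """Months until cumulative net savings cover the upfront cost, or None if never."""
    net = monthly_saving - monthly_maintenance
    if net <= 0:
        return None
    return math.ceil(upfront / net)


# Hypothetical figures: 60,000 saved in manual effort against a 40,000 investment
roi = automation_roi(manual_cost_saved=60_000, automation_investment=40_000)

# Hypothetical figures: 10,000 saved per month, 30,000 upfront, 2,500 monthly upkeep
months = payback_months(monthly_saving=10_000, upfront=30_000, monthly_maintenance=2_500)
```

With these inputs the ROI is 50% and the investment pays back in four months. Including the maintenance cost in the calculation matters, because a suite whose upkeep exceeds its savings never pays back at all.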

Conclusion

A test automation strategy is the difference between automation that accelerates delivery and automation that becomes a liability. By grounding your strategy in the testing pyramid, investing heavily in the API layer, and maintaining disciplined ownership and maintenance practices, you can build an automation suite that earns trust and grows in value over time. Shift-Left API makes the API layer accessible to every team by auto-generating tests from your OpenAPI specification—no coding required. Start your free trial and automate your first 100 API tests today.

Related: What Is Shift Left Testing? Complete Guide | Shift Left Testing Strategy | DevOps Testing Strategy | How to Build a CI/CD Testing Pipeline | Best Shift Left Testing Tools | API Testing Strategy for Microservices | No-code API testing platform | Start Free Trial

Ready to shift left with your API testing?

Try our no-code API test automation platform free.