Smoke Testing vs Regression Testing: Key Differences (2026)

Smoke testing and regression testing are two testing strategies used at different pipeline stages. Smoke testing runs quick checks to verify a build is stable enough for further testing, while regression testing runs comprehensive suites to confirm existing features still work after code changes.

Smoke testing and regression testing are two of the most frequently confused testing concepts in software engineering. Teams that use the terms interchangeably end up either running too few tests after deployment (treating a smoke suite as if it were regression coverage) or waiting too long in CI for results (running a full regression suite where a smoke test would suffice). This guide provides precise definitions, a comparison table, clear CI/CD placement guidance for both types, and explains how Shift-Left API enables automated API regression testing at the speed required for continuous delivery.

Table of Contents

- What Is Smoke Testing?

- What Is Regression Testing?

- Why the Distinction Matters

- Key Characteristics of Each Type

- CI/CD Pipeline Placement Architecture

- Smoke vs. Regression Testing: Comparison Table

- Real Implementation Example

- Common Challenges and Solutions

- Best Practices for Smoke and Regression Testing

- Testing Strategy Checklist

- FAQ

- Conclusion

Introduction

The terminology distinction matters in practice because smoke tests and regression tests have fundamentally different purposes, scopes, and CI/CD placements. Using a regression suite where a smoke test is needed wastes 10–30 minutes before the team can verify that a deployment even succeeded. Using a smoke test where a regression suite is needed leaves entire categories of defects undetected until production.

Smoke testing answers the question: "Is this build worth testing further?" Regression testing answers the question: "Has anything that was working before stopped working now?" These are different questions, requiring different scopes, different test counts, and different pipeline positions.

This guide clarifies both, explains where each belongs in your CI/CD pipeline, and shows how Shift-Left API makes API regression testing fast enough to run continuously in CI, closing the gap that many teams leave between deployment verification and comprehensive regression coverage. For a broader view of how testing fits into the delivery lifecycle, see our guide "What Is Shift Left Testing".

What Is Smoke Testing?

Smoke testing, also called "build verification testing" and sometimes loosely called "sanity testing," is a minimal set of tests designed to verify that the most critical functionality of a build or deployment works at a basic level. The name comes from hardware testing: if you power on a circuit and it does not emit smoke, it is worth testing further.

A smoke test suite is intentionally small. It does not attempt comprehensive coverage. Its sole purpose is to determine whether the application is stable enough to invest further testing resources.

What Smoke Tests Validate

- The application starts and is reachable (health check endpoint returns 200)

- The most critical user path is minimally functional (user can log in, core feature loads)

- Critical API endpoints respond correctly (not 500 errors, not timeouts)

- Database connectivity is healthy (core read/write operations succeed)

- Third-party dependencies critical for basic operation are reachable

What Smoke Tests Do NOT Validate

- Comprehensive business logic correctness

- Edge cases and error handling

- All API endpoints

- Performance under load

- Regression: whether functionality that worked before still works

Smoke Test Characteristics

- Count: Small—typically 10–30 tests covering critical paths only

- Execution time: Under 5 minutes (preferably under 2)

- Trigger: Every deployment to any environment

- Failure behavior: Stop all further testing; notify deployment team; do not proceed

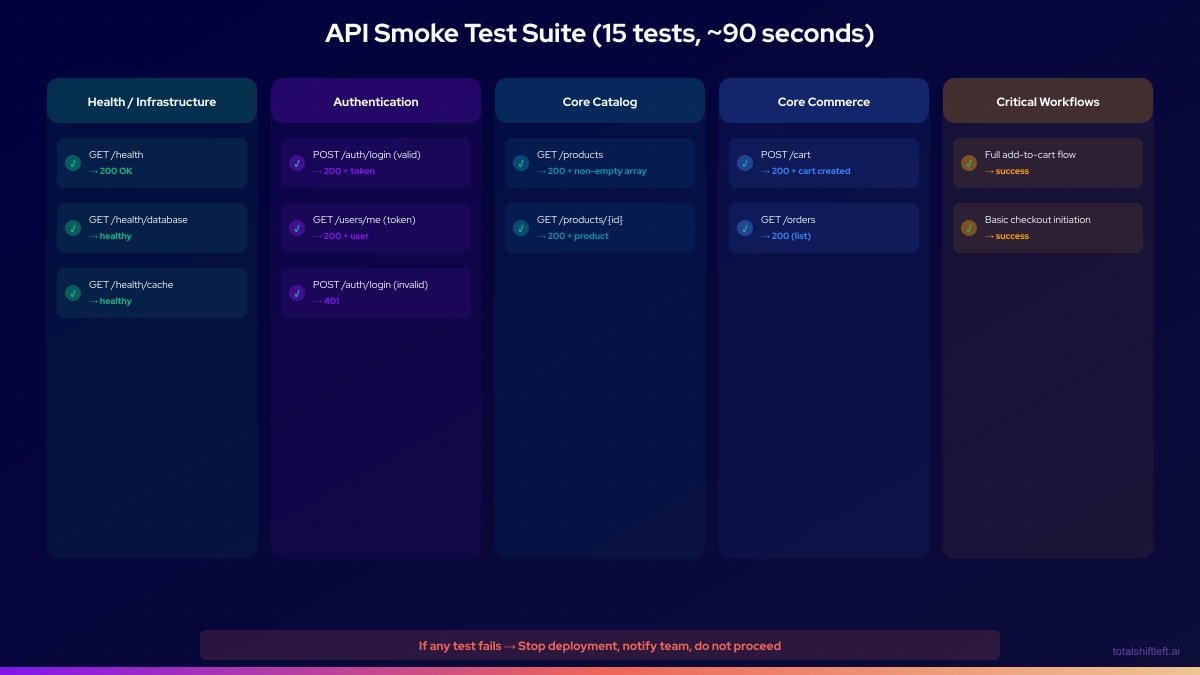

Example Smoke Test Suite for an E-commerce API

If any of these 15 tests fail, the deployment has critical issues. Stop testing, notify the team, and do not proceed to regression testing.
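A suite of this kind can be sketched as a short, ordered list of critical-path checks that stops at the first failure. This is a minimal sketch under stated assumptions: the endpoint paths, the expected status codes, and the `send` callable are all hypothetical, stopping at the first failing check is a design choice rather than a requirement, and only a handful of checks are shown rather than a full suite.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# Hypothetical critical-path checks: (name, HTTP method, path, expected status).
SMOKE_CHECKS: List[Tuple[str, str, str, int]] = [
    ("health check", "GET", "/health", 200),
    ("login", "POST", "/auth/login", 200),
    ("list products", "GET", "/products", 200),
    ("view cart", "GET", "/cart", 200),
    ("create order", "POST", "/orders", 201),
]


@dataclass
class SmokeResult:
    passed: bool
    failed_check: Optional[str]  # name of the first failing check, if any
    checks_run: int


def run_smoke(
    checks: List[Tuple[str, str, str, int]],
    send: Callable[[str, str], int],
) -> SmokeResult:
    """Run checks in order and stop at the first failure.

    `send(method, path)` is supplied by the caller and returns the HTTP
    status code; any exception it raises (timeout, refused connection)
    counts as a failure.
    """
    for index, (name, method, path, expected) in enumerate(checks, start=1):
        try:
            status = send(method, path)
        except Exception:
            return SmokeResult(False, name, index)
        if status != expected:
            return SmokeResult(False, name, index)
    return SmokeResult(True, None, len(checks))
```

In a real pipeline, `send` would wrap whatever HTTP client the team already uses; passing it in as a parameter keeps the runner testable without a deployed environment.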

What Is Regression Testing?

Regression testing is a comprehensive validation of all existing functionality to ensure that code changes have not introduced defects in previously working features. Every time code changes—new features added, bugs fixed, dependencies updated—regression tests verify that nothing was broken in the process.

The word "regression" refers to a backwards step: a regression is when something that worked before stops working. Regression testing is the systematic prevention of regressions reaching production.

What Regression Tests Validate

- All previously validated features continue to work correctly

- All API endpoints return correct responses for all defined scenarios

- All business rules are correctly enforced

- Error handling remains correct for all invalid input combinations

- Recently fixed bugs have not reappeared (bug-fix verification)

- Integration points between components remain intact
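To make the list above concrete, here is a minimal sketch of what regression tests for a single business rule might look like. The `order_total` function and the bug it refers to are hypothetical, invented only for illustration; the point is that each test pins behavior that already worked, including one case added after a past fix.

```python
from decimal import Decimal


def order_total(subtotal: Decimal, discount_percent: int) -> Decimal:
    """Hypothetical business rule: apply a percentage discount to a subtotal."""
    if not 0 <= discount_percent <= 100:
        raise ValueError("discount_percent must be between 0 and 100")
    discounted = subtotal * (Decimal(100) - Decimal(discount_percent)) / Decimal(100)
    return discounted.quantize(Decimal("0.01"))


def test_existing_behaviour_still_holds():
    # Previously validated behavior: 25% off 80.00 is 60.00.
    assert order_total(Decimal("80.00"), 25) == Decimal("60.00")


def test_full_discount_is_zero():
    # Pinned after a (hypothetical) earlier bug so it cannot silently return.
    assert order_total(Decimal("19.99"), 100) == Decimal("0.00")


def test_invalid_discount_is_rejected():
    # Error handling is part of regression scope, not just the happy path.
    try:
        order_total(Decimal("10.00"), 101)
    except ValueError:
        return
    raise AssertionError("expected ValueError for a discount above 100")
```

The tests are written as plain functions with bare `assert` statements, so a runner such as pytest would collect them by their `test_` prefix, and they can also be called directly.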

What Regression Tests Do NOT Validate (That Other Test Types Do)

- New, untested features (those need initial validation, not regression validation)

- Deployment success (that is smoke testing's role)

- Performance under load (that is performance testing's role)

- Unknown defect scenarios (that is exploratory testing's role)

Regression Test Characteristics

- Count: Large—typically hundreds to thousands of tests

- Execution time: API regression: 3–10 minutes; Full E2E regression: 15–60 minutes

- Trigger: Every build (API-based), every PR (fast API-only), nightly/pre-release (full E2E)

- Failure behavior: Block deployment; identify which feature/scenario broke; assign for fix

The API Regression Advantage

API regression testing is uniquely well-suited to CI/CD because it is fast enough to run continuously. A comprehensive API regression suite covering 300 endpoints typically runs in 3–6 minutes—within the threshold for a PR gate. This means regressions are caught within minutes of being introduced.

E2E regression suites covering the same functional scope would take 45–90 minutes—too slow for PR gates, appropriate only for nightly runs. By building comprehensive API regression coverage with Shift-Left API, teams can catch the vast majority of regressions within minutes of code change.

Why the Distinction Matters

Different Questions, Different Scope, Different Cost

If you deploy to staging and run your full regression suite before knowing whether the deployment succeeded, you may run 30 minutes of regression tests against a broken deployment that fails on the first smoke check. That is 30 minutes of compute and attention wasted.

Conversely, if you run only smoke tests before deploying to production, you verify that the application starts—not that all the features that were working yesterday are still working today. A regression introduced 3 sprints ago slips through.

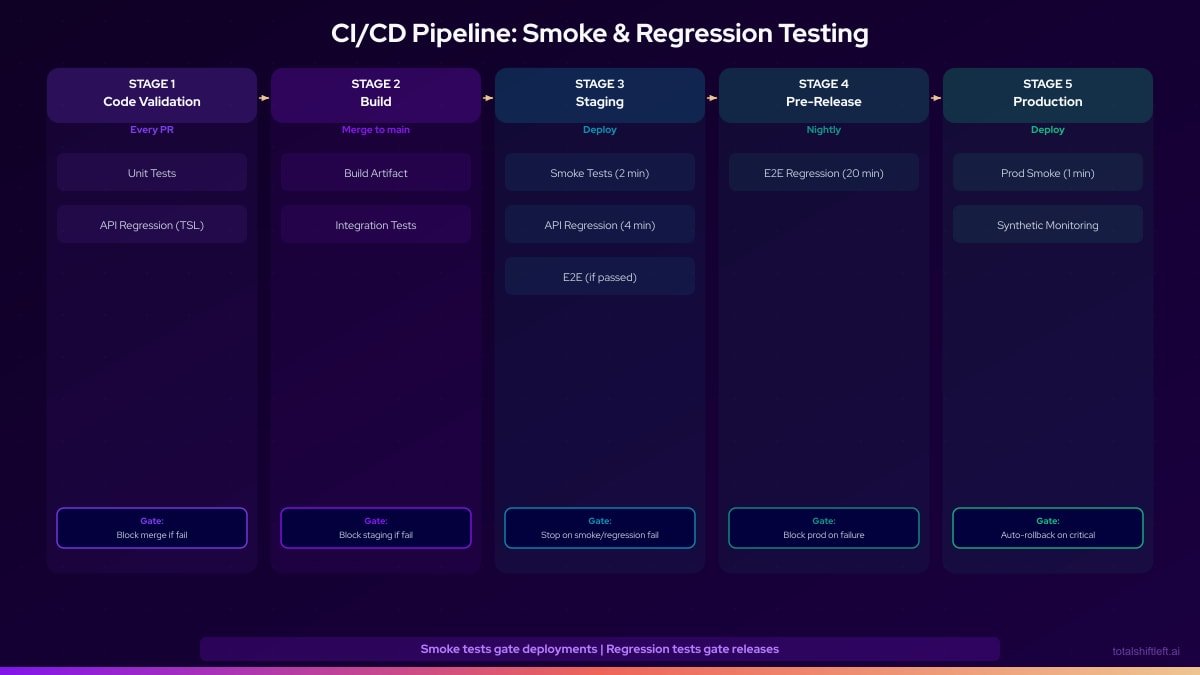

The correct pipeline placement:

- Deploy to environment

- Smoke tests (2 minutes): Is the build stable?

- Regression tests (3–10 minutes for API, nightly for E2E): Has anything regressed?

- Proceed to next stage only if both pass
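The ordering above amounts to a gated sequence: each stage runs only if the previous one passed. A minimal sketch, assuming each stage is represented by a hypothetical callable that returns `True` on success:

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_gated(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first one that fails.

    Returns (all_passed, names_of_stages_that_were_run).
    """
    executed: List[str] = []
    for name, run in stages:
        executed.append(name)
        if not run():
            # A failed gate means later stages never start.
            return False, executed
    return True, executed
```

With stages ordered as smoke first and regression second, a failing smoke stage means the regression suite is never started, which is what saves the wasted run against a broken deployment.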

Cost of Misclassification

Running regression tests instead of smoke tests post-deployment: Wastes 30–90 minutes before knowing if a broken deployment is even worth further testing. Delays rollback decisions.

Running smoke tests instead of regression tests pre-release: Misses regressions. Code changes that broke previously working features proceed to production. Increases change failure rate.

Key Characteristics of Each Type

Smoke Testing

- Purpose: Verify build stability; gate further testing

- Scope: Minimal — critical paths only

- Count: 10–30 tests

- Speed: Under 2 minutes

- When to run: After every deployment

- Failure action: Stop all testing, escalate, investigate deployment

- Maintained by: QA engineers (small, stable suite)

- Examples: Health checks, login validation, core feature availability

Regression Testing

- Purpose: Verify no existing functionality has broken

- Scope: Comprehensive — all features and scenarios

- Count: Hundreds to thousands of tests

- Speed: 3–10 minutes (API), 15–60 minutes (E2E)

- When to run: Every build, every PR (API-based), nightly (E2E)

- Failure action: Block deployment, identify breaking change, assign fix

- Maintained by: QA + developers (large, evolving suite)

- Examples: All API endpoints, all business rules, all error scenarios

CI/CD Pipeline Placement Architecture
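The placement this guide describes can be summarized as a mapping from pipeline event to the suites that run, in order. The sketch below is one way to write that mapping down; the event and suite names are hypothetical labels, not identifiers from any particular CI system.

```python
from typing import Dict, List

# Which suites run for which pipeline event, in execution order.
PLACEMENT: Dict[str, List[str]] = {
    "pull_request": ["api_regression"],
    "build": ["api_regression"],
    "deployment": ["smoke", "api_regression"],
    "nightly": ["e2e_regression"],
    "pre_release": ["smoke", "api_regression", "e2e_regression"],
}


def suites_for(event: str) -> List[str]:
    """Return the ordered suites for a pipeline event."""
    try:
        return list(PLACEMENT[event])  # copy so callers cannot mutate the table
    except KeyError:
        raise ValueError(f"unknown pipeline event: {event!r}") from None
```

Keeping the mapping in one place makes the intended ordering explicit: smoke always precedes regression wherever both run, and the slow E2E suite never appears on the per-PR or per-deployment path.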

Smoke vs. Regression Testing: Comparison Table

| Dimension | Smoke Testing | Regression Testing |
|---|---|---|
| Primary purpose | Verify build stability | Verify no functionality regressed |
| Scope | Minimal (critical paths) | Comprehensive (all features) |
| Typical test count | 10–30 tests | Hundreds to thousands |
| Execution time | Under 2 minutes | 3–10 min (API), 15–60 min (E2E) |
| CI/CD trigger | After every deployment | Every build/PR (API), nightly (E2E) |
| Failure response | Stop all testing, investigate deployment | Block release, identify regression |
| Maintenance overhead | Low (stable, rarely updated) | Medium to high (grows with feature count) |
| Who maintains | QA engineers | QA engineers + developers |
| Automatable | Yes (critical path API checks) | Yes (TSL auto-generates API regression) |
| False positive risk | Low (minimal scope, stable) | Medium (large suites, flakiness) |

Real Implementation Example

Problem

A payments processing company with 15 engineers had no formal distinction between smoke testing and regression testing. After every deployment to staging, they ran their entire 400-test E2E suite—which took 110 minutes. On 3 of 4 deployments per week, they discovered midway through the E2E run that the deployment itself had failed (a database migration issue, a misconfigured environment variable, a missing secret). This meant 110 minutes were spent testing a broken deployment before the team even knew it was broken.

Meanwhile, their API regression coverage was zero. Business logic regressions that could have been caught in 4 minutes of API testing were taking 110 minutes to surface in the E2E suite—and sometimes not surfacing at all, slipping through to production.

Solution

Phase 1: Smoke test suite (week 1)

- Created a 20-test smoke suite covering health endpoints, authentication, and the 5 most critical payment workflows

- Suite runs in under 90 seconds against any deployed environment

- Integrated as the first stage after any staging deployment

- Smoke failure = immediate deployment rollback investigation

Phase 2: API regression suite with Shift-Left API (weeks 2–4)

- Imported OpenAPI specification for the payment service (183 endpoints)

- Auto-generated 380 API regression tests covering all endpoints

- Integrated into CI: runs on every PR (3.5 minutes) and post-deployment to staging
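The general idea of deriving regression checks from an OpenAPI specification can be illustrated with a few lines that walk the spec's `paths` object and plan one check per operation. This is only a sketch of the concept and makes no claim about how Shift-Left API works internally; the function name and the choice to use the first documented 2xx status as the expected result are assumptions.

```python
from typing import Any, Dict, List, Tuple

# Operation keys defined for an OpenAPI path item.
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def planned_checks(spec: Dict[str, Any]) -> List[Tuple[str, str, int]]:
    """Return (METHOD, path, expected_status) for each documented operation."""
    checks: List[Tuple[str, str, int]] = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            # Keep only concrete numeric 2xx codes; ignore "default" or "2XX".
            codes = sorted(
                str(code)
                for code in operation.get("responses", {})
                if str(code).isdigit() and str(code).startswith("2")
            )
            if codes:
                checks.append((method.upper(), path, int(codes[0])))
    return checks
```

A real generator would also produce request bodies, negative cases, and schema assertions; the sketch only shows why endpoint coverage can follow the spec rather than being written by hand.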

Phase 3: E2E refocus (weeks 5–8)

- Reduced E2E suite from 400 to 80 tests (critical payment flows only)

- E2E suite runtime: 110 minutes → 18 minutes

- E2E suite runs nightly and pre-release, not after every staging deployment

Phase 4: Ordering correction

- Post-staging-deployment: Smoke tests (90 seconds) → API regression (3.5 minutes) → E2E nightly

- Pre-production: Smoke tests → API regression → E2E (on demand) → deploy

Results After 60 Days

| Metric | Before | After |
|---|---|---|
| Time wasted testing broken deployments | ~440 min/week | ~3 min/week |
| Post-deployment test time (staging) | 110 min | 5 min (smoke + API) |
| API regression coverage | 0% | 100% (380 tests) |
| E2E suite runtime | 110 min | 18 min |
| Production regressions in 60 days | 3 | 0 |
| Mean time to detect broken deployment | ~55 min | ~90 seconds |

Common Challenges and Solutions

Challenge: Smoke tests are too comprehensive and too slow

Solution: Enforce a strict rule: smoke tests only cover the paths that must work for any testing to be valid at all. If the app can authenticate, reach the database, and load the core feature, it is smoke-test-passing. Everything else belongs in regression.

Challenge: Regression suite grows without bound and becomes too slow

Solution: Enforce coverage criteria for adding new regression tests. For API regression, use Shift-Left API to auto-generate tests from your OpenAPI spec, so the suite size follows the spec instead of growing through manual accumulation. For E2E regression, set a maximum test count (target: under 150) and delete redundant tests quarterly.

Challenge: Regression tests catch things smoke tests should have caught

Solution: Analyze every regression failure: would a minimal smoke test have caught this? If yes, add a smoke test for the scenario and consider moving the regression test. The smoke suite should evolve to catch the most common deployment failure modes.

Challenge: API regression tests cannot validate everything the E2E suite validates

Solution: This is correct and expected: API regression tests do not cover UI behavior or browser-level interactions. Design your testing strategy to acknowledge this: API regression for logic coverage, targeted E2E for UI workflow coverage.

Challenge: Teams do not know which tests to include in the smoke suite

Solution: Analyze production incidents from the past 6 months. What percentage involved the application not being reachable? What percentage involved authentication failure? What percentage involved the core feature being non-functional? These percentages tell you exactly what your smoke suite should cover.
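The incident analysis described above is a simple tally. A minimal sketch, assuming each past incident has already been labeled with a failure mode (the labels used here are hypothetical):

```python
from collections import Counter
from typing import Dict, Iterable


def failure_mode_shares(incident_modes: Iterable[str]) -> Dict[str, float]:
    """Return each failure mode's share of incidents as a percentage,
    ordered from most to least frequent."""
    counts = Counter(incident_modes)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {mode: round(100 * n / total, 1) for mode, n in counts.most_common()}
```

Fed the labels from six months of incident reports, the highest-ranked modes are the first candidates for smoke checks.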

Best Practices for Smoke and Regression Testing

- Keep smoke tests small and fast. Maximum 30 tests, maximum 2 minutes. If it is more, it is not a smoke test.

- Run smoke tests immediately after every deployment. Not after the full build, not after regression—immediately after deployment.

- Treat smoke test failure as a deployment emergency. Failed smoke tests mean the deployment is broken. Rollback procedures should begin immediately.

- Use API regression for CI gates. API regression tests (via Shift-Left API) are fast enough to run on every PR and every build without blocking developers. See our guide on how to build a CI/CD testing pipeline for step-by-step integration instructions.

- Schedule E2E regression for nightly or pre-release. E2E regression is too slow for CI gates but provides essential user-journey coverage. Apply test automation best practices for DevOps to keep both suites reliable.

- Auto-generate API regression from your OpenAPI spec. Shift-Left API maintains API regression coverage automatically as your API evolves.

- Track regression failure root causes. Distinguish between flaky test failures (infrastructure) and genuine regressions (code changes). Each requires a different response.

- Embed both testing types into your broader DevOps testing strategy. Smoke and regression tests are components of a larger quality system — their placement and triggers should be defined at the strategy level.

- Include bug fix verification in regression. When a bug is fixed, add or update a test that would have caught it. This prevents the same bug from regressing.
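For the root-cause tracking practice in the list above, one common heuristic is to re-run a failed test several times against the same commit and environment: a test that keeps failing points to a genuine regression, while one that passes on any retry points to flakiness. The sketch below encodes that heuristic; the function name and labels are hypothetical, and the result is a triage hint rather than a verdict.

```python
from typing import List


def classify_failure(rerun_passed: List[bool]) -> str:
    """Classify a failed test from the outcomes of its re-runs.

    `rerun_passed` holds one boolean per re-run on the same commit and
    environment: True if that re-run passed, False if it failed again.
    """
    if not rerun_passed:
        raise ValueError("at least one re-run is needed to classify a failure")
    if any(rerun_passed):
        # Same code, different outcome: likely infrastructure or test flakiness.
        return "likely_flaky"
    # Fails every time on the same code: likely caused by the change itself.
    return "likely_regression"
```

Recording the label alongside each failure makes it possible to report flaky and genuine failures separately, so each gets the response it needs.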

Testing Strategy Checklist

- ✔ Smoke test suite covers 10–30 critical paths and runs in under 2 minutes

- ✔ Smoke tests trigger automatically after every deployment to any environment

- ✔ API regression tests run on every PR and every build (use TSL for auto-generation)

- ✔ E2E regression tests run nightly and pre-release (not on every deployment)

- ✔ Smoke test failure stops all further testing immediately

- ✔ API regression tests cover 100% of API endpoints (complete coverage via TSL)

- ✔ Regression suite size is tracked quarterly and pruned of redundant tests

- ✔ Every production bug fix includes a corresponding regression test
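Some checklist items can be enforced mechanically. As one example, the smoke-suite limits used in this guide (at most 30 tests, at most 2 minutes) can be checked with a small guard. This is a sketch with a hypothetical function name; how the test count and runtime are obtained depends on the test runner in use.

```python
from typing import List


def smoke_budget_violations(
    test_count: int,
    runtime_seconds: float,
    max_tests: int = 30,
    max_seconds: float = 120.0,
) -> List[str]:
    """Return a list of human-readable budget violations (empty if none)."""
    violations: List[str] = []
    if test_count > max_tests:
        violations.append(
            f"smoke suite has {test_count} tests (limit {max_tests})"
        )
    if runtime_seconds > max_seconds:
        violations.append(
            f"smoke suite ran for {runtime_seconds:.0f}s (limit {max_seconds:.0f}s)"
        )
    return violations
```

Running a guard like this in CI turns the size and speed limits from a convention into a failing check when the smoke suite starts to drift toward regression scope.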

Frequently Asked Questions

What is the difference between smoke testing and regression testing?

Smoke testing is a small set of tests that verify a build is stable and functional at a high level—it determines whether further testing is warranted. Regression testing is a comprehensive suite that verifies all existing functionality still works correctly after a code change.

When should smoke tests run in a CI/CD pipeline?

Smoke tests should run immediately after every deployment to any environment to verify that the deployment was successful and the application is minimally functional. They are the first quality gate after deployment.

When should regression tests run in a CI/CD pipeline?

Regression tests should run after smoke tests pass, on every build that could have changed application behavior. For fast regression suites (API-based, under 10 minutes), they can run on every PR. For slower suites (E2E-based), they run nightly or pre-release.

What tools support automated API regression testing?

Shift-Left API auto-generates API regression tests from your OpenAPI specification, covering all endpoints and validating that behavior matches the defined contract on every build. The fast execution (typically under 5 minutes for hundreds of tests) makes API regression testing viable as a CI gate.

Conclusion

Smoke testing and regression testing serve distinct, essential roles in the CI/CD pipeline. Both are key components of a mature DevOps testing strategy. Smoke tests verify that deployments succeed and warrant further testing—they must be fast, minimal, and run immediately after every deployment. Regression tests verify that existing functionality has not been broken by recent changes—they must be comprehensive and run continuously throughout the delivery cycle. The gap between these two extremes is filled most effectively by automated API regression testing, which is fast enough for CI gates and comprehensive enough to catch the business logic regressions that matter most. Shift-Left API auto-generates API regression tests from your OpenAPI specification, providing complete API regression coverage in minutes rather than months. Start your free trial and establish automated API regression testing today.
