Shift Left vs Shift Right Testing: Key Differences Explained (2026)

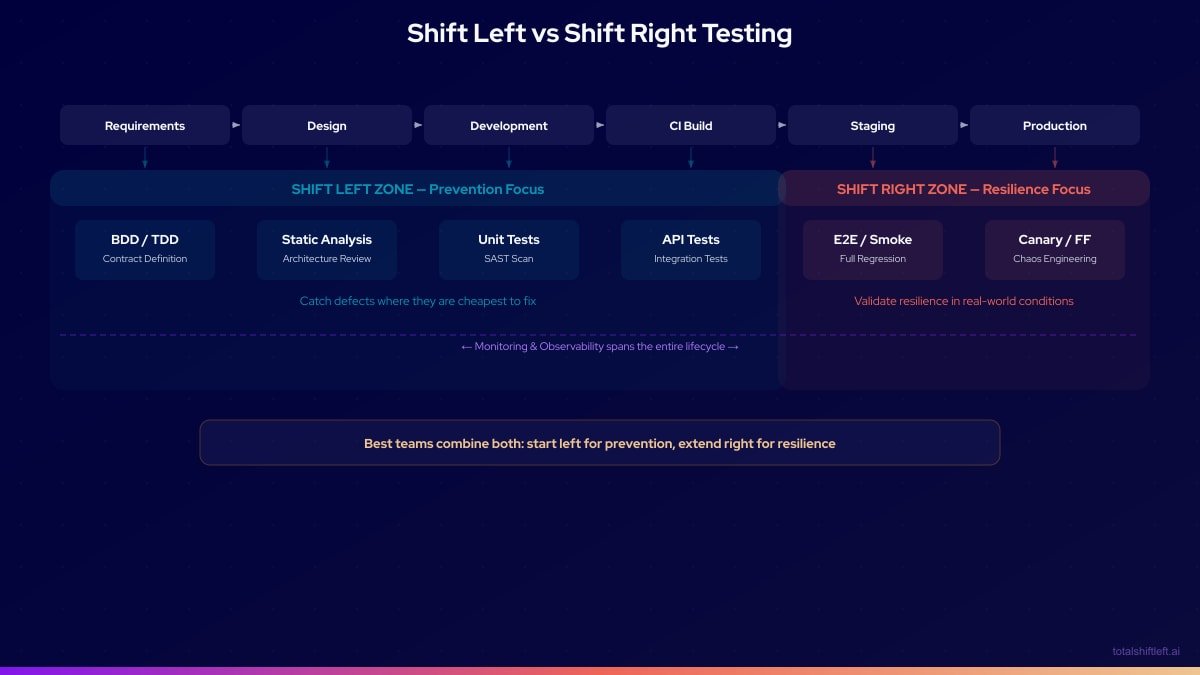

Shift left vs shift right testing compares two complementary quality strategies. Shift left testing catches defects early by moving quality checks into development and CI/CD pipelines, while shift right testing validates software behavior in production. Together, they provide end-to-end quality coverage across the entire delivery lifecycle.

The debate frames one of the central tensions in modern software quality engineering: should teams focus on preventing defects before they reach production, or on detecting and recovering from issues in production? In 2026, the answer is both — but understanding the distinct strengths, weaknesses, and use cases of each approach is essential for building an effective quality strategy.

This guide provides an authoritative comparison of shift left and shift right testing, explaining what each approach is, when to use it, how they complement each other, and which tools support each methodology.

Table of Contents

- What Is Shift Left vs Shift Right Testing?

- Why Both Approaches Matter

- Key Components of Each Approach

- Architecture: How the Two Approaches Map to a Pipeline

- Tools for Shift Left and Shift Right Testing

- Real Implementation Example

- Common Challenges and Solutions

- Best Practices

- Shift Left vs Shift Right Strategy Checklist

- Frequently Asked Questions

- Conclusion

Introduction

The language of "left" and "right" in software testing refers to a timeline. Imagine laying out a traditional software development lifecycle from left to right: requirements gathering sits at the far left, production deployment sits at the far right. Testing has historically lived toward the right — a phase that happens after development is complete and before (or sometimes after) deployment.

Shift left testing moves quality activities to the left of that timeline, embedding them into requirements, design, and development. Shift right testing moves quality activities to the right — into staging, canary deployments, and production itself. Both directions represent a departure from the traditional center, where testing was a discrete phase between development and deployment.

For most of software engineering history, "shift right" was not discussed as a deliberate strategy — it simply happened by accident when defects escaped the testing phase. But modern practices like chaos engineering, canary deployments, A/B testing, and production monitoring have elevated shift right to a legitimate and intentional quality strategy.

Understanding both approaches — their principles, techniques, tools, and tradeoffs — allows engineering teams to build a quality strategy that is greater than the sum of its parts.

What Is Shift Left vs Shift Right Testing?

Shift Left Testing Defined

Shift left testing is the practice of moving quality activities earlier in the software development lifecycle. Instead of testing after code is written, shift left teams test during requirements definition, design, and active development. The goal is to catch defects at the earliest, cheapest point in the process.

Key shift left techniques include: test-driven development (TDD), behavior-driven development (BDD), static code analysis, unit testing, API contract testing, API test automation from OpenAPI/Swagger specifications, and security scanning integrated into CI/CD pipelines. For a complete introduction, see What Is Shift Left Testing? Complete Guide.

Shift Right Testing Defined

Shift right testing is the practice of validating software behavior in production or production-like conditions. Rather than relying exclusively on pre-production environments to catch all issues, shift right teams deliberately expose software to real or simulated real-world conditions — including actual user traffic, genuine production load, and intentional failure injection.

Key shift right techniques include: canary deployments, blue-green deployments, feature flags, A/B testing, chaos engineering, synthetic monitoring, real user monitoring (RUM), and progressive delivery practices.

The Comprehensive Comparison

| Dimension | Shift Left Testing | Shift Right Testing |
|---|---|---|
| When it happens | Requirements, design, development, CI | Staging, canary, production |
| Primary goal | Prevent defects from reaching production | Detect and respond to production issues |
| Cost of defects found | Low (early fix) | High (production remediation) |
| Types of issues caught | Logic errors, API contract violations, security vulnerabilities | Performance degradation, edge case behavior, real-user issues |
| Who drives it | Developers, QA engineers, automation tools | SRE, platform engineering, observability teams |
| Automation level | High (test generation, CI/CD integration) | High (monitoring, alerting, chaos tooling) |
| Feedback speed | Seconds to minutes | Minutes to hours (real-time monitoring) |
| Test environment | Local, CI, staging | Production or production mirror |
| Risk tolerance | Low: prevent defects | Managed: accept some risk, detect fast |
| Complementary to | Production monitoring | Development-time quality |
| Key tools | Shift-Left API, Jest, Pact, SonarQube | LaunchDarkly, Gremlin, Datadog, New Relic |
| Cultural emphasis | Prevention is better than cure | Resilience and recovery are equally important |
| Regulatory fit | Strong audit trail for quality prevention | Supports observability and compliance reporting |
| User impact | Minimal: users unaffected by pre-production issues | Potential: some users may experience issues in canary |

When to Shift Left

Shift left testing is most valuable for:

- Logic and correctness errors that can be defined and tested before deployment

- API contract violations where service interfaces need to be validated before integration

- Security vulnerabilities identified through static analysis or dependency scanning

- Regression defects caught by automated test suites in CI/CD

- Performance baselines established before features reach production

When to Shift Right

Shift right testing is most valuable for:

- Load and scale behaviors that only emerge under real production traffic volumes

- Edge cases created by real user behavior that pre-production test scenarios do not anticipate

- Infrastructure and configuration issues that differ between environments

- Feature validation through controlled A/B experiments in production

- Chaos and resilience testing to verify system behavior under failure conditions

Ready to shift left with your API testing?

Try our no-code API test automation platform free. Generate tests from OpenAPI, run in CI/CD, and scale quality.

Why Both Approaches Matter

The false choice between shift left and shift right testing is a trap. Teams that only shift left have comprehensive pre-production quality but may be surprised by production-specific behaviors they could not anticipate in a test environment. Teams that only shift right catch real issues but pay a high cost — user impact, incident response, and revenue loss — for every defect that reaches production.

The Case for Shift Left as the Foundation

Frequently cited IBM research and industry data indicate that the cost of fixing a defect rises sharply the later it is discovered. Fixing a bug during development costs a fraction of fixing it in production. For this reason, shift left testing should be the foundation of any quality strategy: preventing a defect is generally more cost-effective than detecting and recovering from it.

Teams that invest in shift left testing — particularly automated API testing, contract testing, and continuous integration quality gates — report 30-40% reductions in defect escape rates. Every defect caught in CI is one that never reaches a customer. Learn how to build this foundation in our shift left testing strategy guide.

The Case for Shift Right as the Safety Net

No pre-production testing environment perfectly replicates production. Real users generate traffic patterns that QA scenarios do not anticipate. Production configurations, data volumes, and infrastructure behaviors differ from staging. The only way to truly validate software behavior under production conditions is to test in or near production.

Shift right practices like canary deployments, feature flags, and synthetic monitoring allow teams to validate real-world behavior while controlling blast radius. A canary deployment might route 5% of traffic to a new version; if it behaves badly, the rollout stops before the majority of users are affected. This is testing in production done deliberately and safely.
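To make the blast-radius idea concrete, here is a minimal sketch of how a router could pin a fixed share of users to a canary. It is an illustration only: the function names, the `user-N` IDs, and the choice of SHA-256 bucketing are assumptions, not a description of any particular deployment tool.

```python
import hashlib

CANARY_PERCENT = 5  # share of traffic sent to the new version (the 5% example)


def bucket_for(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def route(user_id: str, canary_percent: int = CANARY_PERCENT) -> str:
    """Return 'canary' for users in the lowest buckets, otherwise 'stable'."""
    return "canary" if bucket_for(user_id) < canary_percent else "stable"


if __name__ == "__main__":
    # Hypothetical user IDs, used only to show the resulting traffic split.
    users = [f"user-{i}" for i in range(10_000)]
    canary_share = sum(route(u) == "canary" for u in users) / len(users)
    print(f"canary share: {canary_share:.1%}")
```

Hashing the user ID rather than picking randomly per request keeps each user on the same version for the whole rollout, which makes any issue they report easier to attribute.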

The Combined Strategy

The most effective approach is a quality pipeline that uses shift left to prevent the vast majority of defects and shift right to catch the residual issues that only emerge in production conditions:

- Shift left reduces the defect rate that reaches production

- Shift right reduces the impact of defects that do reach production

- Together, they create a quality system that is both preventative and resilient

Key Components of Each Approach

Shift Left Components

Test-Driven Development (TDD): Tests written before code, defining expected behavior from the start. Tests drive the implementation and serve as living documentation.

API Testing from Specifications: Using tools like Shift-Left API, API tests are generated automatically from OpenAPI/Swagger specifications. This ensures that every endpoint, method, and response code is validated from the moment the API is defined — often before implementation begins.
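The idea of deriving tests from a specification can be sketched in a few lines. This is not how Shift-Left API or any other product works internally; it is a simplified illustration that walks the `paths` section of a trimmed-down, hypothetical OpenAPI document and emits one case per documented response code.

```python
from typing import Iterator, NamedTuple

# Hypothetical, trimmed-down OpenAPI document used only for illustration.
SPEC = {
    "paths": {
        "/orders": {
            "get": {"responses": {"200": {}, "401": {}}},
            "post": {"responses": {"201": {}, "400": {}}},
        },
        "/orders/{id}": {
            "get": {"responses": {"200": {}, "404": {}}},
        },
    }
}

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


class ApiCase(NamedTuple):
    method: str
    path: str
    expected_status: int


def generate_cases(spec: dict) -> Iterator[ApiCase]:
    """Yield one case for every method/path/status combination in the spec."""
    for path, operations in spec.get("paths", {}).items():
        for method, operation in operations.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "parameters"
            for status in operation.get("responses", {}):
                if status.isdigit():  # ignore "default" and ranges like "2XX"
                    yield ApiCase(method.upper(), path, int(status))


if __name__ == "__main__":
    for case in generate_cases(SPEC):
        print(case)
```

A real generator would also need request bodies, parameters, and authentication that trigger each response; the sketch only shows why coverage can track the spec automatically.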

Contract Testing: Consumer-driven contracts define the interface expectations between microservices. Contract tests verify these expectations independently, without requiring both services to be deployed together. For microservices teams, a dedicated API testing strategy is critical.
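As a rough illustration of the consumer-driven idea, the sketch below checks a provider response against the expectations a consumer has recorded. It deliberately does not use Pact's API; the contract layout and the `verify_provider` helper are hypothetical.

```python
# Hypothetical contract published by a consumer service: the fields it reads
# from the provider's response and the types it expects them to have.
CONSUMER_CONTRACT = {
    "request": {"method": "GET", "path": "/orders/42"},
    "response": {
        "status": 200,
        "body": {"id": int, "total": float, "currency": str},
    },
}


def verify_provider(contract: dict, status: int, body: dict) -> list[str]:
    """Return contract violations; an empty list means the provider conforms."""
    expected = contract["response"]
    violations = []
    if status != expected["status"]:
        violations.append(f"status {status} != {expected['status']}")
    for field, expected_type in expected["body"].items():
        if field not in body:
            violations.append(f"missing field '{field}'")
        elif not isinstance(body[field], expected_type):
            violations.append(f"field '{field}' is not {expected_type.__name__}")
    return violations


if __name__ == "__main__":
    # Extra provider fields are fine: the consumer only states what it uses.
    response_body = {"id": 42, "total": 19.99, "currency": "EUR", "notes": ""}
    print(verify_provider(CONSUMER_CONTRACT, 200, response_body))
```

Because the check runs against the provider alone, it can sit in the provider's CI build without the consumer being deployed.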

Static Analysis: Automated code scanning identifies bugs, security vulnerabilities, and code quality issues before code is committed or reviewed.

CI/CD Quality Gates: Pipeline stages that block progression when tests fail. Quality gates enforce that no code advances through the pipeline without meeting defined quality criteria. See our guide on how to build a CI/CD testing pipeline for implementation details.
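A quality gate boils down to a function that turns build results into a pass or block decision. The following sketch assumes a hypothetical `BuildReport` structure and illustrative thresholds; real criteria depend on the team and the codebase.

```python
from dataclasses import dataclass


@dataclass
class BuildReport:
    tests_failed: int
    line_coverage: float    # 0.0 to 1.0
    critical_findings: int  # from static analysis or dependency scanning


# Hypothetical thresholds chosen for illustration only.
MIN_COVERAGE = 0.80
MAX_CRITICAL_FINDINGS = 0


def gate(report: BuildReport) -> list[str]:
    """Return the reasons the build is blocked; an empty list means it may proceed."""
    reasons = []
    if report.tests_failed > 0:
        reasons.append(f"{report.tests_failed} test(s) failed")
    if report.line_coverage < MIN_COVERAGE:
        reasons.append(
            f"coverage {report.line_coverage:.0%} is below {MIN_COVERAGE:.0%}"
        )
    if report.critical_findings > MAX_CRITICAL_FINDINGS:
        reasons.append(f"{report.critical_findings} critical finding(s)")
    return reasons


if __name__ == "__main__":
    blocked_by = gate(BuildReport(tests_failed=0, line_coverage=0.72, critical_findings=1))
    for reason in blocked_by:
        print("BLOCKED:", reason)
    # A CI job would exit non-zero here so the pipeline stops.
    raise SystemExit(1 if blocked_by else 0)
```

Returning every failed criterion at once, rather than stopping at the first, gives the developer the full list to fix in a single iteration.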

Shift Right Components

Canary Deployments: New software versions are rolled out to a small subset of users first. Monitoring detects issues before the rollout expands to the full user base.

Feature Flags: Features are deployed but hidden behind flags, allowing controlled enablement for specific user segments. Issues can be resolved by disabling the flag without a full rollback.
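The mechanics of a flag check can be sketched as follows. This is a simplified model, not the LaunchDarkly, Unleash, or Split.io SDK: the `Flag` fields, the segment names, and the hashing scheme are assumptions made for the example.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Flag:
    key: str
    enabled: bool = True      # kill switch: False hides the feature from everyone
    rollout_percent: int = 0  # share of other users who see the feature
    allowed_segments: set[str] = field(default_factory=set)  # always included


def is_enabled(flag: Flag, user_id: str, segment: str = "") -> bool:
    """Decide whether this user sees the feature behind the flag."""
    if not flag.enabled:
        return False
    if segment in flag.allowed_segments:
        return True
    # Hash flag key and user together so each flag buckets users independently.
    digest = hashlib.sha256(f"{flag.key}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100 < flag.rollout_percent


if __name__ == "__main__":
    checkout = Flag("new-checkout", rollout_percent=10, allowed_segments={"internal"})
    print(is_enabled(checkout, "user-17", segment="internal"))
    checkout.enabled = False  # resolve an incident without redeploying
    print(is_enabled(checkout, "user-17", segment="internal"))
```

The kill switch is evaluated first, which is what lets a team disable a misbehaving feature without a rollback.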

Chaos Engineering: Deliberate injection of failures (network latency, service outages, resource exhaustion) to test system resilience. Netflix's Chaos Monkey pioneered this approach at scale.
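At a much smaller scale than tools like Gremlin or Chaos Monkey, the same principle can be shown in process: inject failures into a dependency and check that the caller degrades gracefully. The `FlakyDependency` wrapper and the pricing function below are hypothetical names invented for this sketch.

```python
import random


class FlakyDependency:
    """Wraps a callable and fails a configurable fraction of calls."""

    def __init__(self, func, failure_rate: float, seed: int = 0):
        self.func = func
        self.failure_rate = failure_rate
        self.rng = random.Random(seed)  # seeded so experiments are repeatable

    def __call__(self, *args, **kwargs):
        if self.rng.random() < self.failure_rate:
            raise ConnectionError("injected failure")
        return self.func(*args, **kwargs)


def get_price_with_fallback(fetch_price, sku: str, fallback: float, retries: int = 2) -> float:
    """Behavior under test: retry on failure, then fall back to a known value."""
    for _ in range(retries + 1):
        try:
            return fetch_price(sku)
        except ConnectionError:
            continue
    return fallback


if __name__ == "__main__":
    # Simulate a pricing service that fails half of the time.
    flaky = FlakyDependency(lambda sku: 19.99, failure_rate=0.5)
    prices = [get_price_with_fallback(flaky, "sku-1", fallback=17.50) for _ in range(20)]
    print(f"served the fallback price {prices.count(17.50)} times out of {len(prices)}")
```

The experiment passes if every call returns a price and none raises, which is the resilience property the failure injection is meant to verify.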

Synthetic Monitoring: Automated scripts simulate user journeys in production on a continuous basis, alerting teams when production behavior deviates from expectations.
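A single synthetic check can be reduced to "run a scripted step, then judge status and latency". The sketch below assumes a hypothetical `probe` callable that returns an HTTP status code; in a real monitor it would issue the request, and a scheduler would call `run_check` on an interval.

```python
import time
from typing import Callable, NamedTuple


class CheckResult(NamedTuple):
    name: str
    ok: bool
    detail: str


def run_check(name: str, probe: Callable[[], int], max_seconds: float) -> CheckResult:
    """Run one scripted step and judge it on status code and elapsed time."""
    started = time.monotonic()
    try:
        status = probe()
    except Exception as exc:  # any probe failure counts as a failed check
        return CheckResult(name, False, f"error: {exc}")
    elapsed = time.monotonic() - started
    if status != 200:
        return CheckResult(name, False, f"unexpected status {status}")
    if elapsed > max_seconds:
        return CheckResult(name, False, f"slow: {elapsed:.2f}s")
    return CheckResult(name, True, "ok")


if __name__ == "__main__":
    # Stand-in probes; real ones would call the production endpoints.
    journey = [
        ("home page", lambda: 200),
        ("search", lambda: 200),
        ("checkout", lambda: 503),
    ]
    for step_name, probe in journey:
        print(run_check(step_name, probe, max_seconds=2.0))
```

Treating slowness as a failure, not only error statuses, matters because many production regressions show up as latency before they show up as errors.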

Real User Monitoring (RUM): Collection and analysis of actual user performance and error data from production, providing ground truth about user experience that no lab test can replicate.
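RUM data is usually summarized with percentiles rather than averages, because a few slow sessions can hide behind a healthy mean. A minimal sketch, using made-up latency samples and the nearest-rank percentile method:

```python
import math

# Hypothetical page-load times in milliseconds reported by real browsers.
LATENCIES_MS = [120, 95, 110, 2300, 105, 98, 130, 101, 99, 115]


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


if __name__ == "__main__":
    mean_ms = sum(LATENCIES_MS) / len(LATENCIES_MS)
    print(f"mean: {mean_ms:.1f} ms")
    print(f"p50:  {percentile(LATENCIES_MS, 50)} ms")
    print(f"p95:  {percentile(LATENCIES_MS, 95)} ms")
```

In this sample the median user sees roughly 105 ms while the slowest session takes 2300 ms, a gap that the mean alone would blur.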

Architecture: How the Two Approaches Map to a Pipeline

Mapped onto a delivery pipeline, the two approaches occupy complementary zones. The left zone, from requirements through CI, is dominated by prevention-focused automation. The right zone, covering staging, canary, and production, is dominated by resilience and observability tooling. A healthy quality strategy has both zones operating effectively.
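One way to picture the mapping is as an ordered list of pipeline stages, each tagged with its zone and typical practices. The stage names and zones follow the comparison table earlier in this guide; which practice sits at which stage is an assumption made for this sketch.

```python
from enum import Enum
from typing import NamedTuple


class Zone(Enum):
    LEFT = "shift left"
    RIGHT = "shift right"


class Stage(NamedTuple):
    name: str
    zone: Zone
    practices: list[str]


# Ordered left to right; the placement of practices is illustrative.
PIPELINE = [
    Stage("requirements and design", Zone.LEFT, ["BDD scenarios", "API specification review"]),
    Stage("development", Zone.LEFT, ["TDD", "unit tests", "static analysis"]),
    Stage("CI", Zone.LEFT, ["API tests", "contract tests", "security scanning", "quality gates"]),
    Stage("staging", Zone.RIGHT, ["production-like load tests"]),
    Stage("canary", Zone.RIGHT, ["canary analysis", "feature flags"]),
    Stage("production", Zone.RIGHT, ["synthetic monitoring", "real user monitoring", "chaos engineering"]),
]


def practices_in(zone: Zone) -> list[str]:
    """Flatten the practices of every stage that belongs to the given zone."""
    return [p for stage in PIPELINE if stage.zone is zone for p in stage.practices]


if __name__ == "__main__":
    for stage in PIPELINE:
        print(f"{stage.zone.value:<11} | {stage.name:<23} | {', '.join(stage.practices)}")
```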

Tools for Shift Left and Shift Right Testing

| Category | Shift Left Tools | Shift Right Tools | Notes |
|---|---|---|---|
| API Testing | Shift-Left API, REST Assured, Karate | Postman Monitors, Runscope | Shift-Left API auto-generates tests from specs |
| Contract Testing | Pact, Spring Cloud Contract | Pact Broker (production verification) | Consumer-driven approach |
| Unit Testing | Jest, JUnit, PyTest, Mocha | n/a | Language-specific frameworks |
| Static Analysis | SonarQube, ESLint, Checkmarx | n/a | Commit/PR stage integration |
| CI/CD | GitHub Actions, GitLab CI, Jenkins | n/a | Pipeline orchestration |
| Feature Flags | n/a | LaunchDarkly, Unleash, Split.io | Controlled rollout in production |
| Chaos Engineering | n/a | Gremlin, Chaos Monkey, LitmusChaos | Resilience validation |
| Monitoring | n/a | Datadog, New Relic, Prometheus, Grafana | Production observability |
| Synthetic Monitoring | n/a | Datadog Synthetics, Checkly, Pingdom | Simulated production testing |
| Canary/Progressive Delivery | n/a | Argo Rollouts, Flagger, Spinnaker | Controlled production rollouts |
| Performance Testing | k6, Gatling (pre-production) | k6 (production load tests) | Both zones depending on maturity |

Real Implementation Example

Problem

A large e-commerce platform was experiencing a frustrating pattern: their pre-production testing suite was extensive and passed consistently, but production incidents related to API performance and unexpected user behaviors continued to happen every release cycle. The team had invested heavily in shift left practices — unit tests, API tests, and CI quality gates — but had no shift right practices in place. When code reached production, they were flying blind.

Solution

The platform engineering team implemented a combined shift left and shift right strategy:

Shift Left Improvements: The team onboarded Shift-Left API to generate API tests from their OpenAPI specifications across 28 services. This increased API test coverage from 35% to 94% of endpoints, with tests running on every pull request and CI build. Contract tests using Pact were added for the 8 most critical inter-service interfaces.

Shift Right Implementation: The team adopted feature flags (LaunchDarkly) for all new features, enabling controlled rollouts to 1%, 10%, and 50% of users before full release. Datadog Synthetics ran simulated user journeys in production every 5 minutes. Argo Rollouts managed canary deployments, automatically rolling back when error rates exceeded defined thresholds.
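The rollout logic described here can be approximated as a loop over exposure steps with an abort condition. This sketch is not Argo Rollouts or LaunchDarkly configuration; the 2% error threshold and the `error_rate_at` callback are hypothetical stand-ins for a real metrics query.

```python
from typing import Callable

ROLLOUT_STEPS = [1, 10, 50, 100]  # percent of users, following the 1/10/50 steps
MAX_ERROR_RATE = 0.02             # hypothetical rollback threshold


def progressive_rollout(error_rate_at: Callable[[int], float]) -> tuple[str, int]:
    """Advance step by step; roll back at the first step with too many errors.

    Returns the outcome and the exposure percentage at which it was decided.
    """
    for percent in ROLLOUT_STEPS:
        if error_rate_at(percent) > MAX_ERROR_RATE:
            return ("rolled_back", percent)
    return ("promoted", 100)


if __name__ == "__main__":
    # Simulated release whose defect only appears once 10% of users are exposed.
    def observed_error_rate(percent: int) -> float:
        return 0.05 if percent >= 10 else 0.001

    print(progressive_rollout(observed_error_rate))
```

Because the check runs before each step widens exposure, a faulty release is stopped at the smallest audience that can reveal the problem.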

Results

Over two quarters:

- Production incidents related to API failures dropped by 67% (attributed to improved shift left coverage)

- Remaining production incidents were detected in an average of 4 minutes (compared to 45 minutes previously) due to synthetic monitoring

- Three releases were automatically rolled back by canary analysis before impacting more than 2% of users — each would have previously become a major incident

- Developer confidence in production deployments increased significantly; the team began deploying on Fridays without hesitation

Common Challenges and Solutions

Challenge: Treating Shift Left and Shift Right as Competing Priorities

Problem: Teams allocate budget and attention to one approach at the expense of the other, leaving gaps in their quality coverage.

Solution: Frame shift left and shift right as complementary investments. Document the class of defects each approach catches to demonstrate that they address different risks. Build a roadmap that grows both capabilities simultaneously.

Challenge: Shift Right Becoming a Substitute for Pre-production Quality

Problem: "We have great monitoring" becomes an excuse to skip pre-production testing. This increases user impact from defects and creates an expensive, reactive quality culture.

Solution: Establish clear quality gates in the pre-production pipeline. Shift right should be a safety net, not a primary quality mechanism. Track the percentage of defects caught pre-production vs. post-production and set improvement targets.
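The tracking metric suggested above is simple to compute once each defect is labeled with the stage where it was found. A minimal sketch, assuming hypothetical stage labels and a made-up defect log:

```python
# Stages counted as pre-production; the label names are assumptions.
PRE_PRODUCTION_STAGES = {"development", "ci", "staging"}


def pre_production_catch_rate(found_in: list[str]) -> float:
    """Share of defects found before production, given the stage of each defect."""
    if not found_in:
        raise ValueError("no defects recorded")
    caught_early = sum(stage in PRE_PRODUCTION_STAGES for stage in found_in)
    return caught_early / len(found_in)


if __name__ == "__main__":
    # Made-up log for one quarter: one entry per defect.
    quarter = ["ci", "ci", "development", "production", "staging", "ci", "production", "ci"]
    rate = pre_production_catch_rate(quarter)
    print(f"caught before production: {rate:.0%}")
    print(f"escaped to production:    {1 - rate:.0%}")
```

Reviewing this number release over release shows whether monitoring is acting as a safety net or quietly becoming the primary way defects are found.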

Challenge: Alert Fatigue from Production Monitoring

Problem: Overly sensitive shift right monitoring generates too many alerts, leading teams to ignore them — defeating the purpose.

Solution: Tune alerting thresholds based on baseline production behavior. Use anomaly detection rather than static thresholds where possible. Implement alert grouping and on-call rotation to distribute response load.
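The difference between a static threshold and a baseline-relative check can be shown with a basic z-score test. This is one simple form of anomaly detection, chosen for brevity; the baseline values and the threshold of three standard deviations are assumptions, and production systems typically use more robust methods.

```python
from statistics import mean, stdev


def is_anomalous(baseline: list[float], value: float, z_threshold: float = 3.0) -> bool:
    """Flag a value more than z_threshold standard deviations from the baseline mean."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline samples")
    spread = stdev(baseline)
    if spread == 0:
        return value != baseline[0]  # flat baseline: any change is unusual
    return abs(value - mean(baseline)) / spread > z_threshold


if __name__ == "__main__":
    # Hypothetical error rates from the last seven comparable time windows.
    baseline_error_rates = [0.010, 0.012, 0.011, 0.009, 0.010, 0.013, 0.011]
    for current in (0.012, 0.030):
        print(current, "->", "ALERT" if is_anomalous(baseline_error_rates, current) else "ok")
```

A service that normally runs at a 1% error rate and one that normally runs at 0.01% need very different absolute thresholds; comparing against each service's own baseline avoids tuning them by hand.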

Challenge: Slow Shift Left Pipelines Pushing Testing to the Right

Problem: As shift left test suites grow, CI pipelines become slow. Developers bypass them or push code without waiting for results, effectively shifting testing to the right unintentionally.

Solution: Optimize pipeline performance aggressively. Run unit tests in parallel. Use test impact analysis to run only tests affected by changed code. Cache dependencies and test environments. Set a target of under 10 minutes for the core CI feedback loop.
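Test impact analysis, in its simplest form, is a set intersection between changed files and the files each test depends on. The dependency map and file paths below are hypothetical; in practice the map would typically be derived from coverage data gathered on an earlier run.

```python
# Hypothetical map from each test file to the source files it exercises.
TEST_DEPENDENCIES = {
    "tests/test_cart.py": {"shop/cart.py", "shop/pricing.py"},
    "tests/test_checkout.py": {"shop/checkout.py", "shop/cart.py"},
    "tests/test_search.py": {"shop/search.py"},
}


def impacted_tests(
    changed_files: set[str],
    dependencies: dict[str, set[str]] = TEST_DEPENDENCIES,
) -> list[str]:
    """Select the tests whose dependencies overlap the changed files."""
    return sorted(
        test for test, sources in dependencies.items() if sources & changed_files
    )


if __name__ == "__main__":
    # A change to the cart module triggers two of the three test files.
    for test in impacted_tests({"shop/cart.py"}):
        print("run:", test)
```

Selection like this is usually paired with a periodic full run, since a stale dependency map can silently skip a test that should have executed.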

Best Practices

- Use shift left as the primary defect prevention mechanism. Most defects should be caught in pre-production stages — this is the most cost-effective quality strategy.

- Design shift right as a resilience and learning system. Production monitoring and canary deployments exist to catch what pre-production cannot, and to accelerate incident detection and response.

- Automate both zones. Shift left automation is CI/CD-based. Shift right automation is monitoring, alerting, and progressive delivery. Both require investment to be effective. See the DevOps testing strategy guide for a holistic approach.

- Generate API tests from OpenAPI/Swagger specs. Spec-driven test generation with Shift-Left API ensures shift left coverage is comprehensive and always current. Compare all leading platforms in our best shift left testing tools guide.

- Instrument every production deployment. Every release should have defined success metrics, alerting thresholds, and rollback criteria before it goes live.

- Track defect origin in retrospectives. Classify each production incident by whether it could have been caught by better shift left practices or was genuinely a shift right concern. Use this data to improve both strategies.

- Make shift right invisible to the user. Canary deployments, feature flags, and chaos engineering should be transparent to users — controlled experiments, not user-facing incidents.

- Build a shared quality culture. Developers own shift left quality. SRE and platform engineers own shift right resilience. QA architects own the overall quality strategy. Collaboration across these roles is essential.

- Understand and communicate the benefits of shift left testing to stakeholders. Data-driven ROI evidence builds organizational support for shift left investment.

- Anticipate shift left testing challenges before they stall adoption. Cultural resistance, skill gaps, and tool sprawl are predictable obstacles with known solutions.

Shift Left vs Shift Right Strategy Checklist

- ✔ Shift left: Unit tests, API tests, and static analysis run in CI on every commit

- ✔ Shift left: API tests are generated from OpenAPI/Swagger specifications

- ✔ Shift left: Contract tests cover critical inter-service interfaces

- ✔ Shift left: Quality gates block pipeline progression on test failure

- ✔ Shift right: All deployments use canary or progressive delivery strategies

- ✔ Shift right: Production is monitored with synthetic tests and real user monitoring

- ✔ Shift right: Feature flags are in place for all major new features

- ✔ Shift right: Rollback procedures and thresholds are defined before each release

Frequently Asked Questions

What is the difference between shift left and shift right testing?

Shift left testing moves quality checks earlier in the development lifecycle — to requirements, design, and development stages — to catch defects before they become expensive. Shift right testing validates software in production-like or actual production environments to catch issues that only emerge under real-world load and conditions.

Should teams choose shift left or shift right testing?

Modern engineering teams should use both. Shift left testing prevents defects from reaching production, while shift right testing monitors production behavior and catches issues that cannot be replicated in pre-production environments. The two strategies are complementary, not competing.

What are examples of shift right testing techniques?

Shift right testing techniques include canary deployments, blue-green deployments, A/B testing, chaos engineering, feature flags, production monitoring, real user monitoring (RUM), and synthetic monitoring. These approaches validate software behavior under real production conditions.

Which approach reduces software costs more?

Shift left testing generally reduces costs more by preventing defects early — catching a bug during development is dramatically cheaper than remediating it in production. However, shift right testing catches a class of production-specific issues that pre-production testing cannot, preventing costly incidents. A combined strategy delivers the best overall cost profile.

Conclusion

The shift left vs shift right testing distinction is a useful conceptual frame, but experienced engineering teams quickly recognize that the goal is not to choose between them — it is to excel at both. Shift left testing provides the prevention layer that keeps defects from reaching users. Shift right testing provides the resilience and learning layer that catches what prevention misses and accelerates recovery.

Building both capabilities requires investment in automation, tooling, and culture. The returns — measured in reduced defect escape rates, faster incident detection, and higher developer confidence — make that investment one of the highest-ROI activities in modern software engineering. Shift-Left API accelerates the shift left side of this equation by automating API test generation from your OpenAPI specifications, giving teams comprehensive shift left coverage from day one. Start your free trial to see how quickly you can expand your shift left coverage.
