How to Automate API Testing in CI/CD Pipelines (2026)

Automating API testing in CI/CD means integrating API test execution into your delivery pipeline so that every code change automatically triggers a suite of tests validating correctness, performance, and schema compliance. It catches API regressions at the pull request stage, blocks breaking changes before they reach production, and removes manual QA bottlenecks.

Table of Contents

- Introduction

- What Does It Mean to Automate API Testing in CI/CD?

- Why Manual API Testing Fails in Modern Pipelines

- Key Components of CI/CD API Test Automation

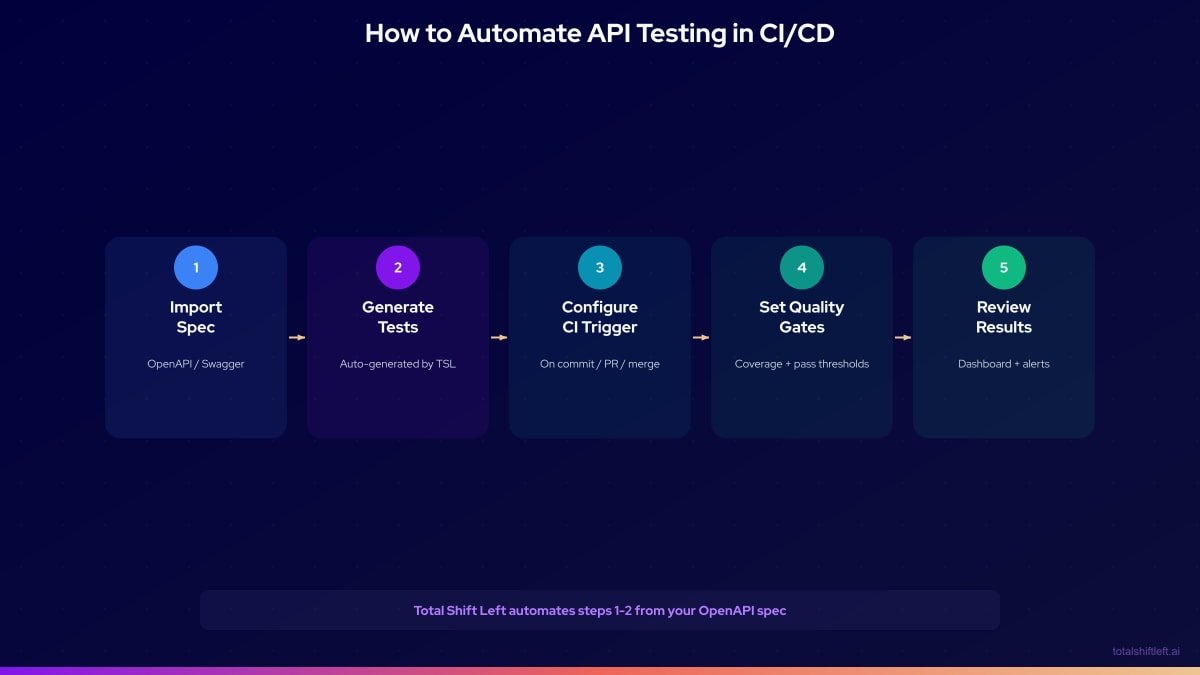

- The 5-Step Automation Workflow

- Step 1: Import Your OpenAPI Spec

- Step 2: Auto-Generate Tests

- Step 3: Configure the CI Trigger

- Step 4: Set Quality Gates

- Step 5: Review Results and Analytics

- CI/CD Pipeline Architecture Diagram

- Tools Comparison

- Common Challenges and Solutions

- Best Practices

- CI/CD API Testing Checklist

- FAQ

- Conclusion

Introduction

Software delivery speed has accelerated dramatically. The average high-performing engineering team now deploys multiple times per day. At that velocity, the old model — run tests manually in a QA environment, get approval, deploy — is not just slow, it is actively harmful. Manual gates create bottlenecks, reduce developer confidence, and paradoxically lead to more production incidents because teams start bypassing review steps to keep up with the pace.

The solution is to automate API testing in CI/CD pipelines so that quality verification happens automatically, consistently, and at machine speed. Every pull request, every commit, every deployment candidate gets tested — without anyone manually clicking "run." This is the shift-left testing principle in practice: moving quality validation as early as possible in the delivery process.

This guide walks through the complete implementation: from importing your first OpenAPI spec to running fully automated test suites in GitHub Actions, with quality gates that protect your main branch from broken APIs. We use Shift-Left API as the automation platform, but the principles apply regardless of which tool you choose.

By the end, you will have a working mental model of the full pipeline and a concrete configuration you can adapt for your own stack.

What Does It Mean to Automate API Testing in CI/CD?

CI/CD stands for Continuous Integration and Continuous Delivery (or Deployment). Continuous Integration means every developer's changes are merged into the main branch frequently, with automated checks validating each merge. Continuous Delivery means every validated change is kept in a releasable state, usually with a manual approval before the production release. Continuous Deployment removes that approval and releases each validated change automatically.

Automating API testing in this context means inserting API test execution as an automated step in the CI/CD pipeline — triggered by events like pull request creation, push to a branch, or deployment — so that APIs are validated without human initiation.

The automation stack has three components:

- Test generation: creating the test cases that will be executed — ideally from your API spec so they stay current

- Test execution: running those tests against the API in a repeatable, environment-aware way

- Gate enforcement: making the pipeline succeed or fail based on test results, blocking deployments when tests fail

Why Manual API Testing Fails in Modern Pipelines

It Does Not Scale with Velocity

A team deploying 20 times a day cannot run a manual test suite 20 times a day. Manual testing creates a bottleneck that either slows releases or gets abandoned.

It Is Inconsistent

Manual testers test differently each time. Some edge cases get checked, others get skipped depending on time pressure. Automated tests run the same checks every time.

It Cannot Catch Schema Drift Early

When a developer changes a response field from user_id (integer) to userId (string) on a Monday morning, a manual tester might not run the affected tests until Friday. An automated CI test catches it within minutes of the pull request opening.
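To make the drift scenario concrete, here is a minimal sketch of the kind of check an automated test performs. The contract dictionary and the function name are hypothetical and only illustrate the idea. A real suite would validate against the full OpenAPI schema rather than a hand-written field map.

```python
from typing import Any

# Hypothetical contract for one response object: field name -> expected Python type.
EXPECTED_USER_FIELDS = {"user_id": int, "email": str}


def find_schema_drift(expected: dict[str, type], payload: dict[str, Any]) -> list[str]:
    """Return human-readable differences between a contract and an actual payload."""
    problems = []
    for name, expected_type in expected.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif type(payload[name]) is not expected_type:
            actual = type(payload[name]).__name__
            problems.append(
                f"wrong type for {name}: expected {expected_type.__name__}, got {actual}"
            )
    # Fields the contract does not know about are reported as well.
    for name in payload:
        if name not in expected:
            problems.append(f"unexpected field: {name}")
    return problems


# The rename from the example above: user_id (integer) became userId (string).
drift = find_schema_drift(EXPECTED_USER_FIELDS, {"userId": "42", "email": "a@example.com"})
print(drift)
```

Run on every pull request, a check like this reports the renamed field as one missing field plus one unexpected field, which is usually enough for the author to spot the change.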

It Cannot Enforce Standards

Quality gates such as "this PR cannot merge if any test fails" require automation. You cannot enforce a manual gate at scale without creating a process bottleneck that teams will route around.

It Creates False Confidence

A manual "all clear" from a QA engineer 24 hours after a deployment means nothing by the time the next five deployments have landed. Automated tests provide continuous, real-time confidence.

Key Components of CI/CD API Test Automation

API Specification (OpenAPI/Swagger)

The foundation of automated API testing is a machine-readable spec. OpenAPI 3.x is the industry standard for REST APIs. Without a spec, you either write tests by hand (slow, error-prone) or run tests against live behavior (which defeats the purpose of shift-left testing). For a deeper look at the overall pipeline design, see our guide on how to build a CI/CD testing pipeline.

Test Generation Engine

Converts the API spec into executable test cases. Manual generation is possible but rarely kept current. AI-powered generation from the spec produces and updates tests automatically as the spec evolves.

Test Execution Environment

The environment in which tests run during CI. This may be:

- A live staging environment (shared, slower, potentially unstable)

- A service-specific environment spun up for the PR (faster, isolated)

- A mocked environment using virtual services (fastest, fully isolated)

CI/CD Integration

The mechanism that triggers test execution and reports results. GitHub Actions, GitLab CI, Jenkins, CircleCI, and Azure DevOps are all common choices. Most modern API testing platforms expose a CLI or API for CI integration.

Quality Gates

Configurable thresholds that determine whether the pipeline passes or fails:

- Test pass rate (e.g., must be 100%)

- Response time SLA (e.g., 95th percentile under 500ms)

- Coverage threshold (e.g., all endpoints must have at least one test)

- Error rate baseline (e.g., no regression from previous run)
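As an illustration of how such thresholds turn into a pass/fail decision, the sketch below evaluates a pass-rate gate and a 95th-percentile latency gate. The function names, the default thresholds, and the nearest-rank percentile method are assumptions made for this example, not the behavior of any particular tool.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def evaluate_gates(
    passed: int,
    total: int,
    latencies_ms: list[float],
    min_pass_rate: float = 100.0,
    max_p95_ms: float = 500.0,
) -> list[str]:
    """Return a list of gate violations; an empty list means the pipeline may proceed."""
    violations = []
    pass_rate = 100.0 * passed / total if total else 0.0
    if pass_rate < min_pass_rate:
        violations.append(f"pass rate {pass_rate:.1f}% is below {min_pass_rate:.1f}%")
    if latencies_ms:
        p95 = percentile(latencies_ms, 95)
        if p95 > max_p95_ms:
            violations.append(f"p95 {p95:.0f} ms exceeds {max_p95_ms:.0f} ms")
    return violations


print(evaluate_gates(passed=9, total=10, latencies_ms=[120.0] * 20))
```

In a CI job, a non-empty result would be mapped to a non-zero exit code, since that is how CI systems recognize a failed step.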

Analytics and Reporting

Dashboards that aggregate results across runs, environments, and time — enabling trend analysis, flakiness detection, and coverage gap identification.

The 5-Step Automation Workflow

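The complete workflow for automating API testing in CI/CD with Shift-Left API follows five steps: import your OpenAPI spec, auto-generate tests, configure the CI trigger, set quality gates, and review results and analytics.

Let us walk through each step in detail.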

Step 1: Import Your OpenAPI Spec

The first step is connecting your API specification to Shift-Left API. Shift-Left API supports:

- Direct URL import: point to the spec URL (e.g., https://api.myservice.com/openapi.json)

- File upload: upload a YAML or JSON spec file directly

- Git integration: connect to a repository and auto-sync the spec on push

Importing an OpenAPI spec into Shift-Left API — all endpoints are parsed and ready for test generation in seconds.

Once the spec is imported, Shift-Left API displays all endpoints, parameters, request bodies, and response schemas. The spec is validated for completeness — missing schemas, undefined status codes, and incomplete parameter definitions are flagged before test generation begins.
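The completeness checks described above can be approximated locally before you import anything. The following is a rough sketch, assuming an OpenAPI 3.x document that has already been parsed into a Python dictionary. The function name is hypothetical, and `$ref` entries are skipped rather than resolved, so this is not a substitute for a real spec linter.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def find_spec_gaps(spec: dict) -> list[str]:
    """List operations with no responses, and responses or parameters without a schema."""
    gaps = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters" or "summary"
            label = f"{method.upper()} {path}"
            responses = operation.get("responses", {})
            if not responses:
                gaps.append(f"{label}: no responses defined")
            for status, response in responses.items():
                for media_type, media in response.get("content", {}).items():
                    if "schema" not in media:
                        gaps.append(f"{label}: {status} {media_type} has no schema")
            for param in operation.get("parameters", []):
                if "$ref" in param:
                    continue  # references are not resolved in this sketch
                if "schema" not in param and "content" not in param:
                    gaps.append(f"{label}: parameter {param.get('name', '?')} has no schema")
    return gaps


example_spec = {
    "paths": {
        "/users": {
            "get": {
                "parameters": [{"name": "limit", "in": "query"}],
                "responses": {"200": {"content": {"application/json": {}}}},
            },
            "post": {"responses": {}},
        }
    }
}
for gap in find_spec_gaps(example_spec):
    print(gap)
```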

Pro tip: If your spec does not yet exist, generate it from your existing codebase using tools like springdoc-openapi (Java/Spring), FastAPI's built-in OpenAPI generation (Python), or swagger-jsdoc (Node.js). Any OpenAPI 2.0 or 3.x spec works.

Step 2: Auto-Generate Tests

With the spec imported, Shift-Left API's AI engine generates a complete test suite. The generated tests cover:

- Happy-path functional tests: valid requests that should return 200/201/204

- Negative tests: invalid request bodies, missing required fields, out-of-range values

- Authentication tests: requests with missing, expired, or malformed tokens

- Schema validation tests: verify that actual response structure matches the spec

- Boundary value tests: minimum, maximum, and edge values for numeric and string fields

- Idempotency tests: for PUT and DELETE endpoints

Test generation takes 30-90 seconds depending on spec size. For a spec with 50 endpoints, you might get 300-500 individual test cases.
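To show what spec-driven generation looks like for one of these categories, here is a small sketch that derives boundary value candidates from a field schema. It is an illustration only, not a description of how Shift-Left API generates tests. The function name is hypothetical, and the sketch handles only inclusive `minimum`/`maximum` and `minLength`/`maxLength`.

```python
def boundary_values(schema: dict) -> dict[str, list]:
    """Return values on the allowed boundaries ("valid") and just outside them ("invalid")."""
    kind = schema.get("type")
    valid, invalid = [], []
    if kind == "integer":
        if "minimum" in schema:
            valid.append(schema["minimum"])
            invalid.append(schema["minimum"] - 1)
        if "maximum" in schema:
            valid.append(schema["maximum"])
            invalid.append(schema["maximum"] + 1)
    elif kind == "string":
        if "minLength" in schema:
            shortest = schema["minLength"]
            valid.append("a" * shortest)
            if shortest > 0:
                invalid.append("a" * (shortest - 1))
        if "maxLength" in schema:
            longest = schema["maxLength"]
            valid.append("a" * longest)
            invalid.append("a" * (longest + 1))
    return {"valid": valid, "invalid": invalid}


print(boundary_values({"type": "integer", "minimum": 1, "maximum": 100}))
```

Each valid value becomes a request that should succeed, and each invalid value becomes a negative test that should be rejected, typically with a 4xx status.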

Parameterizing for environments: tests are generated with environment variables for base URL, authentication tokens, and test data — so the same test suite runs across dev, staging, and production simply by changing configuration.
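The same idea applies to any test code you write yourself. The sketch below reads the target from the `BASE_URL` and `AUTH_TOKEN` environment variables used by the workflow in Step 3. The class name, the helper names, and the Bearer authorization scheme are assumptions for illustration.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class TargetConfig:
    base_url: str
    auth_token: str


def load_target_config(env: Optional[Mapping[str, str]] = None) -> TargetConfig:
    """Build the target configuration from environment variables, failing fast if any is missing."""
    env = os.environ if env is None else env
    missing = [name for name in ("BASE_URL", "AUTH_TOKEN") if not env.get(name)]
    if missing:
        raise RuntimeError("missing required environment variables: " + ", ".join(missing))
    return TargetConfig(base_url=env["BASE_URL"].rstrip("/"), auth_token=env["AUTH_TOKEN"])


def build_request(config: TargetConfig, path: str) -> tuple[str, dict[str, str]]:
    """Return the full URL and headers for a path; the Bearer scheme is an assumption."""
    url = f"{config.base_url}/{path.lstrip('/')}"
    return url, {"Authorization": f"Bearer {config.auth_token}"}


staging = load_target_config({"BASE_URL": "https://staging.example.test/", "AUTH_TOKEN": "abc"})
print(build_request(staging, "/users/1"))
```

Failing fast on a missing variable keeps a misconfigured CI job from reporting hundreds of misleading authentication failures.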

Step 3: Configure the CI Trigger

Once tests exist, configure your CI/CD system to run them automatically. Here is a complete GitHub Actions workflow that:

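- Triggers on pull requests, on pushes to main, and on a 15-minute schedule

- Runs the Shift-Left API test suite

- Reports results as a PR check

- Fails the check on any test or quality gate failure, which blocks the merge once the check is marked as required in your branch protection rules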

```yaml
# .github/workflows/api-tests.yml
name: API Test Automation

on:
  pull_request:
    branches: [main, develop, release/*]
  push:
    branches: [main]
  schedule:
    # Run production monitoring tests every 15 minutes
    - cron: '*/15 * * * *'

env:
  TSL_API_KEY: ${{ secrets.TSL_API_KEY }}
  TSL_PROJECT_ID: ${{ secrets.TSL_PROJECT_ID }}

jobs:
  api-tests:
    name: Run API Tests
    runs-on: ubuntu-latest
    timeout-minutes: 15
    strategy:
      matrix:
        environment: [staging]
    steps:
      - name: Checkout code
        uses: actions/checkout@v4

      - name: Set up Node.js
        uses: actions/setup-node@v4
        with:
          node-version: '20'

      - name: Install TSL CLI
        run: npm install -g @totalshiftleft/cli

      - name: Sync spec from PR branch
        run: |
          tsl spec sync \
            --project-id $TSL_PROJECT_ID \
            --spec-file ./api/openapi.yaml \
            --environment ${{ matrix.environment }}

      - name: Run API test suite
        id: run-tests
        run: |
          tsl run \
            --project-id $TSL_PROJECT_ID \
            --environment ${{ matrix.environment }} \
            --output junit \
            --output-file test-results.xml
        env:
          BASE_URL: ${{ secrets[format('BASE_URL_{0}', matrix.environment)] }}
          AUTH_TOKEN: ${{ secrets[format('AUTH_TOKEN_{0}', matrix.environment)] }}

      - name: Publish test results
        uses: EnricoMi/publish-unit-test-result-action@v2
        if: always()
        with:
          files: test-results.xml
          comment_mode: always
          check_name: API Tests (${{ matrix.environment }})

      - name: Enforce quality gates
        run: |
          tsl gates check \
            --project-id $TSL_PROJECT_ID \
            --pass-rate 100 \
            --max-p95-ms 500 \
            --fail-on-violation
```

For GitLab CI:

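```yaml
# .gitlab-ci.yml
api-tests:
  stage: test
  image: node:20-alpine
  before_script:
    - npm install -g @totalshiftleft/cli
  script:
    # Write a JUnit report so the artifacts section below has a file to collect
    - tsl run --project-id $TSL_PROJECT_ID --environment staging --output junit --output-file test-results.xml
  rules:
    - if: $CI_PIPELINE_SOURCE == 'merge_request_event'
    - if: $CI_COMMIT_BRANCH == 'main'
  artifacts:
    # Upload the report even when the test job fails
    when: always
    reports:
      junit: test-results.xml
```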
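Both pipelines hand results to the CI system as a JUnit XML report. If you want to post-process that file yourself, for example to compute a pass rate for a custom gate, a minimal sketch using only the Python standard library could look like this. The function name is hypothetical, and the sketch assumes the common JUnit layout of `testcase` elements with optional `failure`, `error`, or `skipped` children.

```python
import xml.etree.ElementTree as ET


def summarize_junit(xml_text: str) -> dict[str, int]:
    """Count total, failed, skipped, and passed test cases in a JUnit XML report."""
    root = ET.fromstring(xml_text)
    total = failed = skipped = 0
    # iter() also matches nested suites, so <testsuites> and <testsuite> roots both work.
    for case in root.iter("testcase"):
        total += 1
        if case.find("failure") is not None or case.find("error") is not None:
            failed += 1
        elif case.find("skipped") is not None:
            skipped += 1
    return {"total": total, "failed": failed, "skipped": skipped, "passed": total - failed - skipped}


SAMPLE_REPORT = """
<testsuite name="api" tests="4">
  <testcase name="get user returns 200"/>
  <testcase name="create user rejects empty body"><failure message="expected 400"/></testcase>
  <testcase name="delete user is idempotent"><error message="timeout"/></testcase>
  <testcase name="legacy endpoint"><skipped/></testcase>
</testsuite>
"""
print(summarize_junit(SAMPLE_REPORT))
```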

Step 4: Set Quality Gates

Quality gates transform test results into actionable pipeline decisions. Without gates, tests are advisory — developers see failures but can still merge. With gates, failures block the pipeline.

Recommended quality gate configuration:

```yaml
# tsl-config.yaml
quality_gates:
  # Block on any test failure
  pass_rate:
    threshold: 100
    action: fail_pipeline

  # Block if any endpoint exceeds SLA
  response_time:
    p95_threshold_ms: 500
    p99_threshold_ms: 1000
    action: fail_pipeline

  # Warn (but do not block) if coverage drops
  coverage:
    minimum_endpoint_coverage: 80
    action: warn

  # Block on security test failures
  security:
    auth_bypass_tests: must_pass
    injection_tests: must_pass
    action: fail_pipeline

  # Historical comparison
  regression:
    max_new_failures: 0
    action: fail_pipeline
```

Environment-specific gates: you may want stricter gates on production deployments than on feature branch PRs:

```yaml
environments:
  feature_branch:
    pass_rate: 95 # Allow minor failures on WIP branches
  staging:
    pass_rate: 100
  production:
    pass_rate: 100
    response_time.p95_threshold_ms: 300 # Tighter SLA for production
```
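One way to think about environment-specific gates is as a set of defaults with per-environment overrides. The sketch below models that with plain dictionaries, reusing the thresholds from the configuration above. The names and the merge logic are assumptions for illustration and say nothing about how the configuration file is actually resolved by the tool.

```python
# Defaults taken from the recommended gate configuration above.
DEFAULT_GATES = {"pass_rate": 100, "p95_threshold_ms": 500}

# Only the values that differ from the defaults are listed per environment.
ENVIRONMENT_OVERRIDES = {
    "feature_branch": {"pass_rate": 95},
    "staging": {},
    "production": {"p95_threshold_ms": 300},
}


def gates_for(environment: str) -> dict[str, int]:
    """Merge the defaults with the overrides for one environment."""
    if environment not in ENVIRONMENT_OVERRIDES:
        raise KeyError(f"unknown environment: {environment}")
    return {**DEFAULT_GATES, **ENVIRONMENT_OVERRIDES[environment]}


for name in ENVIRONMENT_OVERRIDES:
    print(name, gates_for(name))
```

Rejecting unknown environment names avoids silently applying default gates to a mistyped target.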

Step 5: Review Results and Analytics

After tests run, results flow into the Shift-Left API analytics dashboard where you can:

- See pass/fail trends across runs and branches

- Identify the slowest endpoints by percentile

- Track test coverage growth over time

- Detect flaky tests (inconsistent pass/fail patterns)

- Compare results between environments (staging vs. production)

The Shift-Left API workflow: spec import → AI test generation → CI/CD execution → quality gates → analytics dashboard.

The analytics layer is where the strategy compounds: over weeks and months, you build a baseline of expected behavior. Regressions — a suddenly slower endpoint, a new failure on an edge case — are immediately visible against that baseline.
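Comparing a run against a baseline can be sketched in a few lines. The example below flags tests that passed in the baseline but fail now, and endpoints that became noticeably slower. The function names, the data shapes, and the 20% tolerance are assumptions chosen for illustration.

```python
def new_failures(baseline: dict[str, bool], current: dict[str, bool]) -> list[str]:
    """Tests that passed in the baseline run but fail in the current run."""
    # Tests missing from the baseline are treated as new tests, not as regressions.
    return sorted(
        name for name, passed in current.items() if not passed and baseline.get(name, False)
    )


def slower_endpoints(
    baseline_ms: dict[str, float], current_ms: dict[str, float], tolerance: float = 0.2
) -> list[str]:
    """Endpoints whose latency grew by more than the tolerance relative to the baseline."""
    return sorted(
        endpoint
        for endpoint, latency in current_ms.items()
        if endpoint in baseline_ms and latency > baseline_ms[endpoint] * (1 + tolerance)
    )


baseline_results = {"get_user": True, "create_user": True, "delete_user": False}
current_results = {"get_user": True, "create_user": False, "delete_user": False, "list_users": False}
print(new_failures(baseline_results, current_results))
print(slower_endpoints({"GET /users": 100.0, "POST /users": 200.0}, {"GET /users": 150.0, "POST /users": 205.0}))
```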

Setting up test result notifications:

```yaml
# tsl-config.yaml notifications section
notifications:
  slack:
    webhook_url: ${{ secrets.SLACK_WEBHOOK }}
    events:
      - test_suite_failed
      - quality_gate_violated
      - new_flaky_test_detected
  email:
    recipients:
      - team@company.com
    events:
      - daily_summary
      - test_suite_failed
```

CI/CD Pipeline Architecture Diagram

```text
┌─────────────────────────────────────────────────────────────────┐
│                   CI/CD API TESTING PIPELINE                    │
└─────────────────────────────────────────────────────────────────┘

  Developer opens Pull Request
             │
             ▼
  ┌─────────────────────┐
  │   GitHub Actions    │
  │  Trigger: PR Event  │
  └──────────┬──────────┘
             │
             ▼
  ┌─────────────────────┐
  │  Sync OpenAPI Spec  │◄──── /api/openapi.yaml (in repo)
  │  to Shift-Left API  │
  └──────────┬──────────┘
             │
             ▼
  ┌─────────────────────┐
  │ AI Test Generation  │
  │  (if spec changed)  │
  └──────────┬──────────┘
             │
             ▼
  ┌─────────────────────────────────────────────┐
  │               Test Execution                │
  │                                             │
  │  ├── Functional Tests (all endpoints)       │
  │  ├── Negative / Error Tests                 │
  │  ├── Auth / Security Tests                  │
  │  ├── Schema Validation Tests                │
  │  └── Performance Baseline Tests             │
  └──────────┬──────────────────────────────────┘
             │
             ▼
  ┌─────────────────────┐
  │ Quality Gate Check  │
  │ ┌─────────────────┐ │
  │ │ Pass Rate: 100% │ │
  │ │ P95 < 500ms     │ │
  │ │ Coverage > 80%  │ │
  │ └─────────────────┘ │
  └──────────┬──────────┘
             │
      ┌──────┴──────┐
      │             │
    PASS          FAIL
      │             │
      ▼             ▼
  ┌───────┐  ┌──────────────┐
  │ Merge │  │ Block Merge  │
  │   ✔   │  │ Notify Team  │
  └───┬───┘  └──────────────┘
      │
      ▼
  ┌─────────────────────┐
  │  Deploy to Staging  │
  └──────────┬──────────┘
             │
             ▼
  ┌─────────────────────┐
  │ Analytics Dashboard │
  │  Trend / Coverage   │
  └─────────────────────┘
```

Tools Comparison

| Tool | OpenAPI Import | Auto Test Gen | GitHub Actions | Quality Gates | Analytics | No-Code | CI Native |

|---|---|---|---|---|---|---|---|

| Shift-Left API | Yes | Yes (AI) | Yes | Yes | Yes | Yes | Yes |

| Postman / Newman | Yes | No | Yes | Partial | Partial | No | Yes |

| REST Assured | No | No | Yes | Manual | No | No | Yes |

| Karate DSL | Partial | No | Yes | Manual | No | No | Yes |

| Dredd | Yes | Partial | Yes | No | No | No | Yes |

| Swagger Inspector | Yes | No | No | No | No | Partial | No |

| Katalon | Yes | Partial | Yes | Yes | Yes | Partial | Yes |

| SoapUI/ReadyAPI | Yes | Partial | Yes | Yes | Partial | No | Yes |

Shift-Left API is the only tool in this list that combines all seven capabilities without requiring test code, making it a natural choice for teams that want automated API testing in CI/CD without dedicated test engineering resources.

Common Challenges and Solutions

Challenge 1: Spec and Code Are Out of Sync

Problem: Developers update the code but forget to update the OpenAPI spec. Tests start failing for the wrong reason — they are testing against an outdated contract.

Solution: Enforce spec-first development. Use a pre-commit hook that validates the spec against the actual API response on localhost before allowing a commit. Shift-Left API can alert when a spec change creates test failures, surfacing the drift immediately.

Challenge 2: Environment Stability

Problem: Tests fail because the staging environment is down or has bad data, not because the code is broken.

Solution: Use Shift-Left API's mock server for most tests. Reserve live-environment tests for a small smoke test suite. Separate environment health checks from test results in your pipeline.

Challenge 3: Authentication Setup

Problem: Tests cannot authenticate against the API in CI because secrets are hard to manage.

Solution: Store credentials as CI secrets (GitHub Actions Secrets, GitLab CI Variables, etc.) and inject them as environment variables at runtime. Shift-Left API supports per-environment credential configuration.

Challenge 4: Long Test Run Times

Problem: As the API grows, the test suite takes 10+ minutes to run, slowing down PR feedback.

Solution: Parallelize test execution across multiple runners. Shift-Left API supports test sharding — splitting the suite across runners and aggregating results. Prioritize fast-running tests for PR checks and run the full suite only on merges to main.
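The core of sharding is a deterministic rule that every runner applies independently to the same list of tests. The sketch below uses sorted round-robin assignment. The function name is hypothetical and this is not a description of how Shift-Left API shards internally.

```python
def select_shard(test_ids: list[str], shard_index: int, total_shards: int) -> list[str]:
    """Return the tests one runner should execute, given its zero-based shard index."""
    if total_shards < 1 or not 0 <= shard_index < total_shards:
        raise ValueError("shard_index must satisfy 0 <= shard_index < total_shards")
    # Sorting first means every runner sees the same order regardless of discovery order.
    return sorted(test_ids)[shard_index::total_shards]


all_tests = ["t5", "t1", "t3", "t2", "t4"]
for index in range(2):
    print(index, select_shard(all_tests, index, 2))
```

Because the assignment depends only on the sorted test list and the shard index, no coordination between runners is needed, and every test lands in exactly one shard.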

Challenge 5: Flaky Tests

Problem: Some tests pass intermittently, causing noise and reducing trust in the test suite.

Solution: Shift-Left API's analytics dashboard flags tests that have inconsistent pass/fail patterns. Investigate and fix flaky tests as priority bugs. A flaky test is either exposing a real intermittent issue in the API or is testing something non-deterministic that needs to be mocked.
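A simple heuristic for "inconsistent pass/fail patterns" is to count how often a test's outcome flips between consecutive runs. The sketch below is one possible approach, not the dashboard's actual algorithm. The function names and the threshold of two flips are assumptions. A single flip is treated as a regression or a fix rather than flakiness.

```python
def count_flips(outcomes: list[bool]) -> int:
    """Number of times the outcome changes between consecutive runs."""
    return sum(1 for previous, current in zip(outcomes, outcomes[1:]) if previous != current)


def find_flaky_tests(history: dict[str, list[bool]], min_flips: int = 2) -> list[str]:
    """Names of tests whose outcome flipped at least min_flips times, in alphabetical order."""
    return sorted(name for name, outcomes in history.items() if count_flips(outcomes) >= min_flips)


run_history = {
    "get_user": [True, True, True, True, True],         # stable
    "create_user": [True, True, False, False, False],   # one flip: a regression
    "search_users": [True, False, True, True, False],   # three flips: flaky
}
print(find_flaky_tests(run_history))
```

The heuristic is most meaningful when the runs compared were made against the same code revision, since a genuine code change can also flip an outcome.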

Best Practices

- Trigger on every PR, not just on merge: catch failures before they are integrated, not after

- Keep tests independent: each test should set up its own state and clean up after itself — no test should depend on another test's execution order

- Use test data factories: generate realistic test data from spec schemas rather than hard-coding values that can become stale

- Test in the environment closest to production: staging environments that mirror production configuration catch more real issues than sanitized dev environments

- Set strict quality gates from day one: it is much harder to introduce quality gates into a project with existing failures than to start with them

- Treat test failures as production incidents: when a CI test fails, someone owns that failure and fixes it before the next deployment

- Monitor production with scheduled test runs: run a read-only subset of your test suite against production every 15-30 minutes to catch environment issues early

- Version your test suites: store test configuration in version control alongside the API spec so test changes are reviewed and auditable. Follow REST API testing best practices for comprehensive test design

- Select the right tools for your pipeline: compare options in our guide to the top API testing tools in 2026

- Review analytics weekly: spend 15 minutes each week reviewing coverage gaps, slow endpoints, and flakiness trends — this surfaces technical debt before it becomes a crisis

- Adopt a comprehensive DevOps testing approach: API testing in CI/CD is one component of a broader DevOps testing strategy that covers all quality dimensions
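The test data factory practice from the list above can be sketched as a small recursive generator over a schema. This is a simplified illustration covering only a few JSON Schema keywords. The function name and the default string length of 8 are assumptions. Passing a seeded random generator keeps the data reproducible between runs.

```python
import random
import string


def build_example(schema: dict, rng: random.Random):
    """Generate one value that satisfies a small subset of JSON Schema keywords."""
    if "enum" in schema:
        return rng.choice(schema["enum"])
    kind = schema.get("type")
    if kind == "object":
        return {name: build_example(sub, rng) for name, sub in schema.get("properties", {}).items()}
    if kind == "array":
        return [build_example(schema.get("items", {}), rng)]
    if kind == "integer":
        return rng.randint(schema.get("minimum", 0), schema.get("maximum", 1000))
    if kind == "boolean":
        return rng.choice([True, False])
    if kind == "string":
        length = max(schema.get("minLength", 0), 8)
        length = min(length, schema.get("maxLength", length))
        return "".join(rng.choice(string.ascii_lowercase) for _ in range(length))
    return None  # unsupported or missing type


user_schema = {
    "type": "object",
    "properties": {
        "id": {"type": "integer", "minimum": 1, "maximum": 10},
        "role": {"enum": ["admin", "viewer"]},
        "tags": {"type": "array", "items": {"type": "string", "maxLength": 4}},
    },
}
print(build_example(user_schema, random.Random(7)))
```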

CI/CD API Testing Checklist

Pipeline Configuration

- ✔ CI triggers are set for pull requests and main branch pushes

- ✔ Test execution step is added to the pipeline before deployment steps

- ✔ Quality gates are configured and enforced (not just advisory)

- ✔ Test results are published as PR check status

Spec Management

- ✔ OpenAPI spec is stored in version control alongside the application code

- ✔ Spec is validated on every commit (linting, completeness checks)

- ✔ Spec is automatically synced to Shift-Left API on push

Test Coverage

- ✔ All endpoints have at least one happy-path test

- ✔ All endpoints have at least one negative test

- ✔ Authentication and authorization flows are tested

- ✔ Response schema validation is enabled for all tests

Quality Gates

- ✔ Pass rate gate is set to 100% for staging and production

- ✔ Response time SLA gate is configured per endpoint SLA

- ✔ New test regressions are automatically flagged

Reporting and Monitoring

- ✔ Slack or email notifications are configured for failures

- ✔ Analytics dashboard is reviewed on a weekly schedule

- ✔ Scheduled production monitoring tests are running

Frequently Asked Questions

How do you automate API testing in a CI/CD pipeline?

Automate API testing in CI/CD by: 1) importing your OpenAPI spec into a test automation tool, 2) auto-generating test cases, 3) configuring a CI trigger to run tests on every pull request, 4) setting quality gates that block merges when tests fail, and 5) publishing results to a dashboard for trend analysis.

What is the best tool to automate API testing in CI/CD?

Shift-Left API is purpose-built for CI/CD API test automation — it imports OpenAPI specs, generates tests automatically using AI, runs in GitHub Actions and other CI systems natively, and provides quality gates and analytics without writing any code.

Can you automate API testing without writing test code?

Yes. No-code platforms like Shift-Left API generate complete API test suites from OpenAPI/Swagger specs automatically. Teams connect their spec, configure quality gates, and add the CI step — no test scripting required.

How do quality gates work in API test automation?

Quality gates are automated pass/fail thresholds applied to test results in CI/CD. Common gates include: 100% of tests must pass, response time must be under a defined SLA, and test coverage must meet a minimum percentage. When a gate fails, the pipeline stops and the change cannot be merged or deployed.

Conclusion

Automating API testing in CI/CD is not a future best practice — it is the current standard for teams serious about reliability and velocity. The five-step workflow described in this guide — import spec, generate tests, configure CI trigger, set quality gates, review analytics — can be implemented in an afternoon with the right tooling.

The compounding return is significant. On day one, you have basic test coverage and a working CI gate. After three months, you have a performance baseline, a history of regressions caught before production, and a coverage heatmap that shows exactly where your gaps are. After a year, you have a system that has protected hundreds of deployments automatically.

Shift-Left API removes the biggest barrier to adoption — the need to write and maintain test code. By generating tests from your OpenAPI spec, it keeps your test suite current with zero maintenance overhead, freeing your team to focus on building rather than testing.

The question is not whether to automate API testing in your pipelines. The question is how long you can afford to wait.
