Why Engineering Teams Can't Rely on Post-Deployment Testing Anymore (2026 Guide)

Post-deployment testing is the practice of validating software after it has been merged and deployed to a staging or production-like environment — typically by a QA team running manual checks, scripted regression suites, or exploratory sessions. It was the dominant quality model from roughly 2005 to 2018 and still runs in most enterprises. In API-driven products built on hundreds of microservices and shipping daily, **this model is structurally broken**. The feedback arrives too late, the defects cost too much, and the cadence does not match the delivery tempo engineering organizations are now expected to sustain.

The data is stark. The Capgemini World Quality Report 2024-2025 and DORA's State of DevOps research point the same way. In DORA's 2018 figures, elite performers deploy 46x more frequently than low performers with 7x lower change-failure rates, and DORA ties that performance to automated verification before merge rather than sign-off after deploy. IBM and NIST research is widely cited as showing that defects caught in production cost 30-100x more than defects caught at commit. In 2026, clinging to post-deployment testing as the primary quality gate is no longer conservative; it is the riskier choice.

Table of Contents

- Introduction

- What Is Post-Deployment Testing?

- Why This Matters Now for Engineering Teams

- Key Components of a Post-Deployment-to-Shift-Left Transition

- Reference Architecture

- Tools and Platforms

- Real-World Example

- Common Challenges

- Best Practices

- Implementation Checklist

- FAQ

- Conclusion

Introduction

For two decades, quality in enterprise software meant a QA team catching defects after development was "done." Code merged on Friday; QA validated on Monday; bugs returned to developers on Wednesday; a release shipped the following week. That rhythm worked when products were monolithic, releases were quarterly, and APIs were internal plumbing rather than the product itself.

None of those conditions hold in 2026. A mid-sized SaaS now operates 200-500 internal APIs, ships daily or multiple times per day, and depends on third-party integrations it does not control. A defect caught four days after merge is not a manageable quality issue — it is an incident. Engineering teams are realizing that post-deployment testing cannot be the primary quality gate for API-driven software, and are moving validation into the pull request.

This guide explains what post-deployment testing is, why it fails under current conditions, and what a transition to shift-left validation looks like. For the broader context, see the rising importance of shift-left API testing, the shift-left testing framework, and the shift-left AI-first API testing platform reference. Fundamentals are covered in the API Learning Center.

What Is Post-Deployment Testing?

Post-deployment testing is any quality validation that happens after code is merged and deployed to a shared environment. It sits in the downstream portion of the software delivery lifecycle and typically includes four activities:

- Manual QA sign-off: a QA engineer executes test plans against a staging environment after the developer has declared work "done."

- Scripted regression suites: collections of Postman requests, Selenium scripts, or similar artifacts run on a nightly schedule against staging or a pre-prod environment.

- Exploratory testing: experienced testers use the application unscripted to find issues not covered by automation.

- User acceptance testing: business stakeholders validate features against requirements before production release.

None of these are inherently wrong. The problem is not the activities — it is using them as the primary quality gate for API-driven software. When post-deployment testing is the primary gate, feedback latency between a developer writing a bug and learning about it routinely exceeds 24 hours. That latency is the root cause of nearly every dysfunction in modern QA.

Shift-left inverts this model. Quality moves into the pull request: automated contract testing, validation checks, and AI-generated positive, negative, and boundary tests run on every commit, block merges on failure, and give developers feedback in minutes. Post-deployment testing does not disappear — it shrinks to production smoke tests, synthetic monitoring, and canary analysis.

Why This Matters Now for Engineering Teams

Microservice sprawl has outpaced manual test capacity

A SaaS with 300 APIs and a modest 20 tests per API carries 6,000 test cases. At 30 minutes per case to author and 10 minutes per case per month to maintain, that is 3,000 hours of authoring up front and about 1,000 hours of maintenance every month: roughly six full-time engineers (at about 160 working hours each) doing nothing but test upkeep. Post-deployment models assume a small number of testable surfaces and do not survive microservice fan-out. See best Postman alternatives.
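The arithmetic behind that estimate is easy to rerun with your own numbers. The sketch below uses the figures quoted above (300 APIs, 20 tests each, 30 minutes to author, 10 minutes per month to maintain); the 160 working hours per engineer per month is an assumption of this example, not a figure from any report, and with it the maintenance load lands at roughly six engineers.

```python
# Back-of-the-envelope model of test upkeep. All inputs are illustrative.
HOURS_PER_ENGINEER_MONTH = 160  # assumption: about 40 h/week for 4 weeks


def suite_upkeep(apis: int, tests_per_api: int,
                 author_min: float, maintain_min_per_month: float) -> dict:
    """Return test count, one-off authoring hours, and monthly upkeep."""
    tests = apis * tests_per_api
    monthly_hours = tests * maintain_min_per_month / 60
    return {
        "tests": tests,
        "authoring_hours": tests * author_min / 60,
        "monthly_maintenance_hours": monthly_hours,
        "engineers_for_maintenance": monthly_hours / HOURS_PER_ENGINEER_MONTH,
    }


estimate = suite_upkeep(apis=300, tests_per_api=20,
                        author_min=30, maintain_min_per_month=10)
print(estimate)
```

Changing the hours-per-engineer assumption moves the headcount, but not the shape of the problem: upkeep scales linearly with the number of APIs.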

Release cadence has compressed past QA sign-off windows

Weekly releases were the leading edge in 2019. Daily and multi-daily deploys are the 2026 norm for elite performers (DORA). A two-day post-deployment sign-off cycle either blocks those releases or gets skipped — and when it gets skipped, the model quietly collapses. Wiring patterns: API test automation with CI/CD.

Schema drift is a dominant incident driver

When a backend service adds a required field or changes a response type, consumer services break silently. Without automated contract enforcement at PR time, the first signal is a production error. Post-deployment regression suites rarely cover the specific consumer paths that drift exposes. See API schema validation: catching drift.
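To make the drift scenario concrete, here is a minimal sketch of a PR-time check that compares two versions of a JSON-Schema-style object and lists the changes described above (a newly required field, a changed type, a removed field). The function name and the sample schemas are hypothetical, and a real checker would also need to handle nesting, `$ref`, enums, and formats.

```python
def breaking_changes(old: dict, new: dict) -> list[str]:
    """Compare two JSON-Schema-style object schemas; list breaking changes."""
    problems = []
    old_props = old.get("properties", {})
    new_props = new.get("properties", {})

    # A newly required field breaks callers that do not send it yet.
    newly_required = set(new.get("required", [])) - set(old.get("required", []))
    for name in sorted(newly_required):
        problems.append(f"field '{name}' became required")

    # Removed fields and changed types break consumers that read them.
    for name, spec in sorted(old_props.items()):
        if name not in new_props:
            problems.append(f"field '{name}' was removed")
        elif spec.get("type") != new_props[name].get("type"):
            problems.append(
                f"field '{name}' changed type: "
                f"{spec.get('type')} -> {new_props[name].get('type')}"
            )
    return problems


old_schema = {
    "type": "object",
    "required": ["id"],
    "properties": {"id": {"type": "integer"}, "total": {"type": "number"}},
}
new_schema = {
    "type": "object",
    "required": ["id", "currency"],
    "properties": {
        "id": {"type": "string"},
        "total": {"type": "number"},
        "currency": {"type": "string"},
    },
}
drift = breaking_changes(old_schema, new_schema)
print(drift)
```

A CI job could fail the PR whenever this list is non-empty, which moves the first signal from a production error to the pull request.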

Staging environments drift from production

Post-deployment testing presumes staging mirrors production. In practice, data volumes differ, integration configurations differ, third-party stubs differ, and traffic patterns differ. Defects that pass staging regularly surface in production. This is the "works on staging" problem, and it structurally undermines the late-gate model.

Defect economics have moved decisively against late validation

IBM Systems Sciences Institute, NIST, and Capgemini World Quality Report research all converge on the same curve: defects caught post-deploy cost 10-100x more than defects caught pre-merge. When deploy frequency increases, the absolute defect count increases too — so the cost of the old model grows non-linearly with release velocity.

Developer context loss destroys debugging efficiency

A defect caught 20 minutes after a PR is filed takes 2 minutes to fix. The same defect caught 48 hours later, after the developer has shipped three more PRs, takes 40 minutes. Multiply across a team and post-deployment detection silently consumes 20-30% of engineering capacity. Deeper: AI test maintenance.

Key Components of a Post-Deployment-to-Shift-Left Transition

Pull-request-level automated API testing

Every PR runs an automated API test suite against the changed services. Tests are generated from OpenAPI specifications, cover positive, negative, and boundary cases, and fail the build on breaking changes. This is the single highest-leverage component.
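As a rough illustration of what "positive, negative, and boundary cases" means for a single parameter, the sketch below derives six values from an integer schema with `minimum` and `maximum`. It is a toy under stated assumptions (the `limit` parameter and both helper functions are hypothetical) and does not represent how any particular platform generates tests.

```python
def generate_cases(name: str, schema: dict) -> list[dict]:
    """Derive positive, boundary, and negative values for one integer param."""
    lo, hi = schema["minimum"], schema["maximum"]
    cases = [
        {"kind": "positive", "value": (lo + hi) // 2, "expect_ok": True},
        {"kind": "boundary", "value": lo, "expect_ok": True},
        {"kind": "boundary", "value": hi, "expect_ok": True},
        {"kind": "negative", "value": lo - 1, "expect_ok": False},
        {"kind": "negative", "value": hi + 1, "expect_ok": False},
        {"kind": "negative", "value": "not-a-number", "expect_ok": False},
    ]
    return [{"param": name, **case} for case in cases]


def is_valid(value, schema: dict) -> bool:
    """Reference validator used to sanity-check the generated expectations."""
    return (
        isinstance(value, int)
        and not isinstance(value, bool)
        and schema["minimum"] <= value <= schema["maximum"]
    )


limit_schema = {"type": "integer", "minimum": 1, "maximum": 100}
cases = generate_cases("limit", limit_schema)
for case in cases:
    print(case)
```

Each generated case would become one request against the changed service, with `expect_ok` deciding whether a 2xx or a 4xx response counts as a pass.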

Contract testing between producer and consumer services

Formal contract definitions — expressed in OpenAPI or Pact — are enforced at PR time. Producer changes that break consumer contracts fail the producer's PR, not the consumer's next deploy. See the /learn/testing/contract-testing lesson for how this works in practice.
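The idea of failing the producer's PR can be sketched without any framework. In the example below, each consumer records only the response fields it reads, and the check reports which consumers a proposed producer response would break. This is plain Python for illustration, not the Pact API; the service names and fields are made up.

```python
# Each consumer records only the response fields it actually reads.
CONSUMER_EXPECTATIONS = {
    "billing-service": {"id": "string", "status": "string"},
    "email-service": {"id": "string", "customer_email": "string"},
}


def broken_consumers(producer_fields: dict, expectations: dict) -> dict:
    """Map each consumer to the fields the proposed response fails to satisfy."""
    failures = {}
    for consumer, needed in expectations.items():
        unmet = sorted(
            field for field, type_name in needed.items()
            if producer_fields.get(field) != type_name
        )
        if unmet:
            failures[consumer] = unmet
    return failures


# Proposed producer change: customer_email was renamed to email.
proposed = {"id": "string", "status": "string", "email": "string"}
result = broken_consumers(proposed, CONSUMER_EXPECTATIONS)
print(result)
```

Because the check runs in the producer's pipeline, the rename is rejected before any consumer deploys against it.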

AI-driven test generation and maintenance

Hand-authored test suites cannot keep up with hundreds of APIs evolving continuously. AI-first platforms — see AI-driven API test generation and AI-assisted negative testing — generate baseline suites from specs and self-heal on changes. This is what makes shift-left economics work at scale.

Authentication and environment isolation in CI

Real APIs require real auth. Shift-left platforms need first-class JWT, OAuth2 client credentials, and token refresh support, plus environment isolation so PR test runs do not collide. Without this, teams revert to running in staging — which is post-deployment testing again.
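Token refresh is the part that most often goes wrong in CI, so a small sketch may help. The class below caches an access token and refreshes it shortly before expiry. The `fetch` callable is a hypothetical stand-in for whatever performs the OAuth2 client-credentials request; the payload shape (`access_token`, `expires_in`) follows the standard OAuth2 token response, and the 30-second skew is an assumption.

```python
import time
from typing import Callable


class TokenCache:
    """Cache an access token and refresh it shortly before it expires."""

    def __init__(self, fetch: Callable[[], dict],
                 clock: Callable[[], float] = time.monotonic,
                 skew_seconds: float = 30.0):
        self._fetch = fetch        # hypothetical: does the token request
        self._clock = clock        # injectable so tests can control time
        self._skew = skew_seconds  # refresh early to avoid mid-request expiry
        self._token = None
        self._expires_at = 0.0

    def get(self) -> str:
        expired = self._clock() >= self._expires_at - self._skew
        if self._token is None or expired:
            payload = self._fetch()  # {"access_token": ..., "expires_in": ...}
            self._token = payload["access_token"]
            self._expires_at = self._clock() + payload["expires_in"]
        return self._token


# Usage with a fake fetcher and a fake clock (no network involved).
now = [0.0]
calls = []


def fake_fetch() -> dict:
    calls.append(1)
    return {"access_token": f"tok-{len(calls)}", "expires_in": 300}


cache = TokenCache(fake_fetch, clock=lambda: now[0])
first = cache.get()   # fetches tok-1
second = cache.get()  # served from cache
now[0] = 271.0        # inside the 30 s skew window before expiry at 300 s
third = cache.get()   # refreshes to tok-2
```

Injecting the clock and the fetcher keeps the cache testable in isolation, which matters when many parallel PR runs share the same credentials.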

Parallel, sharded test execution

A PR that takes 40 minutes to validate will not be tolerated. Sharded parallel execution compresses suites from 40 minutes sequential to 3-5 minutes on a PR. This is a non-negotiable component — not an optimization. Related: features/test-execution.
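One common way to shard is greedy longest-first assignment: sort tests by historical duration and always place the next test on the lightest shard. The sketch below assumes per-test durations are available from earlier runs; the test names and the 10-second duration are invented so that the sequential total is 40 minutes.

```python
import heapq


def shard_tests(durations: dict[str, float], shard_count: int) -> list[list[str]]:
    """Assign tests to shards, longest first, always filling the lightest shard."""
    heap = [(0.0, i) for i in range(shard_count)]  # (total seconds, shard index)
    shards: list[list[str]] = [[] for _ in range(shard_count)]
    ordered = sorted(durations.items(), key=lambda kv: (-kv[1], kv[0]))
    for test, seconds in ordered:
        load, idx = heapq.heappop(heap)
        shards[idx].append(test)
        heapq.heappush(heap, (load + seconds, idx))
    return shards


def wall_clock(durations: dict[str, float], shards: list[list[str]]) -> float:
    """Wall-clock time is the duration of the slowest shard."""
    return max(sum(durations[t] for t in shard) for shard in shards)


# 240 tests at 10 s each: 2,400 s (40 minutes) when run sequentially.
durations = {f"test_{i:03d}": 10.0 for i in range(240)}
shards = shard_tests(durations, shard_count=10)
print(wall_clock(durations, shards) / 60, "minutes")
```

With ten shards the same suite finishes in four minutes of wall-clock time, before counting per-shard startup overhead, which this sketch ignores.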

Observability and failure triage UX

Developers will abandon any test system that reports failures poorly. Clear request/response diffs, one-click local reproduction, flakiness scores, and readable assertions are what determine adoption. See features/analytics-monitoring.
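A readable diff is mostly a matter of naming the exact path that differs. The following sketch walks two JSON-like values and emits one line per difference; the path notation (`$.items[0].qty`) and the sample payloads are choices made for this example rather than any tool's output format.

```python
def diff_json(expected, actual, path: str = "$") -> list[str]:
    """Return one readable line per difference between two JSON-like values."""
    if isinstance(expected, dict) and isinstance(actual, dict):
        lines = []
        for key in sorted(set(expected) | set(actual)):
            child = f"{path}.{key}"
            if key not in actual:
                lines.append(f"{child}: missing (expected {expected[key]!r})")
            elif key not in expected:
                lines.append(f"{child}: unexpected {actual[key]!r}")
            else:
                lines.extend(diff_json(expected[key], actual[key], child))
        return lines
    same_length_lists = (
        isinstance(expected, list) and isinstance(actual, list)
        and len(expected) == len(actual)
    )
    if same_length_lists:
        lines = []
        for i, (exp_item, act_item) in enumerate(zip(expected, actual)):
            lines.extend(diff_json(exp_item, act_item, f"{path}[{i}]"))
        return lines
    if expected == actual:
        return []
    return [f"{path}: expected {expected!r}, got {actual!r}"]


expected_body = {"id": 7, "status": "paid", "items": [{"sku": "A1", "qty": 2}]}
actual_body = {"id": 7, "status": "pending",
               "items": [{"sku": "A1", "qty": "2"}], "debug": True}
report = diff_json(expected_body, actual_body)
for line in report:
    print(line)
```

Three short lines tell the developer which field changed and how, which is usually enough to fix the defect without opening the raw response.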

Production smoke and synthetic monitoring

Post-deployment does not vanish — it narrows. After shifting left, a thin layer of production smoke tests, synthetic monitoring, and canary analysis handles environment-specific and emergent issues. This is the correct role for late-stage validation.

QA role redefinition

Shift-left does not eliminate QA; it upgrades it. Engineers redirect from script maintenance toward test strategy, risk modeling, exploratory testing, compliance validation, and quality platform ownership. Teams that skip this redefinition stall culturally even when the tooling lands.

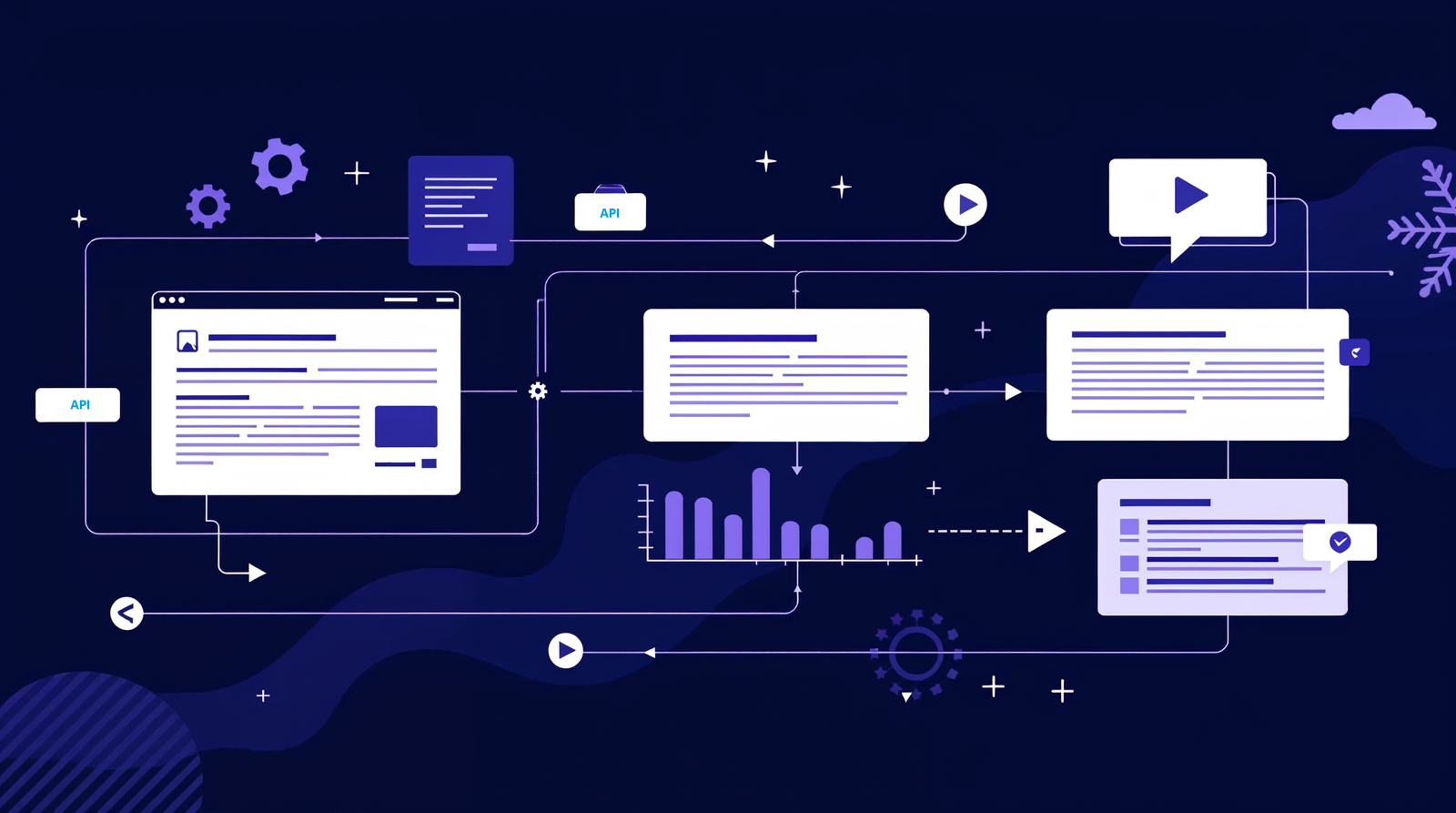

Reference Architecture

A mature shift-left validation pipeline for API-driven software operates across five layers, each replacing a specific function of the old post-deployment model.

At the source layer sit the artifacts of truth: the OpenAPI specification committed with each service, the running endpoint, and authentication configuration. A commit, merge request, or spec change triggers the pipeline. This layer replaces the old "developer throws work over the wall to QA" handoff.

The generation and maintenance layer consumes specs and produces test cases. AI-first platforms generate positive, negative, and boundary suites automatically and version them against the spec hash. When specs change, this layer computes a diff, updates affected tests (self-healing), and flags breaking changes. See the platform overview for how this is implemented end to end.
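Versioning tests against a spec hash can be approximated per operation: hash each method-and-path entry, then compare hashes between the old and new spec to find which tests need regeneration. The sketch below is a simplification that treats every key under a path as an HTTP method (real OpenAPI path items can also carry shared `parameters` and other keys), and the function names are hypothetical.

```python
import hashlib
import json


def operation_hashes(spec: dict) -> dict[str, str]:
    """Hash each operation (method + path) of an OpenAPI-style 'paths' object."""
    hashes = {}
    for path, methods in spec.get("paths", {}).items():
        for method, operation in methods.items():
            # Canonical JSON so key order does not change the hash.
            canonical = json.dumps(operation, sort_keys=True, separators=(",", ":"))
            digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
            hashes[f"{method.upper()} {path}"] = digest
    return hashes


def changed_operations(old_spec: dict, new_spec: dict) -> dict[str, list[str]]:
    """Classify operations as added, removed, or modified between two specs."""
    old, new = operation_hashes(old_spec), operation_hashes(new_spec)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "modified": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


ok = {"responses": {"200": {"description": "ok"}}}
old_spec = {"paths": {
    "/orders": {"get": ok},
    "/orders/{id}": {"get": ok,
                     "delete": {"responses": {"204": {"description": "deleted"}}}},
}}
new_spec = {"paths": {
    "/orders": {
        "get": {"parameters": [{"name": "limit", "in": "query"}], **ok},
        "post": {"responses": {"201": {"description": "created"}}},
    },
    "/orders/{id}": {"get": ok},
}}
changes = changed_operations(old_spec, new_spec)
print(changes)
```

Only tests tied to added or modified operations need regeneration, and removed operations are candidates for a breaking-change flag.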

The execution layer runs tests inside the CI pipeline on every PR. It resolves authentication, isolates environments, shards tests for parallelism, and enforces pass/fail gates that block merges. This layer replaces the old nightly regression run against staging.

The feedback layer surfaces failures where developers already work: PR annotations, request/response diffs, Slack or Teams escalations, and historical trend dashboards. The old post-deployment model deferred feedback to ticket queues; this layer collapses it to minutes.

Cross-cutting is the governance layer: RBAC, audit logging, secrets management, compliance controls, and environment configuration. This is the enterprise plumbing that distinguishes a serious platform from hobbyist scripting. For a deeper treatment, see the shift-left AI-first platform architecture section.

Tools and Platforms

| Platform | Model | Best For | Post-Deployment Replacement |
|---|---|---|---|
| Total Shift Left | AI-First Shift-Left Platform | End-to-end spec-to-PR automation | Full: replaces staging regression and QA sign-off for APIs |
| Postman | Collection-Based | Exploratory + manual API debugging | Partial: useful for exploration, weak for CI/CD gates |
| ReadyAPI (SmartBear) | Scripted Enterprise Automation | Legacy SOAP + REST with load testing | Partial: supports CI but script-maintenance heavy |
| Apidog | Design + Test Hybrid | Small-to-mid teams standardizing on spec-first | Partial: good design integration, limited AI generation |
| Karate | Open-Source DSL | Engineer-written Gherkin-style tests | Partial: code-heavy, strong for engineering teams |
| REST Assured | Java Library | Java teams embedding tests in code | Partial: good unit-level, limited at platform scope |
| Schemathesis | Property-Based OSS | Engineers wanting OpenAPI-driven fuzzing | Partial: excellent coverage, no maintenance layer |
| Pact | Consumer-Driven Contract Testing | Microservice contract enforcement | Full for contracts: pairs with generation platforms |
| Pact Broker + CI | Contract Orchestration | Multi-service contract governance | Full for contract governance |

For detailed vendor comparisons, see /compare, best API test automation tools compared, and the learning center entries ReadyAPI vs Shift Left, Apidog vs Shift Left, and best AI API testing tools 2026. For a live walkthrough, open demo.totalshiftleft.ai.

The category split is clear: tools designed for humans to operate (Postman, ReadyAPI) versus tools designed to operate autonomously inside CI/CD (AI-first shift-left platforms, contract frameworks). The former cannot replace post-deployment testing; the latter can.

Real-World Example

Problem: A 140-engineer B2B SaaS company operated 180 internal APIs with a 9-person QA team owning a nightly Selenium + Postman regression suite against staging. Releases shipped weekly on Thursdays with a 36-hour QA sign-off window. Over the prior two quarters the team logged 14 P1 incidents, 9 of which were traced to API contract changes that passed nightly regression but broke in production. Developer survey data showed 71% of engineers felt "not confident" deploying on Friday. QA sign-off routinely slipped, pushing releases to the following Monday or later.

Solution: Leadership committed to a 16-week transition off post-deployment as the primary gate.

- Weeks 1-4: audit of all 180 APIs; Spectral linting gated in PRs; spec quality raised to 92% linter-clean.

- Weeks 5-10: AI-first platform ingested specs for the 30 highest-traffic APIs and generated baseline suites; GitHub Actions wired to run suites on every PR with merge-blocking gates; authentication centralized in the platform vault.

- Weeks 11-16: remaining 150 APIs onboarded; nightly Selenium/Postman regression deprecated for API scope; production smoke tests and synthetic monitoring retained.

The QA team was redirected to exploratory testing, risk modeling, and compliance validation for payment endpoints.

Results: Mean time from "endpoint changed" to "change validated" dropped from 22 hours to 6 minutes. P1 incidents traced to API contract changes fell from 9 across the two prior quarters (about 4.5 per quarter) to 1 per quarter. Release cadence moved from weekly to three times weekly within three quarters, with change-failure rate dropping from 18% to 4%. The share of developers confident deploying on Friday rose from 29% to 84%. QA headcount held steady, but 70% of capacity was redirected from maintenance to strategic work. For more transition patterns, see how to migrate from Postman to spec-driven testing.

Common Challenges

"We have too much legacy regression investment to walk away from"

Teams with thousands of Selenium/Postman artifacts see migration as a multi-year effort. Solution: Run both models in parallel during transition. Freeze legacy post-deployment suites (no new additions). Apply AI-first generation to all new endpoints from day one. Migrate legacy coverage opportunistically when suites require maintenance anyway. Within 2-3 quarters, legacy coverage shrinks below critical mass and can be deprecated cleanly.

"Our QA team will push back on losing scope"

QA organizations built around manual sign-off cycles perceive shift-left as headcount risk. Solution: Lead with role redefinition, not tooling. Publish the new QA charter — test strategy, risk modeling, exploratory depth, compliance ownership, platform engineering — before platform rollout. Teams that frame this correctly see QA engagement increase, not decrease.

"Staging environments are too complex to replicate in CI"

Shift-left presumes tests run against reliably provisioned environments in CI, which teams with complex staging fear is impossible. Solution: Use service virtualization, ephemeral per-PR environments, and mocked downstreams for the majority of tests. Reserve true integrated staging runs for a narrow smoke-test tier after merge. Most API correctness questions do not require full staging.

"AI-generated tests will produce false positives"

Engineers who have not seen modern generation assume AI output is shallow or noisy. Solution: Pilot with one team and 10-20 high-quality specs. Engineers reviewing output alongside the spec consistently find coverage superior to what they would have hand-written. Credibility builds quickly once the first team ships with the new gates. See AI-assisted negative testing.

"Authentication across our services is too complex for CI"

Enterprise auth — mTLS, custom headers, nested token exchanges, rotation — is where many shift-left attempts stall. Solution: Evaluate platform auth capability against your hardest auth flow during procurement, not the easiest. Centralize secrets in the platform vault and integrate with AWS Secrets Manager, HashiCorp Vault, or Azure Key Vault rather than CI environment variables.

"Our PR feedback times will become unacceptable"

Teams fear that running thousands of tests on every PR will create 30-minute pipelines. Solution: Require sharded parallel execution from day one. Use smart test selection (changed-service-aware) on feature branches; run the full suite only on main merges and nightly. Well-configured pipelines return PR feedback in 3-5 minutes regardless of total suite size.
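Changed-service-aware selection can be sketched in a few lines: map the files touched by a PR to services, then add every service that consumes them, directly or transitively. The `services/<name>/...` repository layout and the dependency map below are assumptions made for this example.

```python
from pathlib import PurePosixPath

# Hypothetical dependency map: service -> services that consume it.
CONSUMERS = {
    "orders": ["billing", "email"],
    "billing": ["reports"],
    "email": [],
    "reports": [],
}


def changed_services(changed_files: list[str]) -> set[str]:
    """Assume a monorepo layout of services/<name>/... (illustrative only)."""
    found = set()
    for file_path in changed_files:
        parts = PurePosixPath(file_path).parts
        if len(parts) >= 2 and parts[0] == "services":
            found.add(parts[1])
    return found


def services_to_test(changed: set[str], consumers: dict[str, list[str]]) -> list[str]:
    """Include every changed service plus its transitive consumers."""
    selected: set[str] = set()
    stack = list(changed)
    while stack:
        service = stack.pop()
        if service not in selected:
            selected.add(service)
            stack.extend(consumers.get(service, []))
    return sorted(selected)


pr_files = ["services/billing/api/openapi.yaml", "docs/README.md"]
selection = services_to_test(changed_services(pr_files), CONSUMERS)
print(selection)
```

A change to `billing` selects two suites instead of four, while a change to `orders` still pulls in everything downstream of it.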

Best Practices

- Make the pull request the primary quality gate. If a defect can reach main before automated validation, the transition is incomplete. Enforce merge-blocking on generated test failures.

- Treat OpenAPI as the source of truth. Specs generate tests, mocks, and client SDKs. Spec quality compounds — invest in linting, examples, and descriptions as PR gates.

- Replace nightly regression with per-PR validation. Nightly runs leave feedback gaps of up to 24 hours (about 12 on average). Per-PR validation closes them. Keep nightly only for long-running performance or integration suites.

- Generate, review, curate — never hand-author baselines. AI-first platforms produce coverage humans would never write. Review and prune noise; do not revert to manual authoring as the default.

- Parallelize and shard execution aggressively. PR feedback under 5 minutes is the engagement threshold. Beyond that, developers route around the gate.

- Retain a thin post-deployment layer. Production smoke, synthetic monitoring, canary analysis, and chaos experiments remain essential. Narrow the scope, do not eliminate.

- Measure feedback latency, not just coverage. Track time-from-commit-to-signal and time-from-signal-to-fix. These are the real ROI metrics.

- Centralize authentication and secrets in the platform vault. Scattering credentials across CI environment variables creates security and maintenance debt. Use platform integrations with features/collaboration-security.

- Enforce contract testing between producer and consumer services. Schema drift is a dominant incident driver in microservice systems. Contracts enforced at PR time catch it before merge.

- Redefine QA roles before rollout, not after. QA organizations that clarify strategic scope early embrace shift-left; those told to "figure it out later" resist.

- Stage the rollout by team and service, not by feature. One team, 10-20 high-traffic APIs, proven wins, then expand. Big-bang migrations reliably stall.

- Invest in failure triage UX as a first-class concern. Request/response diffs, local reproduction, and readable assertions determine adoption more than generation sophistication.
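The feedback-latency practice in the list above names two metrics, time-from-commit-to-signal and time-from-signal-to-fix. A minimal sketch of computing both as medians follows; the event field names (`commit_at`, `signal_at`, `fix_at`) and the sample timestamps are invented for illustration, and real data would come from your CI and version-control systems.

```python
from datetime import datetime
from statistics import median


def latency_minutes(events: list[dict]) -> dict[str, float]:
    """Median commit-to-signal and signal-to-fix latency, in minutes."""
    def minutes(start: str, end: str) -> float:
        delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
        return delta.total_seconds() / 60

    return {
        "commit_to_signal": median(
            minutes(e["commit_at"], e["signal_at"]) for e in events
        ),
        "signal_to_fix": median(
            minutes(e["signal_at"], e["fix_at"]) for e in events
        ),
    }


events = [
    {"commit_at": "2026-01-12T10:00:00", "signal_at": "2026-01-12T10:04:00",
     "fix_at": "2026-01-12T10:10:00"},
    {"commit_at": "2026-01-12T11:00:00", "signal_at": "2026-01-12T11:06:00",
     "fix_at": "2026-01-12T11:36:00"},
    {"commit_at": "2026-01-13T09:00:00", "signal_at": "2026-01-13T09:05:00",
     "fix_at": "2026-01-13T09:17:00"},
]
kpis = latency_minutes(events)
print(kpis)
```

Medians are used here so that one unusually slow fix does not dominate the trend; percentiles such as p90 are a reasonable addition once enough events accumulate.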

Implementation Checklist

- ✔ Inventory all APIs and map ownership to engineering teams

- ✔ Audit current post-deployment testing artifacts (Postman collections, Selenium scripts, regression suites)

- ✔ Measure baseline feedback latency — time from commit to defect signal

- ✔ Lint all OpenAPI specs with Spectral (or equivalent) as a PR check

- ✔ Raise spec quality to at least 90% linter-clean before generating tests

- ✔ Select one pilot team and 10-20 high-traffic APIs for initial shift-left rollout

- ✔ Ingest pilot specs into an AI-first platform and generate baseline suites

- ✔ Review generated suites with developers and QA alongside the spec

- ✔ Wire the platform into CI/CD (GitHub Actions, GitLab, Azure DevOps, or Jenkins)

- ✔ Configure merge-blocking gates on generated test failures

- ✔ Centralize OAuth2, JWT, API keys, and mTLS certs in the platform vault

- ✔ Configure sharded parallel execution targeting sub-5-minute PR feedback

- ✔ Enable contract testing between producer and consumer services

- ✔ Define self-healing thresholds — silent heal on additive changes, alert on breaking

- ✔ Deprecate nightly API regression suites superseded by per-PR validation

- ✔ Retain production smoke tests, synthetic monitoring, and canary analysis

- ✔ Publish a redefined QA charter — strategy, exploratory, compliance, platform

- ✔ Track KPIs: feedback latency, drift-caught-pre-merge, change-failure rate, deploy frequency

- ✔ Conduct a quarterly ROI review against pre-transition baseline

FAQ

What is post-deployment testing and why is it failing?

Post-deployment testing is validating software after it has been merged and deployed to a staging or production-like environment, typically by a QA team running manual or scripted checks. It is failing in 2026 because microservice sprawl, daily release cadence, and silent schema drift between services have outpaced the feedback speed of late validation. By the time a post-deployment test catches a defect, the developer has context-switched, the fix cost has multiplied 10-100x per IBM and NIST research, and in many cases a customer has already felt the impact.

How is shift-left testing different from post-deployment testing?

Shift-left testing moves validation into the pull request and commit stages, before code merges. Post-deployment testing happens after merge and deploy. The difference is not just timing — it is feedback latency, defect economics, and who owns quality. Shift-left puts quality ownership on developers with automated tests enforcing contracts on every PR. Post-deployment puts it on a downstream QA team catching defects hours or days later.

What does industry research say about the cost of late defect detection?

IBM Systems Sciences Institute and NIST both document a well-known escalation curve: defects caught during development cost roughly 1x to fix; caught in QA they cost 5-15x; caught in production they cost 30-100x. The Capgemini World Quality Report 2024-2025 and DORA State of DevOps research reinforce this: elite performers detect the majority of defects pre-merge and deploy multiple times per day with low change-failure rates, while low performers rely on late QA gates and ship weekly at best.

Can a team go fully shift-left without any post-deployment validation?

No — post-deployment validation does not disappear, it shrinks in scope. Production smoke tests, synthetic monitoring, canary analysis, and chaos experiments remain valuable because they catch environment-specific and emergent issues that pre-merge tests cannot simulate. The shift is that functional correctness, contract conformance, and regression catching move entirely pre-merge. Post-deployment testing becomes verification of the running system, not the primary quality gate.

What are the failure modes of post-deployment testing in API-driven products?

Six failure modes dominate: (1) schema drift between producer and consumer services is detected only at runtime; (2) QA sign-off cycles cannot keep pace with daily deploys; (3) staging environments drift from production and mask issues; (4) defects detected post-deploy arrive after the developer has context-switched; (5) rollback cost grows with microservice dependencies; (6) customer-facing incidents become the primary signal, not tests.

How long does a realistic shift-left transition take?

For a mid-sized engineering organization (100-300 developers), a realistic transition runs 12-20 weeks in staged phases: weeks 1-4 audit and pilot team selection; weeks 5-10 wiring spec-driven AI test generation into CI/CD for the pilot; weeks 11-16 expanding across services and deprecating legacy post-deployment checks; weeks 17-20 hardening, KPI review, and redirecting QA capacity. Teams that skip the staged rollout and attempt a big-bang migration consistently stall.

Conclusion

Post-deployment testing is not evil; it is simply obsolete as the primary quality gate for API-driven software. The conditions that made it work (monolithic architectures, quarterly releases, internal-only APIs) have been replaced by conditions that break it (microservice sprawl, daily releases, API-as-product). Research from IBM, NIST, DORA, and the Capgemini World Quality Report all points in the same direction: elite engineering performance in 2026 comes from pre-merge validation, not post-deploy sign-off.

The transition is staged, not instantaneous. Start with spec quality. Pilot with one team. Wire AI-first test generation into CI/CD. Enforce contracts at PR time. Narrow post-deployment testing to production smoke and synthetic monitoring. Redefine QA roles toward strategy and exploratory depth. Measure feedback latency as the primary KPI. Teams that follow this sequence consistently ship faster with lower change-failure rates within two to three quarters.

If you want to see what shift-left validation looks like end to end — OpenAPI ingestion, AI-generated positive/negative/boundary tests, CI/CD-native execution, self-healing on schema change — explore the Total Shift Left platform, start a free trial, or book a guided demo. First green PR gate in under 10 minutes.

Related: Shift-Left Testing Framework | Shift-Left AI-First API Testing Platform | The Rising Importance of Shift-Left API Testing | AI-Driven API Test Generation | API Test Automation with CI/CD | API Schema Validation | Best Postman Alternatives | Future of API Testing: AI Automation | API Learning Center | Shift-left platform | Start Free Trial | Book a Demo
