Functional Testing vs Integration Testing: Scope, Tooling, and When to Use Each (2026)

**Functional testing vs integration testing** is the comparison between two complementary disciplines in software quality: one validates that features behave according to specification, the other validates that independently built components communicate correctly when combined. They catch different categories of defect, run at different speeds, and belong at different points in the delivery pipeline — and the teams who understand the distinction ship faster with fewer production incidents than those who treat them as interchangeable.

The economics are decisive. The IBM Systems Sciences Institute and NIST have shown for decades that defects caught during development cost 5–15x less than defects caught in QA and 30–100x less than defects caught in production. The 2025 World Quality Report found that organizations running both disciplines in automated CI/CD pipelines release 3.4x faster with 62% fewer production incidents, and DORA's State of DevOps research links this testing layering directly to elite software delivery performance. In 2026, the question is no longer whether to run both — it is how to run them efficiently, where to draw the line, and which tools collapse the boundary at the API layer.

Table of Contents

- Introduction

- What Is Functional Testing vs Integration Testing?

- Why This Matters Now for Engineering Teams

- Key Components of Each Discipline

- Reference Architecture

- Tools and Platforms

- Real-World Example

- Common Challenges

- Best Practices

- Implementation Checklist

- FAQ

- Conclusion

Introduction

Ask five engineers to define functional testing and integration testing and you will get seven answers. The terms are used inconsistently across organizations, vendor marketing, and academic texts, which causes real harm: coverage gaps, duplicated work, misassigned ownership, and pipelines that pass every gate while production quietly breaks.

The stakes are higher than they used to be. A typical mid-sized SaaS now runs 200–500 internal APIs across a dozen or more teams, release cadence has compressed from quarterly to weekly, and the attack surface has multiplied. When two teams disagree on whether a given test is "functional" or "integration," the boundary often goes untested entirely. This guide fixes that: it defines both disciplines rigorously, maps them to a reference architecture, compares the tooling landscape, and shows where modern AI-first API testing platforms collapse the two categories into a single spec-driven workflow.

For the broader shift-left context, read the rising importance of shift-left API testing. For fundamentals, the API Learning Center covers what an API is and the anatomy of a request and response.

What Is Functional Testing vs Integration Testing?

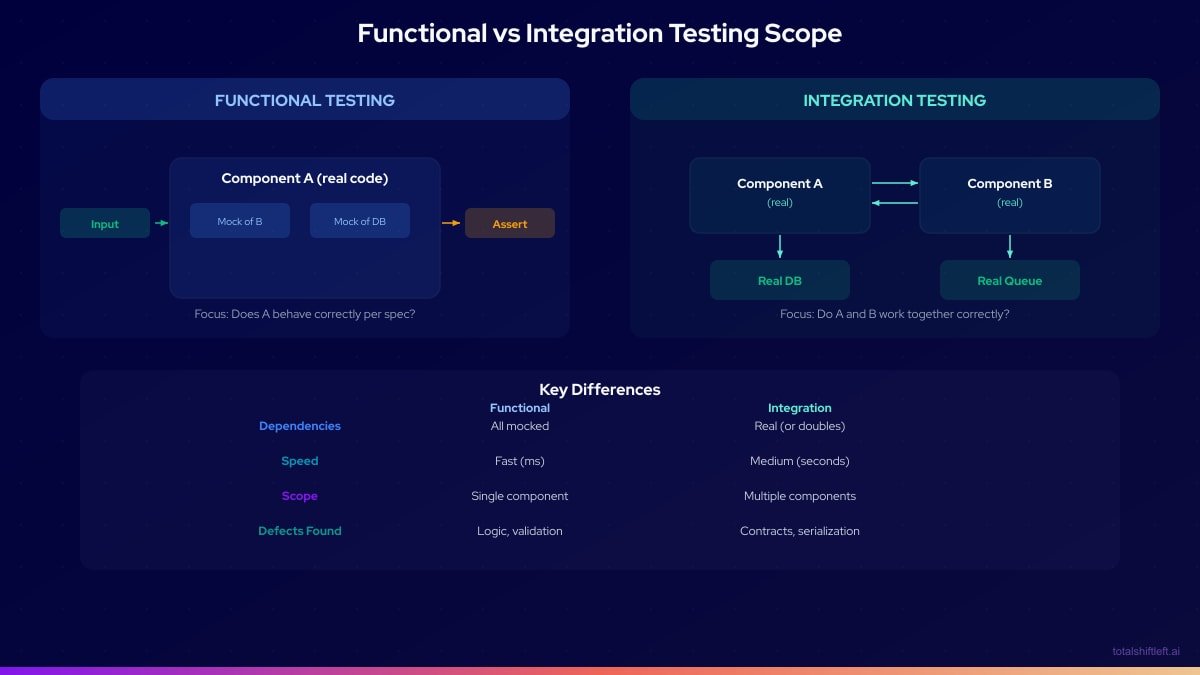

Functional testing validates that software behaves according to its specified requirements from the perspective of a consumer — an end user, a client application, or an upstream service. It is concerned with what the software does, not how it does it internally. Functional tests are derived from requirements, user stories, or API contracts. They typically run against a single component with external dependencies mocked or stubbed, which makes them fast, deterministic, and cheap to parallelize.

A functional test for a user registration endpoint asks questions like: does a valid payload return 201? Does an invalid email produce a 422 with a helpful error? Does a duplicate email produce a 409? Does a missing required field yield a clear validation message? It does not care whether the underlying data lands in Postgres or DynamoDB, or whether the service publishes an event to Kafka or simply writes a row.
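
As a minimal sketch of those questions in code, the example below tests a hypothetical `register_user` handler against an in-memory store. The handler, the store class, the required fields, and the error messages are all assumptions made for illustration; only the status codes (201, 422, 409) come from the description above.

```python
import re
from dataclasses import dataclass, field

EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


@dataclass
class InMemoryUserStore:
    """Test double standing in for the real datastore (Postgres, DynamoDB, ...)."""
    emails: set = field(default_factory=set)

    def exists(self, email: str) -> bool:
        return email in self.emails

    def add(self, email: str) -> None:
        self.emails.add(email)


def register_user(payload: dict, store: InMemoryUserStore) -> tuple[int, dict]:
    """Hypothetical handler under test: returns (status_code, body)."""
    for required in ("email", "password"):
        if required not in payload:
            return 422, {"error": f"missing required field: {required}"}
    if not EMAIL_RE.match(payload["email"]):
        return 422, {"error": "email is not a valid address"}
    if store.exists(payload["email"]):
        return 409, {"error": "email already registered"}
    store.add(payload["email"])
    return 201, {"email": payload["email"]}


def test_registration_contract():
    store = InMemoryUserStore()
    valid = {"email": "ada@example.com", "password": "s3cret!"}
    # Valid payload is created, the same payload again is a conflict.
    assert register_user(valid, store)[0] == 201
    assert register_user(valid, store)[0] == 409
    # Malformed email is rejected with a validation error.
    assert register_user({"email": "not-an-email", "password": "x"}, store)[0] == 422
    # Missing required field names the field in the message.
    status, body = register_user({"email": "ada@example.com"}, store)
    assert status == 422 and "password" in body["error"]


test_registration_contract()
```

The assertions only look at the observable response, so the same test would hold if the store were swapped for a different backend.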

Integration testing validates that independently developed components work correctly when combined — that the interfaces between them are implemented consistently by both sides and that data flows as expected across boundaries. Integration testing is interface-driven. It explicitly depends on multiple real components working together and uses controlled doubles only for boundaries outside the team's control (a third-party gateway, a flaky upstream).

An integration test for the same registration flow asks: does the API service correctly call the identity service and persist the user? Does it publish the user.created event with the expected schema to the message broker? Does the downstream email service consume that event and enqueue a welcome message? Does the whole chain recover cleanly when the broker is momentarily unavailable?
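
The shape of such a test can be sketched with standard-library stand-ins: an in-memory SQLite database in place of the real datastore and a `queue.Queue` in place of the message broker. Both substitutions, along with the `register` and `email_worker` functions and the event payload, are assumptions for illustration. In a real suite these would be running services wired to a real database and broker.

```python
import json
import queue
import sqlite3


def register(conn: sqlite3.Connection, broker: queue.Queue, email: str) -> None:
    """Hypothetical API service: persist the user, then publish user.created."""
    conn.execute("INSERT INTO users (email) VALUES (?)", (email,))
    conn.commit()
    broker.put(json.dumps({"type": "user.created", "email": email}))


def email_worker(broker: queue.Queue, outbox: list) -> None:
    """Hypothetical email service: consume events and enqueue welcome messages."""
    while not broker.empty():
        event = json.loads(broker.get())
        if event["type"] == "user.created":
            outbox.append(f"welcome:{event['email']}")


# Wire the pieces together, then assert on the whole chain.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (email TEXT PRIMARY KEY)")
broker = queue.Queue()
outbox = []

register(conn, broker, "ada@example.com")
email_worker(broker, outbox)

assert conn.execute("SELECT COUNT(*) FROM users").fetchone()[0] == 1
assert outbox == ["welcome:ada@example.com"]
```

Unlike the functional case, nothing here is asserted on a single component's response: the test passes only if the producer's event shape and the consumer's expectations agree.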

The two disciplines answer different questions — "does it work as specified?" versus "do the pieces work together?" — and a mature quality engineering practice needs both. See contract testing for the formal contract layer that sits between them, and validation errors for how functional assertions on error semantics tie into integration correctness.

Why This Matters Now for Engineering Teams

Microservice sprawl has exploded the integration surface

A monolith has one integration — the deploy. A system of 20 microservices has up to 190 unique service pairs, each a potential integration failure point. Functional tests on each service in isolation will miss every one of those boundary defects. Teams that skip integration testing in a distributed architecture are shipping untested seams. See API testing strategy for microservices.
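
The 190 figure is the number of unordered pairs among 20 services, n(n-1)/2. A short sketch shows how quickly that upper bound grows; it counts every possible pair, whether or not the two services actually communicate.

```python
from math import comb


def unique_service_pairs(n_services: int) -> int:
    """Upper bound on service-to-service boundaries: n choose 2."""
    return comb(n_services, 2)


for n in (2, 6, 20, 50):
    print(f"{n} services -> up to {unique_service_pairs(n)} pairs")

assert unique_service_pairs(20) == 190
```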

Release cadence has compressed past manual QA cycles

Weekly and daily deploys are now the norm. A 48-hour manual QA cycle either blocks releases or gets skipped. DORA's elite performers deploy multiple times per day with change failure rates under 15% — a bar only achievable when both functional and integration validation are automated into the pipeline. CI/CD integration patterns show how.

Schema drift is a silent incident driver

When a producer service changes a field type or removes a property, consumers break. Without automated contract and integration testing enforced at PR time, the first signal is a production error. The 2025 World Quality Report identified cross-service contract violations as the single largest category of preventable production incidents in API-first organizations. See API schema validation: catching drift.
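
A PR-time drift check can be as simple as diffing the previous and proposed versions of a response schema. The sketch below assumes a flattened `{field: type}` representation and hypothetical field names; a real check would walk the full OpenAPI document, and added fields are treated here as non-breaking.

```python
def find_breaking_changes(old: dict, new: dict) -> list[str]:
    """Compare two flat {field: type} maps and list changes that break consumers."""
    problems = []
    for name, old_type in old.items():
        if name not in new:
            problems.append(f"removed field: {name}")
        elif new[name] != old_type:
            problems.append(f"type changed: {name} ({old_type} -> {new[name]})")
    return problems


# Hypothetical before/after versions of a producer's response schema.
old_schema = {"id": "string", "event_time": "string", "status": "string"}
new_schema = {"id": "string", "event_time": "integer", "carrier": "string"}

changes = find_breaking_changes(old_schema, new_schema)
assert changes == [
    "type changed: event_time (string -> integer)",
    "removed field: status",
]
```

Failing the PR whenever the returned list is non-empty moves the first signal from production to code review.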

Test pyramid economics still apply — but with new tools

The classic test pyramid (many unit tests, fewer integration, few E2E) remains sound, but AI-first platforms like Shift-Left API reshape the middle layer by generating integration-grade API tests at near-zero marginal cost. The new curve widens the middle and shortens time-to-coverage without inflating maintenance debt.

Compliance and security now depend on both

SOC 2, ISO 27001, PCI-DSS, and HIPAA audits increasingly require evidence of continuous validation at both the feature level (functional) and the interface level (integration). Manual spreadsheet-driven test evidence no longer satisfies auditors at modern-pace organizations.

Key Components of Each Discipline

Specification source for functional tests

Functional tests derive from requirements documents, user stories, API contracts, and OpenAPI specs. The specification is the oracle — if the spec says the endpoint returns 201 on success, the test asserts 201. Teams that treat OpenAPI as authoritative gain compounding benefits across functional testing, SDK generation, and docs. See generate tests from OpenAPI.

Test case design techniques

Functional testing uses formal techniques: equivalence partitioning (group inputs that should behave identically), boundary value analysis (test the edges), decision tables (enumerate rule combinations), and state transition testing. These techniques are what separate a thorough functional suite from a smoke test. AI-assisted negative testing shows how modern platforms automate these techniques.
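
Boundary value analysis in particular is mechanical enough to sketch in a few lines. The example assumes a hypothetical rule that an order quantity must be between 1 and 100 inclusive, and derives the six classic probe values around the two edges.

```python
def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Classic boundary probes for a closed integer range [minimum, maximum]."""
    return [minimum - 1, minimum, minimum + 1, maximum - 1, maximum, maximum + 1]


def is_valid_quantity(qty: int) -> bool:
    """Hypothetical rule under test: quantity must be 1..100 inclusive."""
    return 1 <= qty <= 100


cases = {value: is_valid_quantity(value) for value in boundary_values(1, 100)}
assert cases == {0: False, 1: True, 2: True, 99: True, 100: True, 101: False}
```

The values just outside the range (0 and 101) also serve as representatives of the two invalid equivalence partitions, so the two techniques share test cases here.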

Mocking and isolation strategy

Functional tests mock external dependencies to isolate the component under test. The mock layer is what makes functional tests fast — milliseconds per test — but also what distinguishes them from integration tests. Tools: WireMock, MSW, Nock, and the mocking capabilities built into frameworks like pytest and JUnit.
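
For illustration, here is what that isolation looks like with Python's standard-library `unittest.mock`. The `send_welcome_email` function and the shape of the email client's response are hypothetical; only the `Mock` API itself is real.

```python
from unittest.mock import Mock


def send_welcome_email(user_email: str, email_client) -> bool:
    """Hypothetical component under test; email_client is an external dependency."""
    response = email_client.send(to=user_email, template="welcome")
    return response["status"] == "queued"


# Replace the external client with a double that answers instantly.
fake_client = Mock()
fake_client.send.return_value = {"status": "queued"}

assert send_welcome_email("ada@example.com", fake_client) is True
# Verify the interaction, not just the return value.
fake_client.send.assert_called_once_with(to="ada@example.com", template="welcome")
```

The test never touches a network, which is where the speed comes from, and also why it cannot tell you whether the real client returns that response shape.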

Environment topology for integration tests

Integration tests require running instances of all involved components. In practice this means Docker Compose for local runs, ephemeral Kubernetes namespaces for CI, and dedicated staging environments for broader suites. Infrastructure-as-code (Terraform, Pulumi) makes these reproducible.

Test data management across components

Integration tests need consistent, controlled data across multiple services — a materially harder problem than functional testing's isolated fixtures. Patterns: data factories that understand cross-service relationships, seed scripts run before each suite, and ephemeral databases reset between runs.

Contract validation at component boundaries

Contract testing (Pact, Spring Cloud Contract) formalizes the agreement between a producer and consumer. It is lighter than full integration testing but stronger than pure functional testing, and it slots naturally between the two layers. See contract testing for microservices.
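
The underlying idea can be sketched without any framework: the consumer records the fields it reads and the types it expects, and the provider's response is verified against that record. This is a hand-rolled illustration, not Pact's or Spring Cloud Contract's API, and the field names are hypothetical.

```python
# Consumer expectation: the fields it reads and the Python type each must decode to.
CONSUMER_CONTRACT = {"shipment_id": str, "event_time": str, "status": str}


def verify_contract(provider_response: dict, contract: dict) -> list[str]:
    """Return a list of violations; extra provider fields are allowed."""
    violations = []
    for field_name, expected_type in contract.items():
        if field_name not in provider_response:
            violations.append(f"{field_name}: missing")
        elif not isinstance(provider_response[field_name], expected_type):
            actual = type(provider_response[field_name]).__name__
            violations.append(
                f"{field_name}: expected {expected_type.__name__}, got {actual}"
            )
    return violations


good = {
    "shipment_id": "S-1",
    "event_time": "2026-01-15T10:30:00+00:00",
    "status": "in_transit",
    "carrier": "ACME",  # extra field, ignored by this consumer
}
bad = {"shipment_id": "S-1", "event_time": 1768473000}

assert verify_contract(good, CONSUMER_CONTRACT) == []
assert verify_contract(bad, CONSUMER_CONTRACT) == [
    "event_time: expected str, got int",
    "status: missing",
]
```

Because the check needs only a sample response and the recorded expectation, it runs without standing up both services, which is what makes it lighter than a full integration run.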

Failure triage and observability

An integration suite that fails opaquely is worse than no suite at all, because developers will disable it. Best-in-class platforms provide request/response diffs, clear assertion messages, one-click local reproduction, and historical flakiness scoring. This is the observability layer provided by our analytics and monitoring feature.

Authentication and secrets handling

OAuth2, JWT, API keys, and mTLS all need first-class support across both functional and integration layers. Secrets should live in a managed vault, not scattered across CI environment variables. See JWT authentication, OAuth2 client credentials, and token refresh patterns.

Reference Architecture

A mature testing architecture layers functional and integration testing across five tiers, each with its own execution trigger, environment profile, and ownership model.

The source tier holds the artifacts both disciplines derive from: OpenAPI specifications, AsyncAPI documents for event-driven contracts, user stories in the backlog, and acceptance criteria. Quality here is the leading indicator of quality everywhere downstream — a loosely typed spec produces loose tests on both sides.

The authoring tier is where tests come into existence. Functional tests are written by developers alongside feature code (TDD or immediately after), or auto-generated from OpenAPI by AI-first platforms. Integration tests are authored by a mix of feature teams and platform QA, using frameworks like TestContainers, Pact, or AI-generated suites from Shift-Left API.

The execution tier runs tests against environments. Functional tests execute on every commit in under a minute using ephemeral runners with everything mocked. Integration tests execute post-merge in Docker Compose or ephemeral Kubernetes namespaces, taking minutes to tens of minutes. Both use parallel sharding to keep wall-clock time bounded. See test execution.

The feedback tier surfaces results where developers work: PR annotations, Slack notifications, failure diffs, and historical trends. This tier determines adoption. A fast, clear feedback loop for both functional and integration failures is what makes engineers trust the suites instead of routing around them.

The governance tier cuts across the other four: environment configuration, secrets, RBAC, audit logging, and policy enforcement. This is where compliance evidence is produced and where the platform enforces organizational standards consistently across teams.

Tools and Platforms

The tooling landscape bifurcates by discipline, with modern AI-first platforms spanning both categories at the API layer. The comparison below summarizes the primary options in each tier, with deeper analyses on our compare page and the learn comparison cluster.

| Tool / Platform | Primary Role | Functional | Integration | Best For |
|---|---|---|---|---|
| Total Shift Left | AI-first API platform | Yes | Yes | Spec-driven teams covering both at the API layer |
| Postman | Manual/collection-based | Yes | Partial | Exploratory and manual debugging |
| REST Assured | Java library | Yes | Yes | Java shops embedding tests in code |
| Pytest | Python framework | Yes | Yes | Python functional + integration |
| JUnit | Java framework | Yes | Limited | Component-level functional tests |
| TestContainers | Container harness | No | Yes | Ephemeral integration dependencies |
| Pact | Contract testing | Partial | Yes | Consumer-driven contracts between services |
| WireMock | Service virtualization | Partial | Yes | Simulating third-party boundaries |
| Selenium / Playwright | UI/E2E | Yes | Yes | Browser-level functional and E2E |

A few observations worth calling out. Script-based tools (REST Assured, Pytest) cover both disciplines but require hand-authored tests that accumulate maintenance debt at microservice scale. Container-based tools (TestContainers) solve the environment problem but not the test-authoring problem. AI-first platforms generate and self-heal the tests themselves, which is why comparisons like ReadyAPI vs Shift Left, Apidog vs Shift Left, and Postman alternatives matter for evaluation. See also best API test automation tools compared.

Real-World Example

Problem: A European logistics platform operated six microservices — shipments, tracking, routing, notifications, billing, and identity — written by four teams across two time zones. Functional test coverage was strong: 91% line coverage, an extensive pytest suite running on every PR, and a mature mocking discipline. Integration coverage, by contrast, was almost nonexistent. The team relied on a manual smoke test in staging before releases.

The cost surfaced in production. A serialization mismatch between the shipments service (which emitted event_time as an ISO-8601 string) and the tracking service (which parsed eventTime as a Unix timestamp) caused tracking events to be silently dropped for 48 hours before a customer escalation surfaced the issue. Functional tests passed on both sides — each service was correct in isolation. The defect lived entirely in the seam between them.
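
A compact reconstruction shows why both suites stayed green. The function names and the drop-on-unexpected-type behavior are assumptions; the source states only that one side emitted `event_time` as an ISO-8601 string and the other parsed `eventTime` as a Unix timestamp.

```python
import json
from datetime import datetime, timezone


def shipments_emit(event_time: datetime) -> str:
    """Producer side: emits event_time as an ISO-8601 string."""
    return json.dumps({"shipment_id": "S-1", "event_time": event_time.isoformat()})


def tracking_consume(raw: str):
    """Consumer side: expects eventTime as a Unix timestamp (assumed to drop otherwise)."""
    payload = json.loads(raw)
    value = payload.get("eventTime")
    if not isinstance(value, (int, float)):
        return None  # event silently dropped
    return datetime.fromtimestamp(value, tz=timezone.utc)


when = datetime(2026, 1, 15, 10, 30, tzinfo=timezone.utc)

# Each service passes its own functional test in isolation.
assert json.loads(shipments_emit(when))["event_time"] == "2026-01-15T10:30:00+00:00"
assert tracking_consume(json.dumps({"eventTime": when.timestamp()})) == when

# Only a test that connects the real producer to the real consumer sees the defect.
assert tracking_consume(shipments_emit(when)) is None
```

The last assertion is the integration test the team did not have: it feeds one service's actual output into the other's actual input.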

Solution: The team adopted a three-phase remediation. Phase 1 (weeks 1–2) stood up a Docker Compose integration environment running all six services plus Postgres and RabbitMQ, with IaC-managed seed data. Phase 2 (weeks 3–4) ingested all six OpenAPI specifications into Shift-Left API, which generated 221 integration tests covering every endpoint across positive, negative, and boundary scenarios. Tests ran against the Docker Compose environment on every merge to main. Phase 3 (weeks 5–8) added Pact consumer-driven contract tests between every service pair and integrated a Can-I-Deploy gate into the release pipeline. The full pipeline — functional on PR, integration post-merge, contracts pre-deploy — followed the CI/CD testing pipeline pattern.

Results: Over the following 60 days, production incidents from integration failures fell from three per month to zero. Mean time to detect integration defects collapsed from 48 hours to eight minutes, because defects were now caught in CI rather than by customers. API integration coverage went from 0% to 100% of endpoints, exercised by the 221 generated tests. Developer confidence ("I'm comfortable deploying on a Friday afternoon") rose from 5.8/10 to 8.9/10 on an internal survey. The specific serialization-mismatch class of defect has not recurred, and the team now ships to production twice per day. These results track with the World Quality Report's broader findings on shift-left integration automation.

Common Challenges

Teams disagree on where the functional/integration line sits

Ambiguity leads to coverage gaps. If Team A assumes Team B is testing the boundary as integration, and Team B assumes Team A covered it functionally, nobody tests it. Solution: Adopt a written definition (functional = isolated component + mocks; integration = multiple real components + real wire). Enforce via folder structure (tests/functional/, tests/integration/) and include it in PR review criteria. Link to the team definition from the repo README.

Integration tests are slow and block the PR pipeline

Running a full integration suite on every PR quickly balloons CI times past the 10-minute threshold at which developers start routing around it. Solution: Run fast functional tests on PRs and integration post-merge on main. Shard integration tests for parallel execution. Use smart test selection to run only integration tests affected by a given change on feature branches, and the full suite on main. See CI/CD integration patterns.

Flaky integration environments erode trust

An integration suite that fails intermittently for environmental reasons gets ignored even when it finds real defects. Solution: Pin all dependency versions. Use TestContainers or Docker Compose with deterministic seeds. Reset state between runs via IaC. Track flakiness per test and quarantine any test exceeding a threshold (say, 2% flake rate) until fixed.

Mocking drift between functional tests and reality

Functional tests with mocks can drift from the real integration behavior, creating false confidence. Solution: Generate functional mocks from the same OpenAPI spec that drives integration tests — the spec becomes the single source of truth. AI-first platforms do this automatically. Contract testing (Pact) is the formal layer that catches mock drift.

Ownership is undefined for cross-team integration failures

When an integration test between Team A's service and Team B's service fails, neither team owns the fix, and it lingers. Solution: Assign ownership to the API contract, not the service. Whichever team owns the OpenAPI document for the endpoint in question owns failures of tests against that endpoint. Make this explicit in CODEOWNERS.

Integration test data setup is more expensive than the tests themselves

Multi-service test data setup can take longer than the test run. Solution: Build a shared data factory library used by both dev and test code. Use the platform's collaboration and security features to share fixtures across teams. For API integration tests specifically, Shift-Left API's generated tests handle data setup via generated request payloads derived from the spec, eliminating most bespoke fixtures.

Best Practices

- Define the boundary explicitly. Written definitions of functional vs integration testing in your engineering handbook prevent coverage gaps. Reference this guide and unit testing vs integration testing vs system testing for the broader layering.

- Treat OpenAPI as the source of truth for both layers. Functional assertions, integration contracts, mocks, and docs all derive from one spec. OpenAPI test automation shows the end-to-end flow.

- Run functional tests on every commit; integration tests post-merge. This matches DORA elite-performer patterns and keeps PR feedback under five minutes.

- Parallelize aggressively in both layers. A 40-minute integration suite drops to roughly four minutes when sharded ten ways, provided the shards are evenly balanced. Developers tolerate four minutes on a merge; they do not tolerate forty.

- Use real components for integration tests inside your boundary. Mock only what you do not own. Mocking everything defeats the purpose of integration testing.

- Apply formal test design techniques to functional tests. Equivalence partitioning, boundary value analysis, and decision tables dramatically increase defect-finding yield over ad-hoc test writing.

- Enforce spec quality as a PR gate. Lint OpenAPI with Spectral on every commit. Require examples and descriptions on all schemas. Spec quality is the leading indicator of test quality on both sides.

- Add contract tests between services for the middle layer. Pact or Spring Cloud Contract catches consumer-producer drift faster than full integration runs.

- Automate failure triage, not just execution. Clear diffs, one-click local reproduction, and readable assertion messages matter more than raw test volume. Invest in the feedback layer.

- Measure adoption, not just coverage. Track time-from-spec-to-green-test, integration-drift-caught-pre-merge, and PR pass rate. Coverage percentages alone are misleading.

- Quarantine flaky tests fast. Any test exceeding a flake threshold gets quarantined immediately and fixed by its owner within a defined SLA. Flakiness is the single biggest destroyer of suite credibility.

- Revisit the pyramid quarterly. As AI-first platforms reshape the middle layer, the optimal mix of functional vs integration vs E2E shifts. Review where your tests live and what they cost every quarter.
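
The arithmetic behind the parallelization practice above only holds when shards are balanced by duration. One common way to get close is a greedy longest-first assignment, sketched below; the test names and timings are made up, and real CI systems typically offer their own timing-based splitting.

```python
import heapq


def shard_by_duration(durations: dict[str, float], shard_count: int) -> list[list[str]]:
    """Greedy longest-first: give each test to the currently lightest shard."""
    heap = [(0.0, index) for index in range(shard_count)]
    heapq.heapify(heap)
    shards = [[] for _ in range(shard_count)]
    ordered = sorted(durations.items(), key=lambda item: item[1], reverse=True)
    for name, seconds in ordered:
        load, index = heapq.heappop(heap)
        shards[index].append(name)
        heapq.heappush(heap, (load + seconds, index))
    return shards


def wall_clock(durations: dict[str, float], shards: list[list[str]]) -> float:
    """Wall-clock time is set by the slowest shard."""
    return max(sum(durations[name] for name in shard) for shard in shards)


# 240 hypothetical tests at 10 s each: 40 minutes when run serially.
durations = {f"test_{i}": 10.0 for i in range(240)}
shards = shard_by_duration(durations, 10)

assert sum(durations.values()) == 2400.0       # 40 minutes serial
assert wall_clock(durations, shards) == 240.0  # 4 minutes across ten shards
```

With uneven test durations the slowest shard dominates, so a single very long test caps the benefit no matter how many shards are added.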

Implementation Checklist

- ✔ Publish written definitions of functional and integration testing in the engineering handbook

- ✔ Audit existing test suites and label each as functional, integration, contract, or E2E

- ✔ Enforce folder structure (tests/functional/, tests/integration/, tests/contract/)

- ✔ Lint all OpenAPI specs with Spectral on every PR

- ✔ Configure functional test execution on every commit with mocks generated from spec

- ✔ Stand up an integration environment using Docker Compose or ephemeral Kubernetes

- ✔ Ingest OpenAPI specs into an AI-first platform and generate API integration suites

- ✔ Wire integration tests into CI to run post-merge on main

- ✔ Add contract tests (Pact or equivalent) between every consumer-producer pair

- ✔ Implement a Can-I-Deploy gate before any production deployment

- ✔ Configure sharded parallel execution to keep PR feedback under five minutes

- ✔ Integrate failure notifications into Slack or Microsoft Teams with ownership routing

- ✔ Define CODEOWNERS for every OpenAPI document so contract failures route automatically

- ✔ Establish KPIs: time-to-green-test, integration-drift-caught-pre-merge, PR pass rate

- ✔ Quarantine any test exceeding a 2% flake rate until fixed by its owner

- ✔ Review and refresh test data factories so fixtures remain consistent across services

- ✔ Harden assertions on high-stakes flows (payments, auth, compliance) with human review

- ✔ Publish compliance evidence (SOC 2, ISO 27001) from platform audit logs automatically

- ✔ Conduct a quarterly review of the functional/integration/E2E mix against incident data

FAQ

What is the core difference between functional testing and integration testing?

Functional testing validates that a feature behaves according to its specification, typically in isolation with dependencies mocked. Integration testing validates that independently developed components communicate correctly when wired together, using real components or controlled doubles. Functional testing asks "does it work as specified?"; integration testing asks "do the pieces work together?"

Can one test be both functional and integration at the same time?

Yes. An API test that drives a real HTTP request through a running service, hits a real database, and asserts on business-rule-defined response fields is simultaneously functional (validating behavior against spec) and integration (exercising cross-component communication). API-level testing is where the two categories overlap most productively, and spec-driven platforms generate tests that cover both dimensions from a single OpenAPI document.

Which runs first in a CI/CD pipeline — functional or integration tests?

Functional tests run first because they are faster, cheaper, and deterministic. On every commit, unit and component-level functional tests execute in seconds and block merges on failure. Integration tests typically run post-merge on the main branch, on nightly schedules, or in pre-deploy staging gates, because they require real dependencies and take longer. DORA research links this ordering to shorter lead time for changes.

Do mocks belong in integration tests?

Mocking every dependency in an integration test defeats its purpose — you are no longer integrating. However, targeted doubles are legitimate: WireMock for a third-party payment gateway you cannot exercise in CI, or service virtualization for a flaky upstream. The rule is to mock the things you do not own or cannot control, and use real instances for everything inside your system boundary.

How does shift-left testing change the functional-vs-integration split?

Shift-left testing pulls integration validation earlier in the SDLC by generating tests directly from OpenAPI specs and running them on every pull request. Instead of waiting for a nightly integration run, teams catch contract violations, schema drift, and interface defects at PR time. The World Quality Report 2025 found this pattern reduces integration-related production incidents by more than half.

What tooling covers both functional and integration testing for APIs?

AI-first API testing platforms ingest OpenAPI specifications and generate tests that validate both behavioral correctness (functional) and cross-component contracts (integration) in one run. The Total Shift Left platform generates positive, negative, and boundary tests, executes them against real environments, and self-heals on schema drift. This collapses what used to be two separate tooling stacks into one spec-driven workflow.

Conclusion

Functional testing and integration testing are not alternatives — they are layers of a complementary coverage strategy that catch fundamentally different classes of defect. A team with only functional testing misses cross-component failures; a team with only integration testing misses business-logic errors inside each component. The teams who ship fastest and most reliably in 2026 run both in automated CI/CD pipelines, with functional tests on every commit, integration tests post-merge, and contract tests gating production deployments. Research from IBM, NIST, DORA, and the World Quality Report all converges on the same conclusion: layered, automated, shift-left testing is the dividing line between elite and struggling delivery performance.

For the API layer specifically, the distinction is collapsing productively. Spec-driven AI-first platforms generate tests that validate both behavior and contracts from one OpenAPI document, execute them in CI, and self-heal on schema drift. This is where the category is heading, and where the efficiency gains are largest. Explore the Total Shift Left platform to see how a single spec produces comprehensive functional and integration coverage. Start a free trial and have your first green run in under 10 minutes, or book a demo to walk through your specific architecture with our team.

Related: API Testing vs UI Testing | Test Automation Strategy | What Is Shift Left Testing | Shift Left Testing Strategy | API Testing Strategy for Microservices | How to Build a CI/CD Testing Pipeline | Contract Testing for Microservices | API Schema Validation | Unit vs Integration vs System Testing | API Learning Center | AI-first API testing platform | Start Free Trial
