Unit Testing vs Integration Testing vs System Testing Explained (2026)

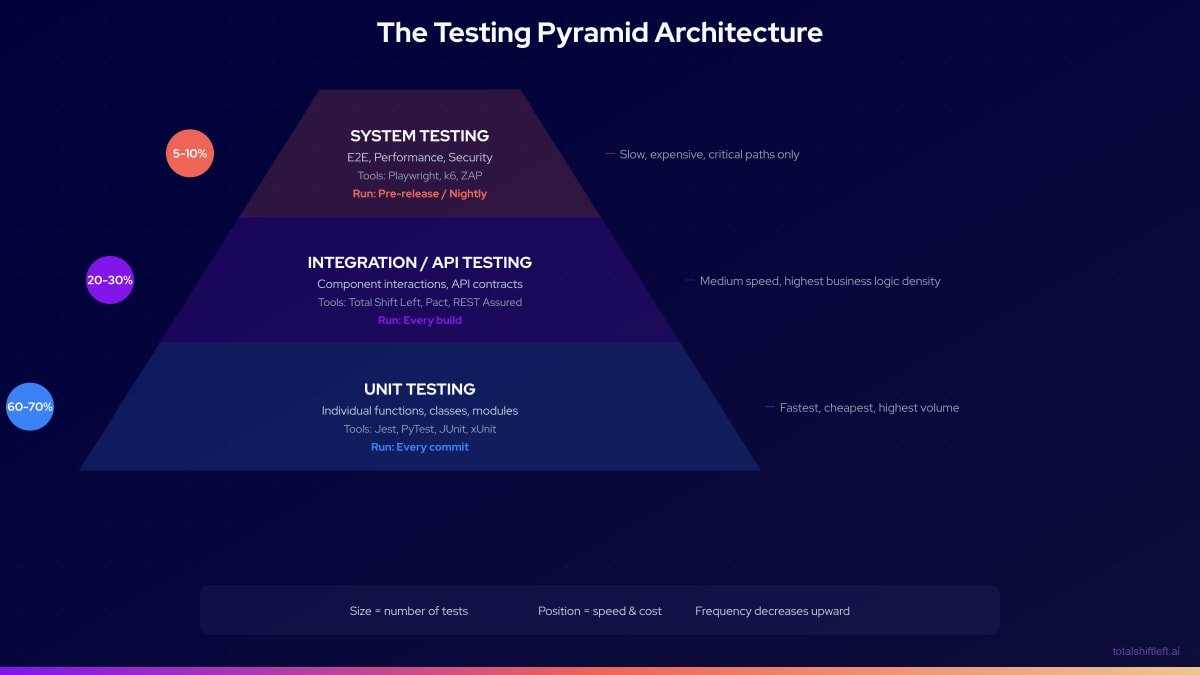

In short: unit tests validate individual functions in isolation, integration tests verify that components work together, and system tests evaluate the complete application. Together, these three levels of the software testing pyramid provide layered defect detection from code to production.

Unit testing, integration testing, and system testing represent the three canonical layers of the software testing pyramid. Each layer has a distinct scope, a distinct defect class it is designed to catch, and a distinct cost-per-test profile. Understanding how they differ—and how they complement each other—is foundational to designing a testing strategy that provides comprehensive coverage without wasting resources on redundant or poorly targeted tests. This guide provides a definitive three-way comparison, a visual testing pyramid, and specific guidance on where Shift-Left API makes the integration layer accessible to any team without writing test code.

Table of Contents

- What Is Unit Testing?

- What Is Integration Testing?

- What Is System Testing?

- Why the Three-Layer Model Matters

- Key Characteristics of Each Layer

- The Testing Pyramid Architecture

- Three-Way Comparison Table

- Real Implementation Example

- Common Challenges and Solutions

- Best Practices for the Three Testing Layers

- Testing Pyramid Checklist

- FAQ

- Conclusion

Introduction

The testing pyramid is one of the most enduring concepts in software engineering, originally described by Mike Cohn in "Succeeding with Agile" (2009). Its core insight—that more tests should exist at the faster, cheaper layers and fewer at the slower, more expensive layers—remains as valid in 2026 as it was when first articulated. What has changed is the tooling available at each layer, and particularly the middle layer (integration and API testing), which has historically been the most under-invested due to its combination of technical complexity and lack of accessible no-code tooling.

This guide explains all three layers clearly, with precise definitions, a detailed three-way comparison table, and practical implementation guidance. It also addresses a common misconception: that integration testing and system testing are essentially the same thing (they are not) and that one can substitute for the other (it cannot).

By the end, you will understand exactly what each layer validates, what defects each layer catches, how to allocate your testing investment, and where Shift-Left API fills the integration layer gap for API-driven applications.

What Is Unit Testing?

Unit testing validates the smallest testable units of software—individual functions, methods, or classes—in complete isolation from external dependencies. Every external dependency (databases, APIs, message queues, file systems) is replaced with mocks, stubs, or fakes.

The defining characteristic of a unit test is isolation. If a unit test makes a network call or reads from a real database, it is not a unit test—it is a slow, unreliable integration test masquerading as a unit test.
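To make the isolation rule concrete, here is a minimal Python sketch of a unit test that replaces a database-backed dependency with `unittest.mock.Mock`. `OrderService`, its `repository` collaborator, and `get_order` are hypothetical names invented for this illustration. The point is that the test controls what the dependency returns and verifies the interaction, without touching a real database.

```python
# Minimal unit-test sketch: the database-backed repository is replaced by a mock.
# OrderService, repository and get_order are hypothetical names for illustration.
from decimal import Decimal
from unittest.mock import Mock


class OrderService:
    """Hypothetical code under test: applies a discount to a stored order's total."""

    def __init__(self, repository):
        self.repository = repository  # in production, this would query a real database

    def discounted_total(self, order_id, discount):
        order = self.repository.get_order(order_id)
        if order is None:
            raise KeyError(order_id)
        return order["total"] * (1 - discount)


def test_discounted_total_without_a_database():
    repo = Mock()  # stub: returns canned data instead of querying a database
    repo.get_order.return_value = {"id": "42", "total": Decimal("100.00")}

    service = OrderService(repo)

    assert service.discounted_total("42", Decimal("0.25")) == Decimal("75.00")
    repo.get_order.assert_called_once_with("42")  # the interaction is verified too


def test_missing_order_raises_key_error():
    repo = Mock()
    repo.get_order.return_value = None
    try:
        OrderService(repo).discounted_total("missing", Decimal("0.10"))
    except KeyError:
        return  # expected: the service rejects unknown orders
    raise AssertionError("expected KeyError for a missing order")
```

Because the repository is a mock, the test needs no infrastructure. It fails only if the service's own logic is wrong.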

What Unit Tests Validate

- Return values for given inputs

- Correct handling of edge cases and boundary conditions

- Correct error throwing/handling for invalid inputs

- Algorithm correctness

- State changes within a single object or module

- Business logic that is contained within one function or class

What Unit Tests Do Not Validate

- Whether component A correctly calls component B

- Whether the application correctly reads or writes to a database

- Whether HTTP requests are correctly serialized and deserialized

- Whether the whole application behaves correctly for a user

Unit Testing in Practice

```python
# Example: Pure unit test (no external dependencies)
from decimal import Decimal

# Code under test (hypothetical module name)
from orders import OrderItem, calculate_order_total


def test_calculate_order_total():
    items = [
        OrderItem(price=Decimal("10.00"), quantity=2),
        OrderItem(price=Decimal("5.00"), quantity=1),
    ]

    total = calculate_order_total(items, tax_rate=Decimal("0.1"))

    assert total == Decimal("27.50")  # (20 + 5) * 1.1

# This test validates the calculation logic in isolation.
# No database, no HTTP calls, no message queues.
```

Unit tests should compose 60–70% of the total test suite. They run in milliseconds, require no infrastructure, and provide the fastest feedback on code correctness.

What Is Integration Testing?

Integration testing validates that separately developed components, services, or modules work correctly when combined. Unlike unit tests, integration tests use real component instances (or carefully controlled doubles for specific boundaries) and validate the interactions across component boundaries.

The defining characteristic of an integration test is the presence of real dependencies. A test that validates that the order service correctly saves to PostgreSQL, or that the payment service correctly calls the Stripe API, is an integration test.
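By contrast, an integration test exercises the real dependency. The sketch below uses a hypothetical `OrderRepository` and Python's built-in `sqlite3` in-memory database as the real SQL engine. SQLite is chosen only so the example is self-contained. For the PostgreSQL case described above, a team would more typically start a disposable PostgreSQL instance (for example with Testcontainers or Docker Compose) and run the same kind of test against it.

```python
# Sketch of an integration test: repository code runs against a real SQL engine.
# SQLite in-memory stands in for PostgreSQL; OrderRepository is hypothetical.
import sqlite3
from decimal import Decimal


class OrderRepository:
    """Hypothetical repository under test; totals are stored as TEXT to keep Decimal precision."""

    def __init__(self, conn):
        self.conn = conn

    def save(self, order_id, total):
        with self.conn:  # commits on success, rolls back on error
            self.conn.execute(
                "INSERT INTO orders (id, total) VALUES (?, ?)", (order_id, str(total))
            )

    def get_total(self, order_id):
        row = self.conn.execute(
            "SELECT total FROM orders WHERE id = ?", (order_id,)
        ).fetchone()
        return None if row is None else Decimal(row[0])


def test_order_round_trips_through_a_real_database():
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id TEXT PRIMARY KEY, total TEXT NOT NULL)")
    repo = OrderRepository(conn)

    repo.save("42", Decimal("27.50"))
    assert repo.get_total("42") == Decimal("27.50")  # real INSERT followed by real SELECT
    assert repo.get_total("missing") is None

    # The real engine enforces constraints that a mock would silently accept.
    try:
        repo.save("42", Decimal("1.00"))
    except sqlite3.IntegrityError:
        pass
    else:
        raise AssertionError("duplicate primary key should be rejected")
    finally:
        conn.close()
```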

What Integration Tests Validate

- Service-to-database communication (correct queries, correct data handling)

- Service-to-service HTTP/gRPC communication (correct contracts, correct serialization)

- Service-to-message-queue communication (correct publishing and consuming)

- Authentication and authorization across service boundaries

- Error propagation between components (what happens when one component fails)

- Data transformation correctness across component boundaries

What Integration Tests Do Not Validate

- Whether the complete user-facing system meets all functional requirements

- Whether the system handles production-scale concurrent traffic

- Whether the UI correctly reflects backend changes

- End-to-end user workflows across the full application stack

Integration Testing in Practice

```text
# Shift-Left API auto-generates integration tests from an OpenAPI spec.
# Example of what gets validated automatically:

GET /api/orders/{orderId}
  ✓ Returns 200 with correct response schema for valid orderId
  ✓ Returns 404 for non-existent orderId
  ✓ Returns 401 without authentication token
  ✓ Returns 403 for unauthorized user (wrong tenant)
  ✓ Response latency within SLA threshold

POST /api/orders
  ✓ Creates order with valid payload (validates database write)
  ✓ Returns 400 with validation errors for invalid payload
  ✓ Returns 409 for duplicate order ID
  ✓ Correctly handles optional fields
```

Integration tests should compose 20–30% of the total test suite. Each test takes seconds to run and requires real component instances or a controlled environment.
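Teams that write these checks by hand might implement the first three GET checks listed above roughly as in the following standard-library Python sketch. It is illustrative only and does not show how Shift-Left API generates or runs tests. The in-process server, the `ORDERS` fixture, and the `test-token` credential are hypothetical stand-ins for a deployed order service, which a real suite would reach at its test-environment URL. What makes this an integration test is that every check crosses a real HTTP boundary, so headers, status codes, and JSON serialization are all exercised.

```python
# Hand-written sketch of three GET /api/orders/{orderId} checks over real HTTP.
# ORDERS, OrderHandler and "test-token" are hypothetical stand-ins for a deployed service.
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

ORDERS = {"42": {"id": "42", "status": "PAID", "total": "27.50"}}
OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))  # ignore proxy env vars


class OrderHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.headers.get("Authorization") != "Bearer test-token":
            return self._send(401, {"error": "unauthenticated"})
        order_id = self.path.rsplit("/", 1)[-1]
        if not self.path.startswith("/api/orders/") or order_id not in ORDERS:
            return self._send(404, {"error": "not found"})
        return self._send(200, ORDERS[order_id])

    def _send(self, status, body):
        payload = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, *args):  # silence per-request logging
        pass


def get(base_url, path, token=None):
    """Send a GET request and return (status code, decoded JSON body)."""
    request = urllib.request.Request(base_url + path)
    if token:
        request.add_header("Authorization", f"Bearer {token}")
    try:
        with OPENER.open(request, timeout=5) as response:
            return response.status, json.loads(response.read())
    except urllib.error.HTTPError as err:  # 4xx/5xx responses arrive as HTTPError
        return err.code, json.loads(err.read())


def run_order_checks():
    server = ThreadingHTTPServer(("127.0.0.1", 0), OrderHandler)  # port 0: any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base = f"http://127.0.0.1:{server.server_address[1]}"
    try:
        status, body = get(base, "/api/orders/42", token="test-token")
        assert status == 200 and set(body) == {"id", "status", "total"}  # schema check
        assert get(base, "/api/orders/999", token="test-token")[0] == 404
        assert get(base, "/api/orders/42")[0] == 401  # no Authorization header
        return "all order checks passed"
    finally:
        server.shutdown()
        server.server_close()
```

Even this small sketch needs a server fixture, an HTTP helper, and hand-maintained expectations. That maintenance burden is what makes code-based integration tests expensive to keep current as an API grows.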

What Is System Testing?

System testing validates the complete, integrated application as a whole from the perspective of an end user or external system. System tests do not concern themselves with the internal implementation or component boundaries—they treat the application as a black box and validate that it meets its functional and non-functional requirements in its entirety.


System testing often encompasses:

- End-to-end (E2E) testing: Browser-automated tests of complete user workflows

- Performance testing: Validating response times, throughput, and stability under load

- Security testing: Validating that the complete system is resistant to attack

- Usability testing: Validating user experience quality

- Accessibility testing: Validating compliance with accessibility standards

What System Tests Validate

- Complete user workflows from start to finish (e.g., browse → add to cart → checkout → confirmation)

- System performance under realistic load conditions

- Cross-browser compatibility and responsive design

- Accessibility compliance (WCAG standards)

- Security controls at the system level

- Business process correctness across the full application

What System Tests Do Not Validate

- The internal correctness of individual components (that is unit testing's job)

- The correctness of individual service-to-service interactions (that is integration testing)

- Every possible edge case (system tests are expensive; they focus on critical paths)

System Testing in Practice

```typescript
// Example: Playwright system/E2E test
import { test, expect } from '@playwright/test';

test('User can complete checkout flow end to end', async ({ page }) => {
  // Setup: '/' resolves against the baseURL configured in playwright.config
  await page.goto('/');

  // Step 1: Browse and add to cart
  await page.click('[data-testid="product-listing"]');
  await page.click('[data-testid="add-to-cart"]');

  // Step 2: Proceed to checkout
  await page.click('[data-testid="cart-icon"]');
  await page.click('[data-testid="checkout-button"]');

  // Step 3: Complete payment (4242 4242 4242 4242 is Stripe's standard test card)
  await page.fill('[data-testid="card-number"]', '4242424242424242');
  await page.click('[data-testid="place-order"]');

  // Assert: Order confirmation visible
  await expect(page.locator('[data-testid="order-confirmation"]')).toBeVisible();
});
```

System tests should compose 5–10% of the total test suite. They take seconds to minutes each to run and require the full application stack to be deployed.

Why the Three-Layer Model Matters

Cost of Defects at Each Layer

NIST research found that defects cost more to fix the later they are found. Mapped onto the three-layer model, a commonly used order-of-magnitude rule of thumb looks like this:

- Defect caught by unit test: ~$1 (minutes of developer time)

- Defect caught by integration test: ~$10 (hours of investigation, environment setup)

- Defect caught by system test: ~$100 (multi-team debugging, full environment)

- Defect caught in production: ~$1,000+ (incident response, customer impact, reputation)

A team that invests primarily in system testing is paying 10–100x more per defect than a team that catches the same defects at the unit and integration layers.
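The arithmetic behind that comparison can be sketched using the illustrative per-layer costs above. The two distributions of where 100 defects get caught are hypothetical. They were chosen only to contrast a pyramid-shaped strategy with a system-test-heavy one.

```python
# Worked example using the illustrative cost-per-defect figures above.
# Both "where were 100 defects caught" distributions are hypothetical.
COST_PER_DEFECT = {"unit": 1, "integration": 10, "system": 100, "production": 1_000}


def total_defect_cost(caught_at):
    """Sum the illustrative fix cost for defects, keyed by the layer that caught them."""
    return sum(COST_PER_DEFECT[layer] * count for layer, count in caught_at.items())


pyramid_team = {"unit": 70, "integration": 20, "system": 8, "production": 2}
inverted_team = {"unit": 10, "integration": 20, "system": 50, "production": 20}

print(total_defect_cost(pyramid_team))   # 70 + 200 + 800 + 2000 = 3070
print(total_defect_cost(inverted_team))  # 10 + 200 + 5000 + 20000 = 25210
```

Under these assumed numbers, the system-test-heavy team spends roughly eight times as much to fix the same 100 defects. Most of that cost comes from the defects that reach production.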

Speed Determines Feedback Loop Quality

The testing pyramid is fundamentally about feedback loop speed:

- Unit tests: Milliseconds per test → 2-3 minute suite → Feedback within minutes of writing code

- Integration tests: Seconds per test → 5-10 minute suite → Feedback within a build cycle

- System tests: 10-30 seconds per test → 15-30 minute suite → Feedback at release time

A team that relies on system tests for primary defect detection gets feedback hours or days after a defect is introduced. A team that uses all three layers gets feedback within minutes. This is the core principle behind shift-left testing: moving defect detection as early as possible in the development lifecycle. DORA's research likewise identifies fast feedback, delivered through practices such as continuous integration and test automation, as a key driver of software delivery performance.

Key Characteristics of Each Layer

Unit Testing

- Isolation: Complete. All dependencies mocked.

- Speed: Milliseconds per test

- Maintenance: Low. Tests change only when logic changes.

- Ownership: Developers

- CI trigger: Every commit

- Infrastructure required: None (runs in the developer's process)

- Coverage target: 60–70% of test suite, 80%+ code coverage

Integration Testing

- Isolation: Partial. Tests real component interactions, may mock some external boundaries.

- Speed: Seconds per test

- Maintenance: Medium. Tests change when APIs or contracts change.

- Ownership: QA engineers, shared with developers

- CI trigger: Every build (post-merge)

- Infrastructure required: Real component instances (Docker Compose, TestContainers)

- Coverage target: 20-30% of test suite, 100% of API endpoints

System Testing

- Isolation: None. Tests the complete system as deployed.

- Speed: 10-30 seconds per test

- Maintenance: High. Tests change when UI, workflows, or requirements change.

- Ownership: QA engineers, product teams

- CI trigger: Pre-release or nightly

- Infrastructure required: Full deployed application stack

- Coverage target: 5-10% of test suite, critical user journeys only

The Testing Pyramid Architecture

Three-Way Comparison Table

| Dimension | Unit Testing | Integration Testing | System Testing |
|---|---|---|---|
| Scope | Single function or class | Multiple components | Complete system |
| Dependencies | All mocked | Real components (some mocked) | Full stack deployed |
| Speed (per test) | Milliseconds | Seconds | 10–30 seconds |
| Cost per test | Very low | Medium | High |
| Maintenance | Low | Medium | High |
| Defects caught | Logic, validation, algorithms | Contracts, serialization, auth | Workflows, UX, performance |
| Ownership | Developers | QA + Developers | QA + Product |
| CI trigger | Every commit | Every build | Pre-release/nightly |
| Coverage target | 60–70% of suite | 20–30% of suite | 5–10% of suite |
| Primary tools | Jest, pytest, JUnit | Shift-Left API, TestContainers, Pact | Playwright, Cypress, k6 |

Real Implementation Example

Problem

A fintech startup building an investment platform had the classic inverted pyramid problem: they had 800 E2E tests (written by a dedicated QA team over 18 months), 150 integration tests (mostly written ad hoc), and only 200 unit tests. The E2E suite took 4 hours to run and was 35% flaky. The CI pipeline was effectively useless—developers had learned to deploy despite red builds because the failures were usually infrastructure issues, not real defects. Meanwhile, a genuine business logic defect had slipped through and caused incorrect tax calculations in production for 3 weeks before being detected.

The root problem: an inverted pyramid concentrates defect detection at the slowest, most expensive, and least reliable layer. Real defects then hide in the noise of infrastructure failures.

Solution

Phase 1: Flip the pyramid (months 1–2)

Unit tests:

- Developers wrote unit tests for all new code immediately

- QA engineers identified the 50 highest-risk business logic functions

- Unit tests written for all 50 functions; coverage increased from 18% to 67%

Integration tests via Shift-Left API:

- Imported OpenAPI specifications for 4 core services

- Auto-generated 340 integration tests covering all endpoints

- Integrated into CI pipeline running on every build (4 minutes)

- Replaced 200 E2E tests that were actually testing API behavior, not UI

System tests:

- E2E suite audited: 800 tests reduced to 120 critical user journey tests

- Migrated from Selenium to Playwright (4 hours → 22 minutes)

- Scheduled nightly + triggered pre-release

Phase 2: CI pipeline correction (month 3)

- Unit tests gate every PR (2.5 minutes)

- Integration tests gate every build (4 minutes)

- E2E tests gate every release (22 minutes, nightly for regular monitoring)

Results After 90 Days

| Metric | Before | After |
|---|---|---|
| Unit test count | 200 | 1,847 |
| Integration test count | 150 | 340 (all via Shift-Left API) |
| System test count | 800 | 120 |
| E2E suite runtime | 4 hours | 22 minutes |
| E2E flakiness rate | 35% | 3% |
| CI pipeline time (PR gate) | 4 hours | 6 minutes |
| Production defect rate | 2.3/month | 0.2/month |
| Tax calculation defect class | Recurred 3x | Never recurred (caught in unit tests) |

Common Challenges and Solutions

Challenge: Team cannot distinguish between integration and system tests

Solution: Define the boundary explicitly: integration tests do not use a browser, do not test user-visible UI, and validate component interactions. System tests use a browser or full deployment and test user-visible behavior. Document this definition and enforce it in code review.

Challenge: Integration tests require complex environment setup

Solution: Containerize using Docker Compose or TestContainers. Define the integration test environment in code (infrastructure-as-code), commit it to the repository, and run it identically on every machine.

Challenge: Unit tests are slow because they are not really unit tests

Solution: Audit your "unit" test suite. Any test that makes network calls, reads files, or queries databases is an integration test and should be moved to the integration layer. True unit tests run in under 100ms each.
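One way to enforce that audit automatically is to make network access fail loudly inside unit tests. The sketch below patches `socket.socket.connect` with `unittest.mock`. It works in the same spirit as plugins such as pytest-socket, although it does not use that plugin's API. `no_network`, `NetworkAccessInUnitTest`, and `fetch_exchange_rate` are hypothetical names. The guard only intercepts outbound `connect` calls, so treat it as a starting point rather than a complete sandbox.

```python
# Sketch: fail any "unit" test that tries to open a network connection.
# no_network, NetworkAccessInUnitTest and fetch_exchange_rate are hypothetical names.
import socket
from contextlib import contextmanager
from unittest import mock


class NetworkAccessInUnitTest(RuntimeError):
    """Raised when code running inside a unit test tries to connect to the network."""


@contextmanager
def no_network():
    def refuse(address, *args, **kwargs):
        raise NetworkAccessInUnitTest(f"unit test tried to connect to {address!r}")

    # Patching the class attribute affects every socket.socket instance while active;
    # mock restores the original connect method when the block exits.
    with mock.patch.object(socket.socket, "connect", side_effect=refuse):
        yield


def fetch_exchange_rate():
    """Hypothetical helper with a hidden dependency on an external service."""
    with socket.create_connection(("127.0.0.1", 9), timeout=1):
        return 1.0
```

Wrapping a unit test's body in `with no_network():` turns a hidden dependency into an immediate, descriptive failure. That failure flags the test as a candidate to move to the integration layer.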

Challenge: API integration tests break whenever the API changes

Solution: Use Shift-Left API and re-import your OpenAPI spec when the API changes. Tests regenerate automatically, eliminating the manual update cycle that makes code-based integration tests expensive to maintain.

Challenge: No clear ownership for integration test failures

Solution: Integration tests validate the contract between two components. The team that owns the API being tested owns the integration test for that API. For cross-team service interactions, implement contract testing with Pact.
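The idea behind consumer-driven contract testing can be sketched without any framework. The consuming team records the fields it relies on, and the provider team checks its real responses against that record in its own CI. The code below is a hand-rolled illustration of the idea, not Pact's API. The contract format, `CONSUMER_CONTRACT`, and `verify_provider_response` are hypothetical.

```python
# Hand-rolled illustration of a consumer-driven contract check (not Pact's API).
# The contract format and all names here are hypothetical.
CONSUMER_CONTRACT = {
    # Which request the consumer makes; a provider-side check would replay it.
    "request": {"method": "GET", "path": "/api/orders/42"},
    "response": {"status": 200, "body_types": {"id": str, "status": str, "total": str}},
}


def verify_provider_response(contract, status, body):
    """Return a list of contract violations; an empty list means the provider complies."""
    expected = contract["response"]
    problems = []
    if status != expected["status"]:
        problems.append(f"status {status} != {expected['status']}")
    for field, field_type in expected["body_types"].items():
        if field not in body:
            problems.append(f"missing field {field!r}")
        elif not isinstance(body[field], field_type):
            problems.append(f"field {field!r} should be {field_type.__name__}")
    # Extra fields are allowed: the consumer only pins the fields it actually reads.
    return problems


# Provider-side verification, e.g. run in the provider team's CI against its real response.
print(verify_provider_response(CONSUMER_CONTRACT, 200, {"id": "42", "status": "PAID", "total": 27.5}))
# -> ["field 'total' should be str"]
```

Pact adds what this sketch omits: it generates contracts from consumer tests, shares them between teams, and replays the recorded requests against the provider.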

Best Practices for the Three Testing Layers

- Follow the pyramid proportions. 60–70% unit, 20–30% integration, 5–10% system. Deviating creates cost and reliability problems.

- Treat each layer as distinct. Do not let integration tests creep into the unit layer (by using real dependencies) or system test logic creep into the integration layer.

- Own unit tests in the development team. Developers who write features write unit tests as part of the definition of done.

- Automate API integration coverage completely. Use Shift-Left API to generate tests from your OpenAPI spec—100% endpoint coverage without writing code. For microservices architectures, see our dedicated API testing strategy for microservices guide.

- Limit system tests to critical user journeys. More than 15–20% of your test suite in E2E/system tests is a warning sign.

- Match CI triggers to layer speed. Unit tests on every commit. Integration tests on every build. System tests nightly or pre-release. Our guide on how to build a CI/CD testing pipeline covers the pipeline configuration in detail.

- Measure and report coverage by layer. Know your unit coverage percentage, your API endpoint coverage percentage, and your critical user journey coverage percentage.

- Delete system tests that duplicate integration coverage. If a system test validates something already covered by an integration test, the system test is redundant and expensive.

- Define testing layer ownership in your test automation strategy. Clear ownership prevents gaps and duplicated effort across layers.

- Understand how these layers relate to functional testing vs integration testing. The terminology overlaps — your team needs a shared vocabulary.

Testing Pyramid Checklist

- ✔ Unit tests compose 60–70% of the total test suite

- ✔ Integration tests compose 20–30% of the total test suite

- ✔ System tests compose 5–10% of the total test suite

- ✔ Unit tests run on every commit via pre-commit hooks and PR gates

- ✔ Integration tests run on every build (all API endpoints covered via Shift-Left API)

- ✔ System tests run nightly and pre-release (critical user journeys only)

- ✔ No unit tests make network calls or access real databases

- ✔ Coverage metrics are tracked and reported for all three layers
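The first three checklist items can be tracked simply by comparing test counts per layer against the target ranges. The sketch below uses a hypothetical helper, `pyramid_report`. The ranges come from this guide, and the example counts are the case study's "before" state (200 unit, 150 integration, and 800 system tests). How the counts are collected, for example from test markers or directory layout, is left open.

```python
# Sketch: compare a suite's per-layer test counts with this guide's target ranges.
# pyramid_report is a hypothetical helper; how counts are collected is left open.
TARGET_SHARE = {  # (minimum, maximum) share of the whole suite
    "unit": (0.60, 0.70),
    "integration": (0.20, 0.30),
    "system": (0.05, 0.10),
}


def pyramid_report(counts):
    """Map each layer to (share of suite rounded to 3 places, inside target range?)."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("the suite has no tests")
    report = {}
    for layer, (low, high) in TARGET_SHARE.items():
        share = counts.get(layer, 0) / total
        report[layer] = (round(share, 3), low <= share <= high)
    return report


# The case study's "before" suite: 200 unit, 150 integration, 800 system tests.
print(pyramid_report({"unit": 200, "integration": 150, "system": 800}))
# Every layer is flagged False: unit ~17%, integration ~13%, system ~70%.
```

For that inverted suite, every layer falls outside its target range, and system tests make up roughly 70% of the total.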

Frequently Asked Questions

What is the difference between unit testing, integration testing, and system testing?

Unit testing validates individual functions or classes in isolation. Integration testing validates that multiple components work correctly together. System testing validates the entire application as a complete system from the user or external system perspective.

In what order should unit, integration, and system tests run?

Unit tests run first (on every commit), then integration tests (on every build), then system tests (pre-release or nightly). This order reflects cost and speed—cheaper, faster tests run more frequently and catch defects earlier.

What is the recommended ratio of unit to integration to system tests?

The standard testing pyramid recommends approximately 70% unit tests, 20% integration tests, and 10% system tests. Weighting the suite toward unit tests gives faster feedback and lower maintenance cost, because the largest share of tests sits in the cheapest, fastest layer.

What tools automate integration and API testing in the test pyramid?

Shift-Left API operates at the integration and API layers of the testing pyramid, auto-generating integration tests from OpenAPI/Swagger specifications. This provides automated coverage for the middle layer of the pyramid—the most valuable and most commonly under-invested layer.

Conclusion

Unit testing, integration testing, and system testing are the three layers of a complete quality strategy. Each validates a distinct scope, catches a distinct defect class, and operates at a distinct cost point. The pyramid model prescribes more investment at the cheaper, faster layers and focused investment at the expensive system layer—a distribution that minimizes total quality cost while maximizing defect detection coverage. For the integration and API layer, Shift-Left API eliminates the technical barrier to comprehensive coverage by auto-generating tests from your OpenAPI specification. Start your free trial and build your integration layer coverage today.

Related: Functional Testing vs Integration Testing | What Is Shift Left Testing | Shift Left Testing Strategy | API Testing Strategy for Microservices | How to Build a CI/CD Testing Pipeline | DevOps Testing Strategy | No-code API testing platform | Start Free Trial
