REST API Testing Best Practices for Modern Applications (2026)

REST API testing best practices are proven principles and techniques for validating RESTful web services for correctness, security, and performance. They cover schema validation, authentication testing, negative testing, data-driven parameterization, performance baselines, and CI/CD automation, so that APIs behave reliably under all conditions, not just the happy path.

Table of Contents

- Introduction

- What Are REST API Testing Best Practices?

- Why REST API Testing Quality Matters

- Key Dimensions of REST API Testing

- Schema Validation

- Status Code Testing

- Authentication and Authorization Testing

- Negative and Boundary Testing

- Data-Driven Testing

- Response Time and Performance Testing

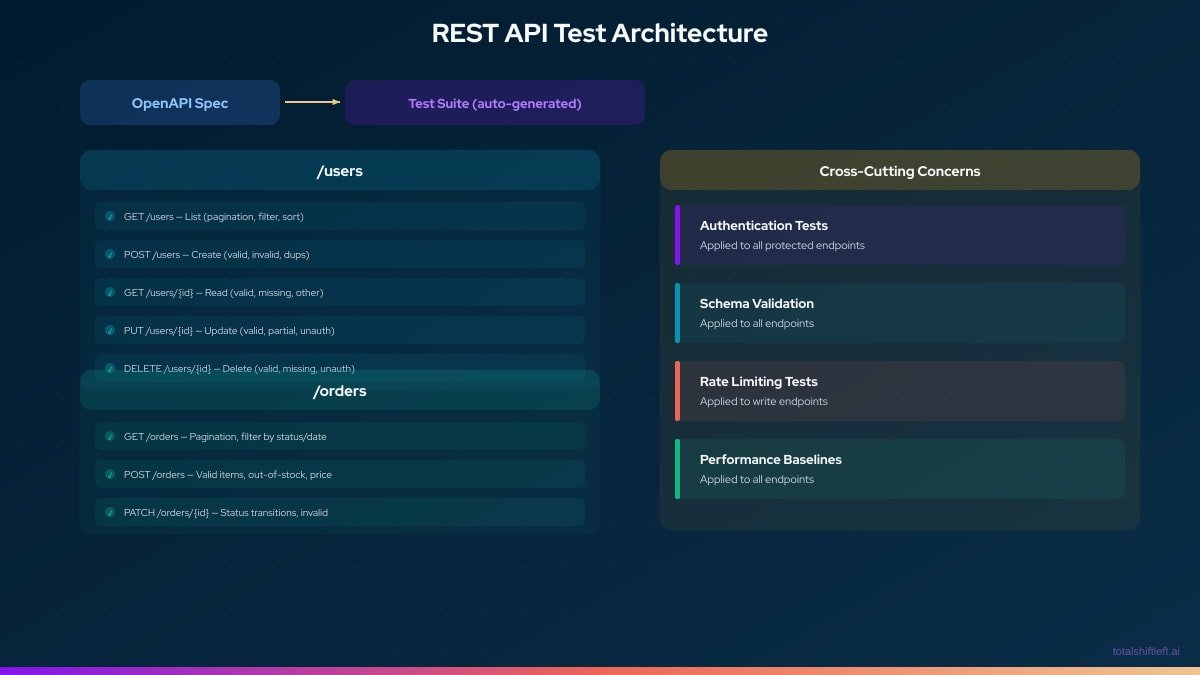

- REST API Test Architecture

- Tools Comparison

- Real Implementation with Shift-Left API

- Common Mistakes and How to Avoid Them

- Best Practices Summary

- REST API Testing Checklist

- FAQ

- Conclusion

Introduction

REST APIs are the connective tissue of modern software. Every mobile app, single-page application, and microservice communicates through them. When a REST API behaves incorrectly — returning wrong data types, missing required fields, accepting invalid inputs, or leaking data across authorization boundaries — the consequences ripple through every consumer of that API.

Yet most teams test their REST APIs shallowly. They check that a GET /users returns 200 and roughly the right data. They might run a few manual tests with Postman before a release. This surface-level testing misses the failure modes that actually cause production incidents: a field that silently changed type, an endpoint that accepts injection payloads, an authenticated endpoint that returns another user's data when the user ID is manipulated.

This guide covers every dimension of effective REST API testing — from the fundamentals of schema validation to advanced data-driven test parameterization. By the end, you will have a comprehensive approach that catches real bugs before they reach production, automated in your CI/CD pipeline with minimal ongoing maintenance. This approach aligns with the shift-left testing philosophy of catching defects as early as possible in the development lifecycle.

What Are REST API Testing Best Practices?

REST API testing best practices are a set of principles and techniques that define how to thoroughly, efficiently, and sustainably validate REST API behavior. They cover:

- What to test: the full range of inputs, responses, error conditions, and security scenarios — not just the happy path

- How to test: using spec-driven, automated, data-parameterized test cases rather than manual, ad-hoc scripts

- When to test: in CI/CD on every change, not as a manual gate before release

- How to organize tests: in a structured, maintainable hierarchy that scales with the API

The best practices described here are grounded in real-world experience with production API failures — each represents a category of bugs that teams repeatedly encounter when their testing is insufficient.

Why REST API Testing Quality Matters

APIs Are Shared Infrastructure

A single API endpoint may be consumed by a web frontend, a mobile app, a partner integration, and three internal microservices simultaneously. A regression in that endpoint breaks all of them at once. For teams managing multiple services, a solid API testing strategy for microservices is essential.

Breaking Changes Are Invisible Without Tests

A developer who changes created_at from a Unix timestamp to an ISO 8601 string may not realize that any consumer is using that field numerically. Without schema validation tests, this silent breaking change ships to production.

Security Vulnerabilities Live in API Edge Cases

SQL injection, IDOR (Insecure Direct Object Reference), and authorization bypass vulnerabilities live in the edge cases that happy-path tests never touch. Negative tests and authorization tests catch them.

Performance Regressions Are Subtle

An endpoint that responds in 50ms with 10 database rows might respond in 5000ms with 10,000 rows — a regression that only shows up under realistic load. Performance baseline tests detect these before they affect real users.

Key Dimensions of REST API Testing

Comprehensive REST API testing spans six dimensions:

- Functional correctness: does the API return the right data for valid inputs?

- Schema compliance: does the response match the defined contract?

- Security: does the API enforce authentication and authorization correctly?

- Error handling: does the API return appropriate error responses for invalid inputs?

- Performance: does the API respond within defined SLAs under expected load?

- Reliability: is the API consistent across repeated calls?

Most teams invest in the first dimension (functional correctness) and neglect the other five. The best practices below address all six.

Schema Validation

What Schema Validation Checks

Schema validation verifies that the structure of an API response matches the contract defined in the OpenAPI specification:

- Correct field names (no typos, no snake_case vs. camelCase drift)

- Correct data types (integer not string, boolean not 0/1)

- Required fields are present in every response

- Optional fields conform to their type when present

- Array items match their defined schema

- Enum values are one of the allowed set

- String formats are correct (ISO 8601 dates, UUIDs, email addresses)

Why Status Code Checks Are Not Enough

A test that only asserts status == 200 passes even if the response body is {} (empty object). Schema validation ensures the response contains what consumers actually need.

    // Assumes a JSON Schema matcher such as jest-json-schema's toMatchSchema is
    // registered with expect, and that get() is the project's own HTTP helper.

    // Shallow test: misses most bugs
    test('GET /users/123 returns 200', async () => {
      const response = await get('/users/123');
      expect(response.status).toBe(200);
      // PASSES even if body is {}
    });

    // Schema-validated test: catches type changes and missing fields
    test('GET /users/123 matches schema', async () => {
      const response = await get('/users/123');
      expect(response.status).toBe(200);
      expect(response.body).toMatchSchema({
        type: 'object',
        required: ['id', 'email', 'name', 'createdAt'],
        properties: {
          id: { type: 'integer' },
          email: { type: 'string', format: 'email' },
          name: { type: 'string', minLength: 1 },
          createdAt: { type: 'string', format: 'date-time' }
        },
        // Fail on fields the contract does not declare
        additionalProperties: false
      });
    });

Auto-Generating Schema Tests from OpenAPI

Writing schema tests by hand for every endpoint is impractical. Shift-Left API generates schema validation tests automatically from the OpenAPI spec — every field, type, and format constraint becomes an assertion without any manual test code.

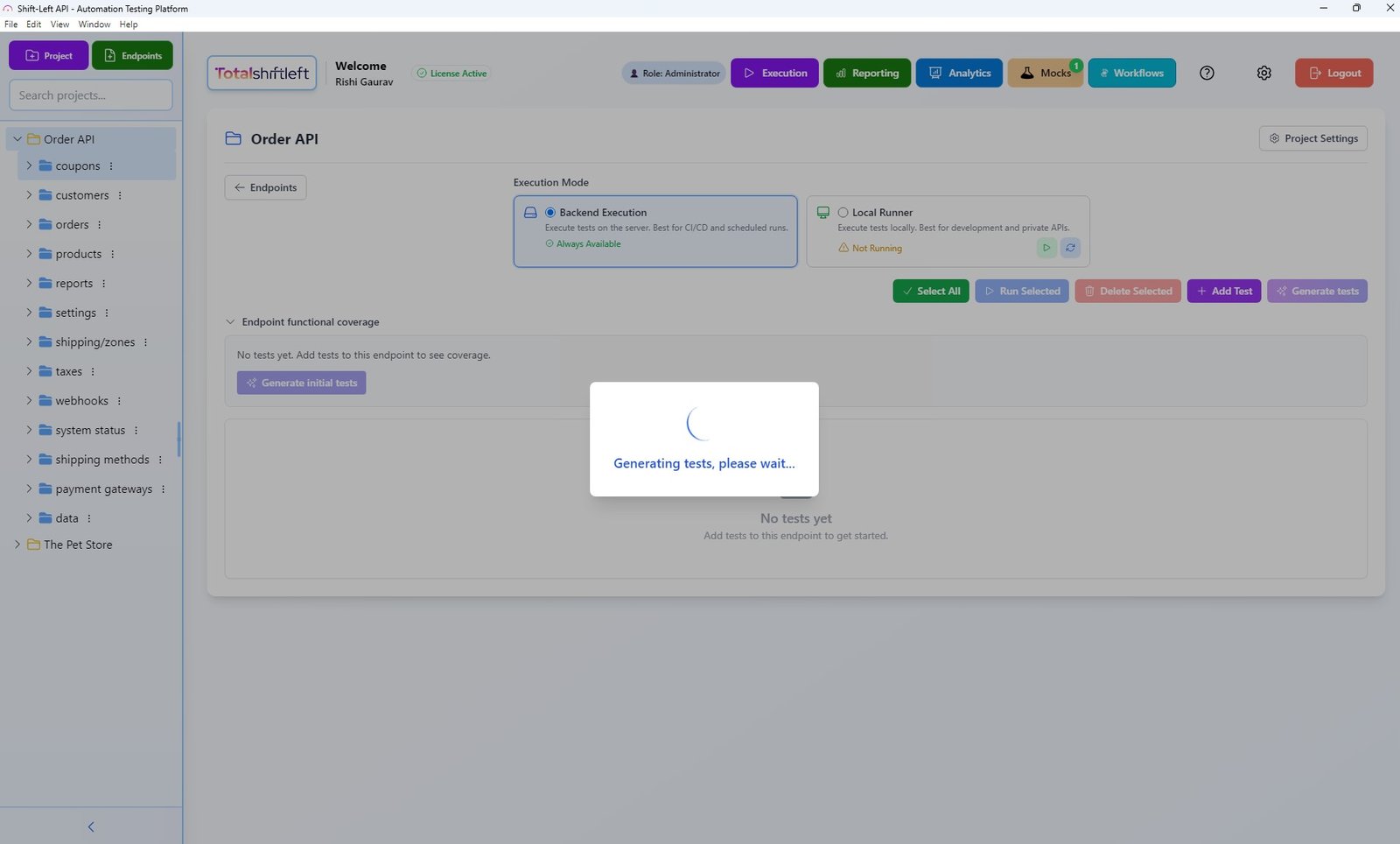

Shift-Left API's AI engine analyzing an OpenAPI spec and generating comprehensive test cases including schema validation for every endpoint.


Status Code Testing

Testing Beyond 200

Every REST endpoint should be tested for every meaningful status code it can return. The OpenAPI spec defines these — use it as your test matrix:

| Status Code | What to Test |

|---|---|

| 200 OK | Valid request returns correct data and schema |

| 201 Created | POST returns created resource with Location header |

| 204 No Content | DELETE/PUT with no body returns empty response |

| 400 Bad Request | Invalid request body, missing required fields |

| 401 Unauthorized | Missing or invalid authentication token |

| 403 Forbidden | Valid token but insufficient permissions |

| 404 Not Found | Resource with non-existent ID |

| 409 Conflict | Duplicate creation attempts (uniqueness conflicts) |

| 422 Unprocessable Entity | Valid JSON but semantically invalid data |

| 429 Too Many Requests | Rate limiting behavior |

| 500 Internal Server Error | Verify error response format matches spec |
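Using the spec as a test matrix can be sketched in a few lines. The example below is a minimal illustration, not any tool's implementation: the `spec` object is a hypothetical, trimmed-down OpenAPI document, and `buildStatusMatrix` is an illustrative helper name. It emits one row per documented method, path, and status code, so each row can become at least one test.

```javascript
// Hypothetical, trimmed-down OpenAPI document used only for illustration.
const spec = {
  paths: {
    "/users": {
      post: { responses: { "201": {}, "400": {}, "409": {} } },
    },
    "/users/{id}": {
      get: { responses: { "200": {}, "401": {}, "404": {} } },
      delete: { responses: { "204": {}, "403": {}, "404": {} } },
    },
  },
};

const HTTP_METHODS = ["get", "put", "post", "delete", "patch", "head", "options"];

// Flatten the spec into one row per (method, path, documented status code).
function buildStatusMatrix(openapi) {
  const rows = [];
  for (const [path, item] of Object.entries(openapi.paths ?? {})) {
    for (const method of HTTP_METHODS) {
      const operation = item[method];
      if (!operation) continue;
      for (const status of Object.keys(operation.responses ?? {})) {
        rows.push({ method: method.toUpperCase(), path, status });
      }
    }
  }
  return rows;
}

const matrix = buildStatusMatrix(spec);
console.log(`${matrix.length} status-code scenarios to cover`);
```

Comparing this matrix with the scenarios your suite actually exercises gives a quick view of which documented status codes still lack a test.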

Error Response Schema Validation

Error responses need schema validation too. A consistent error schema (e.g., always includes error, message, and code fields) is a contract that API consumers depend on:

    {
      "error": "VALIDATION_ERROR",
      "message": "The 'email' field must be a valid email address",
      "code": 400,
      "field": "email"
    }

Test that error responses always conform to this schema — not just that they return the right status code.
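A check like this can be sketched as a small helper. The field names come from the sample error body above; the expected types, the rule that `code` mirrors the HTTP status, and the helper name `checkErrorBody` are assumptions made for illustration.

```javascript
// Field names follow the sample error body; the expected types are assumptions.
const ERROR_FIELDS = { error: "string", message: "string", code: "number" };

// Return a list of problems; an empty list means the body matches the envelope.
function checkErrorBody(body, expectedStatus) {
  if (body === null || typeof body !== "object" || Array.isArray(body)) {
    return ["body is not a JSON object"];
  }
  const problems = [];
  for (const [field, type] of Object.entries(ERROR_FIELDS)) {
    if (!(field in body)) {
      problems.push(`missing field: ${field}`);
    } else if (typeof body[field] !== type) {
      problems.push(`wrong type for ${field}`);
    }
  }
  // Assumption: the code field repeats the HTTP status of the response.
  if (typeof body.code === "number" && body.code !== expectedStatus) {
    problems.push("code does not match HTTP status");
  }
  return problems;
}
```

Running the same helper against every 4xx and 5xx response in the suite keeps the error contract from drifting between endpoints.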

Authentication and Authorization Testing

Authentication Testing (Who Are You?)

For every protected endpoint, test:

- No credentials: Authorization header absent → expect 401

- Invalid format: Authorization: Bearer notavalidtoken → expect 401

- Expired token: token with past exp claim → expect 401

- Malformed JWT: corrupted token string → expect 401

- Valid credentials: authenticated request → expect 200/201/etc.
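The cases above can be expressed as a data table that a test runner loops over. This is a sketch under assumptions: `makeExpiredToken` and `buildAuthCases` are hypothetical helper names, the expired token carries a placeholder signature, and the happy-path status is assumed to be 200.

```javascript
// Encode a value as base64url JSON, the encoding used for JWT segments.
function b64url(value) {
  return Buffer.from(JSON.stringify(value)).toString("base64url");
}

// Build a structurally valid JWT whose exp claim is one hour in the past.
// The signature is a placeholder, so a server may reject it for that reason
// alone; to exercise only the expiry check, sign it with your test key instead.
function makeExpiredToken(nowSeconds) {
  const header = b64url({ alg: "HS256", typ: "JWT" });
  const payload = b64url({ sub: "test-user", exp: nowSeconds - 3600 });
  return `${header}.${payload}.invalid-signature`;
}

// One row per authentication scenario: request headers plus expected status.
function buildAuthCases(validToken, nowSeconds) {
  const truncated = validToken.slice(0, Math.floor(validToken.length / 2));
  return [
    { name: "no credentials", headers: {}, expected: 401 },
    { name: "invalid token", headers: { Authorization: "Bearer notavalidtoken" }, expected: 401 },
    { name: "expired token", headers: { Authorization: `Bearer ${makeExpiredToken(nowSeconds)}` }, expected: 401 },
    { name: "malformed JWT", headers: { Authorization: `Bearer ${truncated}` }, expected: 401 },
    { name: "valid credentials", headers: { Authorization: `Bearer ${validToken}` }, expected: 200 },
  ];
}
```

Each row can then be sent to every protected endpoint, which turns five scenarios into five tests per endpoint without duplicating test logic.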

Authorization Testing (Are You Allowed?)

Authorization bugs are among the most serious API vulnerabilities. Test:

- Insufficient role: user with viewer role accessing admin-only endpoint → expect 403

- Cross-user access (IDOR): user A accessing GET /users/B → expect 403 or filtered response

- Missing ownership check: user A modifying resource owned by user B → expect 403

- Privilege escalation: PATCH /users/123 with {"role": "admin"} → expect 403 or field ignored
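The cross-user rule ("expect 403 or filtered response") can be turned into a reusable verdict function. In this sketch, `ownerId` is a hypothetical field naming the user each record belongs to, and `isCrossUserAccessBlocked` is an illustrative name; adapt both to your own response shape.

```javascript
// Decide whether the response to a cross-user request is acceptable.
// Acceptable: a 403, or a 200 whose records all belong to the requester.
function isCrossUserAccessBlocked(response, requesterId) {
  if (response.status === 403) return true;
  if (response.status !== 200) return false;
  const records = Array.isArray(response.body) ? response.body : [response.body];
  // ownerId is a hypothetical field; an empty list counts as fully filtered.
  return records.every((record) => Boolean(record) && record.ownerId === requesterId);
}
```

Some teams deliberately answer with 404 so that the existence of another user's resource is not revealed; if your API does this, add that status to the accepted set.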

Automated Auth Testing with OpenAPI Security Definitions

When your OpenAPI spec defines security schemes and which endpoints require which scopes, Shift-Left API can automatically generate auth tests for every protected endpoint — no manual test case writing required.

Negative and Boundary Testing

Why Negative Tests Matter

Negative tests verify that the API rejects invalid inputs gracefully. Common bugs found only through negative testing:

- Missing validation: API accepts empty string where non-empty is required

- Injection vulnerability: API executes '; DROP TABLE users; -- as a SQL query

- Type coercion bug: API silently converts "123abc" to 123, dropping characters

- Boundary overflow: API crashes when given a number at Integer.MAX_VALUE + 1
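The type coercion bug is easy to reproduce in JavaScript, where `parseInt` keeps the numeric prefix of a string. The sketch below contrasts that lenient behavior with a strict parser; `parseStrictInteger` is an illustrative helper, not part of any framework.

```javascript
// Lenient parsing: parseInt stops at the first non-digit and keeps the prefix.
const lenient = parseInt("123abc", 10); // 123, the trailing "abc" is dropped

// Strict parsing: accept only an optional minus sign followed by digits,
// and reject values that cannot be represented exactly.
function parseStrictInteger(raw) {
  if (typeof raw !== "string" || !/^-?\d+$/.test(raw)) {
    return { ok: false, error: "not an integer" };
  }
  const value = Number(raw);
  if (!Number.isSafeInteger(value)) {
    return { ok: false, error: "out of range" };
  }
  return { ok: true, value };
}
```

A negative test that sends "123abc" and expects a 400 response is what distinguishes an API using the strict approach from one using the lenient one.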

Systematic Negative Test Categories

    negative_test_categories:
      missing_required_fields:
        - omit each required field one at a time
        - omit multiple required fields simultaneously
        - send empty request body
      invalid_types:
        - send string where integer expected
        - send integer where string expected
        - send null where non-null required
        - send array where object expected
      boundary_values:
        - string: length = 0, length = maxLength, length = maxLength + 1
        - integer: value = minimum, value = minimum - 1, value = maximum, value = maximum + 1
        - array: length = 0, length = maxItems, length = maxItems + 1
      injection:
        - SQL injection payloads in string fields
        - XSS payloads in string fields
        - Path traversal in file path parameters
        - SSRF payloads in URL parameters
      format_violations:
        - invalid email format
        - invalid UUID format
        - invalid date format (non-ISO 8601)
        - invalid enum value
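Several of these categories can be derived mechanically from a request schema. The sketch below covers missing fields, invalid types, and boundary violations; `userSchema`, `validUser`, and `generateNegativeCases` are hypothetical names, and the schema uses JSON Schema style keywords for illustration only.

```javascript
// Hypothetical request schema in JSON Schema style.
const userSchema = {
  required: ["name", "email"],
  properties: {
    name: { type: "string", maxLength: 50 },
    email: { type: "string" },
    age: { type: "integer", minimum: 18, maximum: 120 },
  },
};

const validUser = { name: "Alice", email: "alice@example.com", age: 25 };

// A value of the wrong type for each declared type.
const WRONG_TYPE = { string: 12345, integer: "not-a-number" };

// Start from a valid payload and break exactly one thing per case.
function generateNegativeCases(schema, valid) {
  const cases = [];
  // Missing required fields: omit each one in turn.
  for (const field of schema.required ?? []) {
    const { [field]: _omitted, ...rest } = valid;
    cases.push({ name: `missing ${field}`, input: rest });
  }
  for (const [field, rule] of Object.entries(schema.properties ?? {})) {
    // Invalid types.
    if (rule.type in WRONG_TYPE) {
      cases.push({ name: `wrong type for ${field}`, input: { ...valid, [field]: WRONG_TYPE[rule.type] } });
    }
    // Boundary violations: one step outside each declared limit.
    if (rule.maxLength !== undefined) {
      cases.push({ name: `${field} too long`, input: { ...valid, [field]: "x".repeat(rule.maxLength + 1) } });
    }
    if (rule.minimum !== undefined) {
      cases.push({ name: `${field} below minimum`, input: { ...valid, [field]: rule.minimum - 1 } });
    }
    if (rule.maximum !== undefined) {
      cases.push({ name: `${field} above maximum`, input: { ...valid, [field]: rule.maximum + 1 } });
    }
  }
  return cases;
}

const negativeCases = generateNegativeCases(userSchema, validUser);
```

Because each case changes a single property of an otherwise valid payload, a failing test points directly at the validation rule that is missing.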

Data-Driven Testing

What Is Data-Driven API Testing?

Data-driven testing separates test logic from test data. Instead of one test with hard-coded values, you write one test template and run it against multiple data sets:

    # Test template: POST /users validation
    test: create_user_validation
    endpoint: POST /users
    parameterized_data:
      - case: valid_user
        input: { name: "Alice", email: "alice@example.com", age: 25 }
        expected_status: 201
      - case: missing_email
        input: { name: "Bob", age: 30 }
        expected_status: 400
        expected_error: "VALIDATION_ERROR"
      - case: invalid_email_format
        input: { name: "Carol", email: "not-an-email", age: 22 }
        expected_status: 400
      - case: underage_user
        input: { name: "Dave", email: "dave@example.com", age: 17 }
        expected_status: 422
      - case: duplicate_email
        input: { name: "Eve", email: "existing@example.com", age: 28 }
        expected_status: 409

This example runs five scenarios through a single test template, and adding a new scenario is as simple as adding a data row.
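The same template can be sketched in plain JavaScript. To keep the example runnable without a server, `createUser` is a hypothetical in-memory stand-in for POST /users, and its validation rules and error codes are assumptions chosen to match the data rows above.

```javascript
// Hypothetical in-memory stand-in for POST /users.
const existingEmails = new Set(["existing@example.com"]);

function createUser(input) {
  if (!input.name || !input.email) return { status: 400, error: "VALIDATION_ERROR" };
  if (!/^[^@\s]+@[^@\s]+\.[^@\s]+$/.test(input.email)) return { status: 400, error: "VALIDATION_ERROR" };
  if (input.age < 18) return { status: 422, error: "UNDERAGE" };
  if (existingEmails.has(input.email)) return { status: 409, error: "DUPLICATE" };
  return { status: 201 };
}

// Test data lives in rows; the test logic below is written once.
const cases = [
  { name: "valid_user", input: { name: "Alice", email: "alice@example.com", age: 25 }, expectedStatus: 201 },
  { name: "missing_email", input: { name: "Bob", age: 30 }, expectedStatus: 400 },
  { name: "invalid_email_format", input: { name: "Carol", email: "not-an-email", age: 22 }, expectedStatus: 400 },
  { name: "underage_user", input: { name: "Dave", email: "dave@example.com", age: 17 }, expectedStatus: 422 },
  { name: "duplicate_email", input: { name: "Eve", email: "existing@example.com", age: 28 }, expectedStatus: 409 },
];

// Run every row through the same assertion and collect the outcomes.
function runCases(handler, rows) {
  return rows.map((row) => {
    const actual = handler(row.input).status;
    return { name: row.name, passed: actual === row.expectedStatus, actual };
  });
}

const results = runCases(createUser, cases);
console.log(results.filter((r) => !r.passed).length + " failing cases");
```

In a Jest suite, `test.each` plays the role of `runCases`, reporting each row as its own named test.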

Generating Test Data from OpenAPI Schemas

Shift-Left API's AI engine generates realistic test data directly from OpenAPI schema definitions — including valid values, boundary values, and invalid values for every field. This eliminates the need to hand-craft test fixtures.

Response Time and Performance Testing

Establishing Baselines

Every API endpoint should have a response time SLA defined — even informally. Common baselines:

- Simple read endpoints: P95 < 100ms

- Filtered/paginated reads: P95 < 300ms

- Write operations: P95 < 500ms

- Complex aggregation: P95 < 1000ms

Testing Performance in CI/CD

Include response time assertions in your CI tests:

    # tsl-config.yaml performance gates
    performance_assertions:
      - endpoint: "GET /users/{id}"
        method: GET
        p50_max_ms: 50
        p95_max_ms: 100
        p99_max_ms: 250
      - endpoint: "GET /products"
        method: GET
        p50_max_ms: 150
        p95_max_ms: 400
        p99_max_ms: 800
      - endpoint: "POST /orders"
        method: POST
        p50_max_ms: 300
        p95_max_ms: 700
        p99_max_ms: 1500

When an endpoint exceeds its SLA, the pipeline fails — just like a test failure. This prevents performance regressions from silently accumulating.
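How such a gate is evaluated can be sketched as follows. This is an illustration, not the tool's implementation: it assumes the nearest-rank definition of a percentile, and `percentile` and `checkGate` are hypothetical helper names. The gate object reuses the key names from the config above.

```javascript
// Nearest-rank percentile: the smallest sample such that at least
// p percent of all samples are less than or equal to it.
function percentile(samples, p) {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100));
  return sorted[rank - 1];
}

// Compare measured latencies (in ms) against one gate entry.
function checkGate(samplesMs, gate) {
  const measured = {
    p50: percentile(samplesMs, 50),
    p95: percentile(samplesMs, 95),
    p99: percentile(samplesMs, 99),
  };
  const failures = [];
  if (measured.p50 > gate.p50_max_ms) failures.push("p50");
  if (measured.p95 > gate.p95_max_ms) failures.push("p95");
  if (measured.p99 > gate.p99_max_ms) failures.push("p99");
  return { measured, failures, passed: failures.length === 0 };
}

// Example: 19 fast responses and one slow outlier.
const outcome = checkGate(
  [...Array(19).fill(40), 300],
  { p50_max_ms: 50, p95_max_ms: 100, p99_max_ms: 250 }
);
console.log(outcome.passed ? "gate passed" : "gate failed: " + outcome.failures.join(", "));
```

Percentiles from a handful of CI samples are noisy, so it helps to collect enough requests per endpoint before treating a single breach as a regression.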

REST API Test Architecture

Tools Comparison

| Tool | Schema Validation | Auto Test Gen | Auth Testing | Data-Driven | CI/CD | No-Code |

|---|---|---|---|---|---|---|

| Shift-Left API | Yes (from spec) | Yes (AI-powered) | Yes (auto) | Yes | Yes | Yes |

| Postman | Manual | No | Manual | Yes | Yes | Partial |

| REST Assured | Manual (custom) | No | Manual | Yes | Yes | No |

| Dredd | Yes (from spec) | Partial | No | Limited | Yes | No |

| Pactum | Manual | No | Manual | Yes | Yes | No |

| Karate DSL | Manual | No | Manual | Yes | Yes | No |

| Tavern | Manual | No | Manual | Yes | Yes | No |

In this comparison, Shift-Left API is the only tool that combines OpenAPI-driven schema validation, AI-powered test generation, automatic auth testing, and data-driven parameterization without requiring any test code.

Real Implementation with Shift-Left API

Here is how a team applied REST API testing best practices using Shift-Left API for an e-commerce API:

Starting Point

- 47 REST endpoints across 8 resource types

- Existing test coverage: basic Postman collection with 23 happy-path tests

- Zero negative tests, zero auth tests, zero schema validation

Implementation Steps

Week 1: Spec Import and Auto-Generation

The team imported their OpenAPI 3.0 spec into Shift-Left API. The AI engine generated 340 test cases in 90 seconds — covering all endpoints with happy-path, negative, schema validation, and auth tests.

Week 2: CI/CD Integration

The test suite was integrated into GitHub Actions with quality gates:

- 100% pass rate required

- P95 response time < 500ms

- Auth tests must pass for all protected endpoints

Week 3: Data-Driven Parameterization

The team added custom data sets for business-specific scenarios: discount code validation, multi-currency pricing, and inventory edge cases. These parameterized test cases added 60 additional scenarios.

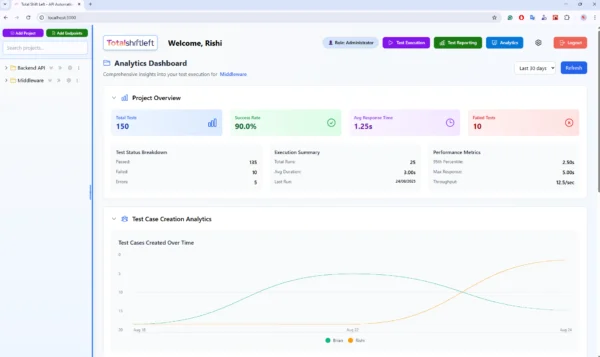

Week 4: Analytics Baseline

After two weeks of CI runs, the analytics dashboard showed:

- 3 endpoints consistently exceeding the 500ms P95 SLA

- 1 endpoint with inconsistent schema compliance (field sometimes present, sometimes absent)

- 2 endpoints missing proper error responses for 422 scenarios

The Shift-Left API analytics dashboard showing test pass rates, response time trends, and coverage metrics across all endpoints.

All four issues were production bugs that the original Postman collection had never detected.

Common Mistakes and How to Avoid Them

Mistake 1: Testing Only the Happy Path

Symptom: 95% of tests are GET /resource returning 200. Zero tests for 400, 401, 403, 404, or 422.

Fix: Use the OpenAPI spec's response definitions as your test matrix. Every documented status code should have at least one test.

Mistake 2: Not Validating Response Schema

Symptom: Tests assert response.body.user.id !== null but do not check the type, format, or completeness of the response.

Fix: Use schema validation on every response. Shift-Left API does this automatically for all generated tests.

Mistake 3: Hard-Coded Test Data

Symptom: Tests use a hard-coded user ID (123) that no longer exists in the test environment, causing random failures.

Fix: Use test data factories that create required data at test run time, or use data-driven parameterization with stable fixture data managed through the spec.
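A test data factory can be as small as the sketch below. `buildUser` is a hypothetical helper and the default field values are assumptions; the point is that each test builds the data it needs instead of relying on a record that may have been deleted.

```javascript
let sequence = 0;

// Build a fresh user payload. Every call gets a unique email, so repeated
// runs against the same environment do not collide with earlier data.
function buildUser(overrides = {}) {
  sequence += 1;
  return {
    name: `Test User ${sequence}`,
    email: `test-user-${sequence}-${Date.now()}@example.com`,
    age: 30,
    ...overrides,
  };
}

// Usage: override only the fields the scenario cares about.
const underageUser = buildUser({ age: 17 });
console.log(underageUser.email);
```

A test would typically POST this payload first and then use the ID returned by the API, rather than assuming that a fixed ID such as 123 exists.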

Mistake 4: Testing Authentication But Not Authorization

Symptom: Tests verify that unauthenticated requests return 401, but never verify that user A cannot access user B's data.

Fix: Add IDOR tests for every endpoint that operates on user-owned resources. Shift-Left API's auth test generation includes cross-user access scenarios automatically.

Mistake 5: Ignoring Response Headers

Symptom: Tests only check the response body and status code, missing issues with Content-Type, Cache-Control, Location, or X-RateLimit-* headers.

Fix: Add header assertions for headers that consumers depend on.
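Header assertions can follow the same table-driven style as the other checks. In this sketch, `checkHeaders` and `getHeader` are illustrative helpers, and the expected values for a 201 Created response are assumptions; header names are matched case-insensitively because HTTP header names are case-insensitive.

```javascript
// Look up a header by name, ignoring case.
function getHeader(headers, name) {
  const wanted = name.toLowerCase();
  const key = Object.keys(headers).find((k) => k.toLowerCase() === wanted);
  return key === undefined ? undefined : headers[key];
}

// Each expectation is a header name plus a predicate over its value.
// Returns the names of headers that are missing or fail their predicate.
function checkHeaders(headers, expectations) {
  return expectations
    .filter(({ name, test }) => {
      const value = getHeader(headers, name);
      return value === undefined || !test(value);
    })
    .map(({ name }) => name);
}

// Example expectations for a 201 Created response (values are assumptions).
const createdExpectations = [
  { name: "Content-Type", test: (v) => v.startsWith("application/json") },
  { name: "Location", test: (v) => /^\/users\/\d+$/.test(v) },
];
```

An empty result means every expected header is present and well-formed; any returned name identifies the header to investigate.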

Best Practices Summary

- Always validate response schemas, not just status codes — status codes are the minimum viable test

- Test every status code defined in your OpenAPI spec — if you document it, test it

- Include negative tests for every input field — use the spec's constraints as your test matrix. For microservices, also add contract testing to verify inter-service agreements

- Test authentication and authorization separately: authentication is about identity, authorization is about permissions

- Use data-driven parameterization — one test template, many data rows, multiply coverage without multiplying code

- Establish response time baselines and enforce them in CI — performance regressions are bugs. See how to set up these gates in our CI/CD testing pipeline guide

- Generate tests from the OpenAPI spec — manual test writing cannot keep pace with API evolution. See the top API testing tools in 2026 for platforms that support spec-driven generation

- Store test configuration in version control — test changes should be reviewed and auditable

- Run tests in the environment closest to production — sanitized dev environments hide real issues

- Monitor production with scheduled API tests — CI tests catch pre-deployment issues; scheduled tests catch environment drift

- Fix schema violations before merging — a response schema mismatch is a contract violation, not a minor warning

- Test idempotency for PUT and DELETE: repeating the same call should leave the server in the same state, even if the second response differs (for example, a repeated DELETE may return 404)
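The idempotency practice in the last item can be sketched as a state comparison. The in-memory `createStore` below is a hypothetical stand-in for a real API, and its status codes are assumptions; against a live service, the snapshot would be a GET on the affected resource.

```javascript
// Hypothetical in-memory resource store standing in for a real API.
function createStore() {
  const items = new Map();
  return {
    put(id, body) {
      const existed = items.has(id);
      items.set(id, { ...body });
      return { status: existed ? 200 : 201 };
    },
    delete(id) {
      return { status: items.delete(id) ? 204 : 404 };
    },
    snapshot() {
      return JSON.stringify([...items.entries()]);
    },
  };
}

// Apply the same operation twice and compare the state after each call.
function leavesSameState(store, operation) {
  operation();
  const afterFirst = store.snapshot();
  operation();
  return store.snapshot() === afterFirst;
}

const store = createStore();
const putIsIdempotent = leavesSameState(store, () => store.put("1", { name: "Alice" }));
const deleteIsIdempotent = leavesSameState(store, () => store.delete("1"));
console.log({ putIsIdempotent, deleteIsIdempotent });
```

The check compares state rather than responses, since the status code can legitimately change between the first and second call.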

REST API Testing Checklist

Coverage

- ✔ Every endpoint has at least one happy-path test

- ✔ Every endpoint has tests for each documented status code

- ✔ Every required field has a test for its absence

- ✔ Every string field has a boundary length test

- ✔ Every numeric field has boundary value tests

Schema Validation

- ✔ Response schema is validated against the OpenAPI spec for all tests

- ✔ Error response schema is validated (not just status codes)

- ✔ Response headers are asserted where relevant

- ✔ Field data types are asserted (not just presence)

Authentication and Authorization

- ✔ Unauthenticated requests to protected endpoints return 401

- ✔ Invalid tokens return 401

- ✔ Expired tokens return 401

- ✔ Insufficient permissions return 403

- ✔ Cross-user resource access is tested (IDOR)

Negative Testing

- ✔ Invalid request body types are tested

- ✔ Missing required fields are tested

- ✔ Invalid enum values are tested

- ✔ Injection payloads are tested in string inputs

- ✔ Boundary overflow values are tested

Performance

- ✔ Response time SLAs are defined for each endpoint

- ✔ Performance assertions are enforced in CI/CD

- ✔ P95 and P99 baselines are tracked over time

Automation

- ✔ All tests run automatically on every pull request

- ✔ Quality gates block merge on failure

- ✔ Test results are published to analytics dashboard

Frequently Asked Questions

What are the most important REST API testing best practices?

The most important REST API testing best practices are: validate response schemas against the OpenAPI spec, test all meaningful status codes (not just 200), include negative tests for invalid inputs and boundary values, test authentication and authorization for every protected endpoint, and automate everything in CI/CD with quality gates.

How should you structure REST API tests?

Structure REST API tests in layers: happy-path functional tests for each endpoint, negative tests for invalid inputs, authentication tests, schema validation tests, and performance baseline tests. Group tests by resource and use data-driven parameterization to cover multiple scenarios efficiently.

What is schema validation in API testing?

Schema validation verifies that the API response matches the structure defined in the OpenAPI specification — correct field names, data types, required fields, and formats. It catches bugs that status code checks miss, such as a field silently changing from integer to string or a required field disappearing from the response.

How do you test REST API authentication and authorization?

Test REST API auth by: sending requests with no credentials (expect 401), sending requests with invalid or expired tokens (expect 401), sending requests with valid credentials but insufficient permissions (expect 403), and verifying that authenticated requests return only the correct user-scoped data. Automate these for every protected endpoint.

Conclusion

Thorough REST API testing is not about writing more tests — it is about writing the right tests. The best practices in this guide focus your testing effort on the dimensions that matter: schema compliance that catches silent breaking changes, authentication and authorization testing that catches security vulnerabilities, negative testing that catches input validation gaps, and performance baselines that prevent degradation from compounding silently.

The good news is that 2026 tooling makes comprehensive REST API testing accessible without large QA teams or extensive test automation expertise. Shift-Left API's AI-powered test generation from OpenAPI specs produces hundreds of test cases covering all six testing dimensions — in minutes rather than weeks.

The result is a test suite that actually finds bugs: not a collection of happy-path smoke tests that only confirm the obvious, but a rigorous specification verification that you can trust to protect your API consumers in production.

Start with your OpenAPI spec. Generate your tests. Set your quality gates. Ship with confidence.
